Ranked the ninth-fastest supercomputer in the world on the November 2019 Top500 list, SuperMUC-NG at the Leibniz Supercomputing Centre (LRZ) is powering innovative, energy-efficient science in Europe and delivering ground-breaking visualization results. It is designed as a general-purpose system to support applications across all scientific domains, including life sciences, meteorology, geophysics, and climatology. Astrophysics has been a dominant user group on LRZ’s supercomputing systems. Most recently, LRZ has made its supercomputing resources available for COVID-19 related research.

Built by Lenovo, the SuperMUC-NG system is powered by Intel Xeon Scalable processors and uses an Intel Omni-Path fabric interconnect. Astrophysicist Hans-Thomas Janka (scientist at the Max Planck Institute for Astrophysics and lecturer at the Technical University of Munich) observes, “We are insatiable when it comes to data volumes and computing power.” He continues, “We get a lot of data from stars and explosions from the cosmos by measuring radiation, elementary particles or gravitational waves. But we can only really observe a few developments. Therefore, astrophysicists develop models for evaluation and calculate them with the help of mathematical and physical equations. This easily produces terabytes of data that we can only analyze or visualize with high performance computers.” [i]

Dubbed the “next generation” system, as indicated by the “-NG” designation, SuperMUC-NG will benefit many researchers as LRZ supplies its high performance computing resources to German national and international research teams. LRZ is a member of the Gauss Centre for Supercomputing (GCS), which combines the three national centers, namely High Performance Computing Center Stuttgart (HLRS), Jülich Supercomputing Centre (JSC), and Leibniz Supercomputing Centre (LRZ), into Germany’s foremost supercomputing institution.[ii]

In a recent development, researchers can use the SuperMUC-NG and the infrastructure of the LRZ for COVID-19 research including the search for vaccines and therapeutics, analyzing and forecasting spread scenarios for contingency planning, as well as exploring the virus and its behavior. [iii]

Dr. Janka explains the extraordinary scientific impact of foundational turbulence calculations performed on the earlier SuperMUC clusters, “Previously, astrophysicists could only perform much smaller calculations and thus only calculate two-dimensional models. For us, SuperMUC was a gift. Even three-dimensional simulations became possible. That was a huge breakthrough for us.” [iv] His statement is based on simulated results that consumed more than 570 million core hours on the earlier clusters.

SuperMUC-NG allows even more detailed models

SuperMUC-NG significantly expands researchers’ ability to advance the state of the art. For example, a team of researchers led by Australian National University (ANU) Professor Christoph Federrath used the system to run the largest magneto-hydrodynamic (MHD) simulation of astrophysical turbulence ever performed. [v] In particular, the inclusion of magnetic fields made the computation twice as challenging. The details of this foundational simulation work are discussed in the 2016 article “The world’s largest turbulence simulations”.

Using Software Defined Visualization (SDVis), the performance benefits and capabilities of the SuperMUC-NG hardware made it possible for a team of experts collaborating with Luigi Iapichino and Salvatore Cielo at LRZ to visualize simulation results using static 3D grid resolutions as high as 10048³. “This unprecedented resolution allows for a dynamic range of four orders of magnitude in length scale.” The resolution is extraordinary, and the team was able to report that these extreme-scale visualizations show the quantitative characterization of the gas Mach number as a function of spatial scale (the so-called structure function) to be in excellent agreement with theoretical models in both the supersonic and subsonic regimes. [vi]
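The link between the quoted grid resolution and the "four orders of magnitude" figure can be checked with a little arithmetic. The short Python sketch below is only an illustration: the function names are hypothetical, and it assumes that the dynamic range is simply the ratio of the box size to a single grid cell along one axis.

```python
import math

# Grid resolution per dimension quoted in the article (10048 cells per side).
CELLS_PER_SIDE = 10048


def total_cells(cells_per_side: int) -> int:
    """Number of cells in a cubic grid with the given cells per side."""
    return cells_per_side ** 3


def dynamic_range_decades(cells_per_side: int) -> float:
    """Orders of magnitude between the box size and one cell width.

    Assumption: the dynamic range is taken as box size / cell size,
    which equals the number of cells along one axis.
    """
    return math.log10(cells_per_side)


if __name__ == "__main__":
    print(f"total cells:   {total_cells(CELLS_PER_SIDE):.3e}")
    print(f"dynamic range: {dynamic_range_decades(CELLS_PER_SIDE):.2f} decades")
```

Under that assumption, a grid with about ten thousand cells per side spans roughly four decades in length scale and contains on the order of a trillion cells.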

This visualization work was selected as a finalist in the Supercomputing 2019 “Best Scientific Visualization” contest held in Denver, Colorado (Nov. 17-22). [vii] The LRZ YouTube video titled “Visualizing the world’s largest turbulence simulation” describes the work and illustrates the fine details and dynamics that can be seen in the LRZ simulations at this extreme resolution.

A collaborative effort

The team partnered with visualization experts at LRZ and Intel to create the scientific visualizations presented at SC19. [viii] Each snapshot required more than 23 terabytes of disk space, creating an enormous amount of data to visualize. Using the Intel OSPRay engine and VisIt, the team was able to take advantage of nearly all of SuperMUC-NG’s 6,336 nodes. [ix] The Intel OSPRay library is an open-source, scalable, and portable ray tracing engine that delivers interactive, high-fidelity visualizations using Intel Architecture CPUs and is part of the Intel oneAPI Rendering Toolkit. The VisIt application is an open-source interactive parallel visualization and graphical analysis tool for viewing scientific data.
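To put the snapshot size in perspective, the following back-of-envelope Python sketch divides one snapshot across the machine. It is a rough estimate under stated assumptions, not a description of how the team actually distributed the data: it assumes decimal terabytes, uses 23 TB as a lower bound ("more than 23 terabytes"), assumes the snapshot lives on the 10048³ grid quoted earlier, and assumes all 6,336 nodes share the data evenly. The function names are hypothetical.

```python
# Figures quoted in the article.
SNAPSHOT_BYTES = 23e12      # "more than 23 terabytes" (assumed decimal TB, lower bound)
NODES = 6336                # SuperMUC-NG node count
CELLS = 10048 ** 3          # cells in the highest-resolution grid


def bytes_per_node(snapshot_bytes: float, nodes: int) -> float:
    """Data each node would hold if one snapshot were split evenly."""
    return snapshot_bytes / nodes


def bytes_per_cell(snapshot_bytes: float, cells: int) -> float:
    """Average storage per grid cell implied by the snapshot size."""
    return snapshot_bytes / cells


if __name__ == "__main__":
    print(f"per node: {bytes_per_node(SNAPSHOT_BYTES, NODES) / 1e9:.2f} GB")
    print(f"per cell: {bytes_per_cell(SNAPSHOT_BYTES, CELLS):.1f} bytes")
```

With these assumptions, each node handles a few gigabytes per snapshot, and each grid cell accounts for roughly twenty bytes, which is consistent with storing several physical quantities per cell.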

The following images illustrate some of the minute details in the LRZ turbulence data. Figure 1 shows the density of the turbulent gas, plus some velocity streamlines. The data are explored in “slabs”, as the full box would contain too many small details.

Figure 2 reflects a first-time demonstration that the extreme resolution 1156³ model can resolve the transition between the two sonic scales. The team reports, “This in turns lets scientists infer the width distribution of filamentary structures in star-forming regions and ultimately the critical density for the formation of stars.”[x]
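The structure function mentioned earlier, which measures how strongly a quantity varies between points separated by a given distance, can be illustrated with a toy example. The Python sketch below computes a second-order structure function for a one-dimensional periodic signal. It is not the team's analysis code: the real measurement is made on a three-dimensional turbulent field, and the single sine mode used here is a purely illustrative stand-in.

```python
import math


def structure_function(values, lag):
    """Second-order structure function of a periodic 1D signal at one lag.

    Returns the mean of (values[i + lag] - values[i])**2 over all i,
    wrapping around at the end of the list (periodic boundary).
    """
    n = len(values)
    return sum((values[(i + lag) % n] - values[i]) ** 2 for i in range(n)) / n


def rms_increment(values, lag):
    """Typical (root-mean-square) difference between points `lag` apart."""
    return math.sqrt(structure_function(values, lag))


if __name__ == "__main__":
    n = 256
    # Toy field: a single sine mode (illustrative assumption, not simulation data).
    field = [math.sin(2 * math.pi * i / n) for i in range(n)]
    for lag in (1, 4, 16, 64, 128):
        print(lag, round(rms_increment(field, lag), 4))
```

In this toy case the typical difference grows with separation up to half the box size. For a turbulent gas, the separation at which the typical velocity difference equals the sound speed marks the transition between the supersonic and subsonic regimes.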

The value of these extreme-scale turbulence simulations is not limited to astrophysics: they can also help shed light on the general nature of turbulent flow problems, including those found in Earth-bound cases.

Capitalizing on a four-fold increase in performance

The LRZ team built and deployed a custom version of the VisIt software in order to fully utilize the high degree of parallelism (number of cores per node and large vector registers) provided by the SuperMUC-NG Intel Xeon Platinum 8174 processors. The team writes, “This version integrates the Intel OSPRay rendering engine, embodying the software defined visualization concept, optimized for CPU usage without the need of accelerators. OSPRay uses Intel Threading Building Blocks (Intel TBB) for parallel work sharing, and integrates additional features […] which are absent in the standard version of VisIt.”

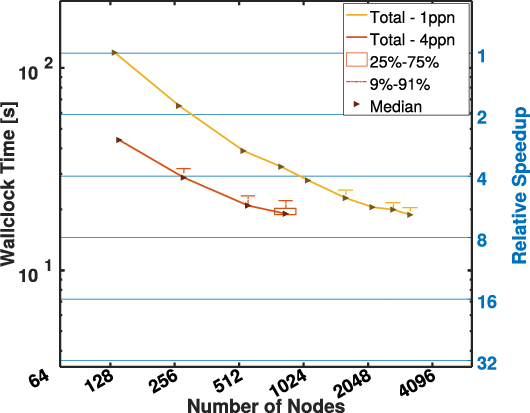

LRZ reports that the new Intel Xeon Scalable processors (SKX in the following graph) deliver much better scaling and performance than the SuperMUC Phase 2 Intel Xeon E5-2697 v3 processors (HSW).[xi] The improvement can be seen in the comparative scaling results that the LRZ visualization team reported when creating their visualizations. The introduction of OSPRay (yellow and red lines) brings a further 8x speedup with respect to older methods (blue line). (Note the log scale of the y-axis.)

Rob Farber is a global technology consultant and author with an extensive background in HPC, AI, and teaching.

[i] https://www.lrz.de/presse/ereignisse/2019-12-04_Interview-Prof-Janka-_EN/

[ii] https://doku.lrz.de/display/PUBLIC/Books+with+results+on+LRZ+HPC+Systems

[iii] https://www.lrz.de/wir/newsletter/neu/

[iv] https://www.lrz.de/presse/ereignisse/2019-12-04_Interview-Prof-Janka-_EN/

[v] https://sciencenode.org/feature/How%20does%20a%20star%20form.php

[vi] https://sc19.supercomputing.org/proceedings/sci_viz/sci_viz_files/svs103s2-file1.pdf

[vii] Visualizing the world’s largest turbulence simulation

[viii] https://sciencenode.org/feature/How%20does%20a%20star%20form.php

[ix] ibid

[x] ibid

[xi] https://www.hpcwire.com/2018/09/26/germany-celebrates-launch-of-two-fastest-supercomputers/