Dell Technologies extended its hardware virtualization strategy to AI workloads this week, introducing capabilities aimed at expanding access to GPU and HPC services via its EMC, VMware and recently acquired Bitfusion units.

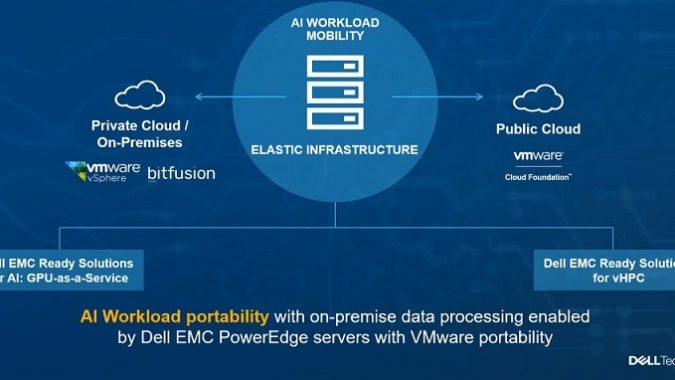

The result would be an “AI anywhere” capability in which machine learning and other workloads could scale on hybrid cloud infrastructure designed to support everything from individual workstations to advanced computing.

Dell, which completed a mega-merger of those and other units last fall, is extending its automated IT framework to help customers manage and run AI workloads on HPC platforms. VMware’s acquisition last year of Bitfusion, which specializes in virtualizing hardware accelerators such as GPUs, extended the parent company’s strategy of virtualizing architectures such as those used to train AI models.

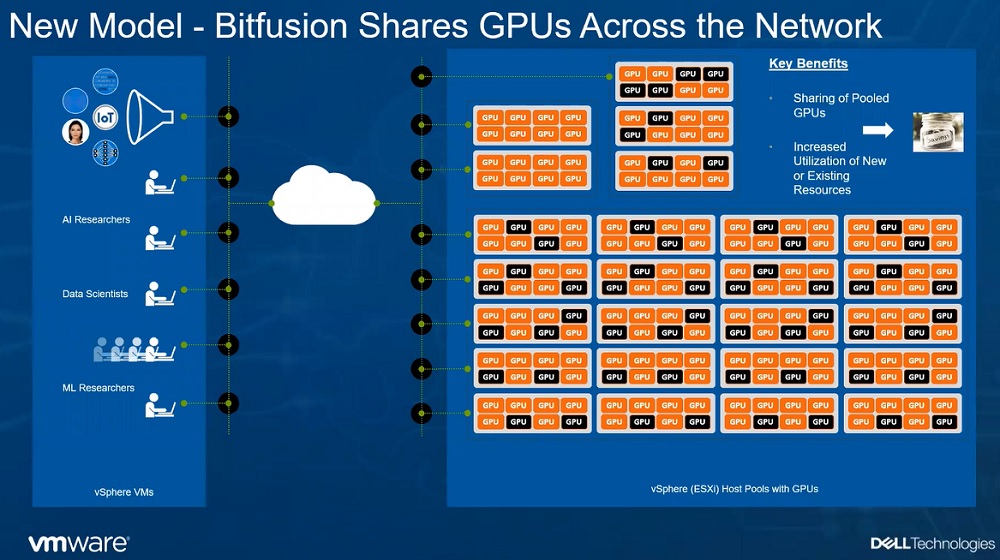

A new GPU service running on the latest version of VMware’s vSphere server virtualization software integrates Bitfusion’s platform to abstract the GPUs used to accelerate AI model development. The goal is to free up access to GPUs tied to individual servers by creating “virtual GPU pools,” a framework that also has the benefit of boosting resource utilization. (Dell estimates as little as 15 percent of GPU resources are utilized when deployed as a dedicated system.)
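To put the utilization estimate in perspective, here is a back-of-the-envelope sketch in Python. Only the 15 percent figure comes from Dell's estimate. The function name, the fleet size of 100 GPUs and the 60 percent target for a shared pool are illustrative assumptions, not vendor numbers.

```python
def pooled_gpus_needed(dedicated_gpus: int,
                       dedicated_util_pct: int,
                       target_util_pct: int) -> int:
    """Estimate how many pooled GPUs could carry the same work.

    Hypothetical helper for illustration only. Utilization is given in
    whole percent so the arithmetic stays in integers.
    """
    if not 0 < target_util_pct <= 100:
        raise ValueError("target utilization must be between 1 and 100 percent")
    # Work actually being done, measured in "GPU-percent".
    busy = dedicated_gpus * dedicated_util_pct
    # Ceiling division: round up to a whole number of GPUs.
    return -(-busy // target_util_pct)


# Assumed scenario: 100 dedicated GPUs at Dell's estimated 15 percent,
# consolidated into a pool that is kept 60 percent busy.
print(pooled_gpus_needed(100, 15, 60))
```

Under those assumptions, the same workload would fit on about a quarter of the hardware. Real pools would need headroom for bursts, so the result is a rough lower bound rather than a sizing recommendation.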

“We have this huge gap of access,” said Paul Turner, VMware’s vice president of cloud platforms. Hence, Dell has tuned its vSphere server virtualization along with AI infrastructure acquired via Bitfusion to support GPU accelerators used for AI applications like vision and image analysis at scale.

“We’ve built in support for these hardware accelerators directly into the native hypervisor,” Turner said.

The next step is creating a means of aggregating and sharing hardware accelerators. To that end, the vSphere-Bitfusion combination allows GPU pools to be created on-premises. The AI service runs on VMware’s Cloud Foundation stack, which integrates vSphere 7 support for Kubernetes cluster orchestration and container applications for AI workloads.

That architecture is also part of Dell’s hybrid cloud push for migrating and running virtualized workloads in the cloud with partners such as Google.

Meanwhile, the push into virtualized HPC architectures seeks to address the convergence of AI development and enterprise requirements for supercomputing capabilities.

“We see the HPC market continuing to grow, and it’s becoming more applicable” across different industries than it was a decade ago, said Ravi Pendekanti, Dell’s senior vice president for server and infrastructure systems. Dell estimates the server-side HPC sector will be a $19 billion market by 2023. “Which essentially means that it is a growing entity, and there is a lot of overlap with everything happening in the AI” market, Pendekanti added.

The company’s vHPC platform also runs on the vSphere-Bitfusion combination as a way to ease provisioning and lower the cost of running HPC and AI applications ranging from computer-aided engineering to computational chemistry.

The virtual HPC architecture can reduce the time to train AI models from months to days, the company said.

While AI servers are increasingly “oversubscribed” by developers, researchers who are bound to specific application servers are often unable to gain quick access to GPU resources. “We’re seeing collections of servers that are dedicated for a particular AI research” project, Turner explained.

Along with virtualizing GPUs, Bitfusion is intended to address those resource gaps by expanding AI developers’ access to acceleration hardware.

Bitfusion manages the pool of GPU accelerators virtualized on vSphere. Then, “we’re separately allocating that pool as needed to AI researchers,” creating what amounts to a pay-as-you-go service, Turner said. “We’re networking GPU servers” similar to the way network-attached storage allows networking of storage devices, he added.
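The allocation model Turner describes can be pictured with a minimal Python sketch. It is a conceptual toy, not Bitfusion's or vSphere's actual API: the `GpuPool` class, its method names and the user names are all hypothetical, and a real system would also handle networking, scheduling and fractional GPUs.

```python
class GpuPool:
    """Toy model of a shared GPU pool (hypothetical, for illustration)."""

    def __init__(self, gpu_ids):
        self._free = list(gpu_ids)   # GPUs nobody is using right now
        self._leased = {}            # user -> list of GPU ids on loan

    def allocate(self, user, count):
        """Lend `count` GPUs to `user`, or fail if the pool is short."""
        if count > len(self._free):
            raise RuntimeError(f"only {len(self._free)} GPUs free")
        granted = [self._free.pop() for _ in range(count)]
        self._leased.setdefault(user, []).extend(granted)
        return granted

    def release(self, user):
        """Return all of a user's GPUs to the pool."""
        self._free.extend(self._leased.pop(user, []))

    @property
    def utilization(self):
        """Fraction of the pool currently on loan."""
        leased = sum(len(ids) for ids in self._leased.values())
        total = leased + len(self._free)
        return leased / total if total else 0.0


pool = GpuPool(range(4))
pool.allocate("alice", 3)    # alice borrows three of the four GPUs
print(pool.utilization)      # 0.75
pool.release("alice")        # GPUs go back for the next researcher
print(pool.utilization)      # 0.0
```

The point of the sketch is that GPUs are held only for as long as a researcher needs them, which is what lets a shared pool stay busier than servers dedicated to a single project.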

The payoffs are increased access to computing resources and greater utilization of expensive accelerators.