At the European Organization for Nuclear Research (CERN), over 200 physicists across dozens of institutions are collaborating on a project called COMPASS. This experiment (short for Common Muon and Proton Apparatus for Structure and Spectroscopy) uses CERN’s Super Proton Synchrotron to tear apart protons with a particle beam, allowing researchers to see the subatomic quarks and gluons that make up these building blocks of the universe. But particle beams aren’t the only futuretech in play – the experiments are also enabled by a heavy dose of supercomputing power.

“The spatial pattern and the velocities of the fragmenting particles allow us to create a dynamic picture of the proton and other objects composed of quarks,” said Caroline Riedl, a research assistant professor of nuclear physics at the University of Illinois at Urbana-Champaign (UIUC), in an interview with TACC’s Jorge Salazar. When the protons are blasted apart, 240 large tracking devices capture and digitize the results, which are then interpreted using algorithms.

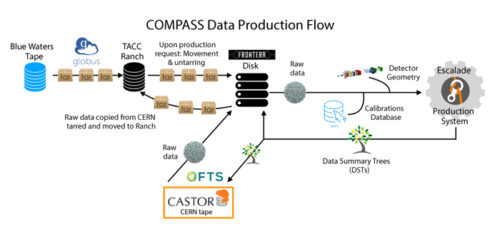

Of course, this requires a massive amount of computing power. Previously, the research team used the Blue Waters system (which delivers around 13 peak petaflops) at the National Center for Supercomputing Applications (NCSA) to analyze the data. Using Blue Waters, the team examined the Sivers Transverse-Momentum Dependent distribution (or “Sivers TMD” distribution), which is indicative of the orbital motions of the quarks inside protons. For the first time, they were able to observe an expected sign change in the Sivers TMD – a major performance milestone for government-funded nuclear physics research.

Now, the team is returning to the same data – which spans 2015 to 2018 – with shinier hardware: the Frontera system at the Texas Advanced Computing Center (TACC). Supplied by Dell EMC, Frontera boasts 8,008 compute nodes, each equipped with Intel Xeon Platinum 8280 CPUs and 192 GB of memory, linked by a Mellanox InfiniBand HDR100 interconnect. It also has two GPU subsystems with four Nvidia GPUs per node (Quadro RTX 5000s in one subsystem, V100s in the other). Frontera delivers 23.5 Linpack petaflops of performance and ranks fifth on the most recent Top500 list of the world’s most powerful publicly ranked supercomputers.

“The procedure of finding particle tracks emerging from the interaction point and traversing hundreds of COMPASS detector layers is CPU-intensive,” Riedl said. Around three petabytes of data from the 240 tracking devices was moved to TACC for the new data analysis. “The challenge consists of parallelizing the submissions of the tracking code on the computing grid while respecting the system in terms of I/O and numbers of requested computing nodes. A typical production campaign requires about 50,000, ideally parallel, submissions of the tracking code.”
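To make the scale of that scheduling problem concrete, here is a minimal Python sketch of one common way to handle many independent tasks: group them into batches, then cap how many batches run at once. It is an illustration only, not the COMPASS team's actual workflow. The names `plan_batches`, `run_tracking`, and `run_campaign`, the batch size of 56, and the concurrency limit of 8 are all hypothetical; only the figure of about 50,000 submissions comes from Riedl's description.

```python
from concurrent.futures import ThreadPoolExecutor


def plan_batches(n_tasks, tasks_per_job):
    """Split task indices 0..n_tasks-1 into consecutive batches."""
    return [
        range(start, min(start + tasks_per_job, n_tasks))
        for start in range(0, n_tasks, tasks_per_job)
    ]


def run_tracking(task_id):
    """Hypothetical stand-in for one submission of the tracking code."""
    # A real task would read raw detector data and write reconstructed tracks.
    return task_id


def run_campaign(batches, max_concurrent_jobs):
    """Run all batches with at most max_concurrent_jobs in flight at once."""
    def run_batch(batch):
        return [run_tracking(task_id) for task_id in batch]

    # The pool size is the throttle: it bounds simultaneous load on the system.
    with ThreadPoolExecutor(max_workers=max_concurrent_jobs) as pool:
        results = list(pool.map(run_batch, batches))
    return [task_id for batch in results for task_id in batch]


# Assumed values for illustration: 56 tasks per batch, 8 batches at a time.
batches = plan_batches(n_tasks=50_000, tasks_per_job=56)
done = run_campaign(batches, max_concurrent_jobs=8)
print(len(batches), len(done))
```

On a real cluster the throttling would normally be handled by the batch scheduler rather than a local thread pool, but the trade-off is the same: larger batches mean fewer submissions, while a lower concurrency cap eases pressure on shared I/O at the cost of a longer campaign.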

The team is also looking ahead to new data that will emerge from the upcoming COMPASS++/AMBER experiment at CERN, which will involve a wider variety of measurements thanks to new detection hardware. These measurements will help Riedl better examine the motions of quarks within protons, among other fundamental mysteries. The researchers are using Frontera to help design these new detectors using simulated data.

“We run mass productions of COMPASS data on Frontera, determine detector efficiencies, and simulate COMPASS and COMPASS++/AMBER data. The simulated data play a central role in understanding subtle detector effects and complement the experimental data,” Riedl said. “Frontera will allow us to analyze the COMPASS data in a timely manner and at the precision required to obtain an absolute normalization of the data with the smallest possible uncertainties.”

“Only Frontera will allow for the detailed simulations necessary to optimize instrumentation upgrades for the future COMPASS++/AMBER experiment,” she added.

Header image: the Super Proton Synchrotron. Image courtesy of CERN.
