Last week the President's Council of Advisors on Science and Technology (PCAST) met via webinar to review policy recommendations around three sub-committee reports: 1) Industries of the Future (IotF), chaired by Dario Gil (director of research, IBM); 2) Meeting STEM Education and Workforce Needs, chaired by Catherine Bessant (CTO, Bank of America); and 3) New Models of Engagement for Federal/National Laboratories in the Multi-Sector R&D Enterprise, chaired by Dr. A.N. Sreeram (SVP, CTO, Dow Corp.).

Yesterday, the full report (Recommendations For Strengthening American Leadership In Industries Of The Future) was issued and it is fascinating and wide-ranging. To give you a sense of the scope, here are three highlights taken from the executive summary of the full report:

- With regard to the first pillar, Federal agencies need to take full advantage of their administrative authorities to partner with industry and academia in new and innovative ways, particularly to ensure the effective transition and translation of early-stage research outcomes into applications at scale. In the area of AI, this includes establishing a joint AI Fellow-in-Residence program, AI Research Institutes in all 50 States, National AI Testbeds, partnerships for curating and sharing large datasets, and joint international programs for attracting and retaining the best global talent, and research and development (R&D) and training for trustworthy AI.

- The second pillar of this report homes in on a new model for leveraging the strength of America’s National Laboratories to enhance and accelerate substantial front-to-back progress in IotF. The cornerstone recommendation involves establishing a new type of world-class, multi-sector R&D institute that catalyzes innovation at all stages of R&D—from discovery research to development, deployment, and commercialization of new technologies. These highly prestigious “IotF Institutes” would support portfolios of collaborative projects at the intersection of two or more IotF pillars, and be structured to minimize burdensome administrative overhead so as to maximize rapid progress. They would utilize innovative intellectual property terms that incentivize participation by industry, academia, and non-profits as a means for driving commercialization of IotF technologies at scale.

- Achieving success with the first two pillars of this report rests upon the Nation’s ability to strengthen, grow, and diversify its science, technology, engineering, and mathematics (STEM) workforce at all levels—from skilled technical workers to researchers with advanced degrees. First and foremost, America must build the Workforce of the Future by creating STEM training and education opportunities for individuals from all backgrounds, STEM and non-STEM, including underrepresented and underserved populations. Employers, academic institutions, professional societies, and other partners should develop programs to provide non-STEM workers with professional competencies that will grant them a role in the STEM Workforce of the Future. Public- and private-sector employers should be recruited to pledge and realize support for hiring newly skilled STEM workers, especially those from non-traditional backgrounds, into STEM positions.

The devil will be in the details. Summer, of course, is the time when diverse public and private groups working on policy – in this case technology policy – formulate their ideas in more concrete forms for consideration by policy-makers. The just-released PCAST report is one example. Recently proposed legislation to remake NSF is another (see the HPCwire article, $100B Plan Submitted for Massive Remake and Expansion of NSF). It’s difficult to gauge the impact of competing proposals at this stage.

Loosely, PCAST provides advice to the President on science and technology issues. It has existed in various forms with varying names stretching back to Franklin D. Roosevelt. PCAST in its current form dates to President George H. W. Bush, who renamed the group and switched it from reporting to the White House science advisor to reporting directly to the President. PCAST lives in the Office of Science and Technology Policy (OSTP), and Kelvin Droegemeier, the director of OSTP, also chairs PCAST. Droegemeier presided at the most recent meeting.

Current PCAST efforts emphasize expanding industry participation in key technologies including closer collaboration with government and academia. Formally, the PCAST report is the work of three sub-committees: American Global Leadership in Industries of the Future (Gil); Meeting National Needs for STEM Education and a Diverse, Multi-Sector Workforce (Bessant); and New Models of Engagement for Federal and National Laboratories in the Multi-Sector R&D Enterprise (Sreeram).

Perhaps the most relevant near-term proposal for the HPC community is the report led by Gil, whose webinar presentation focused largely on AI and quantum science. Here’s a snippet from Gil’s prepared remarks at the webinar.

“It is time to scale AI. Throughout all our conversations with leaders across federal agencies, universities, nonprofits and industry, it is clear that AI is at the top of the list of technologies that can make the biggest difference to the health, prosperity and security of our nation… That is why we’re recommending a 10x R&D investment growth over 10 years in AI research and applied institutes in all 50 states [and] an unwavering commitment to invest in developing and attracting the best talent into this field and industry,” said Gil.

The report notes “industry will invest more than $2 billion between 2020–2025 to design, build, and deploy high-availability quantum computing systems, execute a roadmap that will at least double system performance every year, and build cloud-accessible quantum computational centers and associated services” and recommends “a Federal investment of $100 million/year for the next 5 years to create quantum computing user facilities leveraging the output of the multi-billion-dollar investments that industry is undertaking designing and building quantum computing systems.”

Broadly, Gil noted, “The mission of our subcommittee is to collaborate on an action plan for ensuring American leadership in the industries of the future, which include quantum information sciences, artificial intelligence, advanced wireless communications, advanced manufacturing and biotechnology.

“We were asked and tasked with identifying and making recommendations on strategic steps to help bridge critical gaps and augment and strengthen existing federal actions [and] to identify new opportunities and some strategic actions that can be taken to accelerate the industries, and recommend strategies for enhancing cross-sector and international cooperation. Our recommendations today are focused on AI and quantum computing, two rapidly advancing technologies, and also on the opportunities that are present in their convergence.”

Much of the material is familiar to the HPC community. Given the added funding already promised for AI and quantum, getting more may prove to be a tough sell in the current climate. However, given that PCAST’s charge presumably stems directly from the President’s office, it will be interesting to watch how its recommendations fare.

Gil set the stage: “Over the last decade, powered by exponential growth in computing power and ever-increasing availability of data, technological breakthroughs in AI are enabling intelligent systems to take on increasingly sophisticated tasks and augmenting human capabilities in a new and profound way. It is undoubtedly the case that AI has emerged as one of the most important technologies of our era. And in recent months, during the COVID-19 crisis, AI has demonstrated critical capabilities as well as important potential for the future.”

“We recommend sustained investment growth of a billion dollars per year in non-defense research funding through 2030 as described in the paper,” he said. Noting that the 2020 budget request for AI within NSF is $487 million, he said, “[B]ased on the number of highly rated proposals that currently go unfunded and are of equal merit to those which are funded, PCAST anticipates that growing the investment $1 billion a year would allow for making at least 1000 additional awards to individual investigators without any loss of quality.”

Here’s a somewhat extended excerpt from Gil’s prepared remarks with more detail on AI recommendations:

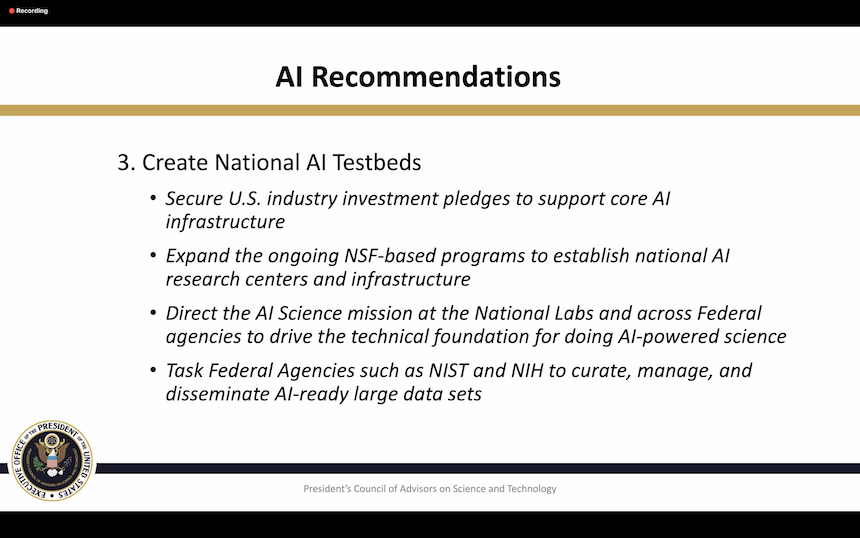

“It is important to create a virtuous cycle aimed at the innovation infrastructure itself to continuously accelerate R&D in AI. The COVID-19 High Performance Computing consortium and the coordinating team data sets are examples of the enormous value of creating platforms for sharing data and computational resources for accelerating efforts in science and technology related to the crisis. The creation of a national research cloud currently being considered by Congress is another good example. We therefore recommend the creation of national AI testbeds, by first securing U.S. industry investment pledges [to] support AI infrastructure. This would include grants to provide compute infrastructure for research and education related to AI, including things like free cloud credits and high performance computing cluster donations to universities, open source AI frameworks, libraries and tools to democratize access to the latest advances in AI.

“[We] recommend expanding the ongoing NSF-based programs to establish national AI research centers and infrastructure with sustained long-term funding to enable cross-cutting research and technology transitions. These centers would enable research on core and applied AI in addition to the AI research institutes that we discussed a minute ago. Also, applied AI Institutes [could] focus on things like agriculture or AI for manufacturing, as well as cross-cutting AI topics such as AI for social good [such as] future of work and harnessing big data. It is quite clear that AI is going to touch every area of science. We therefore recommend directing the AI science mission at the National Labs and across federal agencies to drive the technical foundation for performing scientific research with AI-powered methods.

“And because data is the fuel for AI, we recommend tasking federal agencies such as NIST and NIH to curate, manage and disseminate large data sets across critical areas for AI applications, working across U.S. agencies, industry partners, and other stakeholders. We cannot emphasize enough how critical it is to get data AI-ready. Let’s remember that 80 percent of the effort of any AI project is typically spent on the data curation and preparation.”

The report also proposes using an AI-driven workflow to improve research and discovery productivity, as shown in the figure below.

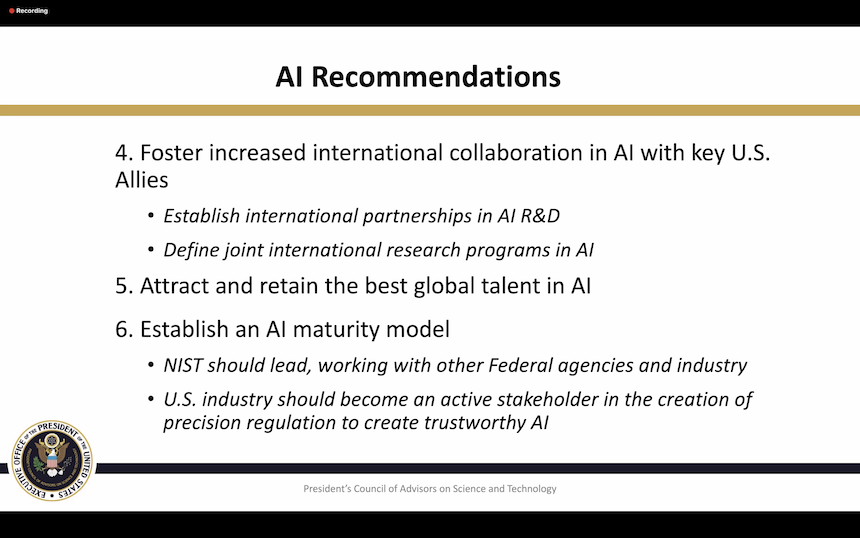

Here are a few more slides with some of PCAST’s proposed AI activities taken from the webinar. It’s best to consult the full report.

HPCwire will provide further coverage of the report later.

Link to the full report, Recommendations For Strengthening American Leadership In Industries Of The Future, https://science.osti.gov/-/media/_/pdf/about/pcast/202006/PCAST_June_2020_Report.pdf?la=en&hash=019A4F17C79FDEE5005C51D3D6CAC81FB31E3ABC

Link to the webinar agenda and all three slide presentations from the meeting: https://science.osti.gov/About/PCAST/Meetings/202006