Recurrent neural networks (RNNs) have shown phenomenal success in several sequence learning tasks such as machine translation, language processing, image captioning, scene labeling, action recognition, time-series forecasting, and music generation. No doubt, CNNs are the most popular neural networks, but RNNs are no less important. This is evident from the fact that, in 2016 and 2020, RNNs accounted for 29% and 21%, respectively, of the TPU (tensor processing unit) workloads run in Google datacenters. RNN training requires a large number of epochs for convergence, which increases the training time and energy overheads. During RNN inference, the dataflow graph of the RNN changes based on the input. These factors underscore the need to accelerate RNNs.

RNN acceleration is no easy joke

RNN computations involve both intra-timestep and inter-timestep dependencies. These dependencies lead to poor hardware utilization and low performance. For example, the dominant computation pattern of convolution layers is matrix-matrix multiplication, whereas that of RNNs is matrix-vector multiplication. This also means that RNNs have less data reuse. Further, in their ISCA 2017 paper, Google researchers compared the performance of MLP (multi-layer perceptron), CNN, and LSTM (long short-term memory) models on Google’s TPU version 1, which provides a peak performance of 92 TOPS. They observed that while CNN0 achieved 86 TOPS, LSTM0 and LSTM1 achieved only 3.7 and 2.8 TOPS, respectively. Evidently, the hardware acceleration of RNNs is more challenging than that of CNNs.
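To make the data-reuse argument concrete, here is a rough back-of-the-envelope sketch that compares the operations performed per byte moved for a matrix-vector and a matrix-matrix product. The 1024-element dimensions, the one-byte operands, and the idealized assumption that every operand crosses the memory interface exactly once are illustrative choices, not figures from the TPU paper. The helper names `arithmetic_intensity` and `utilization` are hypothetical. Only the TOPS numbers passed to `utilization` come from the text above.

```python
def arithmetic_intensity(m, k, n, bytes_per_element=1):
    """Operations per byte for an (m x k) @ (k x n) product.

    Idealized model (an assumption): both inputs and the output are
    moved to or from memory exactly once.
    """
    ops = 2 * m * k * n  # one multiply and one add per term
    bytes_moved = (m * k + k * n + m * n) * bytes_per_element
    return ops / bytes_moved


def utilization(achieved_tops, peak_tops=92.0):
    """Fraction of peak throughput that was actually achieved."""
    return achieved_tops / peak_tops


# Matrix-vector (RNN-like): each weight is used once per load.
gemv = arithmetic_intensity(1024, 1024, 1)
# Matrix-matrix (convolution-like): each weight is reused across 1024 columns.
gemm = arithmetic_intensity(1024, 1024, 1024)

print(f"matrix-vector: {gemv:.1f} ops/byte")  # about 2
print(f"matrix-matrix: {gemm:.1f} ops/byte")  # about 683

for name, tops in [("CNN0", 86.0), ("LSTM0", 3.7), ("LSTM1", 2.8)]:
    print(f"{name}: {utilization(tops):.0%} of peak")
```

Under these assumptions, the matrix-vector product performs roughly two operations per byte, so it is limited by memory bandwidth long before the compute units are saturated. This is consistent with the low utilization reported for the LSTM models.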

GPU, FPGA and ASIC: Using all arrows in the quiver

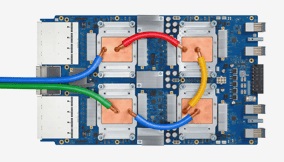

Since each of the three computing platforms has its own strengths, researchers have optimized or designed accelerators using all three. The recent paper I’ve co-authored with Sumanth Umesh (A Survey on Hardware Accelerators and Optimization Techniques for RNNs) reviews state-of-the-art accelerators from both academia and industry. Some of the commercial designs we reviewed include Brainwave (Microsoft), Intelligence Processing Unit (IPU, from Graphcore), TPU v1/v2/v3 (Google), and Volta GPU (NVIDIA). The core computation engine of these accelerators performs vector-scalar, vector-vector, matrix-vector, or matrix-matrix multiplication. Of these, we found matrix-vector multiplication engines to be the most widely used, which is expected since this is the key computation pattern of RNNs. Note that the computation engine used in Brainwave performs matrix-vector multiplication, whereas that in TPU, IPU, and the tensor cores of Volta GPU performs matrix-matrix multiplication.

Our RNN is frittered away by details. Simplify! Simplify!

The fact that RNNs are used for error-tolerant applications provides an opportunity to trade off their accuracy to gain efficiency. For example, hardware-aware pruning, low-precision arithmetic, and even variable-precision arithmetic have been shown to provide substantial gains in the throughput and energy efficiency of RNNs. An exemplar hardware-aware pruning technique divides each row of the weight matrix into sub-rows and performs pruning such that the number of non-zero weights is equal in all the sub-rows. This approach balances the workload of each row, which reduces memory contention and allows exploiting parallelism. Further, unlike coarse-grain pruning techniques, this approach does not sacrifice accuracy. The variable-precision technique works by using low precision when the LSTM cell state changes slowly and high precision when the LSTM cell state changes rapidly over time. It is noteworthy that low-precision formats such as BF16 are now supported on these accelerators and even on the latest CPUs.
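The balanced pruning idea can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function name `balanced_prune` is hypothetical, and keeping the largest-magnitude weights in each sub-row is one plausible selection rule, since the text above does not say which criterion the original technique uses.

```python
import numpy as np


def balanced_prune(weights, sub_row_len, keep_per_sub_row):
    """Zero out weights so every sub-row keeps the same number of entries.

    Assumption: the entries kept are those with the largest magnitude.
    """
    rows, cols = weights.shape
    if cols % sub_row_len != 0:
        raise ValueError("row length must be a multiple of sub_row_len")
    if not 1 <= keep_per_sub_row <= sub_row_len:
        raise ValueError("keep_per_sub_row must be in [1, sub_row_len]")

    # View each row as a sequence of equally sized sub-rows.
    blocks = weights.reshape(rows, cols // sub_row_len, sub_row_len)

    # Positions of the largest-magnitude entries within each sub-row.
    order = np.argsort(np.abs(blocks), axis=-1)
    keep_idx = order[..., -keep_per_sub_row:]

    mask = np.zeros(blocks.shape, dtype=bool)
    np.put_along_axis(mask, keep_idx, True, axis=-1)

    return np.where(mask, blocks, 0.0).reshape(rows, cols)


rng = np.random.default_rng(0)
dense = rng.standard_normal((4, 8))
sparse = balanced_prune(dense, sub_row_len=4, keep_per_sub_row=2)

# Every sub-row of four weights now holds exactly two non-zeros.
print(np.count_nonzero(sparse.reshape(4, 2, 4), axis=-1))
```

Because every sub-row ends up with the same number of non-zero weights, processing elements assigned to different sub-rows receive equal amounts of work, which is the load-balancing property described above.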

Further, it is well-known that a sentence can be summarized without processing all the words of the sentence, and the caption of a video may be generated without seeing all the video frames. Several techniques use these observations to skip unimportant or nearly-similar frames or words to accelerate RNN processing. Some researchers leverage the semantic correlation between the words of a sentence. For example, in the sentence completion task, if the present word is “He,” the following word is more likely to be “is” than “teach,” “are,” or “your.” Based on this correlation, the processing of different words can be speculatively parallelized.
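As a minimal sketch of input skipping, the loop below runs a plain tanh RNN cell over a sequence of frames and leaves the hidden state untouched whenever a frame is almost identical to the last frame that was actually processed. Everything specific here is an assumption made for illustration: the function name `run_rnn_with_skipping`, the Euclidean distance test, the threshold value, and the use of a vanilla RNN cell instead of an LSTM. Published techniques typically use learned or more elaborate skipping criteria.

```python
import numpy as np


def run_rnn_with_skipping(frames, w_x, w_h, threshold):
    """Run a vanilla RNN, skipping frames close to the last processed one.

    Returns the final hidden state and the number of frames processed.
    """
    hidden = np.zeros(w_h.shape[0])
    last_processed = None
    processed = 0

    for frame in frames:
        if (last_processed is not None
                and np.linalg.norm(frame - last_processed) < threshold):
            continue  # nearly identical input: reuse the current hidden state

        hidden = np.tanh(w_x @ frame + w_h @ hidden)
        last_processed = frame
        processed += 1

    return hidden, processed


rng = np.random.default_rng(0)
w_x = rng.standard_normal((4, 3))
w_h = rng.standard_normal((4, 4))

still = np.ones(3)
frames = [still, still + 1e-3, still + 2e-3, -still]  # three similar frames, one new

_, processed = run_rnn_with_skipping(frames, w_x, w_h, threshold=0.1)
print(f"processed {processed} of {len(frames)} frames")
```

With these inputs, only the first frame and the final, clearly different frame trigger a state update, so half of the recurrent computations are avoided at the cost of ignoring small changes in the input.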

CNN or RNN or both?

Many real-world applications such as “long-term recurrent convolutional networks” (LRCN) used for visual recognition and description, and SHOWTELL used for image captioning, use both CNNs and RNNs. Whether you are a data-center administrator or an end-user, you will most likely deal with CNNs and RNNs and even other deep-learning models. This fact is also evident from the development of benchmark suites such as MLPerf and Fathom, which include a diverse range of machine-learning models. As such, optimizing for either CNN or RNN alone is likely to provide limited gains. In fact, next-generation architectures must be examined based on a wide range of models.

The path ahead

While a CNN has a limited receptive field, an RNN theoretically offers an infinite receptive field. However, the limitation of an RNN is its sequentiality, which means that it processes an input step by step. Since the “attention mechanism” offers constant path length, it overcomes the limitations of both RNNs and CNNs. For this reason, the “attention mechanism” has drawn a lot of attention in recent years. For example, Google’s “neural machine translation” technique places an attention network between the encoding and decoding LSTM layers. After all, deep learning is a fast-moving field, and as deep-learning algorithms evolve, their system architectures must also change. There is both a need and scope for achieving greater synergy between the efforts of researchers in the areas of deep learning, computer architecture, and high-performance computing.

About the Author

Dr. Sparsh Mittal is currently working as an assistant professor at IIT Roorkee, India. He received the B.Tech. degree from IIT Roorkee, India, and the Ph.D. degree from Iowa State University (ISU), USA. He has worked as a Post-Doctoral Research Associate at Oak Ridge National Lab (ORNL), USA, and as an assistant professor at CSE, IIT Hyderabad. He was the graduating topper of his batch in B.Tech., and his B.Tech. project received the best project award. He has received a fellowship from ISU and a performance award from ORNL. He has published more than 90 papers at top venues. He is an associate editor of Elsevier’s Journal of Systems Architecture. He has given invited talks at the ISC Conference in Germany, New York University, University of Michigan, and Xilinx (Hyderabad).
