Quantum computing made its debut at Hot Chips this year with a lengthy ‘tutorial’ session on Sunday including talks from Google, IBM, Intel, Microsoft, and Facebook. What that portends for quantum computing’s closeness to practical application is still hazy – certainly there are no hot quantum chips to buy yet – but it’s clear there’s tangible progress and growing momentum behind quantum computing. There are now POC devices and programs for superconducting, ion trap, silicon spin, cold atom, and photonic qubits. The presenting companies and many more players have funding, real projects, and government-backed enthusiasm to make quantum computing a reality.

Quantum computing ideas and development seem to be percolating everywhere.

Much of what was presented is familiar to regular observers of the QC development community, where work on semiconductor-based superconducting qubits (IBM, Google, D-Wave) and, more recently, ion trap technologies (IonQ and Honeywell) has received the bulk of attention. Session moderator Misha Smelyanskiy of Facebook (director of AI) and John Martinis (UCSB and formerly Google) both did solid jobs broadly covering quantum computing and progress to date.

One of the more interesting talks was by Jim Clarke on Intel’s silicon spin qubit technology, which is based on silicon quantum dots. On balance, Intel has been less active than many in joining the quantum clamor. Many challenges face quantum computing, not least the ability to scale up (number of qubits) from today’s small systems. One hurdle is the requirement for most qubit technologies to operate in near-zero (Kelvin) environments. Just squeezing the required number of control cables into dilution refrigerators is daunting and limits system size.

Intel believes it has a better way. Tiny CMOS-based silicon quantum dots and cold-hardened control chips, argues Intel, present a much more efficient path for scaling and ultimately practical quantum computing. Not surprisingly, the manufacturing expertise to tackle those challenges is an Intel strong suit. Clarke’s pitch boils down to three elements: 1) proven scalable manufacturing, 2) promising early spin qubit performance (coherence times), and 3) newly developed cryo-electronic control chips (not unwieldy coax cables).

Here’s Clarke’s neat recap of the state of affairs.

“Today, the system sizes that we’re operating are between a few qubits and perhaps up to 50 qubits. This is really at the proof of concept stage of this technology where the computational efficiency of these quantum computers perhaps [exceeds], for some contrived applications, [that] of a supercomputer. More importantly, it becomes a testbed for an overall quantum system. Only when you begin to get to 1000 qubits – let’s say 20 to 50 times more than we have today – would you be able to do something perhaps more useful, [with] limited error correction, [on] chemistry and materials, design, and optimization. It’s probably going to take millions of qubits to do something on the commercial scale, something that would change your life or mine, with fault tolerance operation [for] cryptography and machine learning. We have a ways to go,” said Clarke.

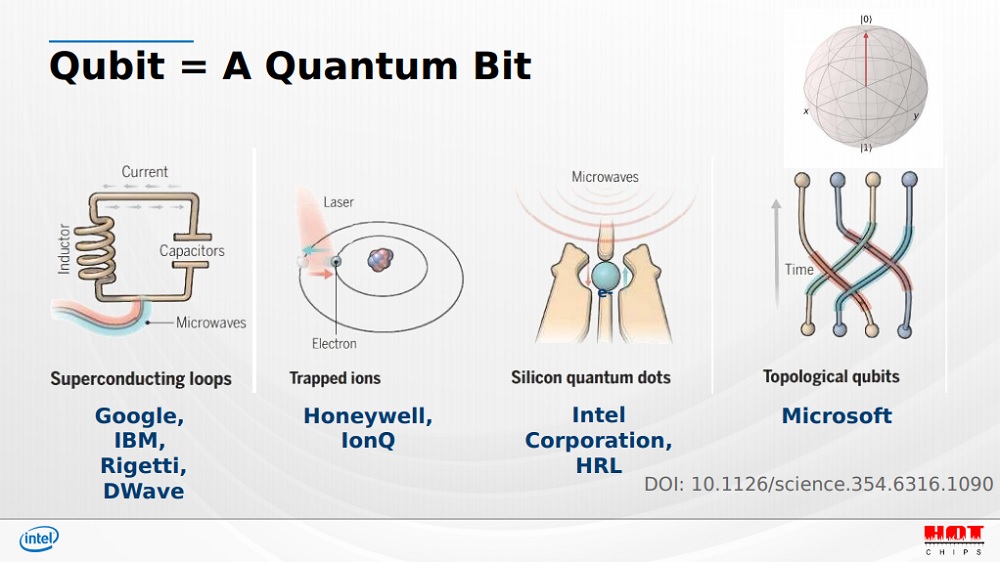

“There are many different qubits out there. The types of systems that are available are superconducting loops. This is basically a nonlinear LC oscillator circuit that creates two artificial levels that are accessible by microwave frequencies and become the zero or one state of your system. These are the technologies favored by companies such as Google, IBM, Rigetti and D-Wave. You have the trapped ion technology where you have a laser controlling the excited state of a metal ion. This is very similar to an atomic clock, and this is a technology favored by Honeywell and IonQ.”

There are also topological qubits (Microsoft) where the topological state of the material should prevent errors from occurring as they do in other systems, but as Clarke pointed out, “To date, the topological qubits are a bit more theoretical than reality.”

So what is the silicon quantum dot technology favored by Intel? It’s not as if Intel hasn’t explored superconducting qubits. You may recall that in 2018 then-Intel CEO Brian Krzanich talked about a 49-qubit superconducting quantum test chip, Tangle Lake, in his CES keynote. Tangle Lake measured 3 inches on a side and was developed with Intel partner QuTech.

At the time, Mike Mayberry, corporate vice president and managing director of Intel Labs, said, “In the quest to deliver a commercially viable quantum computing system, it’s anyone’s game. We expect it will be five to seven years before the industry gets to tackling engineering-scale problems, and it will likely require 1 million or more qubits to achieve commercial relevance.”

Mayberry’s comments still sound right.

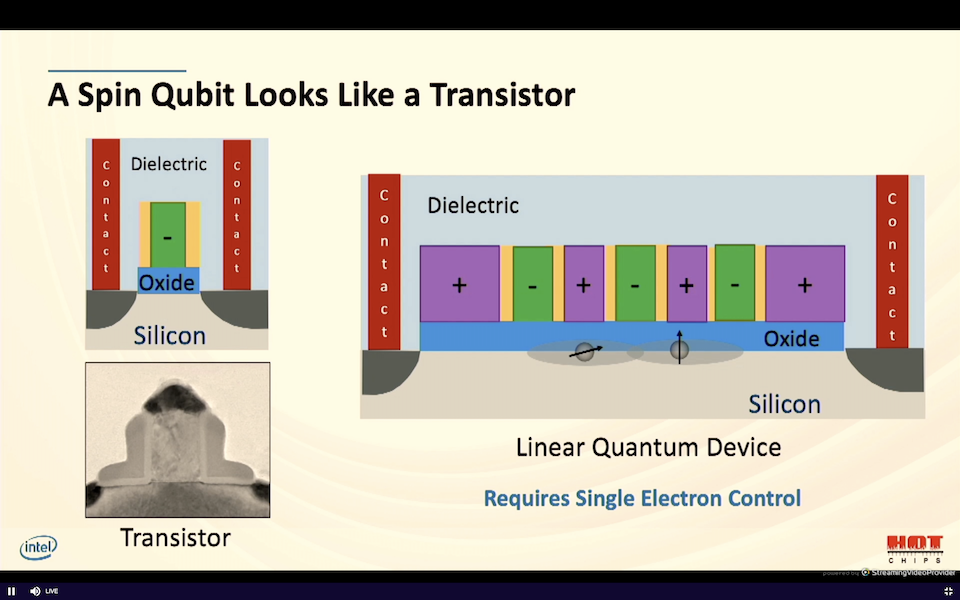

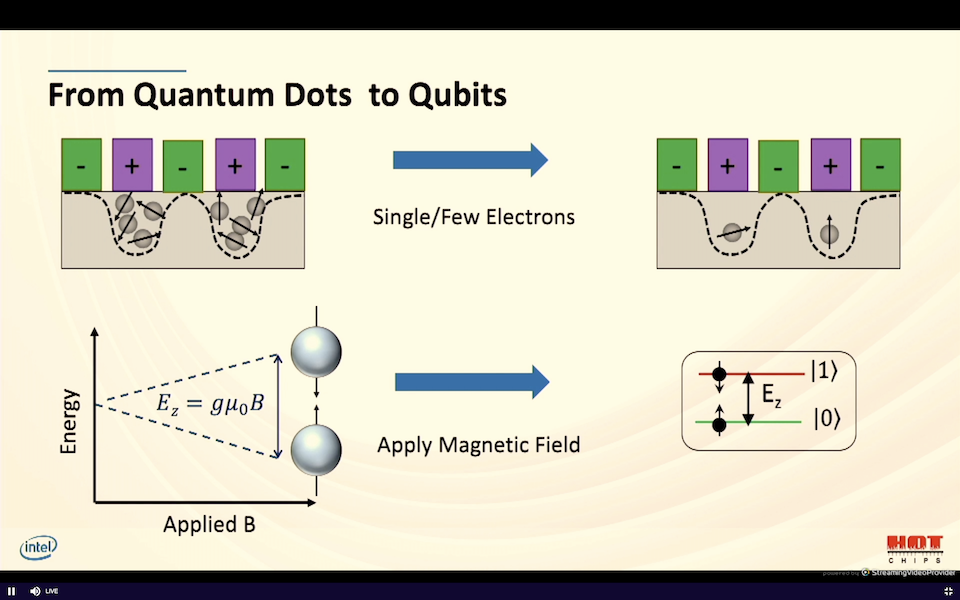

Intel pivoted to silicon quantum dots and spin-based qubits for many reasons. For starters, a silicon quantum dot looks a lot like a transistor. It has a source, gate and drain, and when you apply a potential, current flows through the device. Intel has developed technology to finely control the number of electrons flowing. Essentially, Intel fabs an array of transistors and creates pockets of electrons under several of the gates that are nearby (see slide). “I can tune these down by tuning the potential down to just a single or just a few electrons. I adjust the voltages on my transistor gates so that there’s only a single electron. This is done with an electromagnet inside one of the dilution refrigerators,” said Clarke.

“These individual electrons can either have a spin up or spin down that’s separated by a particular energy. The spins of the electrons, up or down, become the zero and one of our qubit. That’s how we encode our information [in] a spin qubit in silicon.”
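The energy gap Clarke mentions is, in the standard textbook picture, the Zeeman splitting set by the applied magnetic field, and it determines the microwave frequency needed to address the spin. The following is a minimal Python sketch of that relationship, not Intel's numbers: it assumes a free-electron-like g-factor of 2 (the value in a real silicon device differs slightly) and a hypothetical 1 tesla field that is not taken from the talk.

```python
# Physical constants in SI units (CODATA values).
BOHR_MAGNETON_J_PER_T = 9.2740100783e-24
PLANCK_J_S = 6.62607015e-34


def zeeman_splitting_joules(field_tesla, g_factor=2.0):
    """Energy gap between spin-up and spin-down in a magnetic field.

    g_factor=2.0 is an assumed, idealized value for illustration.
    """
    return g_factor * BOHR_MAGNETON_J_PER_T * field_tesla


def resonance_frequency_hz(field_tesla, g_factor=2.0):
    """Microwave frequency whose photon energy matches the spin splitting."""
    return zeeman_splitting_joules(field_tesla, g_factor) / PLANCK_J_S


if __name__ == "__main__":
    # Hypothetical field strength, chosen only to show the scale involved.
    field = 1.0
    print(f"{resonance_frequency_hz(field) / 1e9:.1f} GHz at {field} T")
```

Under these assumptions the splitting corresponds to roughly 28 GHz per tesla, which is why spin qubits of this kind are driven with microwave signals.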

The Intel quantum group is located at the Hillsboro, Oregon, site, which houses Intel’s advanced manufacturing, and the group taps into that infrastructure. “What you see on the left is a 300-millimeter wafer that’s been fabricated on a dedicated pilot line to produce quantum dots and qubits. Within these wafers we produce each die if you will, [and] each chip has multiple test structures. Instead of normal silicon we use silicon-28, [an] isotopically pure form of silicon. Another isotope of silicon, silicon-29, which is commonly found, causes our qubits to lose their information and affects the fragility of our qubits,” Clarke said.

Intel uses its FinFET technology to make the silicon quantum dot qubits and the early indications are the devices perform well. “We put the electron into the spin up position. We wait a period of time and we see what fraction of the electrons that we test are still in that spin up state. This is done many times, all at the single electron level, so this is a series of experiments. We watch how the energy of those electrons is lost. How long does it take before we go from a spin up electron to a randomized sea of electrons of spin up or spin down random orientation? This is known as the T1 energy decay rate,” he described.

T1 times are on the order of a second, which is impressive, according to Clarke.
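The measurement Clarke describes (prepare spin up, wait, check how many electrons are still spin up, repeat) can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than Intel's analysis: it assumes the surviving spin-up fraction follows a single exponential, exp(-delay / T1), decaying toward zero, and it uses simulated shots in place of real data. The function names, delays, and shot counts are hypothetical.

```python
import math
import random


def simulate_spin_up_fraction(t1_seconds, delay_seconds, shots, rng):
    """Fraction of simulated single-electron shots still spin-up after a delay.

    Assumes an idealized exponential survival probability exp(-delay / T1).
    """
    p_survive = math.exp(-delay_seconds / t1_seconds)
    survived = sum(1 for _ in range(shots) if rng.random() < p_survive)
    return survived / shots


def estimate_t1(delays, fractions):
    """Estimate T1 by fitting ln(fraction) = -delay / T1 through the origin."""
    pairs = [(t, math.log(f)) for t, f in zip(delays, fractions) if f > 0]
    slope = sum(t * y for t, y in pairs) / sum(t * t for t, _ in pairs)
    return -1.0 / slope


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the sketch is repeatable
    true_t1 = 1.0  # hypothetical value, in seconds
    delays = [0.2, 0.5, 1.0, 1.5, 2.0]
    fractions = [simulate_spin_up_fraction(true_t1, d, 2000, rng) for d in delays]
    print(f"estimated T1: {estimate_t1(delays, fractions):.2f} s")
```

Each simulated fraction stands in for the "series of experiments" at one wait time. With enough shots per delay, the fitted value lands close to the T1 used to generate the data.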

“Now that we can make a single electron device, let’s do something useful with that single electron. This is where we [make] a qubit. On the top layer of our device we have a microwave ESR line. By applying a microwave to that single electron, we can control whether that electron is in the up state or down state. Under some conditions, we can get coherence time of various types such as CPMG, as long as milliseconds,” he said.
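The microwave control Clarke describes can be pictured with the textbook model of a resonantly driven two-level system, in which the probability of flipping the spin oscillates with pulse length. The Python sketch below assumes an ideal drive that is exactly on resonance with no decoherence. The Rabi frequency is a hypothetical number, not a figure from the talk.

```python
import math


def flip_probability(rabi_frequency_hz, pulse_seconds):
    """Probability that an ideal, resonantly driven spin has flipped.

    Uses P = sin^2(pi * f_rabi * t); ignores detuning and decoherence.
    """
    return math.sin(math.pi * rabi_frequency_hz * pulse_seconds) ** 2


def pi_pulse_seconds(rabi_frequency_hz):
    """Pulse length that takes spin-down fully to spin-up in this model."""
    return 1.0 / (2.0 * rabi_frequency_hz)


if __name__ == "__main__":
    f_rabi = 1e6  # hypothetical 1 MHz Rabi frequency
    t_pi = pi_pulse_seconds(f_rabi)
    for label, t in [("half pi-pulse", t_pi / 2), ("pi-pulse", t_pi), ("2 pi-pulses", 2 * t_pi)]:
        print(f"{label}: flip probability {flip_probability(f_rabi, t):.2f}")
```

In this idealized model, choosing the pulse length selects the final state: a full pi-pulse flips the spin, a pulse half that long leaves it in an equal superposition, and two pi-pulses back to back return it to where it started.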

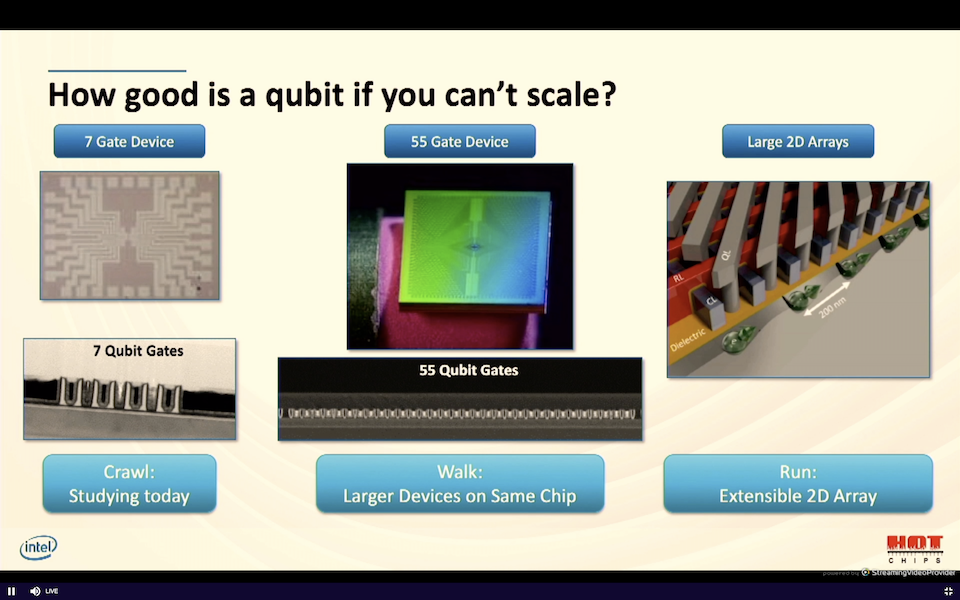

“Today we’re studying a seven-gate device. We’re essentially crawling (slide below). We have relatively few numbers of gates that we’re trying to study. Within the same mask that I showed you today, the same product, we have a 55-gate device. This is essentially leading to walking on larger devices on the same chip. And we have published ideas on extensible arrays of much larger. And so this would be the equivalent of running. But it’s not that easy,” he said.

Like their superconducting brethren, silicon quantum dots still need to operate in highly controlled cold environments, which means dilution refrigerators and squeezing a tangle of control wires inside. Intel tackled this problem by developing a mixed-signal controller chip that can operate at cold temperatures.

“If we were to have thousands of qubits, we would need several thousands of coax lines. It’s hard to imagine one of those fridges having several thousand wires going into it. There just isn’t enough space. We have developed a chip called Horse Ridge [named for the coldest place in Oregon] that we’re using to control our qubits. This is an integrated qubit control chip that operates at low temperatures. It’s a mixed-signal RF chip and we’ve used our expertise in quantum core design to develop this. We take into account our packaging and interconnect performance at low temperatures. All of this is using the Intel 22 nanometer FinFET technology, which is the best RF technology on the face of the earth.

“You can see at the very bottom of the image (below) on the right we have our qubit chip, and then a slightly higher part within the refrigerator, we have a control chip mounted on top of a PCB and installed in the fridge. And Horse Ridge has drive capability for the qubits,” said Clarke.

Interestingly, Clarke said Horse Ridge can support both superconducting and spin qubits, and that it allows frequency multiplexing and arbitrary pulse generation. “The key objectives are shown here (slide), we have to prove that the chip works at four Kelvin. We have to make sure it doesn’t fall apart at low temperature. We have to demonstrate that it can control qubits and that it’s in fact matched to room temperature operating equipment that you might buy off the shelf,” he said.

So far, “we’ve shown that we can match the Horse Ridge performance to room temperature electronics, and we’ve executed two-qubit algorithms. Demonstrating frequency multiplexing, that’s something that’s still in progress, but we’re well on our way,” said Clarke.
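Frequency multiplexing, in general terms, means sending several control tones at different frequencies down one shared line, so that the number of lines can grow more slowly than the number of qubits. The Python sketch below illustrates only that general idea and says nothing about how Horse Ridge implements it. The sample rate, tone frequencies, and amplitudes are hypothetical, and the frequencies are chosen to fit a whole number of cycles in the sampling window so the tones separate cleanly.

```python
import math


def multiplexed_waveform(tones, sample_rate_hz, n_samples):
    """Sum several (frequency_hz, amplitude) tones into one sampled waveform."""
    return [
        sum(amp * math.cos(2 * math.pi * freq * k / sample_rate_hz) for freq, amp in tones)
        for k in range(n_samples)
    ]


def tone_amplitude(waveform, freq_hz, sample_rate_hz):
    """Recover one tone's amplitude by correlating with a cosine at freq_hz.

    Exact only when every tone completes a whole number of cycles in the
    window, which is an assumption of this sketch.
    """
    acc = sum(
        x * math.cos(2 * math.pi * freq_hz * k / sample_rate_hz)
        for k, x in enumerate(waveform)
    )
    return 2.0 * acc / len(waveform)


if __name__ == "__main__":
    sample_rate = 1e9  # hypothetical 1 GS/s
    # Hypothetical tones, one per qubit sharing the same line.
    tones = [(50e6, 1.0), (80e6, 0.5), (120e6, 0.25)]
    line_signal = multiplexed_waveform(tones, sample_rate, 1000)
    for freq, _ in tones:
        print(f"{freq / 1e6:.0f} MHz: {tone_amplitude(line_signal, freq, sample_rate):.3f}")
```

Because the tones are orthogonal over the sampling window, each one can be picked out of the combined signal without disturbing the others, which is what lets a single wire carry control for more than one qubit.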

“Let me summarize. Quantum is going to change the world, but it’s going to require a large system, perhaps millions of qubits. Spin qubits are built on the same technology that transistors are built on today, and they have compelling performance. Finally, quantum computing won’t happen with brute force control or brute force wiring. We have to be elegant about that, and by tapping into our conventional computing capability with something like Horse Ridge (control chip able to work in cryo-environment), we feel that we’re going to get there. These are the areas that Intel is working,” said Clarke.

Intel’s quantum gambit is fascinating. Leveraging CMOS technology for scale would be a huge leap forward, if its silicon spin qubits work reasonably well and if effective cryo-controller chips can be developed. Indeed, selling cryo-controller chips might become a viable business on its own.