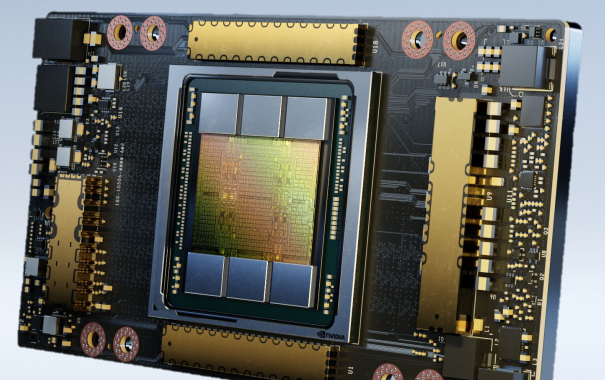

Nvidia has doubled the memory of its A100 datacenter GPUs with its new A100 80GB version, which aims to drive new levels of supercomputing performance in a wide variety of uses, from AI and ML research to engineering and more.

The new A100 80GB GPU comes just six months after the launch of the original A100 40GB GPU and is available in Nvidia’s DGX A100 SuperPod architecture and (new) DGX Station A100 systems, the company announced Monday (Nov. 16) at SC20.

The A100 80GB includes third-generation tensor cores, which provide up to 20x the AI throughput of the previous Volta generation with a new format TF32, as well as 2.5x FP64 for HPC, 20x INT8 for AI inference and support for the BF16 data format. Also included is faster HBM2e (high-bandwidth memory) with more than 2 terabytes per second of memory bandwidth, and Nvidia multi-instance GPU (MIG) technology that doubles the memory per isolated instance, providing up to seven MIGs with 10 gigabytes each. Third-generation NVLink and NVSwitch capabilities are also included, which provides twice the GPU-to-GPU bandwidth of the previous generation interconnect technology and accelerating data transfers to the GPU for data-intensive workloads to 600 gigabytes per second.

Systems powered by the new GPUs are expected to be available in the first half of 2021 or sooner from vendors including Atos, Dell Technologies, Fujitsu, GIGABYTE, Hewlett Packard Enterprise, Inspur, Lenovo, Quanta and Supermicro using HGX A100 integrated baseboards in four- or eight-GPU configurations, according to Nvidia.

Also unveiled by Nvidia Monday was its new DGX Station A100 machine, which the company calls an AI datacenter supercomputer in a box. Marketed as a petascale integrated AI workgroup server, this is the second-generation of the device, which originally debuted in 2017. The DGX Station A100, with 2.5 petaflops of AI performance, is aimed at machine learning and data science workloads for teams working in corporate offices, research facilities, labs and in home offices.

Nvidia also introduced its new Mellanox NDR 400 gigabit-per-second InfiniBand family of interconnect products, which are expected to be available in Q2 of 2021. The lineup includes adapters, data processing units (DPUs–Nvidia’s version of smart NICs), switches, and cable. Pricing was not disclosed. Besides the obvious 2X jump in throughput from HDR 200 Gbps InfiniBand devices available now, Nvidia promises improved TCO, beefed up in-network computing features, and increased scaling capabilities.

The A100 80GB GPU

Paresh Kharya, senior director of product management for accelerated computing for Nvidia, said the latest A100 80GB GPUs provide dramatic performance and efficiency gains for users when combined with all the optimizations in Nvidia’s software platform. For larger scale simulations, performance is 1.8 times faster compared to the A100 model the company announced six months ago.

Keeping to the same 400-watt thermal design parameter (TDP) as the A100 40GB, the A100 80GB is an SXM4 form factor GPU, available in four-way and eight-way HDX board configurations. Customers will still be able to get A100 GPUs in PCIe form factors, but only the 40GB memory variants, said Kharya.

“These are the same GPUs that are also available as a part of our HGX platform,” he said. “And our system maker partners are designing their servers based off of these HGX systems. And you can also expect them to be available more broadly into all kinds of form factors.”

For Nvidia, the new GPUs are part of the company’s approach as a full stack vendor, said Kharya. “We basically are ready to meet our customers at any level in our stack. DGX systems and SuperPods are at the top of our stack with [complete] turnkey solutions. However, if our customers want to buy and engage us at a different level, we engage them at our HGX baseboard level with these new products.”

The key to Nvidia’s latest components is that when they are combined with the company’s software, they provide supercomputer platforms that can help greatly expand scientific research and computing, Kharya told HPCwire sister publication EnterpriseAI.

“Scientific computing is no longer just about simulations,” he said. “The fusion of simulations with AI and data analytics is vital for the progress of science,” he said. “As our customers are designing their next generation supercomputers, it’s a really important consideration for them that they buy and create supercomputers that are not [just aimed] at running traditional simulations alone. [They need to be] great at all the computational methods that are being applied to advanced scientific progress, including AI and data analysis. And all of the all of these methods together, are needed in order to really make progress.”

Pricing for the new hardware will be determined by Nvidia system partners, he added.

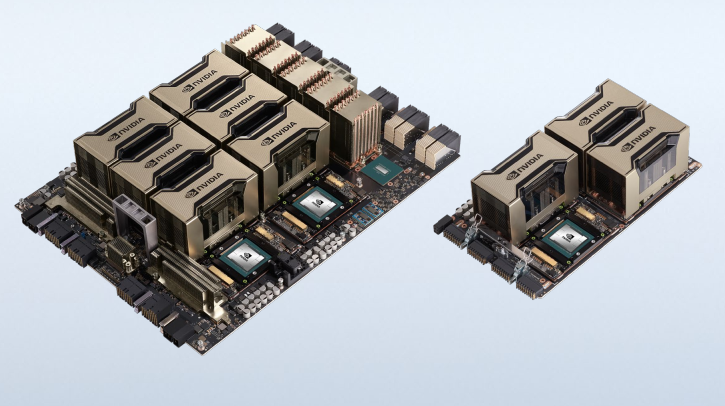

The DGX A100 640GB SuperPOD

The new Nvidia DGX A100 80GB A100s are implemented in Nvidia’s DGX SuperPod Solution for Enterprise, allowing organizations to build, train and deploy massive AI models on turnkey AI supercomputers in units of 20 DGX A100 systems.

The new Nvidia DGX A100 80GB A100s are implemented in Nvidia’s DGX SuperPod Solution for Enterprise, allowing organizations to build, train and deploy massive AI models on turnkey AI supercomputers in units of 20 DGX A100 systems.

The SuperPods are productized clusters of machines from 20 systems to 140 systems, and then in multiples of 140 after that, said Charlie Boyle, vice president and general manager of DGX systems for Nvidia. “It’s the fastest way for a customer to get AI infrastructure into their datacenter, built on top of the recipe that we run internally supported by Nvidia. Any problem that a customer could ever encounter, we have the exact same solution running inside.”

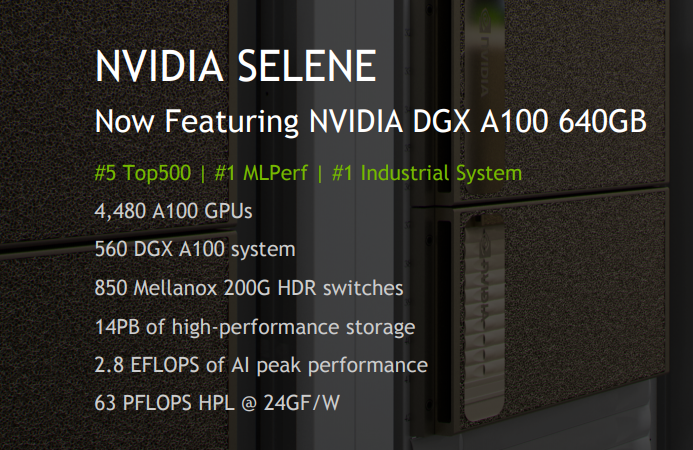

Nvidia in October shipped a number of DGX A100 640GB SuperPod systems to customers and says systems are in production now, including the University of Florida system that was announced in July (the result of a large endowment by UF alumnus and Nvidia cofounder Chris Malachowsky).

Nvidia’s in-house Selene supercomputer has already been upgraded with these new DGX A100 640GB units, boosting the system’s performance from 27.6 petaflops to 63.4 Lipack petaflops, moving Selene up two spots on the newly minted Top500 list into fifth place. Nvidia said that Selene’s DGX A100 320GB systems were upgraded to the 640GB boards to develop and test the customer upgrade process (discussed in more detail further below).

The new 640GB DGXs are also the foundation of the Cambridge-1 AI supercomputer, being built for biomedical research and healthcare. Announced by Nvidia in October, the UK-based supercomputer is scheduled to deliver more than 400 petaflops of mixed-precision AI performance (~8 petaflops of FP64 Linpack performance) with the first-generation components. The new system is named for University of Cambridge where Francis Crick and James Watson and their colleagues famously worked on solving the structure of DNA. It will feature 80 DGX A100s and is expected to be installed by the end of the year and provide access to collaborators in the first half of 2021.

The DGX machines are built in a modular fashion, said Boyle, which will allow customers of first-generation DGX A100 320GB systems to be able to upgrade their machines to the latest specifications with just with a tray change and some field updates. The upgrade, done by an Nvidia-authorized engineer swaps the existing GPU tray of a DGX A100 320GB system with the new 640GB version, and adds an additional 1TB of RAM, four more drives and a 10th CX6 NIC. The memory, drives and NIC are all added to existing open slots in the system.

“We expect those kits to be out starting in first quarter of next year for customers,” said Boyle. “We want to provide that investment protection for people.”

The DGX Station A100 Supercomputer In a Box

With 2.5 petaflops of AI performance, the latest DGX Station A100 supercomputer workgroup server runs four of the latest Nvidia A100 80GB tensor core GPUs and one AMD 64-core Eypc Rome CPU. GPUs are interconnected using third-generation Nvidia NVLink, providing up to 320GB of GPU memory. Using Nvidia MIG capability, a single DGX Station A100 provides up to 28 separate GPU instances to run parallel jobs and support multiple users without impacting system performance, according to the company. The latest DGX Station A100 is more than 4x faster than the previous generation DGX Station, according to Nvidia, and delivers nearly a 3x performance boost for BERT Large AI training.

Boyle said the DGX Station A100 brings customers supercomputers in a short time span, rather than taking months or years to build one on their own.

Prices for the DGX Station A100 are determined by Nvidia Partner Network channel partners who sell the systems for Nvidia, he said. Systems should begin shipping in late January, he added.

Analysts Give High Marks

Karl Freund, a senior analyst for machine learning, HPC and AI with Moor Insights & Strategy, said Nvidia’s A100 announcements are compelling.

“The move to HBM2e was expected, but it did surprise me that the company moved so quickly given the complete lack of any significant competitive pressures,” said Freund. “I suspect this was driven by customer requirements, especially for recommendation engines which demand massive tables and memory.”

And the improved DGX Station A100 product is also impressive and will be welcomed by developers in both HPC and AI, he said. “The net result is that Nvidia just raised the bar again and I don’t see anyone who can clear it to challenge their leadership.”

Another analyst, Addison Snell, principal of Intersect360 Research, said the expansion of memory capacity in the DGX A100 platform makes it suitable to a greater range of mixed-workload environments, which may appeal to traditional supercomputing labs that wish to expand their focus on AI. “That said, this is potentially predominantly attractive to the hyperscale market, which is still the primary driver of AI spending,” he said.