At the RISC-V Summit today, Art Swift, CEO of Esperanto Technologies, announced a new chip aimed at machine learning that contains nearly 1,100 low-power cores based on the open-source RISC-V architecture.

Esperanto Technologies, headquartered in Mountain View, Calif., with other sites across the U.S. and Europe, was founded in 2014 “with the goal of making RISC-V the architecture of choice for compute-intensive applications such as AI and machine learning.” Swift traced the new chip’s history back to 2017, when Dave Ditzel, Esperanto’s founder and chairman, laid out the company’s vision at the seventh RISC-V workshop.

At that workshop, Ditzel set a goal of “laying down 4,000 or more cores on a single device.” He called for pairing the simple RISC-V instruction set with innovation in custom microarchitectures and proprietary low-power design techniques. “In the three ensuing years, we’ve raised $77 million of venture capital and now are at the point of having completed our first design, the first of a family of AI processors based on RISC-V,” Swift said.

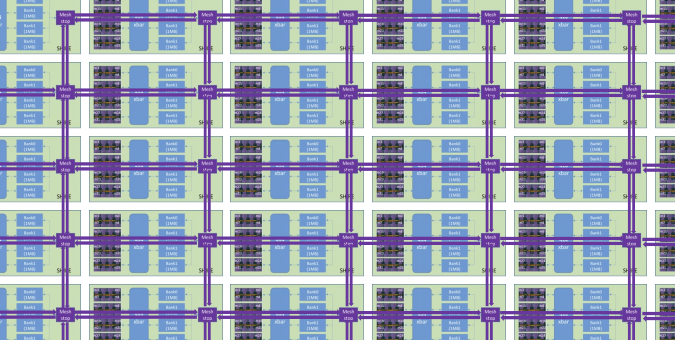

The new chip, called ET-SoC-1, contains two varieties of general-purpose 64-bit RISC-V cores: first, the ET-Maxion, a superscalar out-of-order core (4 per chip); second, the ET-Minion, a “leaner, energy-efficient” in-order multithreaded core containing a large coprocessor for machine learning applications (1,089 per chip, including one service processor).

The chip packs 23.8 billion transistors on TSMC’s 7nm process and is aimed squarely at hyperscale datacenter applications (“particularly inferencing,” Swift said). According to Swift, the chip’s general-purpose architecture is meant to shield customers from incompatibilities that could arise as ML models evolve over time.

As Swift explained it, in datacenter deployments the ET-Maxion cores are likely to take a back seat to the Intel or AMD host CPUs they accompany; in edge applications, however, the Maxions become much more important for keeping costs low.

The chips support PCIe 4.0 and up to 32GB of LPDDR4x memory, and Swift said Esperanto can fit up to six of the chips on a single PCIe card. As an example, Swift showed an open-source Glacier Point card with room for six ET-SoC-1 chips. (“This is the whole strategy we have, to leverage as much as possible the open-source community.”)
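For a sense of scale, the per-chip core counts above can be tallied for a fully populated six-chip card. This is a back-of-the-envelope Python sketch, not an Esperanto tool; it assumes every slot on the card holds an ET-SoC-1 and counts cores exactly as stated in this article (four ET-Maxions plus 1,089 ET-Minions, the latter including the service processor). The constant and function names are illustrative only.

```python
# Hypothetical tally based only on the figures quoted in this article.
MAXION_PER_CHIP = 4        # superscalar out-of-order ET-Maxion cores
MINION_PER_CHIP = 1_089    # in-order ET-Minion cores, incl. one service processor
CHIPS_PER_CARD = 6         # maximum ET-SoC-1 chips per PCIe card, per Swift


def cores_per_chip() -> int:
    """Total RISC-V cores on one ET-SoC-1, as counted in the article."""
    return MAXION_PER_CHIP + MINION_PER_CHIP


def cores_per_card(chips: int = CHIPS_PER_CARD) -> int:
    """Total RISC-V cores on a card carrying `chips` ET-SoC-1 devices."""
    return chips * cores_per_chip()


if __name__ == "__main__":
    print(cores_per_chip())                    # 1093
    print(cores_per_card())                    # 6558
    print(CHIPS_PER_CARD * MAXION_PER_CHIP)    # 24
```

By that count, one fully populated card would carry 6,558 RISC-V cores, 24 of them ET-Maxions.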

On the software side, “we support all the common machine learning frameworks,” Swift said, explaining that Esperanto uses Facebook’s open-source Glow compiler as a hub.

Because Esperanto had not yet worked with physical silicon, Swift shared performance data based on emulation of the chip. “When we measure our performance versus the actual measured performance of the incumbent solutions in the datacenter,” he said, “we find that we expect to deliver 50 times better performance on key workloads like recommendation networks and up to 30 times better than the incumbents for image classification.”

“But probably more exciting and more important,” he continued, “is the energy efficiency which we’re able to derive. We expect to see 100 times better energy efficiency in terms of inferences per watt than the incumbent solutions.”

Esperanto attributes ET-SoC-1’s performance and energy efficiency to several factors: RISC-V’s simplicity, the machine learning coprocessors on the ET-Minion cores, a “uniquely optimized” memory hierarchy and custom low-voltage circuits.

Swift repeatedly stressed that ET-SoC-1 is just the first member of Esperanto’s new product line, explaining that the chip’s tile-based design makes it easy to “scale up to more thousands of cores or scale down to hundreds of cores,” serving needs “from hyperscale datacenters to edge AI and everything in-between.”

Esperanto’s announcement comes on the heels of Nvidia’s deal to acquire Arm, which left many wondering if RISC-V would see a post-acquisition surge in interest and adoption. Esperanto is also entering an increasingly crowded market for inference chips, with competition from the likes of Xilinx, Mythic, Groq and Intel’s Habana Labs.

Header image: a cutout from a diagram of ET-SoC-1’s many, many RISC-V cores. Image courtesy of Art Swift.