In this regular feature, HPCwire highlights newly published research in the high-performance computing community and related domains. From parallel programming to exascale to quantum computing, the details are here.

Closing the cloud HPC performance gap

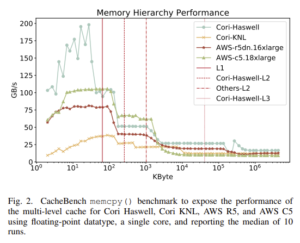

As HPC has become increasingly essential to many research and industrial processes, cloud HPC has become increasingly prevalent and powerful. However, these authors from UC Berkeley and Lawrence Berkeley National Laboratory write, “the question remains open whether cloud computing can provide HPC-competitive performance for a wide range of scientific applications.” Using hardware and software microbenchmarks and user applications, the authors conclude that high-end cloud HPC can deliver HPC-like performance “at least at modest scales.”

Authors: Giulia Guidi, Marquita Ellis, Aydın Buluç, Katherine Yelick and David Culler.

Introducing HPC to non-IT researchers

With the increasing ubiquity of HPC, it is becoming necessary to familiarize non-IT researchers with the operation of HPC technologies. These authors from Spain and Hungary assessed how a series of educational talks and hands-on training enabled non-IT researchers to start using HPC on a specific cluster, concluding that “academia and researchers would benefit from an environment that not only expects researchers to train themselves, but provides structural support for acquiring new skills.”

Authors: Bence Ferdinandy, Ángel Manuel Guerrero-Higueras, Éva Verderber, Francisco Javier Rodríguez-Lera and Ádám Miklósi.

Learning from the first petascale Arm supercomputer

The Arm-based Fugaku supercomputer now leads the Top500, but it was preceded by Astra, the Arm supercomputer that launched at Sandia National Laboratories in 2018. In this paper, a team of Sandia researchers explores the challenges encountered and lessons learned from the deployment and operation of the first petascale Arm supercomputer.

Authors: Kevin Pedretti, Andrew J. Younge, Simon D. Hammond, James H. Laros III, Matthew L. Curry, Michael J. Aguilar, Robert J. Hoekstra and Ron Brightwell.

Developing a new computational architecture for large-scale genomics

“Biomedical and biological research is increasingly shifting toward the collection and analysis of ever-larger datasets,” write these authors, a team from HP and the University of Bonn, stressing that, with returns from Moore’s Law diminishing, computing performance is failing to keep up with the increasing needs of genomic analysis. In this paper, they introduce memory-driven computing (MDC), “a novel and alternative in silico architecture that overcomes many of the limitations posed by current approaches.”

Authors: Matthias Becker, Hartmut Schultze, Kirk Bresniker, Sharad Singhal, Thomas Ulas and Joachim L. Schultze.

Deploying a next-gen tape archive for classified computing at Lawrence Livermore

In 2019, Lawrence Livermore National Laboratory (LLNL) found itself with “an expensive-to-maintain, aging and outmoded Oracle tape ecosystem,” writes Todd Heer, deployment team leader for the LLNL Data Storage Group. In this report, he outlines how LLNL replaced that tape system with a next-gen Spectra Logic tape system, a move that “increased bandwidth, capacity, and facility flexibility; realized a reduced cost in overall dollars per GB, inclusive of service and maintenance fees; and resulted in a smaller physical footprint when compared with the previous tape drive infrastructure.”

Author: Todd Heer.

Optimizing a high-resolution ocean model on a million Sunway TaihuLight cores

Sunway TaihuLight is the fourth most powerful publicly ranked supercomputer in the world. In this paper, written by a team from the Qilu University of Technology, the Shandong Computer Science Center and the Shandong Provincial Key Laboratory of Computer Networks, the authors describe how they scaled a high-resolution ocean model (POP2) to a million cores on the system, achieving over 5.5 simulated years per day, as compared to 1.4 simulated years per day on the unoptimized version.

Authors: Yunhui Zeng, Li Wang, Jie Zhang, Guanghui Zhu, Yuan Zhuang and Qiang Guo.

Using direct water cooling to reduce energy use at Lawrence Berkeley National Laboratory

At Lawrence Berkeley National Laboratory (LBNL), a large HPC cluster named Cabernet was retrofitted with an Asetek-provided direct water cooling system. This paper, written by two LBNL researchers and one engineer from kW Engineering, describes the process and examines the results: 4 percent energy savings for the datacenter as a whole and 11 percent energy savings for Cabernet alone. The researchers also report that savings “on the order of 15-20 percent” would be possible if the chilled water system weren’t used for rejecting the heat from the Asetek system.

Authors: Shankar Earni, Steve Greenberg and Walker Johnson.

Do you know about research that should be included in next month’s list? If so, send us an email at [email protected]. We look forward to hearing from you.