During the last couple of years the roll out of AI-specialized chips and systems has steadily gained steam. Today, for example, SambaNova, announced availability of its DataScale systems which are based on its ‘reconfigurable dataflow’ chip that the company says outperforms Nvidia’s A100 GPU on many, if not most AI tasks. SambaNova also announced a Dataflow-as-a-Service offering and provided more detail on its near-term market strategy.

While it’s early days for these AI tech startups, the first wave of entrants are winning deployments and bringing products to market. AWS recently announced instances based on the Habana Labs (now Intel) Gaudi chip. Cerebras, like SambaNova, has placed systems at national labs where a number of AI technology testbeds have sprung up in addition to the labs putting the chips/systems to useful work. Google’s TPU, of course, is in its fourth generation and has been in service at Google for several years. Just today, Esperanto unveiled an ML chip using RISC-V cores.

“The times, they are a changing” as Bob Dylan once wrote. The AI tech floodgates are indeed opening wide. SambaNova’s announcements mark its plunge into broader commercialization. Marshall Choy, vice president of product, provided HPCwire with a briefing. To a fair degree, SambaNova’s technology has already been widely covered. Here’s an excerpt from HPCwire coverage in the fall of Kunle Olukotun, SambaNova chief technical officer and one of three founders, describing the technology.

“We define a reconfigurable data flow architecture that’s optimized for data flow problems. So it takes these hierarchical [parallel] patterns and maps them to an architecture so they can be executed very efficiently. This is a reconfigurable architecture composed of reconfigurable compute, reconfigurable memory, and communication primitives that makes it very efficient to execute these sorts of data flow problems.

“The first incarnation of this reconfigurable dataflow architecture is the Cardinal SN10 reconfigurable data flow unit (RDU). This is implemented in TSMC seven nanometer technology and 40 billion transistors. Over 50 kilometers of wire provide all the interconnect between the different components on the chip. It provides hundreds of teraflops of compute capability, and hundreds of megabytes of memory on chip. Just as importantly, it has different direct interfaces to terabytes of memory off chip. We’ve combined these RDU chips into systems that provide scalable performance for both training and inference. We call them DataScale systems.”

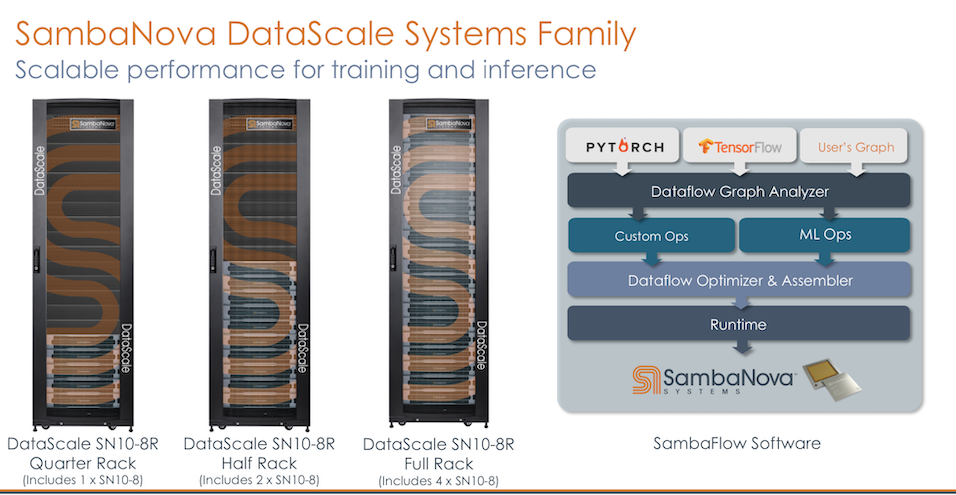

A big chunk of the magic is the SambaFlow stack and compiler which does the heavy lifting of porting applications to the chip.

Said Choy, “Our compiler does what the developer has to do with other architectures like with the GPU, [where] you do a lot of kernel by kernel execution things. That’s a lot of data movement between memory and GPU and the host. What our compiler does is extract the entire graph out of the what’s running on top of the framework. It breaks that down into common semantics and operators, and then lays out that graph in its entirety onto the chip and executes in a single continuous data flow manner.

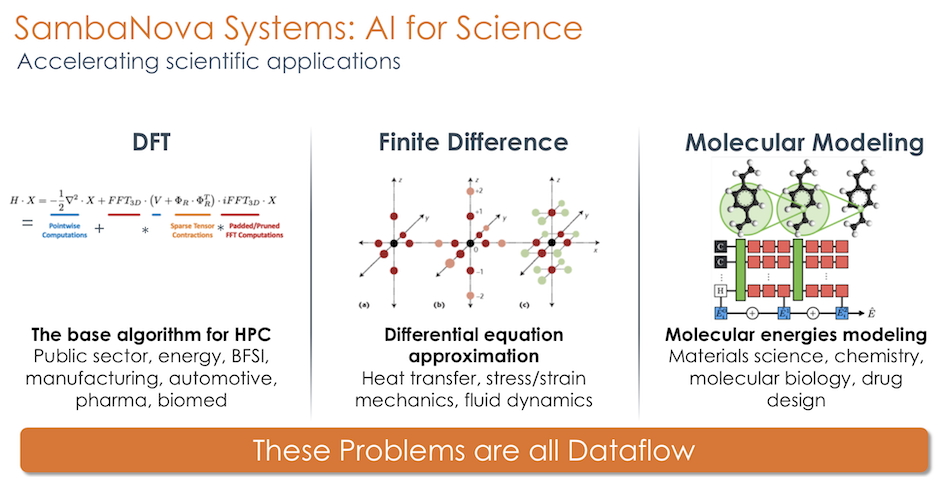

“As related to HPC applications, the SambaFlow stack has APIs not just from the PyTorch, and the framework level but also has interfaces for user graphs. We have customers who are doing things that have nothing to do with ML and frameworks. They’re running C and C++ graphs on the SambaNova – things like density functional theory, which is a workhorse workload for HPC. We have people doing finite differences type of calculations using our system and C and C++. So we’re already kind of moving into that HPC space.”

Currently, SambaNova hasn’t exposed low-level APIs to the outside world and Choy suggests the speedup provided by its standard compiler will be enough for most users.

All the systems come in racks and the current lineup includes: quarter rack (single system); half rack (two systems); and full rack (four systems) and each incudes networking and a management console. Choy said, “Part of the reason for this is that it’s how we’re able to get customers up and running very quickly. It literally ships in a rack, they literally roll the rack out and then roll it in place in the datacenter.”

“The other reason we ship it in the rack is from a customer support standpoint to avoid having to triage a bunch of local area network issues; in the past we spent a lot of time on that kind of stuff. Also, it creates a closed environment within the rack and customers are running exactly what I’m running in my labs. So when we’re doing nightly fault injection testing, and bi-weekly patch, regression testing, and all that kind of stuff, the odds are in my favor that I’ll find some things before the customers do, and can proactively address issues before it hits them in production,” he said.

SambaNova singled out the following system features:

- SambaNova Systems SambaFlow, a complete and open software stack that provides no lock- in and ease of use to improve developer productivity.

- SambaNova Reconfigurable Dataflow Unit (RDU), the industry’s next-generation processor built from the ground up to offer native dataflow processing.

- SambaNova Systems RDU-Direct, high-speed fabric that provides a low-latency, high-bandwidth direct connection between SambaNova Systems RDUs for maximum system throughput.

- 8-32 SambaNova Cardinal SN10 RDUTM processors per rack.

Choy said the company is currently focused on four use cases: “Natural language processing (NLP), high resolution computer vision, recommendation systems, and finally as a category of its own, we’re focusing on AI for science, which is probably a little bit of an open question, whether we’re talking about the convergence or the coexistence of HPC and AI, but it’s going to be somewhere in that realm.”

SambaNova released benchmark claims as part of its announcement all measured against Nvidia’s A100 according to Choy. The bullets here are from the official press release:

- Performance. World record DLRM inference 7x better throughput and latency than A100. World record BERT-Large training 1.4x faster than DGX A100 systems. World record state of the art accuracy of 90.23 percent out-of-the-box for high-resolution computer vision compared to DGX A100 systems.

- Accuracy. World record state-of-the-art accuracy of 80.46 percent for DLRM recommendation engines compared to Nvidia A100 GPUs.

- Scale. World record BERT-Large training and state-of-the-art accuracy at multi-rack scale.

- Ease of Use. From loading dock to datacenter, SambaNova DataScale quickly and easily integrates into any existing infrastructure running customer workloads in about 45 minutes. Download thousands of pre-trained Hugging Face Transformer models in seconds on SambaNova DataScale at state-of-the-art accuracy with no code changes required.

Nvidia, currently king of the GPU and AI hill, is the preferred marketing target for most of the young AI chip/system entrants. That’s not surprising given Nvidia’s market share. That being said, it seems like the newcomers should enter the MLPerf benchmarking efforts which Nvidia has owned. Choy downplays MLPerf arguing the expense and effort to participate are burdensome for a young company. SambaNova is a MLPerf (MLCommons) member and will likely participate in the future, he said.

The DataScale systems are network (InfiniBand and Ethernet) agnostic, said Choy, but given that Nvidia now owns Mellanox, he allowed that, “My preference is Ethernet. But we can do both. One of our strategies is to make sure things are easy to integrate. So we’re looking at everything from physical form factors and software interfaces and doing a lot of open source stuff, whether it’s PyTorch for frameworks, Kubernetes and Slurm for orchestration, Docker or Singularity for containers, or Red Hat and Ubuntu for the operating system. It’s all pretty standard stuff.”

SambaNova doesn’t so far support Slingshot or Omni-Path fabrics (now Cornelius Networks) but given its work with the national labs (Los Alamos, Lawrence Livermore, and Argonne) that could change as the need arises. It seems the tabs have generally been pleased as noted below by ANL’s Rick Stevens.

“At Argonne National Laboratory, we’re working on important research efforts including those focused on cancer, COVID-19, and many others, and using AI to automate parts of the development process is key to our success,” said Stevens, associate laboratory director at ANL. “The SambaNova DataScale architecture offers us the ability to train and infer from multiple large and small models concurrently and deliver orders of magnitude performance improvements over GPUs.”

The company also introduced Dataflow-as-a-Service today. It is being offered in three flavors, one for recommender, one for natural language processing, and one for high resolution computer vision. “This is a way for people to quickly and easily gain access to our technology, and pay for it with a cloud consumption model of monthly subscription billing. We will physically ship the system, install it behind their firewall, run it on their premises, and do the management support. That’s covered as part of the subscription,” said Choy. Pricing wasn’t disclosed.