Hewlett Packard Enterprise (HPE) today introduced a set of pre-configured HPC services via its HPE GreenLake platform with planned general availability in spring 2021. The new managed service offerings can be deployed on-premises in the customer’s own datacenter or in a colocation facility, bringing HPE’s HPC portfolio into the mainstream enterprise segment.

Responding to the charge that HPC systems are costly, complex and require specialized skillsets to implement and deploy, HPE says it can simplify the experience by speeding up deployment of HPC projects by up to 75 percent and reducing capital expenditures by up to 40 percent (citing a Forrester Consulting study commissioned by HPE).

HPE says that GreenLake customers pay for only what they use. Without providing exact pricing details, General Manager of GreenLake Cloud Services Keith White said HPE will offer an elastic, “true metering” model, based on storage and compute usage scenarios. “It’s along the lines of ‘how many gigabytes am I using?’ or ‘how many cores?’ — those types of scenarios,” he said. “Obviously that becomes more of an operational expense versus the full capital expense, if that’s required.”

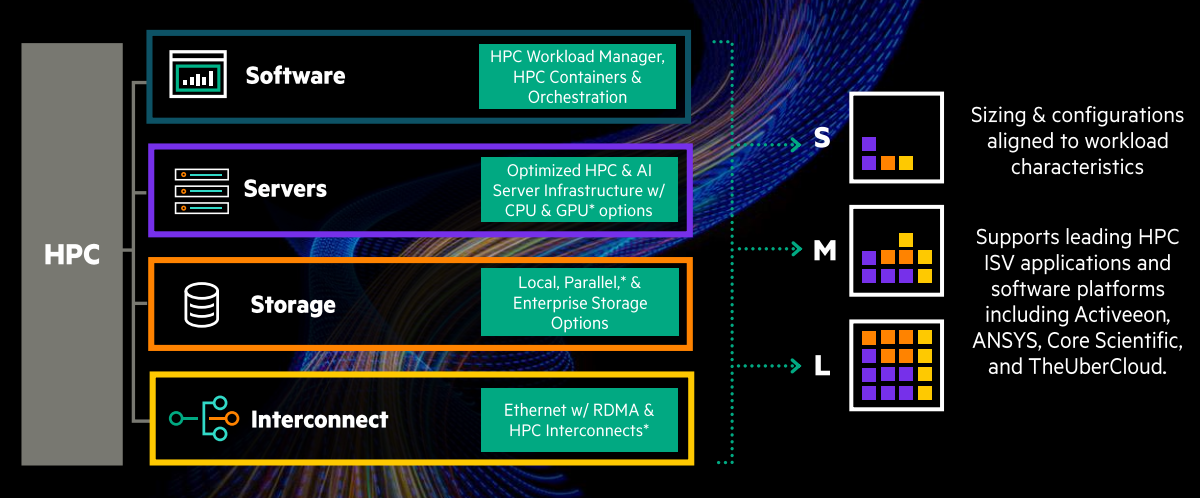

The fully managed, pre-bundled services will harness HPE’s software, storage and networking solutions, and will be sold in small, medium and large options. HPE plans to launch the offering globally in the spring with the small and medium sizes, based on HPE Apollo and ProLiant servers, and then expand to a large-size offering that will leverage the Cray product line and its technologies, including Slingshot, Cray’s high-speed interconnect, and the Cray programming environment.

The GreenLake HPC services will also leverage HPE Performance Cluster Manager and ClusterStor, HPE’s high-performance parallel storage system. Customers will be able to opt for GPU-based machines to meet the specific needs of both HPC and AI workloads.

GreenLake cloud services for HPC include access to the following:

• HPE GreenLake Central — offers an advanced software platform for customers to manage and optimize their HPC services.

• HPE Self-service dashboard — enables users to run and manage HPC clusters on their own, without disrupting workloads, through a point-and-click function.

• HPE Consumption Analytics — provides at-a-glance analytics of usage and cost based on metering through HPE GreenLake.

• HPC, AI & App Services — standardizes and packages HPC workloads into containers, making it easier to modernize, transfer and access data.

HPC at Any Size

With high-performance computing making strides into the enterprise, driven by AI and analytics, HPE is focused on providing the right-size resource with the right level of support, be it a managed service in the cloud, a managed service in an on-premises or colo environment, or a more traditional supercomputing installation. With a portfolio augmented by last year’s acquisition of Cray, plus GreenLake and the Pointnext cloud consulting services, HPE has lined up the pieces to back this strategy.

At the high-end, HPE is teed up to launch the exascale era in the United States with the scheduled deployment of Frontier at Oak Ridge National Laboratory in late 2021.

HPE’s broader strategy, however, is to use that same HPC technology to harness and tame the explosion of data happening in every enterprise, large and small, helping them process that data and unlock insights faster, according to Pete Ungaro, senior vice president and general manager of HPE’s HPC and mission critical solutions group.

“HPE wants to take these technologies that traditionally were at the pinnacle of the HPC market, and bring those down to small sizes and medium customers, hitting small and medium sized businesses or departments within larger corporations that don’t have a lot of traditional HPC expertise, where partners can bring not only a solution to them, but also the services that they can wrap around that to help them to implement,” said Ungaro.

Insights and the Market

Market watcher Addison Snell, CEO of Intersect360 Research, says HPE’s move to offer on-premises as-a-service offerings, including HPC capabilities, lines up with his firm’s analysis of HPC market trends.

“A growing proportion of HPC spending is moving to ‘cloud-like’ engagements that don’t fit in the standard cloud bucket,” he told HPCwire. “We’re seeing demand for things like SaaS or managed services contracts that have the benefit of utility pricing, but maintaining on-premises advantages such as data locality, data sovereignty, and control.”

Cloud-type cost models that favor OPEX over CAPEX are more attractive for businesses and organizations facing COVID-19-induced economic pressures. “The situation with COVID has really driven a lot of push for customers that are looking for a much more cost effective option to reduce costs, reduce capital outlay, and really maintain that free cash flow,” said White.

Snell pointed to HPE’s position as the leading provider of on-premises HPC systems and its established HPE Pointnext services as advantages for serving enterprise needs. “GreenLake HPC-as-a-service deployments leverage these to address this emerging segment that seeks to combine the advantages of on-premise and cloud,” he said.

Citing HPE’s share of more than 37 percent of the high performance computing market, Ungaro said HPE is approaching HPC cloud “fundamentally differently” from traditional cloud providers. “We start with our leadership HPC position in the market, and then bring that capability to a cloud infrastructure, rather than starting with a cloud infrastructure and trying to apply that to HPC,” he said.

Growth, HPC use cases, and engagement

GreenLake has seen significant growth, according to White. Since 2017, the business unit has grown from about 350 customers to more than 1,000, while overall contract value has nearly tripled over the same period to more than $4 billion. Notable customer wins include SAP, Kern County in California, Nokia and YF Life Insurance. On the partner side, White highlighted Accenture’s use of HPE GreenLake for its hybrid cloud offering (used by Accenture customers).

While GreenLake HPC-as-a-service won’t be widely available until next spring, HPE already has HPC customers using HPE GreenLake today. One of these is Zenseact (formerly known as Zenuity), a software developer for autonomous driving solutions based in Sweden and China. Zenseact relies on HPE’s GreenLake HPC services to perform 10,000 simulations per second, using driving data from its test cars to improve software design for safer autonomous vehicles.

Other use cases for the HPC-as-a-service offering include financial services, drug discovery and oil and gas exploration.

HPE is working with ISVs, such as ANSYS, to build end-to-end solutions in different vertical segments, and is collaborating closely with key platform providers such as Activeeon, Core Scientific and UberCloud, so that customers can deploy on workload-optimized platforms and containerize their applications running on GreenLake cloud services.

HPE says that customers can order and configure GreenLake services via a simple self-service portal and can get up and running in “as little as 14 days.” All GreenLake cloud services, including for HPC, are also available through HPE’s channel partner program, according to the company.

GreenLake versus Azure

HPE’s supercomputing-as-a-service offering with Azure (originated by Cray in 2017) is said to be on track and growing. The arrangement lets existing Azure customers order an HPE supercomputer (including ClusterStor storage) that is deployed in the Azure cloud and integrated with Azure services.

Ungaro clarified the differentiation between the HPE Azure setup and GreenLake HPC services:

“We’re seeing increased demand from customers who want that same capability [as provided by the Azure scheme] in their own datacenters, but they don’t want that size, they want a smaller, more bite-sized chunk,” he said.

“GreenLake allows these customers to have that versatility and that flexibility,” said Ungaro, adding, “I think what we’re going to see more and more is people that want to deploy some of that core capability on their own in their own datacenters or in a colo, and then at times when they have more increased needs, they may burst out into the public cloud, and may take advantage of some of the capability in that public cloud for those burst times but then have the core of their applications, the core of their data sets local, so they can get the best latency, the best turnaround times and most control over their environments.”

HPE cited plans to further evolve GreenLake cloud to allow customers to manage across all of these environments. “They’ll be able to manage their on-prem workloads, as well as up into multiple cloud environments, depending on what their needs and what their usage is,” explained Ungaro.