The integrated Fujitsu HPC/AI supercomputer, Wisteria, is coming to Japan this spring. The University of Tokyo is preparing to deploy a heterogeneous computing system, called “Wisteria/BDEC-01,” that will tackle simulation and big data “learning” workloads in support of Japan’s Society 5.0 project, which seeks to achieve economic and social gains through the integration of cyber and physical space.

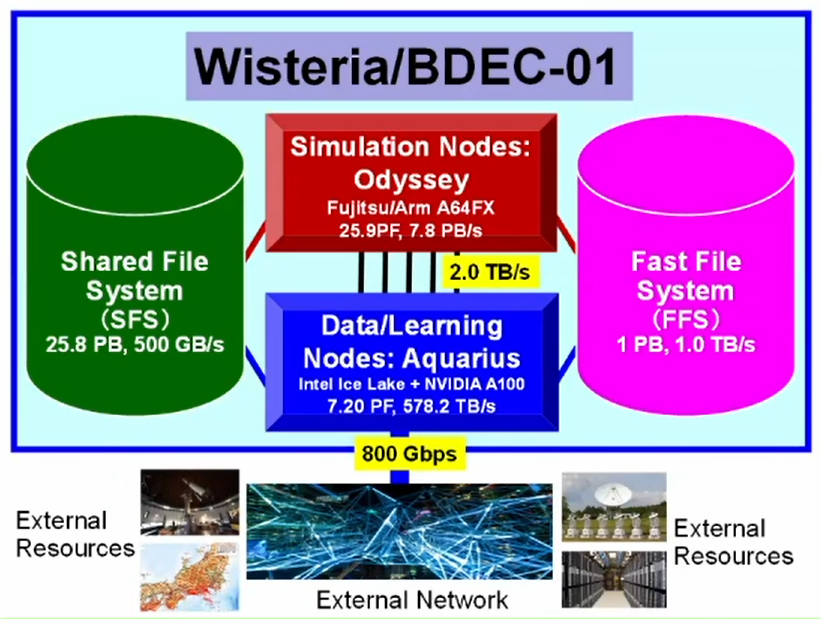

The system comprises two partitions: a simulation node group, called Odyssey, and a data analysis node group, called Aquarius. The names reference the call signs for the Apollo 13 command and lunar modules, respectively. Together the two partitions provide an aggregate 33.1 peak double-precision petaflops, and the larger cluster, Odyssey, will be one of the world’s fastest Arm-based machines, second only to Top500 leader Fugaku.

Odyssey spans 20 Fujitsu PRIMEHPC FX1000 racks, equipped with a total 7,680 nodes, each with one Fujitsu Arm-based 48-core “A64FX” CPU. The system delivers a total peak performance of 25.9 petaflops. With each node providing 32 GiB of HBM2 memory, Odyssey’s total memory capacity is 240 TiB, and the total memory bandwidth is 7.8 PB/sec. Nodes are connected by Fujitsu’s custom Tofu Interconnect D, with a bisection bandwidth of 13.0 TB/sec.
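The Odyssey totals can be sanity-checked from the per-node figures. The short Python sketch below uses only the numbers quoted above; the variable names are illustrative, and the per-node peak and bandwidth values are derived by simple division rather than taken from a Fujitsu specification.

```python
# Figures quoted in the article for the Odyssey partition.
NODES = 7_680
HBM2_PER_NODE_GIB = 32
PEAK_PFLOPS = 25.9
MEM_BW_PB_PER_S = 7.8

# Total memory: nodes x per-node capacity, converted from GiB to TiB.
total_mem_tib = NODES * HBM2_PER_NODE_GIB / 1024

# Derived per-node values (assumption: totals are spread evenly over nodes).
per_node_tflops = PEAK_PFLOPS * 1000 / NODES       # PF -> TF
per_node_bw_tb_s = MEM_BW_PB_PER_S * 1000 / NODES  # PB/s -> TB/s

print(f"total memory:       {total_mem_tib:.0f} TiB")
print(f"peak per node:      {per_node_tflops:.2f} TF")
print(f"bandwidth per node: {per_node_bw_tb_s:.2f} TB/s")
```

The memory total works out to exactly 240 TiB, and the derived per-node values come to roughly 3.4 teraflops and 1 TB/sec of memory bandwidth per A64FX node.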

Aquarius is based on GPU-heavy Fujitsu PRIMERGY GX2570 servers. The system comprises 45 such nodes, each housing two Intel Ice Lake CPUs and eight Nvidia A100 GPUs, delivering a combined 7.2 petaflops of peak double-precision performance. Nvidia Mellanox HDR 200 Gb/s InfiniBand ties the system together in a full-bisection-bandwidth configuration. The total system memory capacity is 36.5 TiB, and the total memory bandwidth is 578.2 TB/sec. External connectivity is provided over 25 Gb/s Ethernet at an aggregate data rate of 800 Gb/s.
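The same kind of back-of-the-envelope check shows how the two partitions add up to the 33.1-petaflops aggregate. This is a minimal sketch using only figures from the article; the names are illustrative, and the per-node value is a simple average that includes both the CPUs and the GPUs in each node.

```python
# Peak double-precision figures quoted in the article.
ODYSSEY_PFLOPS = 25.9
AQUARIUS_PFLOPS = 7.2
AQUARIUS_NODES = 45
GPUS_PER_NODE = 8

total_gpus = AQUARIUS_NODES * GPUS_PER_NODE
aggregate_pflops = ODYSSEY_PFLOPS + AQUARIUS_PFLOPS

# Average peak per Aquarius node (CPUs and GPUs together), PF -> TF.
aquarius_node_tflops = AQUARIUS_PFLOPS * 1000 / AQUARIUS_NODES

# Fraction of the aggregate peak contributed by the GPU partition.
aquarius_share = AQUARIUS_PFLOPS / aggregate_pflops

print(f"A100 GPUs in Aquarius: {total_gpus}")
print(f"aggregate peak:        {aggregate_pflops:.1f} PF")
print(f"peak per Aquarius node: {aquarius_node_tflops:.0f} TF")
print(f"Aquarius share of peak: {aquarius_share:.0%}")
```

By these numbers, Aquarius has 360 GPUs and contributes a little over a fifth of the combined peak, despite having far fewer nodes than Odyssey.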

A 100 Gbps EDR InfiniBand backbone connects Odyssey and Aquarius with 2 TB/sec of network bandwidth.

Wisteria/BDEC-01 leverages the Fujitsu Exabyte File System (FEFS), based on Lustre. There are actually two filesystems: the large shared filesystem (25.8 PB, 500 GB/s) and the high-speed NVMe filesystem (1 PB, 1 TB/s).

The system will support well-known HPC programming tools, including Fortran and C/C++ compilers, a Python interpreter, and the MPI communication library. “We provide libraries, tools and applications in a wide range of fields such as computational science, data science, machine learning and artificial intelligence,” notes the University of Tokyo in a press release.

There are still open technical issues around programming combined workloads. Given the mix of architectures (Arm64 and x86), it will not be possible to run a single MPI job over the two partitions, but it will be possible to run different workloads using the same job script.

Project lead Kengo Nakajima (University of Tokyo/Riken R-CCS) reviewed the system design and software goals during the Riken R-CCS International Symposium on Feb. 15. (The graphics in this article are from that presentation.)

Wisteria/BDEC-01 is the first system of Japan’s BDEC (big data and extreme computing) platforms. A “Hierarchical, Hybrid, Heterogeneous (h3) system,” it will introduce a new software platform called h3-Open-BDEC that facilitates the integration of simulation, data analysis and machine/deep learning (which the partners notate as S+D+L). The five-year software project is funded by the Japanese government with a budget of 157 million Japanese yen (about US$1.48 million).

“The h3-Open-BDEC is the first innovative software platform to realize integration of S+D+L (simulation, data and learning) on supercomputers in the exascale era, where computational scientists can achieve such integration without support by other experts in data analytics and machine learning,” said Nakajima.

The abstract for his talk provides additional detail. “The h3-Open-BDEC is designed for extracting the maximum performance of the supercomputers with minimum energy consumption focusing on (1) innovative method for numerical analysis based on the new principle of computing by adaptive precision, accuracy verification and automatic tuning, and (2) Hierarchical Data Driven Approach (hDDA) based on machine learning. The hDDA automatically constructs the simplified models for efficient generation of training data using Feature Detection, MOR, UQ, Sparse Modeling and AMR.”

Wisteria/BDEC-01 is scheduled to begin preliminary operations on May 14, 2021, with full production deployment slated for October 2021. The supercomputer will be used for various joint usage and research programs under the HPCI and JHPCN programs, and will support the goals of Society 5.0, which the government of Japan describes as “a human-centered society that balances economic advancement with the resolution of social problems by a system that highly integrates cyberspace and physical space.”

Wisteria/BDEC-01 is being installed at the University of Tokyo Information Technology Center, which provides high-performance computing resources to industry, academia and government institutions in Japan and overseas. The center operates Oakforest-PACS and Oakbridge-CX (both Top500 machines built by Fujitsu) and serves a community of about 2,600 users, from within and outside the university.

Another new University of Tokyo system, MDX, is set to become operational next month (March 2021). An SC20 poster describes MDX as a data platform that is cloud-like and “more for every day applications than big sciences.” A combination of general-purpose Intel Ice Lake CPU nodes and accelerated nodes, each equipped with two Intel Ice Lake CPUs and eight A100 GPUs, will deliver more than 8 peak double-precision petaflops of computing for industry-academia and government-academia collaboration activities.