Nvidia and VMware are bringing together the new Nvidia AI Enterprise software tool suite with VMware’s latest vSphere 7 Update 2 virtualization platform to make it easier for enterprises to virtualize their expanding AI workloads.

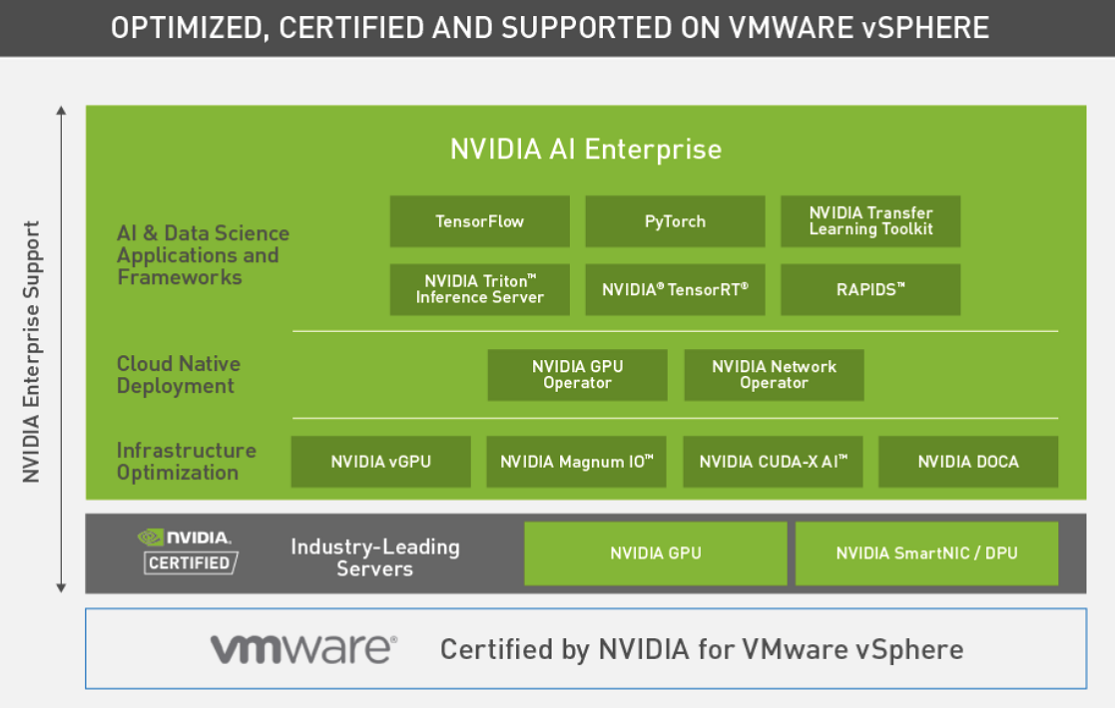

Nvidia’s new suite of AI tools and frameworks, which was announced March 9 (Tuesday), is built to run exclusively on VMware’s just released vSphere 7 Update 2, according to the companies. The combination of Nvidia AI Enterprise tools and vSphere 7 Update 2 means that AI workloads that have traditionally run on bare-metal servers can now run on VMware’s virtualization platform. This will give those workloads direct access to Nvidia’s CUDA applications, AI frameworks, pre-trained models and software development kits deployed on hybrid clouds, according to the companies.

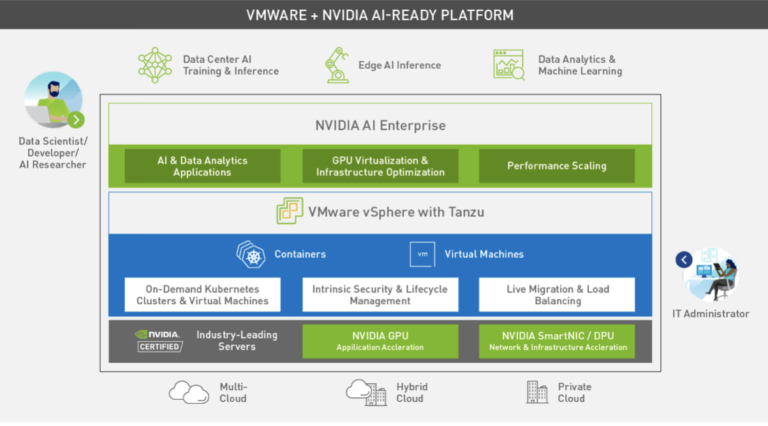

The latest Nvidia tools support data center AI training and inference, edge AI inference, and data analytics and machine learning workloads.

Until now, AI has been “an island of infrastructure where people had to [undertake a do-it-yourself] approach to setting it up and managing it,” said Justin Boitano, general manager of Nvidia’s enterprise and edge computing unit. “The partnership with VMware lets us really build the infrastructure that people are used to using [on vSphere], but really optimize it for AI so you don’t have to go create a siloed project to figure this out. We want to make it turnkey for IT administrators.”

The latest collaboration continues an initiative begun by the two partners last year to democratize AI for a wide range of users.

“We’re bringing an AI experience to developers and data scientists and similarly reaching out to our enterprise customers,” said Lee Caswell, vice president of VMware’s cloud platform business unit.

The newly updated version of vSphere is also certified to run Nvidia’s A100 Tensor Core GPUs. Nvidia said it would also support vSphere customers that acquire licenses for its new AI software suite.

The new Nvidia VMware collaboration gives vSphere hypervisor support for migrating to multiple GPU instances, allowing partitioning of A100 GPUs to as many as seven instances depending on workload requirements. That option would scale the training of AI workloads across multiple nodes, including large deep learning models that could now run on VMware Cloud Foundation.
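To make the partitioning idea concrete, here is a minimal sketch of how workloads might be packed onto GPUs when each GPU can be split into at most seven instances. It is an illustration only: the function name `pack_workloads` and the "slice request" model are hypothetical, and the sketch ignores the fixed instance profiles and placement rules that real A100 partitioning imposes.

```python
from typing import List

# Upper bound taken from the article: an A100 can be split into as many as seven instances.
MAX_INSTANCES_PER_GPU = 7


def pack_workloads(slice_requests: List[int]) -> List[List[int]]:
    """Assign slice requests to GPUs using first-fit decreasing.

    Each request is the number of GPU instances a workload wants (1 to 7).
    Returns one list per GPU holding the requests placed on it.
    This is a simplified model, not Nvidia's or VMware's scheduler.
    """
    for request in slice_requests:
        if not 1 <= request <= MAX_INSTANCES_PER_GPU:
            raise ValueError(f"request must be 1..{MAX_INSTANCES_PER_GPU}, got {request}")

    gpus: List[List[int]] = []
    # Place the largest requests first so small ones can fill leftover capacity.
    for request in sorted(slice_requests, reverse=True):
        for gpu in gpus:
            if sum(gpu) + request <= MAX_INSTANCES_PER_GPU:
                gpu.append(request)
                break
        else:
            # No existing GPU has room, so allocate another one.
            gpus.append([request])
    return gpus


if __name__ == "__main__":
    print(pack_workloads([3, 4, 2, 1, 7]))
```

Under this simplified model, one large training job can claim a whole GPU while several small inference jobs share another, which is the flexibility the partitioning option is meant to provide.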

Boitano said Nvidia’s AI software suite will make it easier to build AI models and scale them in enterprise data centers, reducing the time required to deploy AI models in production from 80 weeks to eight weeks.

Such AI workloads have traditionally been designed to run on bare-metal servers. The new Nvidia tools and updates to vSphere 7 change that, Boitano added. “The performance of vSphere is virtually indistinguishable from bare metal.”

Those gains are tied to support for Nvidia’s A100 GPU, yielding what the partners said was a 20-fold performance boost.

The push to democratize AI infrastructure also incorporates VMware’s Tanzu application service designed to provide vSphere support for container-based applications and Nvidia’s container tools. “You’ve got basically support for both containers and for [virtual machines] with a common operating model,” Caswell said. “What we’re doing is making sure that when new applications come in from a data scientist, developer or AI researcher, that they can apply all the same [vSphere] tools [via] a common view.”

The AI platform on vSphere is supported by Nvidia server partners Dell Technologies, Hewlett Packard Enterprise, Lenovo and Supermicro.

Along with A100 support, the vSphere 7 update also includes Nvidia’s GPU interconnects that provide direct access to GPU memory. “What we’re doing is using [GPU architecture enhancements] and effectively making sure the GPUs can talk to other GPUs across the network in a way that isn’t bottlenecked by any other chokepoints in the system,” Boitano said.

The enterprise AI platform is offered as a perpetual license at $3,595 per CPU socket. Nvidia said VMware customers can apply for early access as they upgrade to the latest version of vSphere 7.
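Because the license is priced per CPU socket, the cost of a deployment scales with the number of sockets rather than the number of GPUs or virtual machines. The short sketch below works through that arithmetic; the function name and the example cluster size are assumptions for illustration, and it does not account for support contracts, discounts or any other terms not stated here.

```python
# List price quoted in the article: $3,595 per CPU socket, perpetual license.
PRICE_PER_SOCKET_USD = 3595


def perpetual_license_cost(servers: int, sockets_per_server: int) -> int:
    """Return the total list-price license cost in US dollars.

    Hypothetical helper: multiplies the socket count by the quoted
    per-socket price and nothing more.
    """
    if servers < 0 or sockets_per_server < 0:
        raise ValueError("server and socket counts must be non-negative")
    return servers * sockets_per_server * PRICE_PER_SOCKET_USD


if __name__ == "__main__":
    # Example: a small cluster of four dual-socket servers.
    print(perpetual_license_cost(servers=4, sockets_per_server=2))
```

For the assumed four dual-socket servers, that is eight sockets at the quoted list price.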

Separately, VMware announced AI-related updates to its vSAN virtualized storage, including S3-compatible object storage geared toward machine learning and cloud native applications. Like the Nvidia GPU interconnect, the vSAN 7 update supports remote direct memory access. Along with boosting performance, RDMA is designed to increase resource utilization. The storage upgrade is also available now.