Microsoft Azure and Oracle Cloud Infrastructure (OCI) yesterday announced general availability (GA) of instances using AMD’s new third-generation Epyc (Milan) microprocessor. The news coincided with AMD’s formal launch of the new chip and marks the first time Azure or Oracle have debuted new instances on the same day the chip was announced. Other cloud providers – among them AWS, Google Cloud, Tencent, and IBM Cloud – have also announced plans to offer instances using the new processor.

“We’re very pleased with our progress in the cloud,” said AMD CEO Lisa Su at the virtual launch event. “Today, we have over 200 first and second generation Epyc instances available. [With] the third generation Epyc, we see even more opportunity to accelerate our cloud deployments.” Besides hyperscaler enthusiasm for Milan, several systems makers – Dell, HPE, Lenovo and Supermicro – have also announced new products based on third-gen Epyc, with some of those systems also available today.

With a single shotgun blast AMD has unleashed a product-rich ecosystem hoping to cash in on pent-up demand. For more on the new chip itself, see HPCwire coverage of the virtual launch led by Su, AMD Launches Epyc ‘Milan’ with 19 SKUs for HPC, Enterprise and Hyperscale.

Winning deployment in major cloud providers has become a critical element in success for today’s microprocessor supplier community. There will no doubt be a variety of instance types based on third-gen Epyc processors turning up quickly.

Both Azure and Oracle pre-briefed HPCwire on their plans for using the new chip. Both reported significant performance and TCO improvements (more below) compared to the earlier Epyc generation and to current-generation Intel-based instances. Azure also announced a new higher-security VM based on Milan.

Jason Zander, executive vice president, Azure, said, “Microsoft and AMD are jointly announcing the private preview of confidential computing VMs in Azure, running the AMD Epyc third-gen processors. Confidential computing builds on the strong encryption at rest and in transit capabilities to keep your data encrypted, all the way to the CPU. [Users] can easily take advantage of this added protection for their most sensitive workloads, without the need to rewrite or recompile them.”

Long-term collaboration with AMD, as well as technology roadmap decisions made by AMD, helped speed both Azure and Oracle efforts. Evan Burness, principal program manager, Azure HPC, told HPCwire, “One thing we very much appreciated is they have had socket continuity for three different processor generations. Naples to Rome to Milan are all based on the SP3 socket. That enables us to do planning years in advance around our motherboard and server platform, and that minimizes the amount of motherboard reengineering that has to occur every time a new processor comes.”

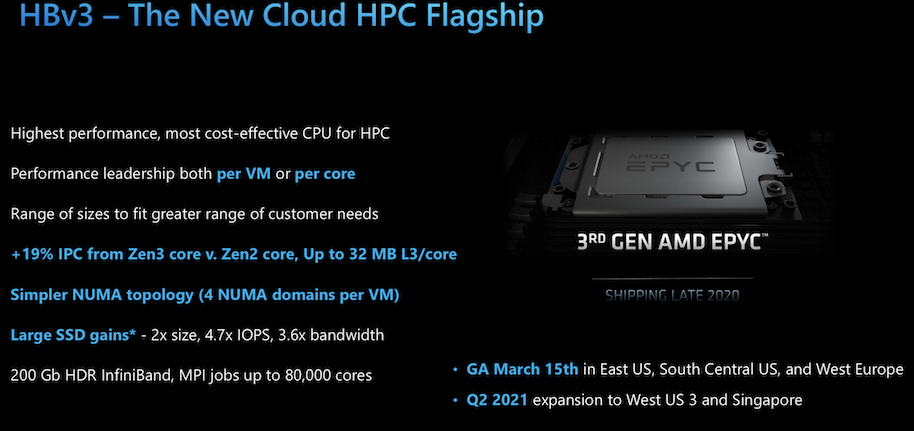

“This is the fastest VM introduction Azure has ever done for HPC. And in fact, for any new processor generation, period. As far as we can tell, a cloud provider has not been in GA day-and-date with a new processor technology launch,” said Burness, adding that Azure planned to stick to the so-called same-day launch cadence with AMD going forward.

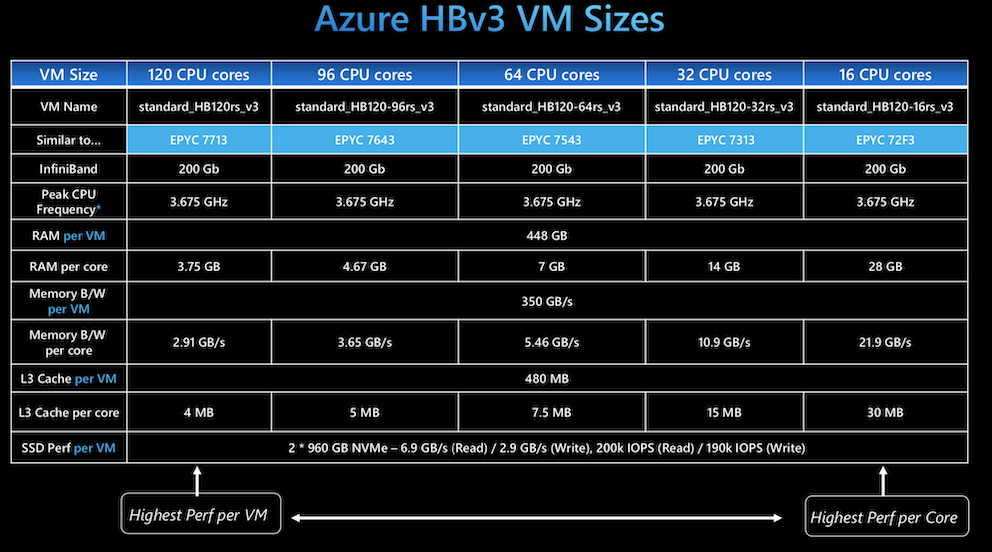

The details for many of the forthcoming cloud-based third-gen Epyc offerings are still being firmed up, but Azure (HBv3 instances) and OCI (E4 instances) have instances available now. Here are snapshots of their current lineups provided by the companies:

A blog by Rajan Panchapakesan, director of product management, OCI, presented an overview of the new Oracle lineup:

“These E4 standard instances use 64 core processors, with a base clock frequency of 2.55 GHz and a max boost of up to 3.5 GHz. The bare metal E4 standard Compute instance supports 128 OCPUs (128 cores and 256 threads) with 256 MB of L3 cache, 2 TB of RAM, and 100 Gbps of overall network bandwidth. This configuration is the highest core count for a bare metal instance on any public cloud. The memory bandwidth is well suited for both general-purpose and high-bandwidth workloads that require larger and faster memory.

“As demonstrated in the following performance benchmarks (see blog), the E4 Standard instances deliver up to a 15% increase in integer performance, a 21% increase in floating point performance, and a 24% increase in Java performance, compared to E3 Standard instances. Also, the E4 instances provide three times the price performance relative to other general-purpose instances offered by other cloud providers.

“New processors, better performance, and the same price as E3 Compute instances, combined with our flexible Compute approach, which enables you to granularly customize the core counts and memory of VMs, provide better value.”

Oracle says the E4 instances continue the flexible infrastructure approach established with E3: “You’re free to select the exact number of OCPUs and amount of memory that you need for a VM, not forced to choose from a fixed menu of 1, 2, 4, 8, or 16. You can launch any custom VM size that meets your needs, such as a 3-core, 6-core, or 63-core VM with anywhere from 1 GB–1 TB of memory.”

E4 instances bill separately for the CPU and memory resources provisioned. Each CPU comes with its associated simultaneous multithreading unit and is priced at $0.025, and memory is priced at $0.0015 per GB, the same prices as for E3 instances.
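As a rough illustration of how this per-resource billing adds up, the sketch below prices a custom VM from the two rates quoted above. The helper name is hypothetical, and because the text does not state the billing interval, the result is simply the cost per billing unit; this is not Oracle's pricing API.

```python
from decimal import Decimal

# Rates quoted in the article. The billing interval is not stated there,
# so results are "cost per billing unit" (an assumption of this sketch).
OCPU_RATE = Decimal("0.025")     # USD per CPU provisioned
MEMORY_RATE = Decimal("0.0015")  # USD per GB of memory provisioned


def e4_flex_cost(ocpus: int, memory_gb: int) -> Decimal:
    """Hypothetical helper: cost of one flexible E4 VM per billing unit."""
    if ocpus < 1 or memory_gb < 1:
        raise ValueError("a VM needs at least 1 CPU and 1 GB of memory")
    # CPU and memory are billed separately, then summed.
    return ocpus * OCPU_RATE + memory_gb * MEMORY_RATE


if __name__ == "__main__":
    # A 3-core VM with 24 GB: one of the odd sizes the flexible model allows.
    print(e4_flex_cost(3, 24))
```

Using `Decimal` rather than floats keeps the small per-GB rate exact when it is multiplied across large memory sizes.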

Oracle has early customers using E4 instances. Matt Leonard, vice president, product management at OCI, told HPCwire the E4 is being targeted broadly at business-critical applications such as web applications, back-end servers, gaming servers, caching, and application development.

“We’re also seeing customers use it for high performance, video encoding, anything like that. We have a global 5G which is doing live streaming. So that’s a production platform where they’re basically doing live sports broadcast. We’ve got a large global electronics manufacturer that is basically looking for a general-purpose business application. They’re evaluating the E4 against some of our other offerings because of its price performance. I have a European betting and lottery that’s done real time analytics in order to generate bets. We’ve got a large enterprise solutions provider in Europe that is looking for the price performance for large scale continuous development.”

Oracle says the following regions have E4 now, with further rollouts planned: US East (Ashburn); US West (Phoenix); India West (Mumbai); Switzerland North (Zurich); Brazil East (Sao Paulo); Canada Southeast (Montreal); Australia Southeast (Melbourne); Canada Southeast (Toronto).

Azure is also reporting favorable benchmarks. Burness told HPCwire, “With HBv3, we are able to provide about a 2.6x jump in per VM performance, as compared to what we delivered in our last generation of a 16-core HPC virtual machine, which was our Haswell introduction from 2016. This may sound like you know, 16 cores in some high-performance computing, but workloads around that size are actually a high percentage of the volume HPC jobs that we still see on Azure.”

Focusing on larger jobs, “What we’re seeing in our early testing, going up to more than 30,000 cores for an MPI job, is that Rome and Milan track really closely together for a while; then what happens is the doubled size and significantly re-architected cache structure of Milan kicks in compared to Rome. And we see performance advantages for at scale workloads, as high as 2x over Rome. It can be as low, at the low end (job size) of about 30 percent or 40 percent. You have to reach a certain level of scale before our high-end customers running MPI jobs – let’s call it 4,000 cores and 10,000 cores and up – when there are really big benefits from Milan in HBv3 as compared to Rome in HBv2.”

According to Burness, improvements to local SSD configuration are producing “3.5x to the nearly 5x” increase in local SSD performance. “A common refrain is storage in the cloud is very expensive, especially for on-premise buyers of high performance computing. Many of our customers are finding it is cost-effective to use the local storage in our HPC instances, to create file systems on a per job basis.”

Burness also posted a blog describing Azure’s HBv3.

One interesting change Azure has made is in the way it delivers HBv3 cores. In its last couple of generations of HPC, Azure has offered only one VM size, and that VM size, said Burness, “was constructed to be as much of the physical server as we could with as little held back for the hypervisor as we could technically get away [with].” The idea was to deliver bare metal or as close to bare metal performance as one could get. But it was only one VM size.

“We’re doing something different this time. In HBv3 we’re doing the same thing; you still see 120 cores and those are physical cores, the hyper threading is always turned off. It corresponds to a part like the Epyc 7713. We have a custom version of it, but it’s very similar to the Epyc 7713. But we’re cognizant we have this broad range of customer needs, who will want different configurations of processors. There’s nothing special about that statement. That’s why AMD doesn’t make only one processor SKU,” said Burness.

“What we’ve done is we’ve worked with AMD and our hypervisor team to create different VM sizes that very carefully hide certain cores to make the VM size look and perform as if it was another Milan SKU from AMD. We’re going to be one family, HBv3, and any of these sizes will have the same goodness in terms of global shared assets. They all have 200 gigabit InfiniBand, they all have the same board and 448 gigabytes of memory, they all have the same top-end memory bandwidth at 350 gigabytes per second, same top-end 480 megabytes of cache, same SSD, all those global shared assets stay constant. The only thing that changes is how many cores get exposed per VM,” he said.

Users now have more control over cost-performance issues. “If you want more memory per core than is offered on [the] 120-core size, go deploy a 96- or 64-core size to get that right-sizing. If you have an application that is extremely expensive on a per-core basis, and your HPC scenario is not maxing performance within a software licensing constraint, go deploy something like the 16- or 32-core VMs. You don’t have to get locked into any one of those. The intent is to offer something that’s sort of like a VM that’s right sized for every customer,” said Burness.
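To make the right-sizing trade-off concrete, the sketch below divides the shared per-VM resources Burness cites (448 GB of memory and 350 GB/s of memory bandwidth) across the core counts he mentions. The list of sizes is taken from the quotes above rather than from Azure's official size table, and the helper name is hypothetical.

```python
# Shared per-VM resources quoted by Burness; constant across HBv3 sizes.
MEMORY_GB = 448
MEM_BANDWIDTH_GB_PER_S = 350

# Core counts mentioned in the article (assumed here to be the full list).
CORE_COUNTS = [120, 96, 64, 32, 16]


def per_core_resources(cores: int) -> tuple[float, float]:
    """Return (GB of memory per core, GB/s of memory bandwidth per core)."""
    if cores < 1:
        raise ValueError("core count must be positive")
    return MEMORY_GB / cores, MEM_BANDWIDTH_GB_PER_S / cores


if __name__ == "__main__":
    for cores in CORE_COUNTS:
        mem, bw = per_core_resources(cores)
        print(f"{cores:>3} cores: {mem:5.1f} GB/core, {bw:5.1f} GB/s per core")
```

Under these assumptions the 120-core size works out to roughly 3.7 GB of memory per core, while the 16-core size gets 28 GB per core, which is the kind of gap that matters for memory-hungry or per-core-licensed applications.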

Azure, like Oracle, is using a custom 64-core version of the new AMD chip. According to Azure, the HBv3-series virtual machines (VMs) are generally available in the East US, South Central US, and West Europe Azure regions. HBv3 VMs will also be available in the West US 3 and Southeast Asia regions soon.

Google also made a short presentation during yesterday’s launch. Google Compute Engine introduced Epyc-based VMs last year on general purpose instances (N2D). Amin Vahdat, Google engineering fellow and vice president of systems hardware, announced plans to introduce third-gen Epyc processor-based instances later this year as well as plans to introduce confidential VMs leveraging Kubernetes.

“We are committed to helping customers on their digital transformation journeys. The key element of any digital transformation [is] security and isolation of workloads. That’s why we’re introducing confidential VMs and confidential GKE nodes, the first products in our confidential computing portfolio. They are breakthrough technologies and run on AMD Epyc,” said Vahdat.

Google Cloud plans to introduce a new compute-optimized VM called C2D based on third-gen Epyc processors. “[This will offer] new machine sizes for compute intensive workloads, such as high-performance computing. We will also extend our current general purpose offering, N2D, to third generation Epyc processors. When it launches, customers will be able to auto upgrade to that new CPU generation. Finally, confidential computing will be available on both C2D and N2D on the latest Epyc processors at the time of launch,” said Vahdat.

Yet another vote of hyperscaler support came from IBM which also announced plans to offer third-gen Epyc-based servers in the IBM Cloud. In a blog post, Suresh Gopalakrishnan, vice president of IBM Cloud platform hardware, wrote, “The I/O bandwidth capability of AMD Epyc 7763 is industry ideal for large-scale databases and commercial deployments — especially when the PCIe Gen4 comes into play. The support for NVMe drives via PCIe Gen4 lanes notably scales I/O and helps reduce data access bottlenecks.”

The Epyc 7763 will be offered on IBM’s Cloud bare metal server clients and will feature: 64 cores per CPU (128 cores per server); 128 threads per CPU (256 threads per server); 128 GB to 4096 GB RAM per CPU; base clock frequency of 2.4GHz with a maximum boost of up to 3.6GHz; 8 memory channels per socket (up to 16 DIMMs per server); up to 10 local storage drives supported; monthly, pay-as-you-use billing; and orderable via the global IBM Cloud Catalog, API or CLI.

IBM reported the new instances will be available sometime this spring.