Supercomputing is extraordinarily power-hungry, with many of the top systems measuring their peak demand in the megawatts due to powerful processors and their correspondingly powerful cooling systems. As a result, these systems are often also extraordinarily carbon-intensive – and efficiency measures are struggling to keep pace with, let alone make headway on, the rapidly accelerating demands of modern supercomputers.

As calls louden for supercomputers (and datacenters, broadly) to operate sustainably, how can the sector deliver on that imperative without sacrificing the crucial work performed on those systems? At the 11th annual Rocky Mountain Advanced Computing Consortium (RMACC) HPC Symposium, a panel (“Green Practices in HPC”) of experts tackled this question.

“Overall, I’d like to say this is a good news story,” said Aaron Andersen, a manager of advanced computing at the National Renewable Energy Laboratory (NREL) who previously spent nearly three decades with the National Center for Atmospheric Research (NCAR). Andersen illustrated how major advances in facility and vendor efficiencies had kept datacenter energy use relatively flat compared to a steep, hockey stick-like business-as-usual trajectory.

“But,” he said, “I think it’s more complex than that. … We’re now on a trajectory that, once again, I think we’re going to be consuming an awful lot of electricity. And I think anybody who manages a facility looks at some of the newer architectures and some of the bigger procurements and thinks, ‘Oof. We’re back on the hockey stick curve.’”

“The other bad news is that there’s not any real good news on processor performance,” he continued, citing the limits on clock frequencies being hit by CPU manufacturers. “In some regards, that means not only is it getting hotter and more power-consuming, but we need more of it to get greater performance – which is certainly not ideal.”

With processor performance not providing promising leads in efficiency, facility improvements – such as novel air and water economy measures and liquid cooling – have been picking up much of the slack. System-level improvements such as dynamic power management and accelerator integration have also provided substantial improvements.

But it’s not enough – at least, not forever – and the most apparent outside-the-fence tools have their own drawbacks, as well. Renewable energy is wonderful, of course – if your datacenter happens to be sited near a reliable source of baseload power, like the hydropower that will power CSC’s forthcoming Lumi system. And renewable energy credits, carbon offsets and other market mechanisms are undeniably affordable – but they largely represent a financial transaction, not a conversion of carbon-intensive energy to carbon-free energy, argued Andrew Chien, a senior computer scientist at Argonne National Laboratory.

For some, like Matt Koukl (a principal at Affiliated Engineers, Inc.), one of the most apparent solutions is shifting supercomputing workloads to a more desirable space.

“Location!” Koukl exclaimed. “We’re working on projects in the South right now, and that area versus somewhere like Idaho… there’s a significant driving factor in efficiency in being able to economize [your cooling]. If you’re thinking about a facility and where that facility should go, I guess I would kind of, you know, encourage a northern location if you’re looking for very high-density compute and high efficiency, like not having to run compressors all the time.”

Chien, though, argued for moving the workloads to a more desirable space and time to take advantage of a very specific resource.

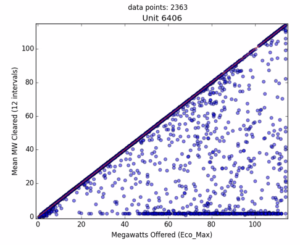

“The key is that the grid is nothing like it used to be 20 years ago, right?” Chien said. “There’s this phenomenon called stranded power … which includes the dispatch of all kinds of renewables and other volatile generators of power, where they offer power to the power grid and that power market combines the feasible generation with the load that’s required. And it turns out, surprisingly, [that they] often tell these renewable generators that they’re not allowed to put power into the grid or, much more often, that you can put power into the grid but we’re only going to pay you a negative price for that power.”

This stranded power, Chien cautioned, is not a large fraction of overall power generation – but it is, he said, large “compared to the kinds of power computing consumes, and that HPC, certainly, consumes.”

“We’re looking at [the stranded power] saying, ‘oh my god, there’s all this excess power that could be exploited,’” he said. “So we did a study trying to exploit that power, using this idea that HPC often doesn’t require continuously operating equipment. We have batch jobs, sometimes we have checkpointable jobs and so on, and most of our job queues are 24 hours or less, right? So we could actually tolerate some interruptions.”

In the study, Chien's team tasked two datacenters with an Argonne workload: one with a reliable power supply, the other powered predominantly by stranded power. The two achieved comparable turnaround times.

Furthermore, Chien said, the total cost of ownership could compare favorably with that of a traditional system: not only would system operators save on power, they could also invest less in cooling systems and related apparatus, since the system wouldn’t be running during the hottest days of the year when energy demand was at its highest.

“But,” Chien said, “you have to be willing to swallow this kind of intermittence.” This isn’t a major impediment in many cases, though: some locations, Chien explained, have around 90 percent availability of stranded power, with that power available for use in 24- to 72-hour windows. “[The intervals are] not like five minutes,” he said.
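To make the intermittence tradeoff concrete, here is a minimal sketch of how a checkpointable batch job might fare on power that comes and goes. It is not the method used in Chien's study. The function name `finish_hour`, the hourly availability list and the one-hour restart cost are all hypothetical, chosen only for illustration.

```python
from typing import Optional, Sequence


def finish_hour(
    available: Sequence[bool],
    work_h: int,
    restart_cost_h: int = 0,
) -> Optional[int]:
    """Return the hour (counted from 0) by which the job has finished.

    available[i] is True when power is available during hour i (hypothetical data).
    work_h is the compute time the job needs, in hours.
    restart_cost_h is the assumed overhead of reloading a checkpoint after
    an interruption. Returns None if the job cannot finish in the given horizon.
    """
    if work_h <= 0:
        raise ValueError("work_h must be positive")

    remaining = work_h
    overhead_left = 0
    was_running = False

    for hour, powered in enumerate(available):
        if not powered:
            # Power is gone: the job stops and keeps its last checkpoint.
            was_running = False
            continue
        if not was_running and remaining < work_h:
            # Resuming a job that already made progress costs a restart.
            overhead_left = restart_cost_h
        was_running = True
        if overhead_left > 0:
            overhead_left -= 1  # this hour is spent restoring state
            continue
        remaining -= 1
        if remaining == 0:
            return hour + 1
    return None


# Hypothetical trace: 3 hours of power, a 2-hour outage, then 4 more hours.
trace = [True] * 3 + [False] * 2 + [True] * 4
print(finish_hour(trace, work_h=5, restart_cost_h=1))
print(finish_hour([True] * 9, work_h=5))
```

In this toy trace, the job finishes a few hours later than it would on uninterrupted power. With power available in windows of 24 to 72 hours, as Chien described, such interruptions would be far less frequent relative to the length of a typical job.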

And, he said, there’s an even lower-tech way of doing the same thing: “You could just modulate your one datacenter to be more compatible with carbon emissions in the grid.” While not quite as effective in terms of carbon savings, simply choosing to operate workloads when the carbon intensity of the grid was lower rather than higher – such as when lots of renewable energy was online – could result in 15 to 19 percent reductions in carbon emissions.
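The single-datacenter approach can be sketched in a few lines: given an hourly forecast of grid carbon intensity, pick the start time whose window has the lowest total. This is an illustrative sketch only, not the procedure behind the 15 to 19 percent figure. The forecast values, the 100 kW constant draw and the function names are assumptions.

```python
from typing import Sequence


def best_start_hour(intensity: Sequence[float], duration_h: int) -> int:
    """Return the start index of the contiguous window of duration_h hours
    with the lowest summed carbon intensity (sliding-window minimum)."""
    if duration_h <= 0 or duration_h > len(intensity):
        raise ValueError("duration_h must be between 1 and len(intensity)")

    window = sum(intensity[:duration_h])
    best_sum, best_start = window, 0
    for start in range(1, len(intensity) - duration_h + 1):
        # Slide the window one hour: add the new hour, drop the old one.
        window += intensity[start + duration_h - 1] - intensity[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start


def job_emissions_kg(
    intensity: Sequence[float], start: int, duration_h: int, power_kw: float
) -> float:
    """Emissions in kg CO2 for a job drawing a constant power_kw.

    intensity is in gCO2 per kWh, so gCO2/kWh * kW * 1 h gives grams per hour.
    """
    return sum(intensity[start:start + duration_h]) * power_kw / 1000.0


# Hypothetical hourly forecast in gCO2/kWh (lower when more renewables are online).
forecast = [500, 480, 450, 300, 200, 180, 220, 400]
start = best_start_hour(forecast, duration_h=3)
print(start, job_emissions_kg(forecast, start, 3, power_kw=100))
print(0, job_emissions_kg(forecast, 0, 3, power_kw=100))
```

A real scheduler would also have to weigh queue wait times and deadlines against the carbon savings, which this sketch ignores.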

To watch this and other presentations from the 2021 RMACC HPC Symposium, click here.