Last October, the executive director of the EuroHPC JU – Anders Dam Jensen – said that with respect to MareNostrum 5 (the final pre-exascale EuroHPC system), “the tendering process [was] in its very final phase” and that “there [would] be announcements on that in the coming weeks.” Eight months later, the system’s status is still set to “it’s complicated.” Now, thanks to a brief presentation from the Barcelona Supercomputing Center operations director Sergi Girona at ISC21 today, we finally have some new details on the elusive system – even while much of its design remains in limbo.

First, some brief backstory (if you’re impatient to read about the new disclosures, skip ahead to “What we learned at ISC21”). Eight initial systems are being procured under the EuroHPC JU, which serves as the European Union’s concerted supercomputing play on the global stage. Five of these systems are decidedly petascale: Discoverer in Bulgaria; Vega in Slovenia; Deucalion in Portugal; Karolina in Czechia; and Meluxina in Luxembourg. Of those, all but one – Deucalion – are now listed on the Top500. The remaining three systems are the EuroHPC pre-exascale systems. Leonardo, hosted by Italy, and Lumi, hosted by Finland, have already been extensively detailed by their hosts.

The tumult of MareNostrum 5

The final (and most expensive) pre-exascale system is MareNostrum 5, set to be hosted by the Barcelona Supercomputing Center (BSC). That system has had a more troubled development path. “Coming weeks” came and went after Jensen’s October remarks, and by March 2021, Evangelos Floros – a program officer for the JU – was cautiously characterizing the MareNostrum tender as “pending” and “still an ongoing process.”

Even that may have been something of a positive take on the situation, which was quickly unraveling. Politico reported the drama: according to its sources, competing bids from Atos and IBM/Lenovo had brought the decision-makers to a deadlock, with advisors from the broader EU favoring Atos in order to boost the European computing supply chain and the Spanish stakeholders favoring IBM/Lenovo’s pitch, which seemingly offered a better bang for their buck. The process ground to a halt, ending with an inconclusive vote.

“The voting result did not achieve the needed majority to reach an agreement to adopt the selected tender,” reads the most recent decision of the EuroHPC JU’s governing board, issued in late May. “The lack of decision leads to the cancelation of the public procurement for the acquisition, delivery, installation and maintenance of Supercomputer MareNostrum 5[.]”

According to Politico, Jensen sent a letter to stakeholders suggesting that the tender was withdrawn because its specifications had become outdated in the interim, with COVID-19 highlighting the need for biomedical applications, drug development and other computational biology use cases. Speculation remains, however, that the political tensions between European supply chain interests and research interests led to the withdrawal. (To learn more about Europe’s work to build a homegrown computing supply chain, read HPCwire’s interview with Jean-Marc Denis, chair of the European Processor Initiative.)

At the time, a JU spokesman declined to comment on whether a new tender would be issued. But at the ISC talk, the message was clear: the Barcelona Supercomputing Center, at least, fully expects to complete MareNostrum 5.

What we learned at ISC21

Little had been known about MareNostrum 5: BSC had shared that the system would be heterogeneous; that they expected its peak performance to reach at least 200 petaflops; that its five-year cost would be €223 million; and that it would include an experimental platform intended to develop new technologies for future supercomputers.

According to Girona’s talk today, little – if anything – from those specifications has changed, and BSC is well on its way to completing the facility meant to host a new, major supercomputer. Girona first shared images of the cooling towers, which have a “total capacity of 17 megawatts of cooling” in order to serve “not only MareNostrum 5, but new components to come in the future.”

Furthermore, he said, “we have already in place and in production the transformers for the facility – a total of five of them, for a total capacity of 20 megawatts[.] We have in the final stages of installation the low-voltage switchboard room,” which he noted was accompanied by an empty row to allow for the possibility of doubling this capacity.

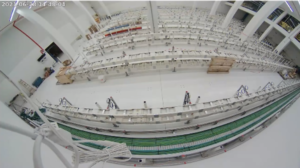

“And this is the status as of today of the compute room,” Girona continued. “You can see the dates on the pictures. … And we are just waiting on the deployment of the pipes that you can see some samples [of] in the middle of the room. So, we have almost finished the computer room for installing the system of MareNostrum 5.”

“I have still to explain to you the concept of MareNostrum 5,” Girona said, quickly showing a diagram of the system. A lot of information is packed into this image: MareNostrum 5, according to the diagram, will have at least two general-purpose partitions; an accelerated compute partition; a data pre- and post-processing partition; and at least two “emerging technology” partitions, in line with MareNostrum 5’s ambition to boost future supercomputers. These will be accompanied, according to the graphic, by high-performance storage connected to the BSC backup and archive systems.

The slide explains that the general-purpose partitions will be “open to all researchers with MPI, OpenMP codes [or] standard HPC codes” and will have “high scalability” across “thousands of nodes.” The accelerated partition, meanwhile, will scale across “thousands of GPUs,” and the emerging technologies partitions are targeted at “[preparing] workloads [for the] exascale era” and “exascale technology assessment.”

And just like that, it was gone from the screen.

“We have not finished the tender process,” Girona said. “As you know, the tender process was canceled due to changes on the specifications of the system. But we’re expecting that the relaunch of the procurement is in short [order],” pending action by the JU’s governing board.

“The timeline is not different,” he added. “The expectation is still that the system will be ready for installation at the end of this year, of some partitions.”