The U.S. National Strategic Computing Initiative (NSCI), launched in the summer of 2015 by executive order (EO) of the Obama Administration, was intended to be a “whole-of-government” effort to nourish a robust, national HPC infrastructure. NSCI spelled out five objectives, including embracing the already-started exascale computing initiative as well as providing early recognition of the rising importance of data analytics (i.e., AI) in HPC. A year later (2016), the NSCI Executive Council, co-chaired by OSTP and OMB, issued a formal plan, which was then updated in 2020.

So, how’s it going?

Last week the U.S. Government Accountability Office weighed in on NSCI progress with a formal review (High-Performance Computing: Advances Made Towards Implementing the National Strategy, but Better Reporting and a More Detailed Plan Are Needed). To some extent, the top-line findings aren’t surprising, with the biggest success seen as the exascale computing program and the biggest challenges seen as pursuit of unbudgeted mandates and lack of detailed implementation plans. GAO made two recommendations:

- The Director of OSTP should address each of the desirable characteristics of a national strategy, as practicable, in the implementation roadmap for the 2020 strategic plan or through other means.

- The Director of OSTP, in consultation with the 10 NSCI agencies, should prepare publicly available annual reports assessing progress made in implementing the 2020 strategic plan on the future advanced computing ecosystem.

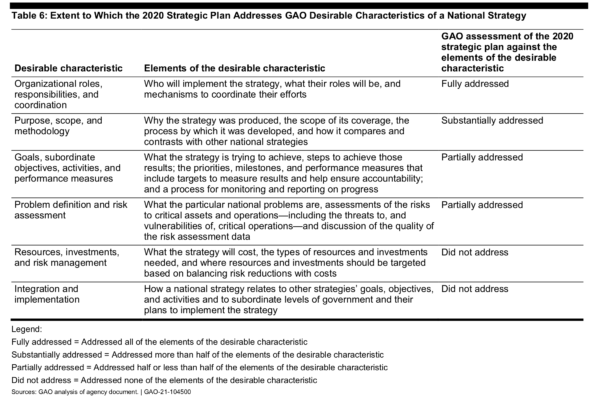

GAO further noted, “The 2020 strategic plan—which superseded the 2016 strategic plan—fully or substantially addressed two desirable characteristics of a national strategy identified by GAO to help ensure accountability and more effective results. For example, the plan described how agencies will partner with academia and industry but partially addressed or did not address four other characteristics, such as the resources needed to implement it or a process for monitoring and reporting on progress.

“OSTP and agency officials said they plan to release a more detailed implementation roadmap later in 2021 but have not described what details this plan will include. By more fully addressing the desirable characteristics of a national strategy through the implementation plan or other means, including reporting on progress, OSTP and agencies could improve efforts to sustain and enhance U.S. leadership in high-performance computing.”

GAO also acknowledged the tremendous public-private effort during the pandemic and the effectiveness of the COVID-19 HPC Consortium, which involved NSCI participants, and the early efforts towards creating a National Strategic Computing Reserve.

There’s a lot to digest in the GAO document, though much of the material will be familiar to the HPC community. With 2021 quickly winding down, it will be interesting to see how detailed the forthcoming implementation plan is. NSCI’s broad charge has always been daunting.

Given the scope of NSCI, it’s worth quickly reviewing the principles and objectives identified in the original NSCI executive order:

NSCI principles:

- The United States must deploy and apply new HPC technologies broadly for economic competitiveness and scientific discovery.

- The United States must foster public-private collaboration, relying on the respective strengths of government, industry, and academia to maximize the benefits of HPC.

- The United States must adopt a whole-of-government approach that draws upon the strengths of and seeks cooperation among all executive departments and agencies with significant expertise or equities in HPC while also collaborating with industry and academia.

- The United States must develop a comprehensive technical and scientific approach to transition HPC research on hardware, system software, development tools, and applications efficiently into development and, ultimately, operations.

NSCI objectives:

- Accelerating delivery of a capable exascale computing system that integrates hardware and software capability to deliver approximately 100 times the performance of current 10 petaflop systems across a range of applications representing government needs.

- Increasing coherence between the technology base used for modeling and simulation and that used for data analytic computing.

- Establishing, over the next 15 years, a viable path forward for future HPC systems even after the limits of current semiconductor technology are reached (the “post-Moore’s Law era”).

- Increasing the capacity and capability of an enduring national HPC ecosystem by employing a holistic approach that addresses relevant factors such as networking technology, workflow, downward scaling, foundational algorithms and software, accessibility, and workforce development.

- Developing an enduring public-private collaboration to ensure that the benefits of the research and development advances are, to the greatest extent, shared between the United States Government and industrial and academic sectors.

Gathering so many important HPC activities under a single umbrella, mostly without dedicated budgets, has proven consistently challenging. Recent efforts in quantum and AI are perhaps exceptions, winning both public support and funding. A fair amount of work has been done in all of the NSCI areas, some of it ad hoc, some of it as part of other programs; that said, there’s a sense among many that most of these efforts are only loosely coordinated by a formal NSCI framework.

GAO pointed out that while the 2020 NSCI update added details about how to integrate some of NSCI’s efforts, it “did not provide a cost estimate for its overall implementation, define funding needs, or provide cost estimates for its specific proposed objectives or activities. In general, we found that the plan did not include details, such as the level of agency resources and investments needed to support proposed actions. As a result, it is not clear how proposed actions will be funded and sustained in the future, which was one of the challenges to implementation of the 2016 plan that agencies cited.”

Shown below is GAO’s take on progress made on the 2020 update plan:

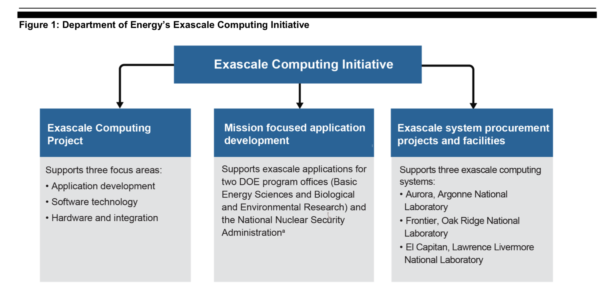

For each NSCI objective, the GAO report briefly walks through progress and what’s been spent. The biggest-ticket item so far has been the exascale initiative, where about $2.2 billion was obligated between fiscal years 2016 and 2020.

Looking at just site and non-recurring engineering costs, “According to DOE, total funding enacted to support the exascale facilities was about $897 million from fiscal years 2017 through 2020 – $338 million for Frontier, $480 million for Aurora, and $79 million for El Capitan – with higher amounts for Frontier and Aurora because they were further along in the construction process.”

There’s also been a lot of work on adapting and incorporating data analytics and AI (machine learning/deep learning) into HPC. GAO singled out a few examples: “Two lead agencies (DOD and NSF) and three deployment agencies (NASA, NIH, and NOAA) made efforts to increase coherence between modeling and simulation and data analytic computing. According to responses to our questionnaires and agency documents, examples of agency efforts included supporting programs to meet mission needs through HPC, investing in software and data analytics systems, using cloud computing to lower the barrier to entry for researchers to use HPC, and issuing funding opportunity announcements for grants and small business awards.”

One persistent question has been whether these efforts are NSCI-inspired or just good programs being co-opted under the NSCI nametag. Maybe it doesn’t matter. The lack of formal, annual NSCI progress reports makes that question harder to answer.

Not surprisingly, the NSCI was initially greeted warmly by the HPC community (see HPCwire coverage, White House Launches National HPC Strategy, and a guest commentary from HPC leaders Bill Gropp (NCSA) and Thomas Sterling (Indiana University), New National HPC Strategy Is Bold, Important and More Daunting than US Moonshot).

It will be interesting to watch how NSCI evolves with ‘completion’ of the exascale computing initiative, the rapid development of AI technologies, and with quantum computing seemingly not far behind.

Stay tuned.

Link to GAO report: https://www.gao.gov/products/gao-21-104500