In this regular feature, HPCwire highlights newly published research in the high-performance computing community and related domains. From parallel programming to exascale to quantum computing, the details are here.

Accelerating distributed deep neural network training on HPC clusters using DPUs

Training a deep learning model, this team from Ohio State University says, is traditionally done by executing the phases (data augmentation, training and validation) serially on CPUs or GPUs. In their paper, they explore the use of Nvidia’s new BlueField-2 DPUs, seeking to “characterize and explore how one can take advantage of the additional ARM cores on the BlueField-2 DPUs to intelligently accelerate different phases of DL training.” Their results show that their proposed designs could deliver up to a 15 percent improvement in deep learning training time.

Authors: Arpan Jain, Nawras Alnaasan, Aamir Shafi, Hari Subramoni and Dhabaleswar K. Panda.

Scaling containerization on multi-petaflops CPU and GPU HPC platforms

When implementing containers, these authors from the Texas Advanced Computing Center (TACC) say, “compatibility between certain elements of the container and host is required, resulting in a trade-off between portability and performance.” In their paper, they present TACC’s approach for building containers to ensure performance and security at scale, demonstrating “near-native performance and minimal memory overheads” in containerized environments across 6,144 nodes.

Authors: Amit Ruhela, Stephen Lien Harrell and Richard Todd Evans.

Establishing a high-performance AI ecosystem for Sunway supercomputers

These authors, hailing from China’s National Research Centre of Parallel Computer Engineering and Technology and two Chinese universities, seek to “meet the demand of large computing power for training complex deep neural networks” by establishing “an AI ecosystem on [the] Sunway platform to utilize the Sunway series of high performance computers[.]” They say the optimized accelerating library for deep neural networks they have developed, called SWDNNv2, shows high efficiency and scalability.

Authors: Sha Liu, Jie Gao, Xin Liu, Zeqiang Huang and Tianyu Zheng.

Illuminating the future role of HPC-powered AI in cardiovascular medicine

“Advances in [HPC] technology have reached the capacity to inform cardiovascular … science in the realm of both inductive and constructive approaches,” write these authors from Tokai University and the University of Texas Southwestern Medical Center. In their article, they discuss how HPC-powered AI can be used to “predict future [cardiovascular] events with the use of large scale multi-dimensional datasets” in a manner that informs the “mechanistic underpinnings” of the human body in the same manner as clinical trials while still allowing for individual-level event risk prediction.

Authors: Shinya Goto, Darren K McGuire and Shinichi Goto.

Moving toward efficient metagenomic analysis in HPC

“Clinical metagenomics is a technique that allows the search for an infectious agent in a biological tissue/fluid sample,” explain these researchers from Brazil’s Federal University of Health Sciences. “Over the past few years, this technique has been refined, while the volume of data increases in an exponential way.” Metagenomic analysis, they continue, defies traditional string search algorithms, and clinical diagnosis use cases demand that data processing occur as swiftly as possible. The researchers outline current techniques for accelerating searches in genomic data processing using HPC and explore “possible alternative computational paths” for streamlining metagenomic diagnosis.

Authors: Gustavo Henrique Cervi, Cecília Dias Flores and Claudia Elizabeth Thompson.

Simulating forest fires using HPC

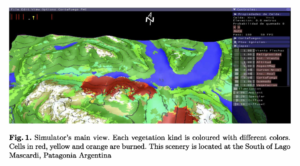

In this paper, a team of computer scientists, physicists, atmospheric and biological scientists and electronic engineers from five different Argentinian institutions presents an HPC-enabled “forest fire simulator with a visual interface that allows [testing] of different scenarios for fire propagation.” The authors describe how the application was developed for and in collaboration with firefighters and highlight a path toward using the simulator for real-world forest fire management.

Authors: Mónica Denham, Viviana Zimmerman and Karina Laneri.

Developing a dynamic power capping library for HPC applications

“As HPC systems increase in scale and capability, the cost of supplying power to these systems grows significantly,” write these authors from Argonne National Laboratory and the Illinois Institute of Technology. “This introduces the urgent need for energy efficient computing through the management of power consumption.” To that end, they debut DNPC, their Dynamic Node-level Power Capping library, which, given an application and a defined performance degradation limit, “dynamically follows the application’s power profile and adjusts the power cap, with the objective to minimize package level power consumption within the performance degradation threshold.”

Authors: Sahil Sharma, Zhiling Lan, Xingfu Wu and Valerie Taylor.

Do you know about research that should be included in next month’s list? If so, send us an email at [email protected]. We look forward to hearing from you.