Nvidia yesterday introduced Quantum-2, its new networking platform that features NDR InfiniBand (400 Gbps) and BlueField-3 DPU (data processing unit) capabilities. The name is perhaps confusing: it’s not a quantum computing device, even though Nvidia is getting into the true quantum computing market with its cuQuantum simulator. The name stems from the legacy line of Nvidia/Mellanox Quantum switches. That said, the new Quantum-2 platform specs are impressive.

Jensen Huang, Nvidia CEO, introduced the new platform during his keynote: “Quantum-2 is the first networking platform to offer the performance of a supercomputer and the shareability of cloud computing. This has never been possible before. Until Quantum-2, you [would] get either bare metal high performance or secure multi-tenancy. Never both. With Quantum-2 your valuable supercomputer will be cloud native and far better utilized,” said Huang, who then ticked through Quantum-2’s key features.

“Performance isolation keeps the activity of one tenant from disturbing others. A telemetry-based congestion control system keeps high data rate senders from overwhelming the network and jamming traffic for all. Generation three SHARP (scalable hierarchical aggregation and reduction protocol) has 32 times higher in-switch processing to speed up AI training. A nanosecond precision timing system can synchronize distributed applications like database processing, lowering the overhead of waiting and handshaking needed to avoid race conditions,” he said.
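The idea behind hierarchical aggregation can be illustrated with a toy model. The sketch below is not Nvidia's SHARP implementation or API; it only assumes that each switch in a tree sums the vectors arriving from its children and forwards a single result upward, so the number of aggregation levels grows with the logarithm of the host count. The function names and the `radix` parameter are hypothetical.

```python
from typing import List, Sequence, Tuple


def elementwise_sum(vectors: Sequence[Sequence[float]]) -> List[float]:
    """Sum equally sized vectors position by position."""
    return [sum(values) for values in zip(*vectors)]


def tree_reduce(host_vectors: Sequence[Sequence[float]],
                radix: int) -> Tuple[List[float], int]:
    """Toy hierarchical reduction.

    Each hypothetical switch combines up to `radix` child vectors into one.
    Returns the reduced vector and the number of aggregation levels used.
    """
    if not host_vectors:
        raise ValueError("need at least one host vector")
    if radix < 2:
        raise ValueError("radix must be at least 2")

    level = [list(v) for v in host_vectors]
    levels_used = 0
    while len(level) > 1:
        # One tree level: every group of `radix` neighbours is summed
        # by a single switch and replaced by that switch's output.
        level = [elementwise_sum(level[i:i + radix])
                 for i in range(0, len(level), radix)]
        levels_used += 1
    return level[0], levels_used


if __name__ == "__main__":
    gradients = [[1, 2], [3, 4], [5, 6], [7, 8]]
    print(tree_reduce(gradients, radix=2))
    print(tree_reduce(gradients, radix=4))
```

In this model, a higher-radix switch needs fewer levels to combine the same number of hosts, which is one intuition for why more in-switch processing could shorten collective operations in AI training.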

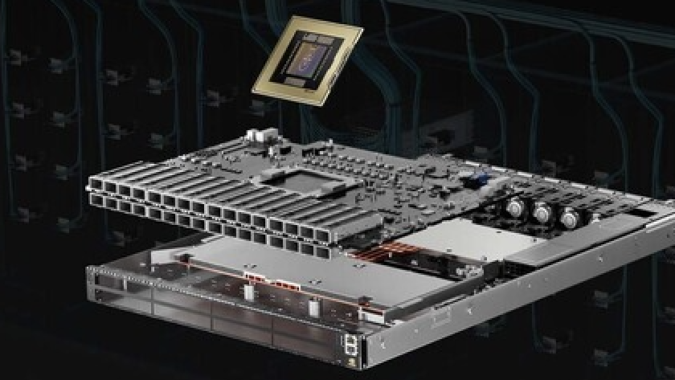

To some extent the new platform pulls together earlier technologies into a single package. Nvidia says, “Quantum-2 is the most advanced end-to-end networking platform ever built.” It comprises a “400Gbps InfiniBand networking platform that consists of the Nvidia Quantum-2 switch, the ConnectX-7 network adapter, the BlueField-3 data processing unit (DPU) and all the software that supports the new architecture.”

At the heart of the new Quantum-2 platform is the Quantum-2 InfiniBand switch, a 57-billion transistor chip fabricated using TSMC’s 7-nanometer process; it’s slightly bigger than Nvidia’s A100 GPU, which has 54 billion transistors. It features 64 ports at 400Gbps or 128 ports at 200Gbps and will be offered in a variety of switch systems with up to 2,048 ports at 400Gbps or 4,096 ports at 200Gbps, which Nvidia says is more than 5x the switching capability of the previous generation, Quantum-1. (See official announcement)
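The port figures above can be checked with a little arithmetic. The sketch below simply multiplies port count by per-port rate, which assumes a one-direction sum of line rates; vendors may count aggregate throughput differently. The helper name `aggregate_tbps` is ours.

```python
GBPS_PER_TBPS = 1000


def aggregate_tbps(ports: int, gbps_per_port: int) -> float:
    """One-direction sum of port line rates, in Tbps (a counting assumption)."""
    return ports * gbps_per_port / GBPS_PER_TBPS


# Single Quantum-2 switch chip, both port configurations quoted above.
switch_400 = aggregate_tbps(64, 400)
switch_200 = aggregate_tbps(128, 200)

# Largest switch systems quoted above.
system_400 = aggregate_tbps(2048, 400)
system_200 = aggregate_tbps(4096, 200)

# Transistor counts quoted above: Quantum-2 switch vs. A100 GPU.
transistor_ratio = 57 / 54

if __name__ == "__main__":
    print(f"switch: {switch_400} Tbps (400G ports), {switch_200} Tbps (200G ports)")
    print(f"system: {system_400} Tbps (400G ports), {system_200} Tbps (200G ports)")
    print(f"transistor ratio: {transistor_ratio:.3f}")
```

Under this counting, the two port configurations carry the same total bandwidth, so halving the port speed simply doubles the port count, and the switch chip has roughly 6 percent more transistors than the A100.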

Nvidia reported the Quantum-2 switch is “now available” from a wide range of infrastructure and system vendors around the world, including Atos, DataDirect Networks (DDN), Dell Technologies, Excelero, GIGABYTE, HPE, IBM, Inspur, Lenovo, NEC, Penguin Computing, QCT, Supermicro, VAST Data and WekaIO.

Interestingly, Nvidia is offering two versions of the new Quantum-2 networking platform: “The Nvidia Quantum-2 platform provides two networking end-point options, the Nvidia ConnectX-7 NIC and Nvidia BlueField-3 DPU InfiniBand.”

ConnectX-7 is being billed by Nvidia as the world’s leading HPC networking chip, doubling the performance of ConnectX-6. Nvidia says it also doubles the performance of RDMA, GPUDirect Storage, GPUDirect RDMA and In-Network Computing. ConnectX-7 will sample in January. The BlueField-3 DPU will sample in May.

Nvidia didn’t specify different use cases for the two versions of the Quantum-2 platform, although private and public cloud providers are obvious markets along with large supercomputing centers. The expectation is that over time, there will be a preference for the DPU version.

“The DPU includes the ConnectX-7 IP, and [adds] Arm cores, more acceleration engines, and on-board memory. We are seeing [a] great ecosystem being developed around the DPU, using it for scientific applications offloads and accelerations, for infrastructure workloads, for security and isolation and so forth,” said Gilad Shainer, senior VP of marketing for networking, Nvidia.

“As the eco-system is being developed, we provide our customers with the flexibility to use the DPU or the ConnectX [version], based on their data center applications requirements. In the future, we believe that the DPU will become the default network adapter, for both compute and storage connectivity,” he said.

Nvidia also introduced DOCA 1.2, the latest version of its tool suite for the BlueField DPUs, and showcased a few benchmarks. During his keynote, Huang also emphasized the steady expansion of the DPU ecosystem.

It will be interesting to see how the Quantum-2 platform-plus-DPU gambit plays out. Nvidia has consistently argued that its DPU-based offerings constitute more than a smartNIC. Nvidia contends the ability to offload key infrastructure chores such as networking, storage, and security management – which consume as much as 30 percent of CPU time in large datacenters and the cloud according to at least one study – is a critical requirement for advancing datacenter performance.

Huang is doubling down on that idea. During a post-keynote media Q&A held today (Wednesday), Huang said, “Quantum-2 is going to be the next-generation nervous system, the fabric, for large computer systems.”

Other chipmakers have jumped into the fray, for example Intel with its IPU (infrastructure processing unit). See HPCwire coverage from Hot Chips this year, “Here Come the DPUs and IPUs from Arm, Nvidia and Intel.”

Link to Nvidia press release, https://www.hpcwire.com/off-the-wire/nvidia-quantum-2-takes-supercomputing-to-new-heights-into-the-cloud/