Nvidia today reported its BlueField-2 DPU delivered 41.5M IOPS on a storage benchmarking test and declared that it delivers “more than 4x more IOPS than any other DPU.” Nvidia issued the claim to counter last month’s announcement from Fungible that its DPU-based, 10M IOPS storage demonstration, conducted with the San Diego Supercomputing Center, set new NVMe and TCP records for storage initiator performance.

The timing of Nvidia’s announcement, of course, isn’t coincidental. “People are out making claims that they have breakthrough performance with something like 10 million IOPS and [that it’s] the best performance in the world. Frankly, we just don’t see it that way,” Kevin Deierling, SVP of networking at Nvidia, told HPCwire. Nvidia posted a blog today describing the work.

Nvidia reported, “The BlueField-2 DPU delivered record-breaking performance using standard networking protocols and open-source software. It reached more than 5 million 4KB IOPS and from 7 million to over 20 million 512B IOPS for NVMe over Fabrics (NVMe-oF), a common method of accessing storage media, with TCP networking, one of the primary internet protocols.”

While the Nvidia and Fungible demonstrations spotlight DPU-based storage solutions, they suggest a broader battle among DPU providers is brewing. The basic premise is that DPUs – data processing units – may be able to cut costs and accelerate host system performance by absorbing tasks usually handled by the host CPU. The most commonly-cited applications targeted for DPUs include network, storage, and security management. (See HPCwire coverage, Hot Chips: Here Come the DPUs and IPUs from Arm, Nvidia and Intel.)

It is still early days for DPUs. Nvidia (via Mellanox) has probably promoted the DPU concept the longest. Nvidia’s BlueField DPU line is certainly the most mature of current DP offerings. Fungible is a relatively young start-up founded in 2015; it has a DPU and several early solutions. Intel and Arm also have forays into the DPU market. Intel calls its offering an infrastructure processing unit (IPU).

Deierling said, “We’ve focused a lot on cybersecurity, zero trust, and those sorts of accelerations. The storage piece is table stakes for us. Frankly, a ton of our [Bluefield] design wins are in the storage space and we don’t talk enough about it.”

Nvidia’s recent benchmarking exercises were run using BlueField-2, which is available now. BlueField-3 isn’t due until the spring, likely in time to talk more about at GTC22. Below is an excerpt from today’s Nvidia blog describing the methodology used:

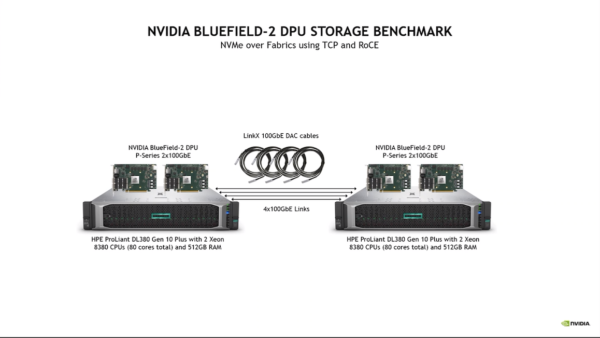

“This performance was achieved by connecting two fast Hewlett Packard Enterprise Proliant DL380 Gen 10 Plus servers, one as the application server (storage initiator) and one as the storage system (storage target).

“Each server had two Intel “Ice Lake” Xeon Platinum 8380 CPUs clocked at 2.3GHz, giving 160 hyperthreaded cores per server, along with 512GB of DRAM, 120MB of L3 cache (60MB per socket) and a PCIe Gen4 bus.

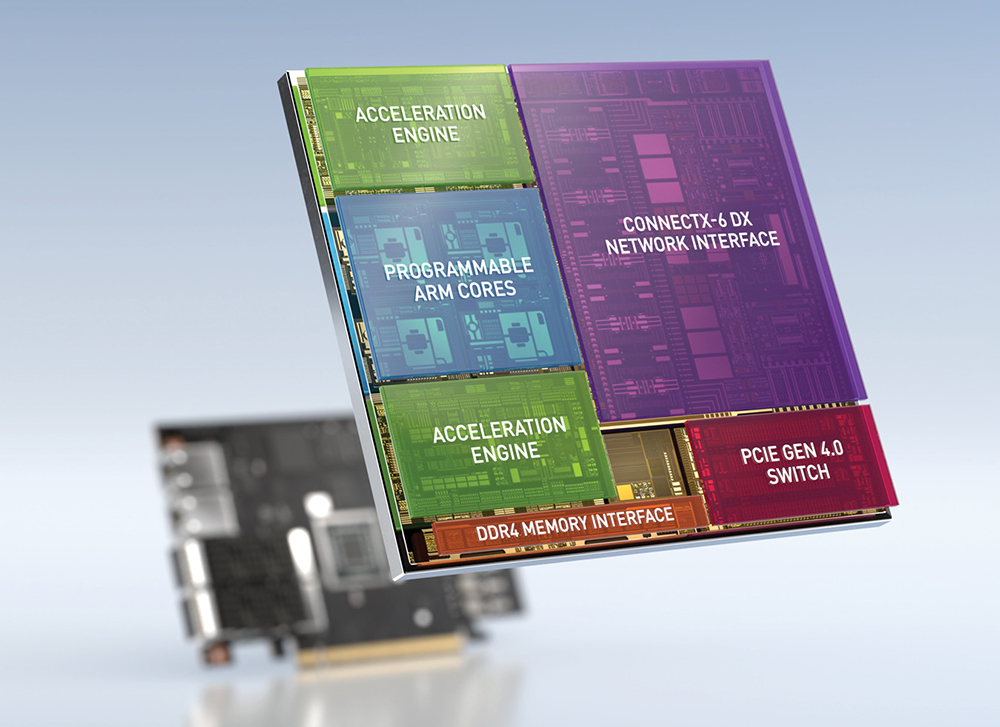

“To accelerate networking and NVMe-oF, each server was configured with two NVIDIA BlueField-2 P-series DPU cards, each with two 100Gb Ethernet network ports, resulting in four network ports and 400Gb/s wire bandwidth between initiator and target, connected back-to-back using NVIDIA LinkX 100GbE Direct-Attach Copper (DAC) passive cables. Both servers had Red Hat Enterprise Linux (RHEL) version 8.3.

“For the storage system software, both SPDK and the standard upstream Linux kernel target were tested using both the default kernel 4.18 and one of the newest kernels, 5.15. Three different storage initiators were benchmarked: SPDK, the standard kernel storage initiator, and the FIO plugin for SPDK. Workload generation and measurements were run with FIO and SPDK. I/O sizes were tested using 4KB and 512B, which are common medium and small storage I/O sizes, respectively.

“The NVMe-oF storage protocol was tested with both TCP and RoCE at the network transport layer. Each configuration was tested with 100 percent read, 100 percent write and 50/50 read/write workloads with full bidirectional network utilization.”

Deierling said, “This is [using] an off-the-shelf DL380, which is a high-volume (HPE) server. We put two BlueFields on both sides and we connected it with 400 gigabits per second, so four separate 100 gigabit links. And we were able to get 41 million IOPS; so that’s 20 million IOPS per BlueField DPU. The trade-off is with the block size. We will saturate these 100-gig links at 4K bytes, block size, and we can actually do that when we’re virtualizing the storage. What I mean by that is that when the operating system powers up, and it talks to the BlueField DPU and asks what are you? It will say, ‘we’re a BlueField DPU’ and [the OS] will treat us as such and know about the network connectivity with dual 100 gig ports.”

“Then [the OS] sweeps the bus again, and we’ll actually say, we are one terabyte of local NVMe drives. In fact, we’re not. There’s no NVMe in our box, but the operating system will see as if there were local NVMe drives. The reason that that’s important is because now any operating system, even if it doesn’t know about NVMe over Fabrics, it doesn’t need to know about that, it doesn’t need to manage that. In fact, it can go off and do a local NVMe access, and whether that storage is actually local, or sitting behind the network on a remote storage box, we’ll go fetch it and return it as if it was local,” he said.

Market watchers suggest the DPU market’s prospects look promising. Karl Freund, principal at Cambrian AI Research told HPCwire earlier this year, “This market for Smart NICS+ is just beginning to take shape. The early adopters are the hyperscalers typically using an ASIC (AWS) or FPGA (Microsoft). Nvidia however foresees needing something much more powerful with a GPU along with Arm cores on the IPU. In three years, that could be a game changer for very large composable infrastructure.”

Link to Nvidia blog, https://blogs.nvidia.com/blog/2021/12/21/bluefield-dpu-world-record-performance/