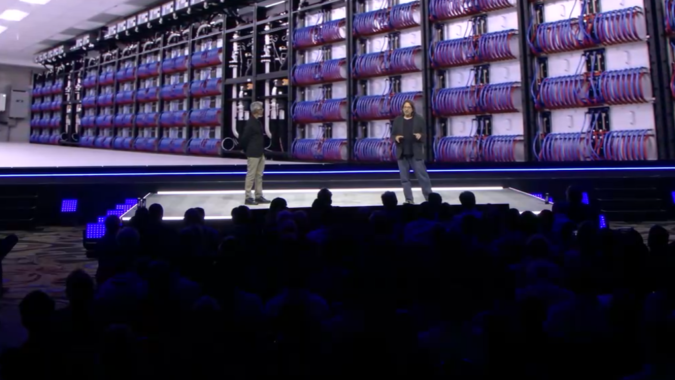

Installation has begun on the Aurora supercomputer, Rick Stevens (associate director of Argonne National Laboratory) revealed today during the Intel Vision event keynote taking place in Dallas, Texas, and online. Joining Intel exec Raja Koduri on stage, Stevens confirmed that the Aurora build is underway – a major development for a system that is projected to deliver more than 2 exaflops of peak computing when it is fully deployed.

It’s been a “massive effort” to install the system, said Stevens. Aurora combines more than 10,000 Intel-outfitted blades into an HPE Cray EX (née Shasta) supercomputer. Each compute blade has two Sapphire Rapids Xeon CPUs (with HBM) and six Ponte Vecchio (PVC) GPUs, integrated into HPE’s Cray EX architecture with Slingshot networking.

All told, Aurora will implement 60,000+ Ponte Vecchio GPUs and 20,000+ Sapphire Rapids CPUs, said Stevens. “We’ve already installed the Intel DAOS storage system, management nodes and cooling infrastructure, and we’ve put in place a validation system with Sapphire Rapids and PVC processors in place right now for applications testing. The whole team can’t wait until we get the system up and running.”
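The totals Stevens quotes follow directly from the blade configuration, and a quick back-of-the-envelope check makes that explicit. The sketch below is illustrative only: it uses the article's round lower bounds (10,000 blades, 2 exaflops peak), the function names are hypothetical, and the per-GPU estimate assumes the GPUs supply all of the peak figure, ignoring the CPUs. The article does not state the precision behind the peak number, so the result is a ballpark rather than a spec.

```python
# Round lower-bound figures quoted in the article ("more than" / "+").
BLADES = 10_000          # compute blades
CPUS_PER_BLADE = 2       # Sapphire Rapids Xeon CPUs per blade
GPUS_PER_BLADE = 6       # Ponte Vecchio GPUs per blade
PEAK_FLOPS = 2e18        # 2 exaflops of projected peak


def system_totals(blades, cpus_per_blade, gpus_per_blade):
    """Return (total CPUs, total GPUs) for a given blade count."""
    return blades * cpus_per_blade, blades * gpus_per_blade


def implied_peak_per_gpu_tflops(peak_flops, gpu_count):
    """Rough per-GPU peak in teraflops.

    Assumption: all peak flops are attributed to the GPUs, so this
    overstates each GPU's share by whatever the CPUs contribute.
    """
    return peak_flops / gpu_count / 1e12


if __name__ == "__main__":
    cpus, gpus = system_totals(BLADES, CPUS_PER_BLADE, GPUS_PER_BLADE)
    print(f"CPUs: {cpus:,}  GPUs: {gpus:,}")
    per_gpu = implied_peak_per_gpu_tflops(PEAK_FLOPS, gpus)
    print(f"Implied peak per GPU: about {per_gpu:.1f} TFLOPS")
```

With these inputs the blade math reproduces the 20,000 CPU and 60,000 GPU figures, and the implied peak works out to roughly 33 teraflops per GPU under the stated assumptions.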

Argonne is also partnering with Intel to deploy oneAPI as a new model for programming the nodes across the entire system. Stevens explained the proposition: “Using one interface, we can program the CPUs and the GPUs – you have one code base; it’s open standards-based, and you don’t have to change your code as you move from CPU to GPU during development. It just works.”

To ensure the system is ready when it comes online and to inspire developers to work on large-scale scientific computing problems, Argonne is now taking reservations.

“You can make a reservation for economic and industrial research, where you’re interested in pursuing breakthroughs in science and engineering,” said Stevens. “We’re taking reservations, setting up development accounts and getting teams on the early systems to build software so that when Aurora stands up on day one, we’ll have applications running.”

Koduri and Stevens were in agreement on the world’s need for more flops, from exaflops to zettaflops. “We need a lot of flops,” said Stevens. “Exascale is just the beginning. We need a lot of compute power to solve simulation problems – whether it’s to predict the future climate, to design new batteries, to work on new cancer treatments, new drugs, manufacturing, or prototype the metaverse.”

Aurora is one of three exascale systems on the U.S. leadership supercomputing roadmap. The project has gone through a number of redefinitions dating back to 2017. Most recently, in October at the Intel Innovation event, Aurora was expanded from 1 exaflops to “over 2 exaflops of peak performance” and delivery was moved from 2021 to 2022.

Frontier (Oak Ridge) and El Capitan (Livermore) are the other two U.S. exascale systems in the works. El Capitan (HPE/AMD) is on track for delivery next year, while Frontier (also HPE/AMD) is installed at the Oak Ridge Leadership Computing Facility and undergoing final testing ahead of acceptance. Oak Ridge reported in March that it anticipates the full Frontier system will be ready for early science in July of this year. The bioinformatics code CoMet has, according to the lab, been run on 3,210 nodes of the ~9,000-node system.