HPCwire takes you inside the Frontier datacenter at DOE’s Oak Ridge National Laboratory (ORNL) in Oak Ridge, Tenn., for an interview with Frontier Project Director Justin Whitt. The first supercomputer to surpass 1 exaflops on the Linpack benchmark, Frontier earned the number one spot on the Top500 in May and broke new ground in energy efficiency. The HPE/AMD system delivers 1.102 Linpack exaflops of computing power in a 21.1-megawatt power envelope, an efficiency of 52.23 gigaflops per watt.
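The efficiency figure follows directly from the two numbers quoted above. As a quick check, here is a minimal sketch that divides the Linpack result by the power draw; the function name is illustrative, and the inputs are the rounded values from this article rather than the exact figures submitted to the list.

```python
def gflops_per_watt(exaflops: float, megawatts: float) -> float:
    """Convert a Linpack result and power draw into gigaflops per watt."""
    flops = exaflops * 1e18   # 1 exaflops = 10^18 floating-point operations per second
    watts = megawatts * 1e6   # 1 megawatt = 10^6 watts
    return flops / watts / 1e9  # express the ratio in gigaflops per watt


# Rounded figures quoted in the article
efficiency = gflops_per_watt(1.102, 21.1)
print(f"{efficiency:.2f} gigaflops per watt")
```

Even with rounded inputs, the result agrees with the quoted 52.23 gigaflops per watt to two decimal places.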

Whitt shares what it was like to stand up the first U.S. exascale supercomputer, diving into the system details, the power and cooling requirements, the first applications to run on the system, and what’s next for the leadership computing facility.

Transcript (lightly edited):

Tiffany Trader: Hi Justin. I’m here with Justin Whitt. We’re standing in front of the Frontier supercomputer, the HPE/AMD system that recently was the first to cross the Linpack exaflops milestone. Justin is the project director on Frontier. You and your team must be feeling pretty good about this.

Justin Whitt: We’re very excited. It was quite the accomplishment. The team worked really hard. The corporate partners of HPE and AMD have worked tremendously hard to make this happen. And we couldn’t be happier. It’s just great.

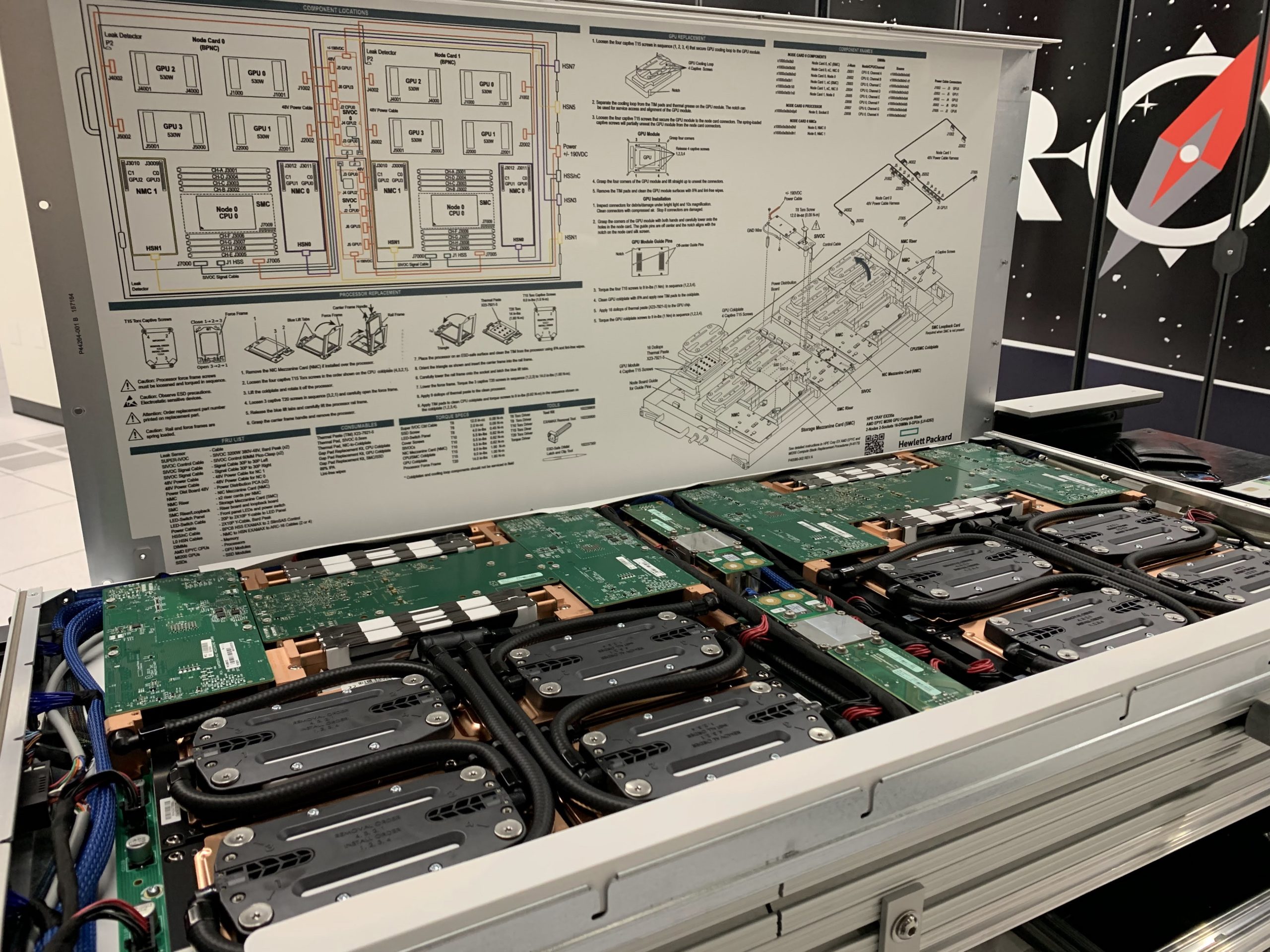

Trader: Well, congratulations. So tell us about the system. We’re standing in front of some of the cabinets, can you tell us about what’s inside?

Whitt: Sure. These are HPE Cray EX systems. We have 74 cabinets of this — 9,408 nodes. Each node has one CPU and four GPUs. The GPUs are the [AMD] MI250Xs. The CPUs are an AMD Epyc CPU. It’s all wired together with the high-speed Cray interconnect, called Slingshot. And it’s a water-cooled system. We started getting hardware last October. We’ve been building the system, testing it and had it up and running now for a few months.

Trader: So I understand the process to get it benchmarked in time for the Top500 was right to the wire. Do you want to share a little about that and what that experience was like?

Whitt: It was right to the wire. The funny thing about these systems is that they’re so large, you can only build them for the first time when all the hardware arrives. So when the hardware arrived, we started putting things together, and it takes a while. Once we had it up, you know, and had all the hardware functioning, then you start to tune the system. And we’ve kind of been in that mode for several months now, where in the daytime we make adjustments, make tunings, and at nighttime we check our work by running benchmarks on it and seeing how we did. And we were running out of time, you know, the May list was coming up. And we were down to, you know, early, maybe mid-May, still running, always running overnight, with us and the engineers around the country watching the power profiles at home and saying, “Oh, this looks like a good run,” or, “Hey, let’s kill it, and let’s start it again.” And literally a few hours before the deadline, we were able to get a run through that broke the exascale barrier.

Trader: That was 1.1 exaflops on the High Performance Linpack benchmark. And then the system also very impressively got number two on the Green500. And its smaller companion, the test and development system, the Frontier TDS (Borg, I think you call it), was number one with a pretty impressive energy efficiency rating.

Whitt: Yes, over 60 gigaflops-per-watt for the single cabinet run. So very impressive. And actually I guess the top four spots on the Green500 were the same Frontier architecture.

Trader: And tell us a little bit more about the cooling. I know you did a lot of facility upgrades for the power and cooling, and the compute is completely liquid cooled?

Whitt: It is, yes it is. So this is the datacenter where we formerly had the Titan supercomputer. So we removed that supercomputer and refurbished this datacenter. We knew that we needed more power, and we needed more cooling. So we brought in 40 megawatts of power to the datacenter. And we have 40 megawatts of cooling available. Frontier really only uses about 29 megawatts of that at its very peak. And so there was a lot of construction work to get that done and get the cooling in place ahead of the system.
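The gap between what the datacenter can supply and what Frontier draws is simple arithmetic. Here is a minimal sketch using the approximate figures Whitt gives; the function name is illustrative, and the result says nothing about what any future system would actually require.

```python
def headroom_mw(capacity_mw: float, peak_draw_mw: float) -> float:
    """Return the capacity left over after the peak draw, in megawatts."""
    return capacity_mw - peak_draw_mw


# Approximate figures from the interview
capacity = 40.0        # megawatts of power (and cooling) brought into the datacenter
frontier_peak = 29.0   # megawatts Frontier uses "at its very peak"

spare = headroom_mw(capacity, frontier_peak)
print(f"Spare capacity: {spare:.0f} MW of {capacity:.0f} MW")
```

By these numbers, roughly 11 megawatts of the room's power and cooling remain unused at Frontier's peak.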

Trader: And does that liquid cooling dynamically adjust to the workloads?

Whitt: Yeah, it does. These are incredibly instrumented machines at this point, where even down to the individual components on the individual node-boards, there are sensors monitoring temperatures, so we can adjust the cooling levels up and down to make sure that the system stays at a safe temperature.

Trader: And what would you say about the volume level in the room? We’re using a mic here, but it’s really not too loud as far as datacenters go.

Whitt: That’s right. You probably visited during the Titan days where we would have been wearing earmuffs and we wouldn’t be having this conversation. Summit was a lot quieter than that. And this is even a little bit quieter than Summit is, so they’re getting quieter because they’re going to liquid-cooled. We don’t have fans. We don’t have rear doors where we’re exchanging heat with the room.

Trader: So it’s 100 percent liquid cooled, and the [fan] noise we’re hearing is actually from the storage systems that are also HPE and are air-cooled.

Whitt: Yes, they’re a little louder so they’re on the other side of the room, and you can… they are pretty loud.

Trader: I understand you’re coming up on the acceptance process, how’s that going?

Whitt: We’re actually coming up to the point where we will start the acceptance process. So basically, up until now, we’ve been doing a lot of the testing and a lot of tuning with the pre-production software. And so we’ve got to get all the production software on the system, you know, from the network software, to the programming environments to all that, get it to what we will use when we actually have researchers on the system. Once we have that done, and everything’s checked out, we will start the acceptance process on the machine.

Trader: So what’s running on Frontier right now?

Whitt: So right now, we’re still doing some benchmark testing. And we’re also doing a lot of checks on these new software packages we put on. So we’ll put things on, we run benchmarks, we run real-world applications on the system to make sure that as we’ve upgraded software, we haven’t introduced any new bugs in the system.

Trader: Is there a dashboard you pull up, and you can see exactly what’s running on it?

Whitt: That’s right. That’s right.

Trader: That’s cool.

Whitt: And you know, I mentioned all the instrumentation and sensors. On the same dashboard, we can look at temperatures down to the individual GPUs, to see, you know, how hot the GPUs are running, to see, you know, what the flow rates are through the system. It’s really impressive.

Trader: And what will some of the very first workloads be when it goes into early science?

Whitt: Here at OLCF [Oak Ridge Leadership Computing Facility], we have the Center for Accelerated Application Readiness, we call it CAAR. We jokingly say it’s our vehicle for application readiness. That group supports eight apps for the OLCF and 12 apps for the Exascale Computing Project. So the plan is that we’ll have over 20 apps that are ready to do science on day one of the system.

Trader: They say exascale readiness on day one is the tagline there. And given the long-term procurement cycles for these enormous instruments, you’re already working on the planning for the next supercomputer after Frontier, which you call OLCF-6. So how are you preparing for that system and where will it go?

Whitt: Yes, in project parlance, you know, Frontier was OLCF-5, the next system will be OLCF-6. And we’re really just in the very conceptual, thinking-about-it phase at this point. That system will likely go in this room. We have room for that system, both from a space and from a power and cooling perspective.

Trader: Partly because these [Frontier machines] are so dense that you needed fewer cabinets.

Whitt: That’s exactly right. Yeah.

Trader: And then you also still have Summit here, a previous Top500 number-one system, an IBM/Nvidia machine. What are the plans for Summit once you have Frontier up in full production?

Whitt: Summit’s still a great system. It’s highly utilized at this point. Even as we speak, it’s, you know, probably 95 percent or maybe more full, with researchers running codes on that system. And so it’s still a great system at this point. We normally like to run systems for at least a year overlap, so that we can make sure that Frontier is up and stable and give people time to transition their data and their applications over to the new system. But Summit is a really good system, so we’ll have to wait and see, but we will at least run it for a year and overlap with Frontier.

Trader: And then a very important question. We talked a little bit about it, but maybe just from a more personal point of view, looking at the science that Frontier and exascale will enable, what are you most excited about?

Whitt: So I’m excited about a lot of different science, you know, really, with the scales of the systems, you know, you’ll be able to approach problems we’ve never been able to approach before. I’m a CFD person by training. So I always have a soft spot for the CFD codes. But some of the most exciting things are the work in artificial intelligence and those workloads. You know, you have researchers that are looking at how to develop better treatments for different diseases, how to improve efficacies of treatments, and these systems are capable of digesting just incredible amounts of data. Think about laboratory reports or pathology reports, thousands of them, and they can draw inferences across these reports that no human being could ever do but that a supercomputer can do. And some of that to me is really exciting.

Trader: Speaking of CFD, are you using computational fluid dynamics to model the water flow in the cooling system?

Whitt: We are. Yeah, we are. That’s a recent effort.

Trader: That’s pretty neat. All right. Well, thank you so much, we appreciate the tour.

Whitt: You guys are always welcome.

Trader: Congratulations.

Whitt: Thank you.