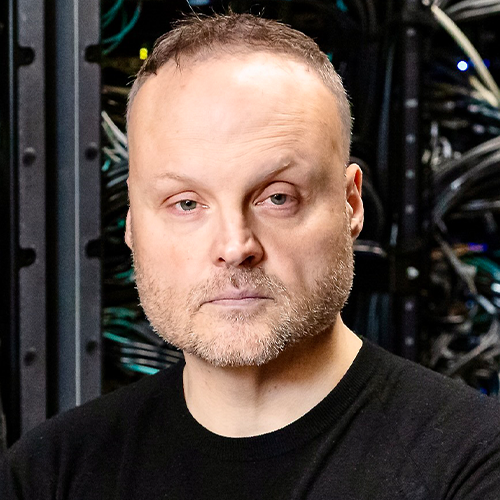

HPCwire presents our interview with Bronson Messer, distinguished scientist and director of Science at the Oak Ridge Leadership Computing Facility (OLCF), ORNL, and an HPCwire 2022 Person to Watch. Messer recaps ORNL’s journey to exascale and sheds light on how all the pieces line up to support the all-important science. Also covered are the role of the Exascale Computing Project, insights into architectural directions and evolving HPC-AI synergies. This interview was conducted by email earlier this year.

Bronson, congratulations on being named a 2022 HPCwire Person to Watch! Can you give us a summary overview of your responsibilities at Oak Ridge Leadership Computing Facility and what your position entails?

As the director of science for the OLCF, I’m responsible for marshalling all our resources toward making sure the science that only leadership computing can enable gets done. That job starts before an allocation is made on the machines, continues through the computational campaigns, and really doesn’t have a formal end, as I continue to communicate the impact made by those projects to a wide variety of audiences even years after they are over. It’s a great job for a science junkie like me: I get to develop a more-than-pedestrian understanding about the full gamut of science we support at OLCF (i.e., almost all scientific disciplines) while “living close” to some of the world’s most powerful computers. The little Appalachian boy programming a TRS-80 Model 1 that was me in the early ’80s would be very jealous.

Please highlight some of the successes that Oak Ridge has had on the path to exascale. (HW, SW, applications, people – anything!)

I think our biggest successes on the road to exascale are wrapped up in the chance we took with Titan back at the beginning of the last decade. There was considerable skepticism when we first adopted hybrid CPU-GPU computing, going all-in with Titan. We have continued along that path with Summit, a path that has proven fruitful as we now stand on the precipice of exascale.

That journey is as much about the people we have deployed around the machines and their expertise as it is about the hardware. I have been especially fortunate to work alongside some of the most skilled and experienced folks in HPC over the past decade and a half, across all the various aspects of endeavor that are necessary to deploy resources at the scale we have. In particular, our liaison model – pairing domain scientists who have top-notch HPC skills with individual projects – is a methodology that has enabled the arrival at exascale along the road of hybrid-node computing in a real way.

How has your team interfaced with the Exascale Computing Project (ECP)? What can you share about the ECP’s role in supporting exascale-readiness from your perspective?

We are close partners with ECP. There is hardly a facet of the project that OLCF is not deeply involved in, from application development to hardware and integration. We have provided the primary development and testing platform for all the ECP application development and software technology teams in the form of Summit, to the tune of a few million node-hours per year over the past few years. We also understand the ECP teams to be part of our traditional early-science teams. We have instantiated the third version of our Center for Accelerated Application Readiness (CAAR) to prepare a group of applications for Frontier, and we consider the ECP development teams to be a part of that. Indeed, many of the same OLCF folks working with our CAAR teams are also working on ECP apps and other software. The ECP teams are also part of the first group of users on our test and development system for Frontier. I anticipate the ECP apps will deliver some of our earliest scientific results on Frontier.

Milestones are inspiring and exciting. What excites you most about entering the exascale era? What are some examples of the science and hopefully breakthroughs that will be unlocked? In what ways will having exascale systems – and I mean the entire ecosystem not just the hardware – be game-changing?

The great thing (to me, anyway) about supercomputing is that there is no one “killer app.” Supercomputing is useful across the entire scientific enterprise, so the list of new insights and questions that will be gleaned from exascale computing is … countably infinite. But I do have a couple of places where I think the effect will be especially sharp and profound. The first is in the design cycle for engineering in aerospace, CFD, and related fields. The ability to do design simulations with the requisite physical fidelity to deploy real machines and do that on human time scales (i.e., about a day or overnight) is a real game-changer for a lot of researchers, in academia and in industry. Related to that is the continuing quest to understand turbulence, the last great classical physics problem. Resolution – meaning memory – is required to make progress on this front, and Frontier will provide a significant jump. The ability to resolve convection in the atmosphere on roughly kilometer scales is a place where this additional resolution isn’t just gratuitous. Rather, it leads to new physics and new understanding.

In addition, we are fielding huge storage systems as part of Frontier. The ability to quickly query very large collections of data and do non-trivial amounts of compute on those data will lead to insights in a number of fields, with drug discovery being a very important example.

Heterogeneous computing architecture, largely dependent upon accelerators (GPUs mostly), has become the dominant approach to supercomputing (with the notable exception of Top500 leader Fugaku) and is the backbone of the U.S. exascale program. Where do you see computer architecture headed? What will be the follow-on to today’s dominant heterogeneous (CPU plus accelerator) landscape?

I think the general outline of CPU+accelerator computing probably has quite a bit of gas left in it. More important to developers is the abstraction of the memory hierarchy into “close and fast” and “far and slow” memory spaces. That model has been with us for a while; it’s just made more obvious and, maybe, more important with hybrid-node computing. The compute engines might change a bit, but having that kind of structure and having heterogeneity on the node are likely going to persist for a while. That doesn’t mean we might not field multiple partitions of differing HW in the future (i.e., push some heterogeneity up from the node level), but I think that might be more a matter of expedience for getting science done: to make sure all the steps of the process of actually getting insight out of a computational experiment, data analysis, or inference are done as efficiently as possible.

What is the opportunity for bringing HPC and AI capabilities together in one architecture? I have heard it said (I forget by whom!) that Summit is (already) the world’s first big HPC-AI supercomputer. What is the state of adoption/implementation for converged AI-HPC workflows? Do you also see a need for purpose-built AI architectures (like Cerebras, SambaNova, Groq, etc)?

We have recently looked at this idea that HPC and AI are coming together, based on what we see in our user programs. That confluence is already here. A large fraction of the projects we support on Summit make use of both “traditional” (I really hate that moniker for this) simulation and AI and ML techniques. These projects use AI/ML in a number of steps in their computational campaigns as well, from training surrogate models before the first simulation run, to design of experiments, and through the analysis after the data are generated.

If the purpose-built architectures can be made amenable to joining in on all these steps – through policy or software or both – then I think the acceleration they hope to achieve can be as impactful as, for example, the Tensor Cores on Summit proved to be.

It has been proposed that in the not-so-distant future, quantum accelerators will be integrated into either an HPC architecture or workflow. How do you see these technologies coming together? Is this something OLCF is preparing for?

OLCF has an active Quantum Computing User Program where we manage access to a number of commercial quantum computing providers. We are also actively soliciting proposals to our Director’s Discretionary allocation program for “hybrid” proposals that want to take advantage of these resources coupled with an allocation on Summit.

I’m most excited for the promise of quantum computing to help solve problems that are already “quantum.” Some of these problems are treated classically now because we can’t figure out how to write software to solve the “real” quantum equations fast enough. One that is particularly interesting to me is the idea of quantum kinetics for neutrinos in dense astrophysical environments like neutron stars and core-collapse supernovae. I think we are years away from having “quantum accelerators” hanging off HPC nodes, solving the quantum kinetic equations that will tell us how neutrinos change flavor in these explosive environments, but maybe a student I help to train will see that happen.

Are there any other computing trends you would like to comment on? Any areas you are concerned about, or identify as in need of more attention/investment?

Moving numbers to and from memory is the single most important bottleneck for scientific computing. This has been known by practitioners in HPC for a long time, and our now partners in AI and ML are quickly pushing right up against this reality as well. There are no easy technical answers to increase memory bandwidth and limit the amount of energy it takes to move those bits, but it should be perhaps the single most motivating notion as we go forward.

Outside of the professional sphere, what can you tell us about yourself – unique hobbies, favorite places, etc.? Is there anything about you your colleagues might be surprised to learn?

I wear my Appalachian origins on my sleeve, so most people who know me know I grew up in the Great Smoky Mountains. A bit of an obsession with fly fishing goes along with that origin story. But not everyone knows that I am an avid lacrosse player and coach, that I finally got my (honorary) high school diploma this past year, or that I’m a multi-day Jeopardy! champion.

Messer is one of 12 HPCwire People to Watch for 2022. You can read the interviews with the other honorees at this link.