Plans for the Leadership-Class Computing Facility (LCCF) have been underway since 2017. Slated for full operation around 2026, the LCCF’s scope extends far beyond the large supercomputer, Horizon, that will be housed at the Texas Advanced Computing Center (TACC). At the fall meeting of the NSF’s Advisory Committee on Cyberinfrastructure (ACCI) this week, Ed Walker, program director for the Office of Advanced Cyberinfrastructure (OAC) at the NSF’s Computer and Information Science and Engineering Directorate (CISE), provided an update on the LCCF.

The planned facility will formally be the second phase of the LCCF. The first phase resulted in the delivery of Frontera, TACC’s 23.5 Linpack petaflops supercomputer that charted fifth on the Top500 lists in 2019 and still places in the top 20. This second phase is aimed at deploying a much more capable facility under the NSF’s Major Facilities planning process.

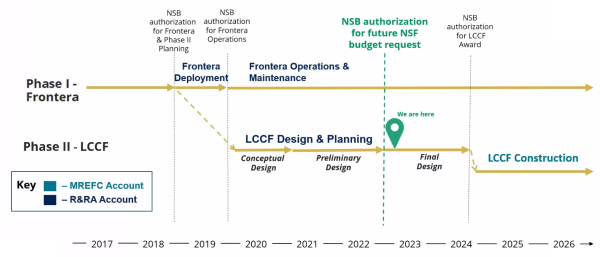

“As well as operating Frontera, TACC is also leading the NSF Major Facilities planning process for LCCF,” Walker said. “TACC officially started the design process in 2019, so the project has been doing this for more than three years now.”

The planning process has three stages: conceptual design, preliminary design and final design. Walker said that the LCCF project is now moving past the preliminary design phase and into final design. “The most recent external review of the project was the preliminary design review at the beginning of this year,” he said, adding that the project was rated “highly recommended” and approved by the NSF director to enter the final design stage in spring of this year.

August saw another major milestone for the project. “After extensive review of the strategic need for NSF to fund LCCF, the National Science Board authorized the NSF to also include LCCF in a future budget request,” Walker said.

What is the LCCF?

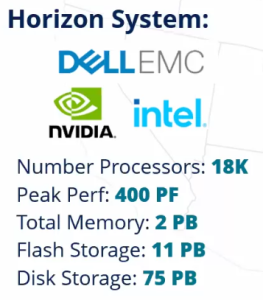

The flagship hardware of the LCCF will be the Horizon supercomputer, which, like Frontera, will be operated by TACC. The general mandate for Horizon, per TACC Director Dan Stanzione, is “10× faster!” — not necessarily achieved solely through flops improvements. Nevertheless, Walker referred to Horizon as a “half-exaflop system with significant memory and storage capabilities.” One of Walker’s slides outlined a few speculative specs for the system: 18,000 processors; 400 peak petaflops; 2PB of memory; 11PB of disk storage.

In an email to HPCwire, Stanzione qualified these specifications: the storage specs, he said, were “minimum bids,” while the 400 peak petaflops refers to peak flops from just one of several presented options. Stanzione added that any flops numbers for Horizon at this point should be taken with “huge grains of salt” and that there are currently viable options ranging from 300 petaflops to 750 petaflops, with some dependency on how one counts the flops. “Although we expect Horizon to be among the most powerful systems globally,” Walker said, “it is more important to note that Horizon is specifically designed to support a broad range of scientific applications across the NSF-funded research portfolio.”

Walker’s slides also showed logos from Dell EMC, Intel and Nvidia. Stanzione said that the team has presented several priced options from each of those vendors and that all three remain among the options; John West, TACC’s director for strategic initiatives, added that those vendors are “not a complete list and certainly not a final list.” And, per Walker: “The architecture choice has not been actually decided yet; they have a number of candidate choices which they have made through discussions with Intel and Nvidia, but they haven’t made a decision yet.”

There was a somewhat more concrete nugget of information: “The Horizon system itself will be hosted in a hyperscale datacenter that is being built by Switch approximately ten miles from the TACC campus,” Walker said. Back in May, TACC had indicated that this would likely be the case, estimating that it would save $80 million in construction costs and accelerate the process by nine months.

But the LCCF extends far beyond Horizon. “LCCF is not just one system,” Walker said. “The project will provision world-class computational capabilities through an integrated set of systems. Secondly, the project is expected to provision extensive software and services to support scientific simulation, AI and data analytics, as well as the full scientific data lifecycle. Thirdly, the project will be leveraging extensive partnerships across the country in standing up this facility. And fourthly, the project will have a comprehensive education and outreach plan to nurture the future workforce pipeline.”

And, while LCCF will be led by TACC, it will be orchestrated across five distributed sites. The four satellite sites will be designed as regional hubs of excellence, hosting resources and personnel to support LCCF’s user community. One will be led by the Atlanta University Center Consortium (AUCC), a coalition of four HBCUs in the Atlanta area, and will be aimed at providing workforce pathways into leadership computing and data science for underrepresented groups; another, led by the Pittsburgh Supercomputing Center (PSC), will focus on protected data and mirroring data archives; a third, hosted by the National Center for Supercomputing Applications (NCSA), will provide services to support new AI processes for exploring large datasets; the final satellite site, led by the San Diego Supercomputer Center (SDSC), will support data analytics in scientific workflows and new workflows for democratizing access.

And beyond the five core sites, Walker said, the LCCF is incorporating a broad range of other partnerships and collaborations. “The project is partnering with 27 academic institutions during the design and construction, which includes four HBCUs and four MSIs as partners,” Walker said. “The project has also established deep partnerships with U.S. industry, including Dell, Intel, Nvidia” (note those names again) “and Switch, our hyperscale datacenter provider.”

When will LCCF arrive?

“The ultimate goal is to begin LCCF construction in 2024,” Walker said, “which again will be subject to review and ultimately the authorization before funding approval.” The construction phase, he said, would last two to three years, and “the expectation is that the facility will be deployed sometime in 2026.” West confirmed that the deployment of Horizon is still anticipated to begin in early 2025, with early science users starting on the machine in the back half of that year, ahead of the LCCF’s routine operations beginning in “late 2026.”