In this regular feature, HPCwire highlights newly published research in the high-performance computing community and related domains. From parallel programming to exascale to quantum computing, the details are here.

Reinventing high performance computing: challenges and opportunities

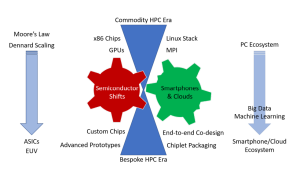

In this paper, a team of researchers from the University of Utah, the University of Tennessee, and Oak Ridge National Laboratory examines the challenges and opportunities facing high-performance computing. The researchers argue “that current approaches to designing and constructing leading-edge high-performance computing systems must change in deep and fundamental ways, embracing end-to-end co-design; custom hardware configurations and packaging; large-scale prototyping, as was common thirty years ago; and collaborative partnerships with the dominant computing ecosystem companies, smartphone and cloud computing vendors.” To support their argument, the authors provide a history of computing and discuss both the economic and technological shifts in cloud computing and the semiconductor concerns that are enabling the use of multichip modules. They also provide a summary of the technological, economic, and future directions of scientific computing.

The paper provided inspiration for the upcoming SC22 panel session of the same name, “Reinventing High-Performance Computing.”

Authors: Daniel Reed, Dennis Gannon, and Jack Dongarra

A type discipline for message passing parallel programs

Researchers from the University of Lisbon and University of the Azores (Portugal), Copenhagen University and DCR Solutions A/S (Denmark), and Imperial College London (UK) developed a type discipline for parallel programs called ParTypes. In this research article published in the ACM Transactions on Programming Languages and Systems journal, the team of researchers focused “on a model of parallel programming featuring a fixed number of processes, each with its local memory, running its own program and communicating exclusively by point-to-point synchronous message exchanges or by synchronizing via collective operations, such as broadcast or reduce.” The researchers argue, “Type-based approaches have clear advantages against competing solutions for the verification of the sort of functional properties that can be captured by types.”
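
The programming model quoted above can be illustrated with a toy simulation. This is only a sketch under stated assumptions: it uses Python threads in place of processes and buffered queues in place of synchronous exchanges, the names `NUM_PROCS`, `broadcast`, `reduce_sum`, and `program` are hypothetical, and it does not implement ParTypes or any type checking.

```python
import queue
import threading

NUM_PROCS = 4  # fixed number of processes, known before the program starts


def run():
    # One inbox per rank stands in for that rank's point-to-point channels.
    # Assumption: queues are buffered, so sends here are not synchronous.
    inboxes = [queue.Queue() for _ in range(NUM_PROCS)]
    results = [None] * NUM_PROCS

    def broadcast(rank, root, value=None):
        """Root sends `value` to every other rank; every rank returns it."""
        if rank == root:
            for dest in range(NUM_PROCS):
                if dest != root:
                    inboxes[dest].put(value)
            return value
        return inboxes[rank].get()

    def reduce_sum(rank, root, local):
        """Every rank contributes `local`; only the root returns the total."""
        if rank == root:
            total = local
            for _ in range(NUM_PROCS - 1):
                total += inboxes[root].get()
            return total
        inboxes[root].put(local)
        return None

    def program(rank):
        # Each rank runs the same program on its own local data.
        scale = broadcast(rank, 0, 10 if rank == 0 else None)
        local = scale * (rank + 1)  # value held in this rank's local memory
        results[rank] = reduce_sum(rank, 0, local)

    threads = [threading.Thread(target=program, args=(r,)) for r in range(NUM_PROCS)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


if __name__ == "__main__":
    print(run())
```

Every rank must call the collectives in the same order for the program to finish. A mismatch would leave a rank blocked on an empty inbox, which is the kind of property a type-based approach aims to rule out before the program runs.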

Authors: Vasco T. Vasconcelos, Francisco Martins, Hugo A. López, and Nobuko Yoshida

Measurement-based estimator scheme for continuous quantum error correction

An international team of researchers from Okinawa Institute of Science and Technology Graduate University (Japan), Trinity College (Ireland), and the University of Queensland (Australia) developed a measurement-based estimator scheme for continuous quantum error correction (MBE-CQEC). In this paper published by the American Physical Society in the Physical Review Research journal, the researchers demonstrated that by creating a “measurement-based estimator (MBE) of the logical qubit to be protected, which is driven by the noisy continuous measurement currents of the stabilizers, it is possible to accurately track the errors occurring on the physical qubits in real time.” According to the researchers, by using the MBE, the newly developed scheme surpassed the performance of canonical discrete quantum error correction (DQEC) schemes, which “use projective von Neumann measurements on stabilizers to discretize the error syndromes into a finite set, and fast unitary gates are applied to recover the corrupted information.” The scheme also “allows QEC to be conducted either immediately or in delayed time with instantaneous feedback.”
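
For readers unfamiliar with the discrete baseline, the syndrome-then-recover loop can be sketched classically. The example below assumes the three-qubit bit-flip code, which is a textbook choice made here for illustration and not necessarily the code studied in the paper. It tracks only bit-flip errors on classical bits, and the function names are hypothetical.

```python
# Syndrome = parities of neighboring bits, mimicking the Z1Z2 and Z2Z3 stabilizers.
# Each single bit-flip error maps to exactly one syndrome in this finite set.
SYNDROME_TO_FLIP = {
    (0, 0): None,  # no error detected
    (1, 0): 0,     # first bit flipped
    (1, 1): 1,     # middle bit flipped
    (0, 1): 2,     # last bit flipped
}


def measure_syndrome(bits):
    """Return the two parity checks for a 3-bit word."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


def correct(bits):
    """Apply the recovery flip selected by the measured syndrome."""
    flip = SYNDROME_TO_FLIP[measure_syndrome(bits)]
    fixed = list(bits)
    if flip is not None:
        fixed[flip] ^= 1
    return fixed


if __name__ == "__main__":
    corrupted = [0, 1, 0]  # logical 0 encoded as 000, middle bit flipped
    print(measure_syndrome(corrupted), correct(corrupted))
```

The continuous scheme described in the paper replaces these sharp parity readouts with noisy measurement currents, which is why it needs an estimator to track errors instead of a lookup table.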

Authors: Sangkha Borah, Bijita Sarma, Michael Kewming, Fernando Quijandría, Gerard J. Milburn, and Jason Twamley

GPU-based data-parallel rendering of large, unstructured, and non-convexly partitioned data

A team of international researchers leveraged Texas Advanced Computing Center’s Frontera supercomputer to “interactively render both Fun3D Small Mars Lander (14 GB / 798.4 million finite elements) and Huge Mars Lander (111.57 GB / 6.4 billion finite elements) data sets at 14 and 10 frames per second using 72 and 80 GPUs.” Motivated by Fun3D Mars Lander simulation data, the researchers from Bilkent University (Turkey), NVIDIA Corp. (California, USA), Bonn-Rhein-Sieg University of Applied Sciences (Germany), INESC TEC & University of Minho (Portugal), and the University of Utah (Utah, USA) introduced a “GPU-based, scalable, memory-efficient direct volume visualization framework suitable for in situ and post hoc usage.” In this paper, the researchers described how the approach reduces “memory usage of the unstructured volume elements by leveraging an exclusive or-based index reduction scheme and provides fast ray-marching-based traversal without requiring large external data structures built over the elements themselves.” They also detail the team’s development of the “GPU-optimized deep compositing scheme that allows correct order compositing of intermediate color values accumulated across different ranks that works even for non-convex clusters.”
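
The need for order-correct compositing with non-convex clusters can be shown for a single pixel. The sketch below is an assumption-laden CPU illustration, not the paper's GPU scheme: each rank contributes a list of depth-tagged fragments with premultiplied RGBA, and the hypothetical `composite_pixel` function merges them front to back with the standard "over" operator.

```python
def composite_pixel(fragments_by_rank):
    """Merge per-rank fragment lists for one pixel in depth order.

    fragments_by_rank: one list per rank of (depth, (r, g, b, a)) tuples,
    with colors premultiplied by alpha. A rank owning a non-convex cluster
    may contribute several fragments separated by another rank's data.
    """
    fragments = sorted(
        (frag for rank_frags in fragments_by_rank for frag in rank_frags),
        key=lambda frag: frag[0],
    )
    r = g = b = a = 0.0
    for _, (fr, fg, fb, fa) in fragments:
        remaining = 1.0 - a  # how much light still passes through
        r += remaining * fr
        g += remaining * fg
        b += remaining * fb
        a += remaining * fa
    return (r, g, b, a)


if __name__ == "__main__":
    # Rank 0's cluster wraps around rank 1's: its fragments sit at depths 1 and 3,
    # while rank 1's opaque green fragment sits between them at depth 2.
    rank0 = [(1.0, (0.5, 0.0, 0.0, 0.5)), (3.0, (0.0, 0.0, 0.5, 0.5))]
    rank1 = [(2.0, (0.0, 1.0, 0.0, 1.0))]
    print(composite_pixel([rank0, rank1]))
```

If rank 0 flattened its two fragments into one color before the merge, the blue fragment behind the opaque green one would leak into the result, which is why the fragments are kept separate until they are sorted across ranks.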

Authors: Alper Sahistan, Serkan Demirci, Ingo Wald, Stefan Zellmann, João Barbosa, Nathan Morrical, and Uğur Güdükbay

Not all GPUs are created equal: characterizing variability in large-scale, accelerator-rich systems

University of Wisconsin-Madison researchers conducted a study with the goal “to understand GPU variability in large scale, accelerator-rich computing clusters.” In this paper, the researchers seek to “characterize the extent of variation due to GPU power management in modern HPC and supercomputing systems.” Leveraging Oak Ridge’s Summit, Sandia’s Vortex, TACC’s Frontera and Longhorn, and Livermore’s Corona, the researchers “collect over 18,800 hours of data across more than 90% of the GPUs in these clusters.” The results show an “8% (max 22%) average performance variation even though the GPU architecture and vendor SKU are identical within each cluster, with outliers up to 1.5× slower than the median GPU.”
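
To make "slower than the median GPU" concrete, the arithmetic can be sketched as follows. The runtimes are made-up illustrative values, not data from the study, and the function names and the 1.5× threshold parameter are assumptions chosen to mirror the quoted figure. The paper's own variation metric may be defined differently.

```python
from statistics import median


def slowdown_vs_median(runtimes):
    """Map each GPU to its runtime divided by the cluster's median runtime."""
    med = median(runtimes.values())
    return {gpu: t / med for gpu, t in runtimes.items()}


def flag_outliers(runtimes, threshold=1.5):
    """Return GPUs whose runtime is at least `threshold` times the median."""
    slowdowns = slowdown_vs_median(runtimes)
    return sorted(gpu for gpu, s in slowdowns.items() if s >= threshold)


if __name__ == "__main__":
    # Hypothetical runtimes in seconds for the same kernel on identical SKUs.
    sample = {"gpu0": 10.0, "gpu1": 10.0, "gpu2": 11.0, "gpu3": 15.0, "gpu4": 9.0}
    print(slowdown_vs_median(sample))
    print(flag_outliers(sample))
```

Normalizing against the median instead of the fastest GPU keeps a single unusually fast device from making the whole cluster look slow.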

Authors: Prasoon Sinha, Akhil Guliani, Rutwik Jain, Brandon Tran, Matthew D. Sinclair, and Shivaram Venkataraman

Tools for quantum computing based on decision diagrams

In this paper published in ACM Transactions on Quantum Computing, Austrian researchers from the Johannes Kepler University Linz and Software Competence Center Hagenberg introduce tools designed to support quantum computing development for users and developers alike. To start, the researchers “review the concepts of how decision diagrams can be employed, e.g., for the simulation and verification of quantum circuits.” Then they present a “visualization tool for quantum decision diagrams, which allows users to explore the behavior of decision diagrams in the design tasks mentioned above.” Lastly, the researchers dive into “decision diagram-based tools for simulation and verification of quantum circuits using the methods discussed above as part of the open-source Munich Quantum Toolkit.” The tools and additional information are publicly available on GitHub at https://github.com/cda-tum/ddsim.

Authors: Robert Wille, Stefan Hillmich, and Lukas Burgholzer

Scalable coherent optical crossbar architecture using PCM for AI acceleration

University of Washington computer engineers developed an “optical AI accelerator based on a crossbar architecture.” According to the researchers, the chip’s design addressed the “lack of scalability, large footprints and high power consumption, and incomplete system-level architectures to become integrated within existing datacenter architecture for real-world applications.” In this paper, the University of Washington researchers also provided “system-level modeling and analysis of our chip’s performance for the Resnet50V1.5, considering all critical parameters, including memory size, array size, photonic losses, and energy consumption of peripheral electronics.” The results showed that “a 128×128 proposed architecture can achieve inference per second (IPS) similar to Nvidia A100 GPU at 15.4× lower power and 7.24× lower area.”

Authors: Dan Sturm and Sajjad Moazeni

Do you know about research that should be included in next month’s list? If so, send us an email at [email protected]. We look forward to hearing from you.