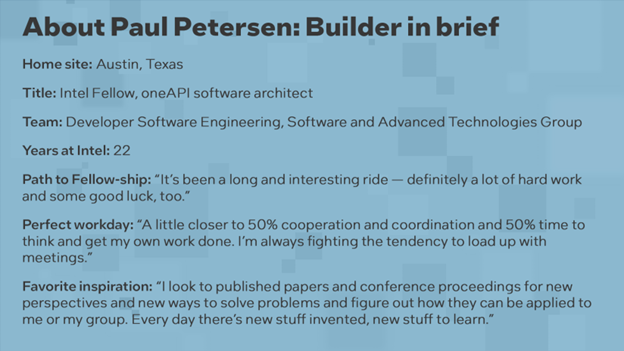

Behind the Builders: Paul Petersen applies ‘thinking in parallel’ to oneAPI, which lets developers maintain one set of code that runs on many kinds of chips — without compromising performance.

“We’re creating an open ecosystem that allows for developer productivity for high-performance software.”
Paul Petersen

It’s increasingly clear that the future of computing isn’t one chip for everything, but rather many chips for many things. Intel CEO Pat Gelsinger recently envisioned a “sea of accelerators” from which customers could select, mix and match for their specific needs.

Sounds pretty great, right? Unless you’re the software developer burdened with creating custom code for every new kind of chip (or even to just try out a new kind of chip).

This is where Paul Petersen, an Intel Fellow and software architect for oneAPI, comes in. His job is to make it so developers can have one set of code that runs as fast as possible — for all the chips.

As straightforward as this sounds, it’s a task of breathtaking scope.

The ambition of oneAPI is to take on two problems with one big swing: give developers a choice of hardware and make it easier to write high-performance code. Intel, Petersen says, has “the unique viewpoint that we are allowing heterogeneous hardware into the mix.”

In contrast with proprietary solutions — particularly the CUDA programming model, which targets Nvidia GPUs — oneAPI is built on a foundation of openness that enables maximum choice and cements standards into place.

Quick sidebar: oneAPI can refer to two things. First is the oneAPI initiative, an open community working together to define and shape the oneAPI specification, which aims to give developers a common experience across different types of chips and across different vendors. Second are the Intel oneAPI toolkits, which are based on that open standard and work across the range of Intel CPUs, GPUs and FPGAs. From here, “oneAPI” will refer to these Intel toolkits.

Portable Performance Across Many Kinds of Processors

Let’s say you are building a program to predict tomorrow’s weather or to model the interaction of molecules for medical therapies. The Intel oneAPI toolkits contain tools to migrate and compile existing code, along with suites of libraries whose functions are pre-tuned for several kinds of chips or, with something like 4th Gen Intel® Xeon® Scalable processors, for many accelerators on one chip.

Instead of learning three or four different ways to perform a single function, Petersen says, developers “can basically just learn the one way of doing it and then we handle the diversity and take care of the mapping down to the hardware in an efficient way.”
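To make the “one way of doing it” idea concrete, here is a toy Python sketch. It is not the oneAPI API: the names `vector_add`, `BACKENDS`, `_add_elementwise` and `_add_in_blocks` are hypothetical, and the two “devices” are ordinary Python functions standing in for hardware-specific implementations. The only point is that the caller learns a single entry point while the mapping to a backend happens underneath.

```python
from typing import Callable, Dict, List

Vector = List[float]


def _add_elementwise(a: Vector, b: Vector) -> Vector:
    # Stand-in for a plain CPU implementation (hypothetical).
    return [x + y for x, y in zip(a, b)]


def _add_in_blocks(a: Vector, b: Vector, block: int = 4) -> Vector:
    # Stand-in for an accelerator-style implementation that works on
    # fixed-size blocks of the input (hypothetical).
    out: Vector = []
    for start in range(0, len(a), block):
        stop = start + block
        out.extend(x + y for x, y in zip(a[start:stop], b[start:stop]))
    return out


# Hypothetical registry mapping a device name to its implementation.
BACKENDS: Dict[str, Callable[[Vector, Vector], Vector]] = {
    "cpu": _add_elementwise,
    "accelerator": _add_in_blocks,
}


def vector_add(a: Vector, b: Vector, device: str = "cpu") -> Vector:
    """The one function a caller learns; the backend is chosen by name."""
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    if device not in BACKENDS:
        raise ValueError(f"unknown device: {device!r}")
    return BACKENDS[device](a, b)
```

Calling `vector_add(a, b, device="accelerator")` returns the same values as the default CPU path; only the implementation behind the call changes.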

Eating complexity for customers has always been a bedrock Intel business strategy. In this case, “We dramatically simplify the developer’s cost of how much they need to learn and how many different ecosystems they need to maintain in order to do their job.”

Petersen describes oneAPI as “a performance-oriented programming system,” as opposed to something like JavaScript or Python “where it’s all about productivity, and performance is secondary.” The team is “creating an open ecosystem that allows that kind of developer productivity for high-performance software.”

“We strive to be as close to the hardware as we can get while still being able to be portable and supporting multiple kinds of hardware,” he explains. Therein lies the benefit to developers: code that is easier to write, maintain and market. It’s also the central challenge of Petersen’s work.

This approach means taking on “a lot of challenges in terms of performance optimization simultaneously on multiple targets. Anytime you’re allowing choice, you have to make decisions, which can increase latency. We’re constantly fighting to keep our code paths as short as possible.”

So far, so good: “We can go head-to-head with the same piece of hardware and show users that they’re not losing anything by switching to an open solution,” Petersen says. Several recent academic studies bear this out.

‘An Unbelievable Amount of Depth to Coordinate’

The result of this ambitious plan — to offer a competitive and comprehensive set of features, invent new capabilities and do so across an expanding array of hardware configurations — is that “oneAPI is huge,” Petersen confirms. Both literally and metaphorically.

Intel offers several oneAPI toolkits for different uses, each one composed of dozens of components, which decompose hierarchically into an uncounted number of functions. In all, it totals tens of millions of lines of code.

All the meetings filling up Petersen’s calendar are important for “an unbelievable amount of depth to coordinate, to get people to work together, to design interfaces that they can collaborate on, to make sure we’re able to get efficient execution out of all the pieces of hardware we’re trying to target.”

There’s another good reason to leave the complexity to Petersen and his team: It’s getting to be way too much for any one person to mind.

“It’s very hard for users to comprehend the scale of parallelism that these accelerators just naturally do and the speed at which they run,” Petersen explains. “We can easily get to the point where you have to imagine hundreds of thousands, if not millions of things running in parallel to have enough work to keep the devices busy. Developers need to think bigger in the scale of the parallelism that they need to do.”
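One way to picture that scale is to write a computation as a tiny “kernel” that handles a single index, then ask for it to be run once per element. A 1,024 by 1,024 grid, for example, already yields 1,048,576 independent work items. The Python sketch below is only an illustration of that decomposition, under stated assumptions: `parallel_for` and `saxpy` are hypothetical helpers, not part of any oneAPI toolkit, and Python threads are used to show the structure rather than to gain speed.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def parallel_for(n: int, kernel: Callable[[int], None], workers: int = 4) -> None:
    """Run kernel(i) for every i in range(n), split into contiguous chunks."""
    if n <= 0:
        return
    chunk = max(1, (n + workers - 1) // workers)

    def run_chunk(start: int) -> None:
        for i in range(start, min(start + chunk, n)):
            kernel(i)

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # list() waits for every chunk and re-raises any worker exception.
        list(pool.map(run_chunk, range(0, n, chunk)))


def saxpy(a: float, x: List[float], y: List[float]) -> List[float]:
    """Compute a * x[i] + y[i] with one independent work item per index."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    out = [0.0] * len(x)

    def kernel(i: int) -> None:
        # Each work item writes only its own slot, so items never conflict.
        out[i] = a * x[i] + y[i]

    parallel_for(len(x), kernel)
    return out
```

Because every index is independent, the same kernel could in principle be spread across four workers or four million; the programmer’s job is to find that many independent pieces of work.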

A Career Spent ‘Thinking in Parallel’

As a new generation of palm-sized supercomputers like the Intel® Data Center GPU Max Series comes to market, and supercomputers themselves surpass exascale and enter zettascale levels of performance, micromanaging hardware might become simply impossible. Petersen advises developers to “think in parallel.”

It’s easy for him to say: Petersen has been “thinking in parallel” since graduate school in the late 1980s. “In college I had the opportunity to be part of the international programming competitions,” Petersen says, “and I happened to be on the winning team one year.” He credits that little trophy on his CV to the folks who “took a chance on me and brought me into the Center for Supercomputing Research and Development at the University of Illinois.”

Petersen later got into a parallel computing research program led by David Kuck. After graduation, Petersen joined Kuck’s company, Kuck & Associates, or KAI, to build performance-oriented compilers and programming tools.

In April 2000, Intel acquired Kuck’s company — Kuck himself is an Intel Fellow — “and since then we’ve been on a rocket ride up the insides of Intel, building different kinds of products,” Petersen says.

On Tap for oneAPI: More Openness, More Choice, More Performance

What’s next for the rocket ride of oneAPI? More ambition, of course.

On the developer’s side, oneAPI has been supporting more languages beyond C++, like Python, Java and Julia. On the hardware side, the forthcoming Intel oneAPI 2023 toolkits gain support for Intel’s latest and upcoming CPU, GPU and FPGA architectures, and include tools to ease the conversion from proprietary to multiarchitecture code.

On the performance side, Petersen envisions making oneAPI even more intelligent, able to load-balance across many CPUs, GPUs or other accelerators not only within one machine, but across racks and racks of them.
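What might load balancing across devices look like in its simplest form? One naive approach, sketched below in Python, is to divide work items in proportion to each device’s relative throughput. This is purely an assumption for illustration, not a description of how oneAPI does or will do it; `split_work` and the device names and rates in the usage note are hypothetical.

```python
from typing import Dict


def split_work(total_items: int, throughputs: Dict[str, float]) -> Dict[str, int]:
    """Divide total_items among devices in proportion to their throughput."""
    if total_items < 0:
        raise ValueError("total_items must be non-negative")
    if not throughputs or any(rate <= 0 for rate in throughputs.values()):
        raise ValueError("every device needs a positive throughput")

    total_rate = sum(throughputs.values())
    # Ideal (fractional) share for each device.
    shares = {name: total_items * rate / total_rate
              for name, rate in throughputs.items()}
    # Start from the whole-number part of each share.
    counts = {name: int(share) for name, share in shares.items()}

    # Hand the leftover items to the devices with the largest fractional parts.
    leftover = total_items - sum(counts.values())
    by_fraction = sorted(shares, key=lambda name: shares[name] - counts[name],
                         reverse=True)
    for name in by_fraction[:leftover]:
        counts[name] += 1
    return counts
```

With made-up rates, `split_work(100, {"cpu": 1.0, "gpu": 3.0})` assigns 25 items to the CPU and 75 to the GPU. A real scheduler would also have to account for data movement and changing load, which this sketch ignores.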

“We want users to be comfortable maintaining their software, even if they need to target new customers or new hardware. We’re creating the infrastructure that allows diversity of choice in the ecosystem — and we hope that customers choose Intel.”
