A fascinating ACM paper by researchers Torsten Hoefler (ETH Zurich), Thomas Häner (Microsoft*) and Matthias Troyer (Microsoft) – “Disentangling Hype from Practicality: On Realistically Achieving Quantum Advantage” – and a Microsoft blog published yesterday by Troyer bring a much-needed dose of reality to the quantum computing conversation.

There’s no question that the excitement around quantum computing has produced a wide range of expectations and a deluge of hype. Today, quantum computers are far from the fault-tolerant ideal that will be needed to fulfill quantum computing’s promise. So-called NISQ (noisy intermediate-scale quantum) computers, with a relatively small number of error-prone qubits (tens to ~300 physical qubits), are today’s reality.

As the quantum ecosystem has expanded – hardware, software, middleware, etc. – a vigorous debate has arisen around whether early versions of NISQ quantum systems, hybrid classical-NISQ systems, or even so-called quantum-inspired algorithms run on classical systems can deliver some form of quantum advantage today. Frankly, you can be forgiven any confusion around what this really means, given the growing diversity of sales pitches and the many shifting definitions of quantum advantage.

Broadly, there is agreement that fault-tolerant quantum computing is the endgame and that it is years away (five, 10, 20 years; take your pick). Current thinking is that around a million physical qubits will be needed to deliver 10,000 error-corrected qubits.

What will we be able to do with such a machine that we can’t do on an advanced classical HPC system?

This highly regarded trio of researchers** (Troyer, Hoefler and Häner) set out to determine which applications will indeed benefit from quantum computing. “The promise of quantum computing at scale is real. It will solve some of the hardest challenges facing humanity. However, it will not solve every challenge,” wrote Troyer in his blog.

Here’s an excerpt from the blog:

“Many problems facing the world today boil down to chemistry and material science problems. Better and more efficient electric vehicles rely on finding better battery chemistries. More effective and targeted cancer drugs rely on computational biochemistry. And materials that can last long enough to be useful, but then biodegrade quickly afterwards, rely on discoveries in these fields.

“If quantum computers only benefited chemistry and material science, that would be enough. There is a reason that the major eras of innovation—Stone Age, Bronze Age, Iron Age, Silicon Age—are named for materials. Innovations in chemistry and material science are estimated to have an impact on 96 percent of all manufactured goods, which impact 100 percent of humanity.

“Our framework for evaluating quantum practicality shows that chemistry and material science problems benefit from quantum speedup because activities like simulating the interactions of a single chemical can be represented by a limited number of interaction strengths between electrons in their orbitals. While many approximate calculations of their properties are routinely performed, the operations are exponentially complex on classical computers, but efficient on quantum computers, falling within our stated guidelines.”

To evaluate quantum versus classical computing potential, the researchers created a general model of quantum computer capabilities and shortcomings. The idea was to compare a scaled quantum computer’s hypothetical performance (with “10,000 fast, error-corrected logical qubits, or about one million physical qubits”) to a classical computer with a single state-of-the-art GPU.

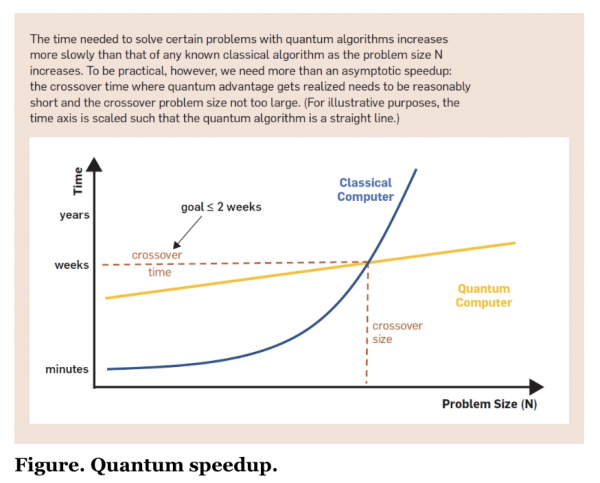

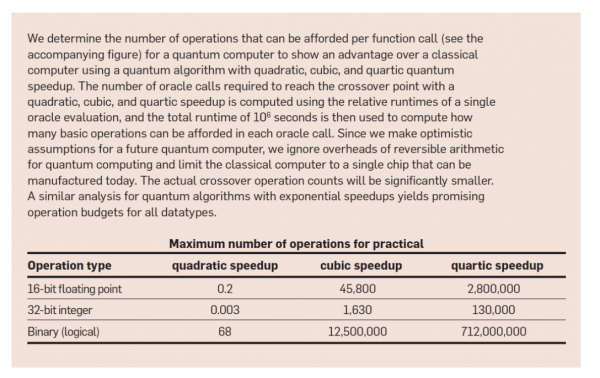

“For our analysis, we set a break-even point of two weeks, meaning a quantum computer should be able to perform better than a classical computer on problems that would take a quantum computer not more than two weeks to solve. Comparing the hypothetical future quantum computer to a single classical GPU available today, one finds that more than quadratic speedup—and ideally super-polynomial speedup—is needed. This is a significant finding since many proposed applications of quantum computing rely on the quadratic speedup of specific algorithms, such as Grover’s algorithm,” wrote Troyer in the blog.
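The break-even reasoning in that quote can be sketched with a toy model. This is not the authors' model: it simply assumes a classical machine needs N operations at `t_classical` seconds each, while a quantum machine with a polynomial speedup of degree d needs N^(1/d) operations at `t_quantum` seconds each. The function name and the per-operation times in the usage lines are hypothetical values chosen for illustration, not figures from the paper.

```python
TWO_WEEKS_S = 14 * 24 * 3600  # two-week break-even budget from the quote, in seconds


def crossover_time(t_classical: float, t_quantum: float, degree: float) -> float:
    """Runtime in seconds at which the quantum and classical machines tie.

    Toy model (an assumption of this sketch, not the paper's model):
      classical runtime = N * t_classical
      quantum runtime   = N ** (1 / degree) * t_quantum
    Setting the two equal gives
      N* = (t_quantum / t_classical) ** (degree / (degree - 1)).
    """
    if t_classical <= 0 or t_quantum <= 0:
        raise ValueError("per-operation times must be positive")
    if degree <= 1:
        raise ValueError("degree must be greater than 1 to model a speedup")
    ratio = t_quantum / t_classical
    n_star = ratio ** (degree / (degree - 1))  # problem size at the tie
    return n_star * t_classical  # runtime of either machine at that size


if __name__ == "__main__":
    # Hypothetical per-operation times, for illustration only.
    t_c = 1e-12  # assumed classical time per operation
    t_q = 1e-2   # assumed quantum time per operation (many logical gates)
    for d in (2, 3, 4):
        t = crossover_time(t_c, t_q, d)
        verdict = "within" if t <= TWO_WEEKS_S else "beyond"
        print(f"degree {d}: break-even after {t:.3g} s ({verdict} two weeks)")
```

In this model the quantum machine wins on every problem larger than the tie point, so a smaller crossover time is better for quantum. Because the crossover time for a quadratic speedup grows with the square of the per-operation slowdown, a slow quantum operation pushes the tie point out quickly, while higher-degree speedups bring it back in.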

Shown above are selected figures/tables from the Communications of the ACM paper. As always, it’s best to read the paper directly.

In the paper, the authors strike a cautious note.

“To establish reliable guidelines, or lower bounds for the required speedup of a quantum computer, we err on the side of being optimistic for quantum and overly pessimistic for classical computing. Despite our overly optimistic assumptions, our analysis shows a wide range of often-cited applications is unlikely to result in a practical quantum advantage without significant algorithmic improvements. We compare the performance of only a single classical chip fabricated like the one used in the Nvidia A100 GPU that fits around 54 billion transistors with an optimistic assumption for a hypothetical quantum computer that may be available in the next decades with 10,000 error-corrected logical qubits, 10μs gate time for logical operations, the ability to simultaneously perform gate operations on all qubits and all-to-all connectivity for fault tolerant two-qubit gates,” they write.

As key insights, they bullet out the following:

- “Most of today’s quantum algorithms may not achieve practical speedups. Material science and chemistry have a huge potential and we hope more practical algorithms will be invented based on our guidelines.”

- “Due to limitations of input and output bandwidth, quantum computers will be practical for ‘big compute’ problems on small data, not big data problems.”

- “Quadratic speedups delivered by algorithms such as Grover’s search are insufficient for practical quantum advantage without significant improvements across the entire software/hardware stack.”
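The I/O point in the second insight can be illustrated with a toy cost model in which total time is the time to move the data onto the machine plus the time to compute on it. This is a sketch under stated assumptions, not the paper's analysis: the names `Cost` and `search_costs`, both bandwidths, and the classical per-operation time are hypothetical placeholders, and treating one quantum query as a single 10 µs logical gate is a deliberately optimistic simplification.

```python
import math
from typing import NamedTuple, Tuple


class Cost(NamedTuple):
    load_s: float     # seconds spent moving the input onto the machine
    compute_s: float  # seconds spent computing on it

    @property
    def total_s(self) -> float:
        return self.load_s + self.compute_s


def search_costs(
    n_items: float,
    bytes_per_item: float = 8.0,
    bw_classical: float = 1e12,  # assumed classical bandwidth, bytes/s
    bw_quantum: float = 1e8,     # assumed quantum input bandwidth, bytes/s
    t_classical: float = 1e-12,  # assumed classical seconds per item examined
    t_quantum: float = 1e-5,     # one 10 µs logical gate per query (optimistic)
) -> Tuple[Cost, Cost]:
    """Compare an unstructured search over n_items on both machines.

    Classical examines all n_items; the quantum side uses a Grover-style
    sqrt(n_items) queries but must first load the same data.
    """
    data_bytes = n_items * bytes_per_item
    classical = Cost(data_bytes / bw_classical, n_items * t_classical)
    quantum = Cost(data_bytes / bw_quantum, math.sqrt(n_items) * t_quantum)
    return classical, quantum


if __name__ == "__main__":
    classical, quantum = search_costs(1e16)
    print(f"classical: load {classical.load_s:.3g} s, compute {classical.compute_s:.3g} s")
    print(f"quantum:   load {quantum.load_s:.3g} s, compute {quantum.compute_s:.3g} s")
```

With these placeholder numbers the quantum side finishes its compute phase sooner, yet loses overall because loading the data dominates. That is the sense in which a quadratic speedup on big data can be undone by I/O.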

In many ways, it’s not clear whether the ACM paper expresses a glass-half-empty or glass-half-full view. It should be noted that Hoefler was fairly skeptical of early quantum claims on an SC22 panel last November (see HPCwire article, “Quantum – Are We There (or Close) Yet? No, Says the Panel”).

Under the heading of practical and impractical applications, the researchers write in their ACM paper:

“We can now use these considerations to discuss several classes of applications where our fundamental bounds draw a line for quantum practicality. The most likely problems to allow for a practical quantum advantage are those with exponential quantum speedup. This includes the simulation of quantum systems for problems in chemistry, materials science, and quantum physics, as well as cryptanalysis using Shor’s algorithm. The solution of linear systems of equations for highly structured problems also has an exponential speedup, but the I/O limitations discussed above will limit the practicality and undo this advantage if the matrix has to be loaded from memory instead of being computed based on limited data or knowledge of the full solution is required (as opposed to just some limited information obtained by sampling the solution).

“Equally important, we identify likely dead ends in the maze of applications. A large range of problem areas with quadratic quantum speedups, such as many current machine learning training approaches, accelerating drug design and protein folding with Grover’s algorithm, speeding up Monte Carlo simulations through quantum walks, as well as more traditional scientific computing simulations including the solution of many non-linear systems of equations, such as fluid dynamics in the turbulent regime, weather, and climate simulations will not achieve quantum advantage with current quantum algorithms in the foreseeable future. We also conclude that the identified I/O limits constrain the performance of quantum computing for big data problems, unstructured linear systems, and database search based on Grover’s algorithm such that a speedup is unlikely in those cases. Furthermore, Aaronson et al. [1] show the achievable quantum speedup of unstructured black-box algorithms is limited to O(N^4). This implies that any algorithm achieving higher speedup must exploit structure in the problem it solves.

“These considerations help with separating hype from practicality in the search for quantum applications and can guide algorithmic developments. Specifically, our analysis shows it is necessary for the community to focus on super-quadratic speedups, ideally exponential speedups, and one needs to carefully consider I/O bottlenecks when deriving algorithms to exploit quantum computation best. Therefore, the most promising candidates for quantum practicality are small-data problems with exponential speedup. Specific examples where this is the case are quantum problems in chemistry and materials science, which we identify as the most promising application. We recommend using precise requirements models to get more reliable and realistic (less optimistic) estimates in cases where our rough guidelines indicate a potential practical quantum advantage.”

Apologies to both Microsoft and ACM for the lengthy excerpting, but it seems important to add credible cautionary comment to the ongoing quantum conversation. Weighing pragmatism against ongoing (and potentially revenue-generating) activity is a difficult balancing act for most companies developing quantum offerings.

Troyer, for example, balances the cautionary aspect of the paper with a more buoyant section in his blog: “While scaled quantum computing is required to solve the hardest, most complex chemistry and materials science problems, progress can be made today with Azure high-performance computing. For example, Johnson Matthey and Microsoft Azure Quantum chemists have accelerated some quantum chemistry calculations in their search for hydrogen fuel cell catalysts by combining high-performance computing and specific quantum functions to reduce the turnaround time for their scaled workloads from six months to a week.”

Stay tuned.

* Häner was at Microsoft during the work and has since moved to AWS.

** Authors

Torsten Hoefler is Consulting Researcher at Microsoft Corporation, Redmond, WA, USA, and a professor at ETH Zurich, Switzerland.

Thomas Häner is Research Scientist at Amazon Web Services, Zurich, Switzerland. This work was done when he worked at Microsoft, Zurich, prior to his joining AWS.

Matthias Troyer is Technical Fellow and Corporate Vice President at Microsoft, Redmond, WA, USA.

Link to Microsoft blog: https://cloudblogs.microsoft.com/quantum/2023/05/01/quantum-advantage-hope-and-hype/

Link to ACM paper: https://cacm.acm.org/magazines/2023/5/272276-disentangling-hype-from-practicality-on-realistically-achieving-quantum-advantage/fulltext