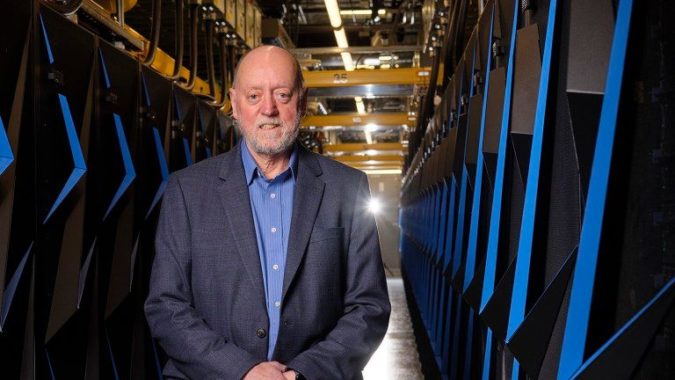

The selection of Jack Dongarra as the recipient of the 2021 Turing Award was a well-deserved recognition of his invaluable contributions to the field of high performance computing. His pioneering work in developing numerical algorithms, parallel computing techniques, and performance evaluation helped application software migrate through 40 years of HPC hardware advances. The award came as Dongarra was planning his retirement.

Though he has stepped back from his day job with the University of Tennessee, Knoxville and Oak Ridge National Lab, his work will provide a foundation for the next generation of computer scientists to build upon. In recognition of that undertaking, the new ISC High Performance Jack Dongarra Early Career Award and Lecture Series was established, the first of which will take place during ISC 2023. The series was designed to spotlight exceptional work by up-and-coming scientists and engineers in the HPC space. “I hope to be a role model for these young researchers in the field,” he shared.

In a recent interview, ISC Comms Team Lead Nages Sieslack asked Dongarra to reflect upon his career, what drove him to pursue this particular path, and what the future might hold for his work.

Nages Sieslack: Let’s begin with the 2021 ACM A.M. Turing Award. What does this recognition mean to you?

Jack Dongarra: I was in shock when they told me that I was going to be the recipient of the year. And to be fair, I still cannot quite get my head around it. I know about all of the past Turing Award winners; I have learned from their books, I have read their papers, and I have used their theorems and techniques. So, to be in that class of people is overwhelming! And, of course, I must give credit to the generations of colleagues, students, and staff who helped me and have influenced me over the years to obtain this recognition. It comes about by having a great group of people and mentors who can push you in the right direction. I hope I can live up to all the greatness that the Turing Award has recognized and become a role model, as many of the past recipients have been, to the next generation of computer scientists.

Sieslack: What kindled your interest in studying computer science and pursuing a career in high performance computing? Where and how did it all begin?

Dongarra: As an undergraduate, I worked on EISPACK, a software package designed to solve eigenvalue problems. My role was helping develop test problems and ensure things were working correctly. It was a wonderful environment. Then, I completed my master’s degree, and they offered me a job.

LINPACK was an NSF-funded project in the late 1970s that involved researchers at Argonne, the University of New Mexico, the University of California San Diego, and the University of Maryland. The goal was to design a software package for solving systems of linear equations that was based on state-of-the-art algorithms, was portable and reliable, enhanced productivity, and ran efficiently on the scientific computer architectures in use at that time. To measure efficiency, I constructed a benchmark of a computer’s performance when running the LINPACK software, which became the LINPACK benchmark. It appeared in a table in the LINPACK Users’ Guide.

Together with my colleagues in Germany, Hans Meuer and Erich Strohmaier, I put together the first Top500 list in 1993. Before then, Hans had a list of the fastest computers and I had a benchmark that rated those computers; Hans approached me about combining the two and calling the result the Top500.

The software packages that I have been involved in designing have found their way into the fabric of how problems in computational science are solved. As computer architectures evolve over time, from scalar to vector to multicore to distributed memory to hybrid architectures, the software packages are among the first to adapt to the changes. Thus, the software packages must be rewritten to embrace each new architecture.

You can see this in the evolution and development of the packages. EISPACK was designed for scalar computers and LINPACK for vector architectures; LAPACK and the BLAS were designed for cache-based and shared-memory computers; ScaLAPACK and MPI were intended for distributed-memory architectures; and PLASMA and MAGMA were developed as multicore processors and hardware accelerators (GPUs) entered the computing landscape. And today we are working on SLATE, which addresses the challenges of exascale computing. Along the way, there is the need for performance evaluation, and that’s where the benchmarks fit into the picture.

Sieslack: If you hadn’t followed this career direction, what do you think you would have pursued?

Dongarra: My plan was to become a teacher and teach science to high school students. That was my plan when I enrolled at Chicago State College, which became Chicago State University by the time I graduated in 1972. Over the course of my studies, I became fascinated by computers. In my senior year, physics professor Harvey Leff suggested I apply for an internship at nearby Argonne National Laboratory, where I could gain some computing experience.

There, I joined a group developing EISPACK, a software library for calculating eigenvalues, components of linear algebra that are important in chemistry and physics simulations. It was a heady experience. I wasn’t really a terrific, outstanding student. I was thrown into a group of 40 or 50 people from around the country who came from top universities, and I got to mix with them. Project leader Brian Smith became my mentor. He was very, very patient with me. I didn’t have a very extensive background in computing, and he gave me attention and guided me along.

The experience changed my plans. After earning a degree in mathematics, I began a master’s program in computer science at the Illinois Institute of Technology. This was the beginning of a career in which I helped usher in high performance computing by creating software libraries that allowed programs to run on various processors.

Sieslack: Let’s jump into work. Your LINPACK benchmark became the basis for the Top500 project. Why do you think this pairing has been so successful?

Dongarra: The LINPACK benchmark has been in continuous use since the 1970s. It was born out of necessity, because it could quickly test the performance of vector subroutines, which served as a good approximation of performance for the rest of the LINPACK library. Thanks to the nature of the implementation, the LINPACK benchmark also served as a first-order approximation of other codes. That’s partially due to the well-balanced hardware of the time, which offered plentiful bandwidth for every floating-point operation. Over the years, Moore’s law eroded the compute-to-bandwidth balance, resulting in a memory wall.

To reassess application needs in this new and different hardware regime, it is worthwhile to look at computational simulations. Many computational simulations involve heat diffusion, electromagnetics, and fluid dynamics. Unlike LINPACK, which tests raw floating-point performance, these real-world applications rely on partial differential equations (PDEs) that govern the continuous representations of physical quantities like particle speed, momentum, etc. These PDEs involve sparse (not dense) matrices that represent the 3D embedding of the discretization mesh. While the size of the sparse data fills the available memory to accommodate the simulation models of interest, most of the optimization techniques that help achieve close to peak performance in dense matrix calculations are only marginally beneficial in sparse matrix computations originating from PDEs.
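To make the sparsity point concrete, here is a minimal sketch added for illustration (it is not Dongarra’s code and not part of any benchmark). It assumes a simple 7-point finite-difference stencil on a 3D grid, in which each grid point couples only to itself and its nearest neighbours along each axis; the function names `stencil_nonzeros` and `density` are hypothetical.

```python
def stencil_nonzeros(nx, ny, nz):
    """Count the nonzeros in the matrix of a 7-point stencil on an
    nx x ny x nz grid (assumed discretization, for illustration only).

    Each grid point contributes one diagonal entry plus one entry for
    every axis neighbour that lies inside the grid.
    """
    nnz = 0
    for i in range(nx):
        for j in range(ny):
            for k in range(nz):
                nnz += 1                          # the point itself
                nnz += (i > 0) + (i < nx - 1)     # neighbours along x
                nnz += (j > 0) + (j < ny - 1)     # neighbours along y
                nnz += (k > 0) + (k < nz - 1)     # neighbours along z
    return nnz


def density(nx, ny, nz):
    """Fraction of matrix entries that are nonzero."""
    n = nx * ny * nz          # one matrix row per grid point
    return stencil_nonzeros(nx, ny, nz) / (n * n)


if __name__ == "__main__":
    for size in (10, 20, 40):
        print(size, density(size, size, size))
```

Under these assumptions, each row holds at most seven nonzeros no matter how large the grid becomes, so the fraction of nonzero entries shrinks as the problem grows. That is why storage and memory traffic, rather than floating-point throughput, tend to dominate such computations.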

The Top500 LINPACK benchmark can be characterized as a dense matrix doing dense operations. Machines that do floating point operations efficiently are going to look good on this benchmark, even though most real-world problems don’t actually require it.

So we developed a benchmark called High Performance Conjugate Gradients, or HPCG. HPCG does not solve matrix problems with a direct approach built on matrix multiplication, where, if you have two matrices of roughly order n, the number of operations is n³ but the amount of data moved is only n². Instead, HPCG uses an iterative approach that manipulates sparse matrices, which better reflects how the hardware behaves on real applications.
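The n³-versus-n² contrast can be expressed as arithmetic intensity, meaning floating-point operations per byte of data moved. The following is a rough sketch added for illustration, not a model of how HPL or HPCG are implemented. It assumes 8-byte values, 4-byte column indices, ideal data reuse in the dense case, and 27 nonzeros per row in the sparse case; all function names and parameters are hypothetical, and row-pointer storage is ignored for simplicity.

```python
def dense_matmul_intensity(n, bytes_per_word=8):
    """Flops per byte for multiplying two n x n dense matrices,
    assuming each matrix is moved through memory only once."""
    flops = 2 * n**3                        # n^3 multiply-add pairs
    data = 3 * n**2 * bytes_per_word        # read A and B, write C
    return flops / data


def sparse_matvec_intensity(n_rows, nnz_per_row,
                            bytes_per_word=8, bytes_per_index=4):
    """Flops per byte for one sparse matrix-vector product,
    assuming every stored value and column index is read once."""
    nnz = n_rows * nnz_per_row
    flops = 2 * nnz                         # one multiply-add per nonzero
    data = (nnz * (bytes_per_word + bytes_per_index)   # values + indices
            + 2 * n_rows * bytes_per_word)             # input and output vectors
    return flops / data


if __name__ == "__main__":
    print("dense :", dense_matmul_intensity(1200))
    print("sparse:", sparse_matvec_intensity(1_000_000, 27))
```

In this simplified model the dense intensity grows linearly with n, while the sparse intensity stays fixed at a small fraction of a flop per byte regardless of problem size. That gap is one way to see why a machine can score well on a dense benchmark yet deliver far less on sparse, bandwidth-bound workloads.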

Just to put that into perspective, if I were to run the Top500 benchmark on a computer, I would expect to see performance reach around 75% of the theoretical peak. The HPCG benchmark shows performance of around 3% of the theoretical peak. That’s our “dirty little secret”: most applications are far from having reached the theoretical peak of these high-performance computers. Still, it’s a good way to expose the performance issues and look at how we might resolve and improve the situation.

We have a range of benchmarks to measure performance: HPL, HPCG, as well as HPL-MxP.

Sieslack: How would you like to see future investigators evolve your work? Are there different avenues that you think should be explored?

Dongarra: Well, there’s always something new to learn and use in solving the current problems. I have been fortunate to work with an incredibly talented international community of people over the years to develop algorithms, software, and standards that have helped shape the computational science area. And that work could not have happened without those people.

Having students is instrumental in that respect, because it helps us push forward and explore multiple fronts. We try to do research, not just development, meaning we need to experiment and we need to fail, because that’s an important part of the learning process. Sometimes, our students come to me in search of a research problem to work on, and when I give them one, they immediately tell me that they don’t know how to do it. And I say, “That’s perfect. That’s exactly the point. I wouldn’t give you a problem if you already knew how to do it.” That’s where the excitement is, learning new things and overcoming obstacles.

Torsten Hoefler, a rising star in the HPC domain, is the first recipient of the Jack Dongarra Early Career Award. Hoefler is an associate professor at ETH Zurich, and his primary research is on performance-centric system design, which includes scalable networks, parallel programming techniques, and performance modeling for large-scale simulations and AI systems. The award comprises an invitation to deliver a lecture at ISC 2023 and a cash prize of 5,000 euros. Dongarra is the guest of honor at the award ceremony.

Hoefler will deliver a lecture titled Inheriting Excellence: High-Performance Computing at a Crossroads. You can catch this talk on Monday, May 22, from 11:25 am to 12 pm (Hall Z).

About the Author: Nages Sieslack is head of the ISC communications team. ISC 2023 begins on Sunday, May 21, in Hamburg, Germany.