Nvidia has launched Spectrum-X, a new Ethernet-based networking platform that targets generative AI workloads. The platform tightly couples the Nvidia Spectrum-4 Ethernet switch with the Nvidia BlueField-3 DPU. According to Nvidia, which made the announcement at Computex this week, Spectrum-X delivers 1.7x better overall AI performance and power efficiency, along with consistent, predictable performance in multi-tenant environments.

In a pre-briefing, Gilad Shainer, Nvidia senior vice president of networking, said, “An AI cloud system utilizes two Ethernet networks. One is used for cloud control and user access; [it’s] often called the North-South network. The other one is the compute fabric connecting GPUs and CPUs [and] is commonly referred to as the East-West network. Traditional Ethernet used for East-West connectivity is simply too slow to handle modern generative AI workloads.”

The Spectrum-X platform, said Shainer, is the world’s first Ethernet offering that’s purpose-built for AI. The platform includes the Nvidia Spectrum-4 Ethernet switch and BlueField-3 DPUs to create an end-to-end Ethernet infrastructure for Gen AI clouds. “Spectrum-X introduces a lossless Ethernet network, one that does not drop data packets and therefore is able to maintain very short tail latency. It includes new adaptive routing technology for RoCE RDMA operations, which translates to a 2x higher network performance versus traditional Ethernet when we scale GPU connectivity,” he said.

Shainer was asked during Q&A how Spectrum-X is able to avoid dropped packets, as buffer sizes are finite, and re-sending packets is commonly required when doing end-to-end congestion control.

“Congestion control in traditional Ethernet is done, for example, by switches that try to detect cases and then create notifications that are used to do the congestion control,” said Shainer. “What we did [using] Spectrum-4 and BlueField-3, is to create a new mechanism for congestion control, which does not depend on the network to discover hotspots of congestion that have already occurred and then try to deal with them.”

“[Instead,] it’s actually using advanced telemetry to be able to understand latencies across the network and small changes in latency across the network in order to be able to identify [potential] hotspots. Getting that information very, very quickly to the DPUs actually controls the data injection rate, and with that, you can keep the network hotspot free. You are able to do lossless, you’re able to do adaptive routing on that case and on that network, and actually get a great level of performances,” said Shainer.
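To make the idea concrete, here is a toy sketch of latency-driven injection-rate control. It is not Nvidia's algorithm, which the briefing did not detail. The class name, the thresholds, and the additive-increase/multiplicative-decrease policy are all assumptions chosen for illustration: the sender backs off as soon as measured latency creeps above an uncongested baseline, rather than waiting for a switch to signal congestion that has already occurred.

```python
from dataclasses import dataclass


@dataclass
class LatencyRateController:
    """Hypothetical sender-side controller; every parameter is an assumption."""
    line_rate_gbps: float = 400.0   # maximum injection rate
    baseline_us: float = 2.0        # assumed uncongested fabric latency
    tolerance: float = 1.10         # react once latency exceeds baseline by 10%
    decrease_factor: float = 0.8    # multiplicative back-off when latency rises
    increase_gbps: float = 10.0     # additive recovery step when latency is normal
    min_rate_gbps: float = 1.0
    rate_gbps: float = 400.0        # current injection rate

    def on_telemetry(self, latency_us: float) -> float:
        """Update the injection rate from one latency sample and return it."""
        if latency_us > self.baseline_us * self.tolerance:
            # Latency is creeping up: slow down before buffers fill.
            self.rate_gbps = max(self.min_rate_gbps,
                                 self.rate_gbps * self.decrease_factor)
        else:
            # Latency looks normal: recover gradually toward line rate.
            self.rate_gbps = min(self.line_rate_gbps,
                                 self.rate_gbps + self.increase_gbps)
        return self.rate_gbps


controller = LatencyRateController()
samples_us = [2.0, 2.1, 2.4, 2.6, 2.1, 2.0]   # made-up telemetry samples
rates = [controller.on_telemetry(s) for s in samples_us]
for sample, rate in zip(samples_us, rates):
    print(f"latency {sample:.1f} us -> inject at {rate:.0f} Gb/s")
```

In this sketch the rate drops on the two elevated samples and climbs back once latency returns to baseline. A real implementation would run in DPU hardware and react on much shorter timescales.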

As described by Nvidia:

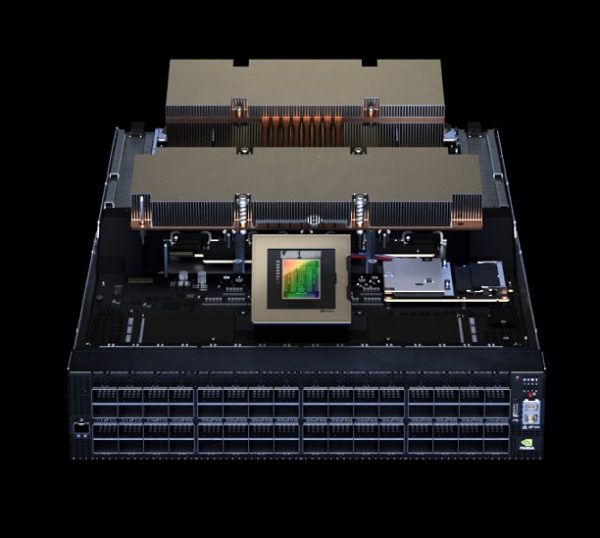

“The new platform starts with Spectrum-4, the world’s first 51Tb/sec Ethernet switch built specifically for AI networks. Advanced RoCE extensions work in concert across the Spectrum-4 switches, BlueField-3 DPUs and LinkX optics to create an end-to-end 400GbE network that is optimized for AI clouds. Spectrum-X enhances multi-tenancy with performance isolation to ensure tenants’ AI workloads perform optimally and consistently. It also offers better AI performance visibility, as it can identify performance bottlenecks and it features completely automated fabric validation.

“Acceleration software driving Spectrum-X includes powerful Nvidia SDKs such as Cumulus Linux, pure SONiC and NetQ – which together enable the networking platform’s extreme performance. It also includes the Nvidia DOCA software framework, which is at the heart of BlueField DPUs. Spectrum-X enables unprecedented scale of 256 200Gb/s ports connected by a single switch, or 16,000 ports in a two-tier leaf-spine topology to support the growth and expansion of AI clouds while maintaining high levels of performance and minimizing network latency.”
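The port counts in that statement can be sanity-checked with some back-of-the-envelope arithmetic. The sketch below is one plausible way to arrive at a figure near 16,000, not Nvidia's published derivation. It assumes a switch capacity of 51.2 Tb/s (what 256 ports at 200Gb/s implies), leaf switches that split capacity evenly between host ports and uplinks, and 400Gb/s leaf-to-spine links; the last two are assumptions.

```python
SWITCH_CAPACITY_GBPS = 51_200   # 256 x 200 Gb/s, quoted as "51Tb/sec"
HOST_PORT_GBPS = 200            # host-facing port speed from the quote
UPLINK_GBPS = 400               # assumption: leaf-to-spine links run at 400GbE

# Single switch: all capacity exposed as 200 Gb/s ports.
single_switch_ports = SWITCH_CAPACITY_GBPS // HOST_PORT_GBPS

# Two-tier leaf-spine, assuming each leaf splits capacity evenly
# between host-facing ports and uplinks (a non-blocking design).
leaf_host_ports = (SWITCH_CAPACITY_GBPS // 2) // HOST_PORT_GBPS
leaf_uplinks = (SWITCH_CAPACITY_GBPS // 2) // UPLINK_GBPS

# Each leaf sends one uplink to every spine, so the number of spines
# equals the uplinks per leaf, and each spine port serves one leaf.
spine_count = leaf_uplinks
max_leaves = SWITCH_CAPACITY_GBPS // UPLINK_GBPS

two_tier_host_ports = max_leaves * leaf_host_ports

print(f"single switch: {single_switch_ports} x {HOST_PORT_GBPS} Gb/s ports")
print(f"two-tier: {max_leaves} leaves x {leaf_host_ports} host ports "
      f"= {two_tier_host_ports} ports via {spine_count} spines")
```

Under these assumptions the two-tier total comes to 16,384 ports, consistent with the rounded "16,000" in the statement. With 200Gb/s uplinks instead, the same arithmetic would give a larger fabric, so the 400Gb/s uplink assumption is doing real work here.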

Spectrum-X, Spectrum-4 switches, and BlueField-3 DPUs are available now from system makers including Dell Technologies, Lenovo, and Supermicro.

Link to announcement: https://www.hpcwire.com/off-the-wire/nvidia-launches-accelerated-ethernet-platform-for-hyperscale-generative-ai/