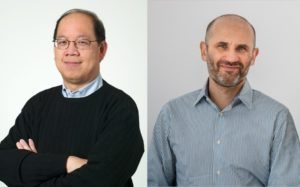

Aug. 13, 2020 – Over the next five years, the U.S. Department of Energy (DOE) will provide $57.5 million for two Scientific Discovery through Advanced Computing (SciDAC) Institutes: Frameworks, Algorithms, and Scalable Technologies for Mathematics (FASTMath) and Resource and Application Productivity through Computation, Information, and Data Science (RAPIDS2). Lawrence Berkeley National Laboratory (Berkeley Lab) staff will continue to hold leadership positions in both institutes, with Computational Research Division (CRD) Deputy Esmond Ng serving as director of FASTMath and CRD Senior Scientist Lenny Oliker as deputy director of RAPIDS2.

The SciDAC program brings together the nation’s top experts in domain science, applied math, and computer science to help address some of the most challenging problems of interest to DOE, ranging from climate science to accelerator physics. The SciDAC Institutes provide expertise and develop the computational tools that allow scientists to take full advantage of DOE’s state-of-the-art supercomputing resources when tackling those problems. In the next round of SciDAC funding, FASTMath will continue to be based at Berkeley Lab, and RAPIDS2 will be based at Argonne National Laboratory.

“FASTMath software has made significant impacts on a wide range of science and engineering problems across DOE, in academia, and in industry. We are currently interacting with 23 SciDAC-4 partnership projects helping them achieve high-fidelity simulations of real-world conditions,” said Ng. “Our collaborative work, both within FASTMath and with the SciDAC-4 RAPIDS Institute, has increased the efficiency of many of DOE’s core technologies.”

However, he notes that with the increasing use of low-cost, low-power accelerators such as GPUs, and the growing complexity of current and next-generation computer architectures, computational scientists will need to redesign their codes to use these systems more effectively.

“Domain scientists are eager to solve problems with ever-increasing fidelity, or realism, to pursue new physics, including the effective and accurate coupling of behaviors across multiple scales of time and space, and to quantify the uncertainty in their computation. They want to determine how to employ machine learning to more completely address their modeling needs and simulation workflows,” said Ng.

To that end, the FASTMath team, which includes five DOE laboratories and five universities, will use this new round of SciDAC funding to develop new mathematical techniques, numerical algorithms, and machine learning expertise for solving multiphysics, multiscale, and multiresolution problems on increasingly complex computer architectures as the world moves into the age of exascale computing.

The RAPIDS2 Institute will also assist DOE Office of Science application teams in overcoming computer science, data, and artificial intelligence challenges in using DOE supercomputing resources to achieve scientific breakthroughs. Oliker notes that this SciDAC Institute will focus on assisting application teams in four areas: data understanding, platform readiness, scientific data management, and artificial intelligence. By bringing mature technologies in these areas to bear and adapting them to new science requirements and platforms, the team will enable scientific advances across the SciDAC portfolio.

“RAPIDS2 members have a long track record of successful collaborations across large-scale SciDAC projects, including the recent contribution to 25 SciDAC-4 Partnership applications. Our engagement in the forthcoming Institute will develop and deploy key technologies that will help maximize scientific impact on the latest generation of high-end computing platforms at the DOE,” said Oliker.

Total planned funding is $57.5 million over five years, with $11.5 million in Fiscal Year 2020 dollars and outyear funding contingent on congressional appropriations.

Read more about SciDAC-5: https://www.energy.gov/articles/department-energy-provide-575-million-science-computing-teams

About Computational Research at Berkeley Lab

The Computational Research Division conducts research and development in mathematical modeling and simulation, algorithm design, data storage, management and analysis, computer system architecture and high-performance software implementation. We collaborate directly with scientists across LBNL, the Department of Energy and industry to solve some of the world’s most challenging computational and data management and analysis problems in a broad range of scientific and engineering fields, including materials science, biology, climate modeling, astrophysics, fusion science, and many others. We also develop advanced capabilities for scientific data understanding through visualization and analytics. We perform research in high-performance computing (HPC) technology for extreme-scale computing systems, including research into performance optimization, performance analysis, benchmarking, and performance engineering of scientific applications, compilers, operating systems, and runtime systems. We also perform research in cloud computing, workflow tools, scheduling, computational frameworks and distributed systems. Our products range from peer-reviewed scientific publications to scientific research codes to end-to-end computational and data analysis capabilities that enable scientists and engineers to address complex and large-scale technical challenges.

Source: Linda Vu, Computational Research at Berkeley Lab