July 11, 2019 — The move toward cleaner, cheaper energy would be much easier if we had more powerful, safer battery technologies. Carnegie Mellon University (CMU) scientists have used the XSEDE-allocated Bridges system at the Pittsburgh Supercomputing Center (PSC) and Comet at the San Diego Supercomputer Center (SDSC) to simulate new battery component materials that are inherently safer and more powerful than currently possible.

Why It’s Important:

Better batteries might not make all our energy problems vanish. But they’d be a really good start. Companies as varied as Tesla, Chevrolet, Jaguar and Audi have begun selling electric cars. This promises a generation of vehicles that run on whatever technology at a given time provides the most economical—and cleanest—electricity. But the performance of today’s batteries falls short for use in larger vehicles, such as trucks and aircraft. By the same token, wind and, increasingly, solar power are becoming important actors in the U.S. energy grid. But they’d be far more cost-efficient if we could store the peak power they generate during the day, so that it can be used whenever needed. Again, today’s batteries aren’t quite up to it.

“Many companies are moving toward personal vehicles being electrified. Moving to larger vehicles such as trucks or aviation requires a higher energy density; and as we approach higher energy densities, the technical problems become bigger,” said Gregory Houchins, CMU.

There’s a gap between what today’s battery technology can do and what’s needed for these transformations. Batteries’ ability to store energy—their “energy density”—has to increase, and it has to happen without risk of fires, as seen in some devices. It would also be nice if these batteries didn’t contain so much cobalt, which is found in very few parts of the world and so increases their cost.

“We’re trying to find new solid electrolytes that can conduct ions [as] quickly as [today’s] liquid electrolytes, which are flammable. And we need anodes with a very high energy density,” said Zeeshan Ahmad, CMU.

Graduate students Zeeshan Ahmad and Gregory Houchins, working in the CMU lab of Assistant Professor Venkat Viswanathan, have been pursuing different avenues in their group’s quest to find safer, more powerful solid-state lithium batteries. To do this, they turned to simulations and machine learning on two XSEDE-allocated systems: Bridges at PSC and Comet at SDSC.

How XSEDE Helped:

Houchins worked on the problem of finding cathodes—the positive pole of a battery—that contain lower amounts of expensive cobalt. He wrote software that randomly explores different cathode compositions and tests their efficiencies using the known properties of each simulated material’s components. This would have been a problem on a traditional supercomputer. That’s because the two steps of generating a candidate material and simulating its properties require repeatedly refining the application. A traditional system would have forced him to perform both steps for each material and wait to see if they worked properly—or failed, in which case he had to start again.

“One thing I like about Bridges is the interactive feature … I’ve written a code that will sample the composition space randomly, and from that read-in try and find the best model. It’s all automated, and debugging is difficult to do [in a single submitted batch to a traditional supercomputer]. I’m able to use the interactive mode to quickly debug that code,” said Gregory Houchins, CMU.

Bridges, though, emphasizes interactive access. That feature allowed Houchins to monitor the computation’s progress as it happened, and correct where needed. This helped him to debug quickly, wasting far less time. To date his software has identified over a dozen alternative cathodes predicted to perform as well as the high cobalt-containing materials now used in lithium batteries.
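The random composition search Houchins describes can be illustrated with a minimal Python sketch. This is not his software: the element set, the cobalt cap, and the `placeholder_score` function are all hypothetical stand-ins chosen only to show the sample-then-evaluate loop.

```python
import random

# Hypothetical element set for illustration; the article does not list the
# actual elements explored.
ELEMENTS = ("Ni", "Mn", "Co")


def sample_composition(rng, max_cobalt=0.1):
    """Draw random fractions for ELEMENTS that sum to 1, with Co <= max_cobalt."""
    while True:
        # Two sorted cut points on [0, 1] split the interval into three fractions.
        a, b = sorted((rng.random(), rng.random()))
        fractions = dict(zip(ELEMENTS, (a, b - a, 1.0 - b)))
        if fractions["Co"] <= max_cobalt:  # reject cobalt-rich samples
            return fractions


def placeholder_score(fractions):
    """Stand-in for a real property model. NOT a physical model."""
    return fractions["Ni"] - 0.5 * fractions["Mn"]


def random_search(n_samples, seed=0, max_cobalt=0.1):
    """Sample compositions at random and keep the best-scoring one."""
    rng = random.Random(seed)
    candidates = [sample_composition(rng, max_cobalt) for _ in range(n_samples)]
    return max(candidates, key=placeholder_score)


best = random_search(1000)
print(best)
```

In a real workflow the placeholder score would be replaced by a simulation or fitted model, which is the step where interactive debugging is most useful.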

Ahmad, meanwhile, was working on another problem. Batteries consist of a positive cathode, a negative anode, and an electrolyte that allows ions to flow between them. Lithium batteries are powerful and compact. But their liquid electrolytes are highly flammable. Also, dendrites (“little fingers” of metal) tend to form on the anode and reach toward the cathode, which further risks fire by short-circuiting the two electrodes. Dendrite growth also limits the lifetime of the battery.

“XSEDE has provided my group with the computational resources needed to tackle some of these very important problems,” said Venkat Viswanathan, CMU.

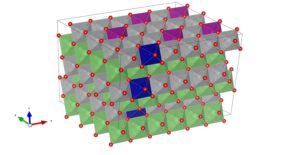

Ahmad and his collaborators used PSC’s Bridges and SDSC’s Comet to simulate non-flammable solid electrolytes that also wouldn’t allow dendrites to form on the anode. This project used machine learning, a type of artificial intelligence in which the computer learns patterns from examples with known answers and then uses those patterns to predict the properties of new candidates. The scientists screened almost 13,000 candidate solid electrolytes, finding 10 predicted to discourage dendrite formation. They reported these results in the journal ACS Central Science last year.

Both projects need further development—both will screen for more candidates, and the materials identified need to be made and tested in the real world to confirm they have the predicted properties. But they’ve taken the first steps in cracking the fundamental problems that limit battery storage.

Deeper Dive: Machine Learning on Multiple Systems

Zeeshan Ahmad’s project, which used machine learning to predict whether candidate electrolyte materials would be likely to allow dendrites to form, had two major components, each of which required a different computer architecture to run well. The first method, used by Ahmad’s coauthor Tian Xie of the Massachusetts Institute of Technology (MIT), was a convolutional neural network (CNN). CNNs consist of layers of analysis in which parts of the computation are programmed to behave roughly like nerve cells in the brain’s visual cortex, with each virtual “neuron” connecting with a set of neurons in the layers above and below it.

In the collaborators’ CNN, the neural network focused on two major properties of the materials: the shear modulus, or resistance against a shearing force, and the bulk modulus, or resistance against compression. These properties help determine whether dendrites will form in a battery using that material as the electrolyte. The CNN has good predictive power for these two properties because the training data are accurate and well established from first-principles calculations.
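To make the two quantities concrete, here is a minimal Python sketch of one common way to summarize them for a cubic crystal: the Voigt averages computed from the three independent elastic constants. The article does not say which averaging scheme the researchers used, so this is an assumption, and the input numbers are illustrative placeholders rather than data for any real material.

```python
def voigt_moduli_cubic(c11, c12, c44):
    """Voigt-average bulk and shear moduli of a cubic crystal.

    Inputs are the three independent elastic constants of a cubic crystal,
    all in the same units (for example GPa); outputs use those units too.
    """
    bulk = (c11 + 2.0 * c12) / 3.0          # resistance to uniform compression
    shear = (c11 - c12 + 3.0 * c44) / 5.0   # resistance to shearing
    return bulk, shear


# Illustrative placeholder constants, not measured values.
bulk, shear = voigt_moduli_cubic(c11=100.0, c12=50.0, c44=30.0)
print(f"bulk modulus: {bulk:.2f}, shear modulus: {shear:.2f}")
```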

But the candidate materials are anisotropic: their properties vary with direction. Because of this, the shear and bulk moduli alone are not enough to determine whether a material will form dendrites. To test the different anisotropic properties of each material, Ahmad used different regression techniques suited to the available data. The types of regression that worked best for these computations were gradient boosting and kernel ridge regression.
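For readers unfamiliar with kernel ridge regression, the following is a minimal pure-Python sketch of the technique on toy data. It is not the researchers' code: the Gaussian kernel, the `alpha` and `gamma` values, and the sine-curve training data are all assumptions made for illustration, and a production workflow would normally use an established library instead.

```python
import math


def rbf_kernel(x, y, gamma=2.0):
    """Gaussian (RBF) kernel between two feature vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def solve_linear_system(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(row) + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    x = [0.0] * n
    for row in range(n - 1, -1, -1):  # back substitution
        tail = sum(aug[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (aug[row][n] - tail) / aug[row][row]
    return x


def fit_kernel_ridge(features, targets, alpha=1e-3, gamma=2.0):
    """Return dual weights w solving (K + alpha * I) w = targets."""
    n = len(features)
    gram = [[rbf_kernel(features[i], features[j], gamma)
             + (alpha if i == j else 0.0)
             for j in range(n)] for i in range(n)]
    return solve_linear_system(gram, targets)


def predict_kernel_ridge(features, weights, query, gamma=2.0):
    """Predict as a kernel-weighted sum over the training points."""
    return sum(w * rbf_kernel(x, query, gamma)
               for w, x in zip(weights, features))


# Toy one-dimensional data (a sine curve), purely for illustration.
train_x = [[0.0], [0.5], [1.0], [1.5], [2.0]]
train_y = [math.sin(x[0]) for x in train_x]
weights = fit_kernel_ridge(train_x, train_y)
print(predict_kernel_ridge(train_x, weights, [0.75]))
```

The regularization term `alpha` trades a small training error for smoother predictions, which is useful when, as here, the amount of training data is limited.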

“We’re running calculations that have to store a lot of data on the quantum mechanical wavefunction of electron[s] in different systems … the stored data increase to the third power of the number of electrons in the system. Bridges’ high memory per core enables us to use just one node; in another system, we would have to use multiple nodes. Besides the data transfer reason, in conventional Slurm queuing systems, it would take more time for a multiple-node job to clear the queue,” said Zeeshan Ahmad, CMU.
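The cubic scaling Ahmad mentions is easy to underestimate, so here is a small Python sketch of the arithmetic. The reference job (8 GB for 100 electrons) is a hypothetical figure chosen for illustration; only the third-power scaling and the 128 GB node size come from the article.

```python
def scaled_memory_gb(reference_gb, reference_electrons, electrons):
    """Estimate memory assuming it grows as the cube of the electron count."""
    return reference_gb * (electrons / reference_electrons) ** 3


def fits_on_node(reference_gb, reference_electrons, electrons, node_memory_gb):
    """Check whether the estimated memory fits within a single node."""
    needed = scaled_memory_gb(reference_gb, reference_electrons, electrons)
    return needed <= node_memory_gb


# Hypothetical reference job: 8 GB for a 100-electron system.
for n_electrons in (100, 200, 400):
    needed = scaled_memory_gb(8.0, 100, n_electrons)
    ok = fits_on_node(8.0, 100, n_electrons, node_memory_gb=128.0)
    print(f"{n_electrons} electrons: ~{needed:.0f} GB, fits in 128 GB: {ok}")
```

Under this assumption, doubling the number of electrons multiplies the memory requirement by eight, which is why memory per node quickly becomes the limiting factor.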

GPUs, or graphics processing units, tend to make CNN computations run fastest. But the quantum mechanical calculations underlying the computation are extremely memory hungry. At the time, the available GPU resources would have been bottlenecked by data access. So each computation in effect required a different kind of computer.

The scientists ran the CNN on the GPU nodes in SDSC’s Comet, and the regression on the “regular memory” CPU nodes in PSC’s Bridges. The latter had enough memory (128 gigabytes, enough to qualify as large-memory on most HPC systems) to speed the regression and quantum mechanical computations. The two systems helped the scientists to run both computations quickly and efficiently. Since this phase of the research concluded, a new GPU-AI resource has been added to Bridges, including a DGX-2 node that will allow the entire workflow to run on a single system. The MIT group is continuing their research on this resource, which will make future such computations even faster and more efficient.

Source: Ken Chiacchia, Pittsburgh Supercomputing Center