July 26, 2019 — What should personalized, precision treatment of cancer look like in the future? We know that people are different, their tumors are different, and they respond differently to different therapies. Medical teams of the future might be able to create a “virtual twin” of a person and their tumor. Then, by tapping supercomputers, physician-led teams could simulate how tumor cells behave to test millions (or billions) of possible treatment combinations. Ultimately, the best combinations might offer clues towards a personalized, effective treatment plan.

Sound like wishful thinking? The first steps towards this vision have been undertaken by a multi-institution research collaboration that includes Jonathan Ozik and Nicholson Collier, computational scientists at the U.S. Department of Energy’s Argonne National Laboratory.

The research team, which includes collaborators at Indiana University and the University of Vermont Medical Center, brought the power of high-performance computing to the thorny challenge of improving cancer immunotherapy. Using two supercomputers, one at Argonne and one at the University of Chicago, the team found that high-performance computing can yield clues for fighting cancer, as described in a June 7 article in Molecular Systems Design and Engineering.

Standing up to cancer

Cancer immunotherapy is a promising treatment that harnesses a patient’s immune system to reduce or eliminate cancer cells. The therapy, however, helps only 10 to 20 percent of patients, partly because the ways in which cancer cells and immune cells interact are complex and poorly understood. Proven rules are scarce.

To begin uncovering the rules of immunotherapy, the team turned to a set of three tools:

- Agent-based modeling, which simulates the behavior of individual “agents” (in this case, cancer and immune cells)

- Argonne’s award-winning workflow technology to take full advantage of the supercomputers

- A guiding framework to explore models and dynamically direct and track results

The three tools operate in a hierarchy. At the top is the framework, Extreme-scale Model Exploration with Swift (EMEWS), developed by Ozik, Collier, Argonne colleagues, and Gary An, a surgeon and professor at the University of Vermont Medical Center. EMEWS oversees both the agent-based model and the workflow system, the Swift/T parallel scripting language developed at Argonne and the University of Chicago.

What is unique about this combination of tools? “We are helping more people in a variety of computational science fields to do large-scale experimentation with their models,” said Ozik, who — like Collier — holds a joint appointment at the University of Chicago. “Building a model is fun. But without supercomputers, it is difficult to really understand the full potential of how models can behave.”

Working smarter, not harder

The team sought to find simulated scenarios in which:

- No additional cancer cells grew

- 90 percent of cancer cells died

- 99 percent of cancer cells died

They found that no additional cancer cells grew in 19 percent of simulations, 9 in 10 cancer cells died in 6 percent of simulations, and 99 in 100 cancer cells died in about 2 percent of simulations.
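Each simulation can be scored against the three targets by comparing its final number of cancer cells with the starting number. The sketch that follows is a minimal illustration, not the study’s analysis code. It assumes that “no additional growth” means the final count does not exceed the starting count, and the function names `classify` and `target_rates` and the toy cell counts are hypothetical.

```python
# Hypothetical sketch: score simulations against the three targets.
# Assumption: "no growth" means final count <= initial count.
# The (initial, final) counts below are made up, not data from the study.

def classify(initial: int, final: int) -> dict[str, bool]:
    """Return which of the three targets a single simulation met."""
    return {
        "no_growth": final <= initial,          # no additional cancer cells
        "killed_90": final <= 0.10 * initial,   # at least 90 percent died
        "killed_99": final <= 0.01 * initial,   # at least 99 percent died
    }


def target_rates(runs: list[tuple[int, int]]) -> dict[str, float]:
    """Percentage of simulations that met each target."""
    counts = {"no_growth": 0, "killed_90": 0, "killed_99": 0}
    for initial, final in runs:
        for target, met in classify(initial, final).items():
            counts[target] += met  # True counts as 1, False as 0
    return {t: 100.0 * c / len(runs) for t, c in counts.items()}


# Four imaginary simulations, each starting with 1,000 cancer cells.
toy_runs = [(1000, 1500), (1000, 900), (1000, 80), (1000, 5)]
print(target_rates(toy_runs))
# → {'no_growth': 75.0, 'killed_90': 50.0, 'killed_99': 25.0}
```

Note that the targets are nested: any run that kills 99 percent of cancer cells also meets the other two, which is consistent with the percentages shrinking from 19 to 6 to about 2.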

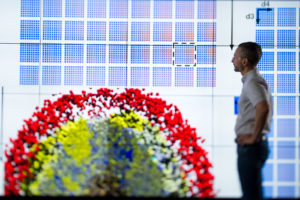

The team began with an agent-based model, built with the PhysiCell framework, designed by Indiana University’s Paul Macklin to explore cancer and other diseases. They assigned each cancer and immune cell characteristics — birth and death rates, for example — that govern their behavior and then let them loose.
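To make the idea of “agents with rules” concrete, the sketch that follows is a deliberately tiny, non-spatial agent-based model written in Python. It is not PhysiCell, which is a far richer C++ framework. The parameter names (`birth_rate`, `death_rate`, `kill_prob`), their values, and the rule that each immune cell attacks one random cancer cell per step are all illustrative assumptions.

```python
# Hypothetical toy agent-based model (not PhysiCell). Each cancer cell is an
# agent with its own birth and death probabilities; immune cells may kill
# cancer cells. All names and values are illustrative assumptions.
import random
from dataclasses import dataclass


@dataclass
class CancerCell:
    birth_rate: float  # probability of dividing in one time step
    death_rate: float  # probability of dying on its own in one time step


def step(cells: list[CancerCell], n_immune: int, kill_prob: float,
         rng: random.Random) -> list[CancerCell]:
    """Advance the toy model by one time step."""
    survivors = []
    for cell in cells:
        if rng.random() < cell.death_rate:
            continue  # natural death
        survivors.append(cell)
        if rng.random() < cell.birth_rate:
            survivors.append(CancerCell(cell.birth_rate, cell.death_rate))
    # Each immune cell attacks one random cancer cell, killing it with kill_prob.
    for _ in range(n_immune):
        if survivors and rng.random() < kill_prob:
            survivors.pop(rng.randrange(len(survivors)))
    return survivors


def run(n_cancer: int, n_immune: int, kill_prob: float, steps: int,
        seed: int) -> int:
    """Return the number of cancer cells left after `steps` time steps."""
    rng = random.Random(seed)
    cells = [CancerCell(birth_rate=0.05, death_rate=0.01) for _ in range(n_cancer)]
    for _ in range(steps):
        cells = step(cells, n_immune, kill_prob, rng)
    return len(cells)


print(run(n_cancer=100, n_immune=20, kill_prob=0.5, steps=50, seed=1))
```

Because every decision is a random draw, the same parameters give different outcomes under different seeds, which is the source of the noise the researchers describe.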

“We use agent-based modeling to address many problems,” said Ozik. “But these models are often computationally intensive and produce a lot of random noise.”
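One common way to cope with that noise is to judge each parameter set by many replicate runs with different random seeds rather than by a single run. The sketch that follows assumes a hypothetical `noisy_model` whose outcome is the true effect plus Gaussian noise. It stands in for an expensive stochastic simulation and does not describe how the study handled replicates.

```python
# Hypothetical sketch: average replicate runs of a stochastic model.
# `noisy_model` is a stand-in assumption, not the PhysiCell model.
import random
import statistics


def noisy_model(kill_prob: float, seed: int) -> float:
    """Toy outcome: fraction of cancer cells killed, plus random noise."""
    rng = random.Random(seed)
    return min(1.0, max(0.0, kill_prob + rng.gauss(0.0, 0.1)))


def evaluate(kill_prob: float, replicates: int = 30) -> tuple[float, float]:
    """Mean and sample standard deviation across replicate seeds."""
    results = [noisy_model(kill_prob, seed) for seed in range(replicates)]
    return statistics.mean(results), statistics.stdev(results)


mean, sd = evaluate(0.6)
print(f"mean fraction killed = {mean:.3f}, sd = {sd:.3f}")
```

Each replicate multiplies the cost of evaluating one parameter set, which is one reason these models are computationally intensive.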

Exploring every possible scenario within the PhysiCell model would have been impractical. “You can’t cover the entire model’s possible behavior space,” said Collier. So the team needed to work smarter, not harder.
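A back-of-the-envelope calculation shows why exhaustive search breaks down: a grid with k values for each of d parameters needs k^d parameter sets, and each set may need several replicates. The numbers in the sketch that follows (6 parameters, 10 values each, 10 replicates, 30 minutes per run on one core) are hypothetical, chosen only for illustration.

```python
# Hypothetical cost of an exhaustive grid search; all inputs are assumptions.
def grid_cost(n_params: int, values_per_param: int, replicates: int,
              minutes_per_run: float) -> tuple[int, float]:
    """Return (total runs, total core-years) assuming one core per run."""
    runs = values_per_param ** n_params * replicates
    core_years = runs * minutes_per_run / (60 * 24 * 365)
    return runs, core_years


runs, years = grid_cost(n_params=6, values_per_param=10, replicates=10,
                        minutes_per_run=30)
print(f"{runs:,} runs, about {years:,.0f} core-years")
# → 10,000,000 runs, about 571 core-years
```

Adding one more parameter at the same resolution multiplies the cost by another factor of 10.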

The team relied on two machine learning approaches, genetic algorithms and active learning, to guide the exploration of the PhysiCell model and find the parameters that best controlled or killed the simulated cancer cells.

Genetic algorithms search for those ideal parameters by running the model with, say, 100 different parameter sets and measuring the results. The algorithm then repeats the process over many rounds, each time deriving new parameter values from the best-performing sets of the previous round. “The process allows you to find the best set of parameters quickly, without having to run every single combination,” said Collier.
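The loop described above (evaluate a population, keep the best performers, breed new candidates from them, repeat) can be sketched as a minimal genetic algorithm. This is an illustration under stated assumptions, not the operators or settings used in the study: `score` is a hypothetical stand-in for running the simulation and measuring tumor control, and the population size, elite fraction, uniform crossover, and Gaussian mutation are generic textbook choices.

```python
# Minimal genetic-algorithm sketch. `score` is a hypothetical stand-in for
# running the simulation; all settings are illustrative assumptions.
import random

N_PARAMS = 4      # hypothetical number of model parameters, each in [0, 1]
POP_SIZE = 100    # simulations per generation ("say, 100")
GENERATIONS = 30


def score(params: list[float]) -> float:
    """Toy objective (higher is better), with its optimum at 0.7 everywhere."""
    return -sum((p - 0.7) ** 2 for p in params)


def evolve(seed: int = 0) -> list[float]:
    rng = random.Random(seed)
    population = [[rng.random() for _ in range(N_PARAMS)] for _ in range(POP_SIZE)]
    for _ in range(GENERATIONS):
        # Evaluate every candidate and keep the best-performing fifth as parents.
        ranked = sorted(population, key=score, reverse=True)
        parents = ranked[: POP_SIZE // 5]
        children = []
        while len(children) < POP_SIZE - len(parents):
            a, b = rng.sample(parents, 2)
            # Uniform crossover: each parameter is taken from one of two parents.
            child = [rng.choice(pair) for pair in zip(a, b)]
            # Gaussian mutation, clipped to the valid range [0, 1].
            child = [min(1.0, max(0.0, p + rng.gauss(0.0, 0.05))) for p in child]
            children.append(child)
        population = parents + children
    return max(population, key=score)


best = evolve()
print([round(p, 2) for p in best])
```

Keeping the parents in the next generation (elitism) guarantees the best score never gets worse, while mutation keeps the search from stalling on early winners. The whole run costs 3,000 evaluations instead of a full grid.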

Active learning is different. It also repeatedly simulates the model, but as it does, it tries to identify regions of parameter space where further sampling would be most informative, in order to build a full picture of what works and what doesn’t. In other words, it looks for “where you can sample to get the best bang for your buck,” said Ozik.
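One simple form of active learning is uncertainty sampling: fit a cheap surrogate model to the simulations run so far, then run next the candidate whose outcome the surrogate is least sure about. The sketch that follows makes several assumptions that are not from the study: `simulate` is a toy rule that labels a two-parameter point as a success when x + y > 1.2, and a k-nearest-neighbour vote stands in for whatever learner the team actually used.

```python
# Minimal active-learning sketch (uncertainty sampling). `simulate` and the
# k-nearest-neighbour surrogate are illustrative assumptions.
import math
import random


def simulate(point: tuple[float, float]) -> bool:
    """Toy stand-in for an expensive simulation: success when x + y > 1.2."""
    x, y = point
    return x + y > 1.2


def success_prob(point, labeled, k=4):
    """Surrogate model: share of successes among the k nearest labeled points."""
    nearest = sorted(labeled, key=lambda item: math.dist(point, item[0]))[:k]
    return sum(label for _, label in nearest) / len(nearest)


def active_learning(budget=40, k=4, seed=0):
    rng = random.Random(seed)
    pool = [(rng.random(), rng.random()) for _ in range(500)]  # candidate parameter pairs
    initial, pool = pool[:10], pool[10:]
    labeled = [(p, simulate(p)) for p in initial]  # a few random runs to start
    queried = []
    for _ in range(budget):
        # Run the candidate whose predicted outcome is closest to a coin flip,
        # i.e. where one more simulation is expected to be most informative.
        query = min(pool, key=lambda p: abs(success_prob(p, labeled, k) - 0.5))
        pool.remove(query)
        labeled.append((query, simulate(query)))
        queried.append(query)
    return labeled, queried


labeled, queried = active_learning()
gap = sum(abs(x + y - 1.2) for x, y in queried) / len(queried)
print(f"mean |x + y - 1.2| of queried points: {gap:.3f}")
```

The queried points tend to cluster near the boundary between success and failure, which is where extra simulations sharpen the picture of “what works and what doesn’t” the most.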

Meanwhile, Argonne’s EMEWS acted like a conductor, signaling the genetic and active learning algorithms at the right times and coordinating the large number of simulations on Argonne’s Bebop cluster in its Laboratory Computing Resource Center, as well as on the University of Chicago’s Beagle supercomputer.
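The “conductor” role can be pictured as a loop with three steps: an exploration algorithm proposes a batch of parameter sets, a pool of workers runs the simulations in parallel, and the results flow back to the algorithm. The sketch that follows illustrates only that pattern. It is not the EMEWS or Swift/T API. A local `ThreadPoolExecutor` stands in for thousands of cluster cores, `simulate` is a toy objective, and `LocalRandomSearch` is a hypothetical, deliberately simple exploration algorithm.

```python
# Hypothetical sketch of the propose / evaluate-in-parallel / update loop.
# Not the EMEWS or Swift/T API; all names here are illustrative.
import random
from concurrent.futures import ThreadPoolExecutor


def simulate(params: tuple[float, float]) -> float:
    """Toy stand-in for one simulation run; score to maximize, best at (0.3, 0.8)."""
    x, y = params
    return -((x - 0.3) ** 2 + (y - 0.8) ** 2)


def _clip(v: float) -> float:
    return min(1.0, max(0.0, v))


class LocalRandomSearch:
    """Hypothetical exploration algorithm: sample new points around the best so far."""

    def __init__(self, seed: int = 0):
        self.rng = random.Random(seed)
        self.best = (0.5, 0.5)
        self.best_score = float("-inf")

    def propose(self, n: int, width: float) -> list[tuple[float, float]]:
        bx, by = self.best
        return [(_clip(bx + self.rng.uniform(-width, width)),
                 _clip(by + self.rng.uniform(-width, width)))
                for _ in range(n)]

    def update(self, params: list[tuple[float, float]], scores: list[float]) -> None:
        for p, s in zip(params, scores):
            if s > self.best_score:
                self.best, self.best_score = p, s


def conduct(rounds: int = 20, batch: int = 32, workers: int = 8):
    algo = LocalRandomSearch()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for r in range(rounds):
            width = 0.5 * 0.8 ** r                     # narrow the search each round
            params = algo.propose(batch, width)        # 1. algorithm proposes a batch
            scores = list(pool.map(simulate, params))  # 2. workers run it in parallel
            algo.update(params, scores)                # 3. results flow back
    return algo.best, algo.best_score


best, best_score = conduct()
print(best, best_score)
```

Separating the exploration algorithm from the code that runs simulations means a genetic algorithm or an active learner could be plugged into the same loop. Threads are used here only to keep the sketch simple; for CPU-bound work on one machine, `ProcessPoolExecutor` would give real parallelism.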

Moving beyond medicine

The research team is applying similar approaches to challenges across different cancer types, including colon, breast and prostate cancer.

Argonne’s EMEWS framework can offer insights in areas beyond medicine. Indeed, Ozik and Collier are currently using the system to explore the complexities of rare earth metals and their supply chains. “With this new approach, researchers can use agent-based modeling in more scientifically robust ways,” said Collier.

The team also included Randy Heiland, a senior systems analyst and programmer at Indiana University. Argonne researchers were funded by the National Institutes of Health.

About Argonne National Laboratory

Argonne National Laboratory seeks solutions to pressing national problems in science and technology. The nation’s first national laboratory, Argonne conducts leading-edge basic and applied scientific research in virtually every scientific discipline. Argonne researchers work closely with researchers from hundreds of companies, universities, and federal, state and municipal agencies to help them solve their specific problems, advance America’s scientific leadership and prepare the nation for a better future. With employees from more than 60 nations, Argonne is managed by UChicago Argonne, LLC for the U.S. Department of Energy’s Office of Science.

About the U.S. Department of Energy’s Office of Science

The U.S. Department of Energy’s Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science.

Source: Dave Bukey, Argonne National Laboratory