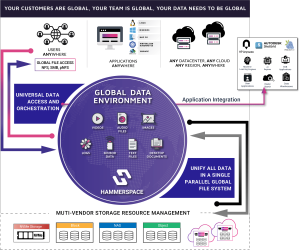

SAN MATEO, Calif., Nov. 11, 2022 — Hammerspace, a pioneer of the global data environment, has unveiled its latest performance capabilities. With Release 5, Hammerspace delivers the performance needed to free workloads from data silos, eliminate copy proliferation, and provide direct data access to applications and users, no matter where the data is stored. Hammerspace allows organizations to take full advantage of the performance capabilities of any server, storage system and network anywhere in the world. This capability enables a unified, fast, and efficient global data environment for the entire workflow, from data creation to processing, collaboration, and archiving across edge devices, data centers, and public and private clouds.

1. High-Performance Across Data Centers and to the Cloud: Saturate the Available Internet or Private Links

Instruments, applications, compute clusters and the workforce are increasingly decentralized. With Hammerspace, all users and applications have globally shared, secure access to all data, no matter which storage platform or location it is on, as if it were all on a local NAS.

Hammerspace overcomes data gravity to make remote data fast to use locally. Modern data architectures require data to be placed as close as possible to the user or application to meet its latency and performance requirements. Hammerspace’s Parallel Global File System orchestrates data automatically and by policy, placing it locally in advance so that users and applications do not waste time waiting for it to arrive. And data placement is fast: using dual 100GbE networks, Hammerspace can intelligently orchestrate data to where it is needed at 22.5GB/second. At this performance level, workflow automation can orchestrate data in the background, file by file and by policy, so work can begin as soon as the first file is transferred rather than after the entire data set has been moved locally.
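As a rough sanity check on the 22.5GB/second figure, assuming decimal units and the links' raw line rate (protocol overhead ignored), dual 100GbE links carry:

$$2 \times 100\ \text{Gbit/s} = 200\ \text{Gbit/s} = \frac{200}{8}\ \text{GB/s} = 25\ \text{GB/s}, \qquad \frac{22.5\ \text{GB/s}}{25\ \text{GB/s}} = 0.90$$

so the quoted orchestration rate corresponds to roughly 90 percent of the links' nominal capacity.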

Unstructured data workloads in the cloud can use as many compute cores as are allocated and as much bandwidth as the job needs, even saturating the in-cloud network that connects the compute environment with applications when desired. A recent analysis of EDA workloads in Microsoft Azure showed that Hammerspace scales performance linearly, taking full advantage of the network configuration available in Azure. This high-performance cloud file access is necessary for compute-intensive use cases, including processing genomics data, rendering visual effects, training machine learning models and implementing high-performance computing architectures in the cloud.

High-performance enhancements across data centers and to the cloud in the Release 5 software include:

- Support for Backblaze, Zadara, and Wasabi.

- Continual system-wide optimization to increase scalability, improve back-end performance, and improve resilience in very large, distributed environments.

- New Hammerspace Management GUI, with user-customizable tiles, better administrator experience, and increased observability of activity within shares.

- Increased scale: the number of Hammerspace clusters supported in a single global data environment doubles from 8 to 16 locations.

2. High-Performance Across Interconnect within the Data Center: Saturate Ethernet or InfiniBand Networks within the Data Center

Data centers need massive performance to ingest data from instruments and large compute clusters. Hammerspace reduces the friction between resources so organizations can get the most out of both their compute and storage environments, cutting the idle time spent waiting for data to be ingested into storage.

Hammerspace supports a wide range of high-performance storage platforms that organizations have in place today. The power of the Hammerspace architecture is its ability to saturate even the fastest storage and network infrastructures, orchestrating direct I/O and scaling linearly across otherwise incompatible platforms to maximize aggregate throughput and IOPS. It does this while providing the performance of a parallel file system coupled with the ease of standards-based global NAS connectivity and out-of-band metadata updates.

In one recent test with moderately sized server configurations deploying just 16 DSX nodes, the Hammerspace file system used the full storage performance to reach 1.17 Tbits/second, the maximum throughput the NVMe storage could handle, with 32KB file sizes and low CPU utilization. The tests demonstrated that performance would scale linearly to extreme levels if additional storage and networking were added.
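Dividing the aggregate result evenly across the 16 DSX nodes gives an approximate per-node figure. This assumes decimal units and an even split of load, which the test description does not state:

$$\frac{1.17\ \text{Tbit/s}}{16} \approx 0.0731\ \text{Tbit/s} \approx 73.1\ \text{Gbit/s per node}, \qquad 1.17\ \text{Tbit/s} = \frac{1170}{8}\ \text{GB/s} \approx 146\ \text{GB/s aggregate}$$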

High-performance enhancements across the interconnect within the data center in the Release 5 software include:

- 20 percent increase in metadata performance to accelerate file creation in primary storage use cases.

- Accelerated collaboration on shared files in high client count environments.

- RDMA support for global data over NFS v4.2, combining high performance with the simplicity and open standards of NAS protocols for all data in the global data environment, no matter where it is located.

3. High-Performance Server-Local I/O: Deliver Near-Theoretical Maximum I/O Subsystem Performance to Applications on Cloud Instances, VMs, and Bare Metal Servers

High-performance use cases, edge environments and DevOps workloads all benefit from using the full performance of the local server. Hammerspace takes full advantage of the underlying infrastructure, delivering 73.12 Gbits/sec from a single NVMe-based server: nearly the same performance through the file system as the same server hardware achieves with direct-to-kernel access.

The Hammerspace Parallel Global File System architecture separates the metadata control plane from the data path and can use embedded parallel file system clients with NFS v4.2 in Linux, resulting in minimal overhead in the data path.

For servers running at the edge, Hammerspace elegantly handles situations where edge or remote sites become disconnected. Because file metadata is global across all sites, local reads and writes continue until the site reconnects, at which point the metadata synchronizes with the rest of the global data environment.

“Technology typically follows a continuum of incremental advancements over previous generations. But every once in a while, a quantum leap forward is taken with innovation that changes paradigms,” commented Hammerspace CEO David Flynn. “Another paradigm shift is upon us to create high-performance global data architectures incorporating instruments and sensors, edge sites, data centers, and diverse cloud regions.”

About Hammerspace

Hammerspace delivers a Global Data Environment that spans on-prem data centers and public cloud infrastructure, enabling the decentralized cloud. With origins in Linux, NFS, open standards, flash and deep file system and data management technology leadership, Hammerspace delivers the world’s first and only solution to connect global users with their data and applications, on any existing data center infrastructure or public cloud services including AWS, Google Cloud, Microsoft Azure and Seagate Lyve Cloud.

Source: Hammerspace