June 29 — When most people think of a supercomputer center, they may think of one massive computer performing a single task. Inside the data center at the San Diego Supercomputer Center (SDSC) at the University of California San Diego, however, there are several large supercomputer systems, each performing multiple tasks simultaneously across a wide range of science domains that include genome sequencing to help pave the way to personalized medical treatment, coming up with new drug designs for conditions such as Parkinson’s and Alzheimer’s disease, or creating detailed fluid dynamics simulations for hypersonic aircraft.

Keeping SDSC’s main data center cool enough that its Comet and Gordon supercomputers, along with smaller clusters, don’t overheat is a complex yet mission-critical task, according to Todor Milkov, SDSC’s senior project engineer. A computing architecture such as the one found in Comet, SDSC’s newest supercomputer, requires one megawatt of power to operate. Using that much electricity generates a tremendous amount of heat, so SDSC, with the help of outside experts, developed three cooling system prototypes and conducted research to determine which was the most efficient.
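To put the one-megawatt figure in cooling terms, the short sketch below converts an electrical load into the units commonly used to size air conditioning. It assumes that essentially all of the power drawn ends up as heat in the room; the function name is illustrative and the conversion factors are standard unit definitions, not SDSC data.

```python
# Rough unit conversion for a data center heat load.
# Assumption: all electrical power drawn by the system ends up as heat.

BTU_PER_HOUR_PER_WATT = 3.412142      # 1 W = 3.412142 BTU/hr
WATTS_PER_TON_OF_COOLING = 3516.85    # 1 ton of refrigeration = 12,000 BTU/hr


def cooling_load(power_watts: float) -> dict:
    """Return the heat load in BTU/hr and in tons of refrigeration."""
    return {
        "btu_per_hour": power_watts * BTU_PER_HOUR_PER_WATT,
        "tons": power_watts / WATTS_PER_TON_OF_COOLING,
    }


if __name__ == "__main__":
    load = cooling_load(1_000_000)  # the one-megawatt figure quoted for Comet
    print(f"{load['btu_per_hour']:,.0f} BTU/hr, "
          f"about {load['tons']:.0f} tons of cooling")
```

Under that assumption, one megawatt works out to roughly 3.4 million BTU per hour, or on the order of 284 tons of refrigeration, before accounting for any other equipment in the room.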

Each prototype system was designed using vendor-specific technology to control five air handlers, which served as a baseline for evaluating system performance. One of the prototypes used wireless temperature sensors that read the hot and cold aisle temperatures only once every three minutes, an interval chosen to extend battery life.

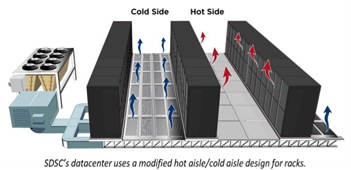

Many data centers use a standard hot aisle/cold aisle design. This design involves lining up server racks in alternating rows, with cold air intakes facing one way and hot air exhausts facing the other. The rows composed of rack fronts are called cold aisles. Typically, cold aisles face air conditioner output ducts. The rows that the heated exhausts pour into are called hot aisles. Typically, hot aisles face air conditioner return ducts.

Containment systems can help isolate hot aisles and cold aisles from each other and prevent hot and cold air from mixing. Such systems started out as physical barriers that simply separated the hot and cold aisles with vinyl plastic sheeting or Plexiglas covers. Modern containment systems offer plenums and other commercial options that combine containment with variable-frequency drives (VFDs) on the fans to keep the two air streams apart.

At SDSC, however, the entire area under the raised floor is used for the supply plenum, and the entire area above the ceiling is for the return plenum. Cold aisles use perforated floor tiles with specifically designed hole sizes to control the air flow volume from the space below the floor, while the hot aisles use ceiling grates that allow heated air to enter the space above the ceiling.
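For a sense of how much air has to move from the supply plenum to the return plenum, the sketch below applies the standard sensible-heat relation: airflow equals heat load divided by the product of air density, specific heat, and the temperature rise across the equipment. The air properties are textbook values for room-temperature air, and the 11 K rise between cold and hot aisles is a hypothetical figure chosen for illustration, not a measurement from SDSC.

```python
# Estimate the airflow needed to carry away a given heat load.
# Assumptions (not from the article): air density, specific heat,
# and the cold-aisle to hot-aisle temperature rise.

AIR_DENSITY_KG_PER_M3 = 1.2          # approximate, near 20 °C at sea level
AIR_SPECIFIC_HEAT_J_PER_KG_K = 1005  # approximate, dry air
CFM_PER_M3_PER_S = 2118.88           # cubic feet per minute in 1 m^3/s


def required_airflow_m3_per_s(heat_watts: float, delta_t_kelvin: float) -> float:
    """Volumetric airflow that absorbs heat_watts with a delta_t_kelvin rise."""
    if delta_t_kelvin <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_watts / (
        AIR_DENSITY_KG_PER_M3 * AIR_SPECIFIC_HEAT_J_PER_KG_K * delta_t_kelvin
    )


if __name__ == "__main__":
    flow = required_airflow_m3_per_s(1_000_000, delta_t_kelvin=11.0)
    print(f"{flow:.1f} m^3/s, about {flow * CFM_PER_M3_PER_S:,.0f} CFM")
```

With these assumed values, a one-megawatt load needs roughly 75 cubic meters of air per second. Halving the temperature rise doubles the required airflow, which is one reason tile perforation sizes and fan speeds matter so much.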

Controlling the air flow from all air handlers discharging into one common plenum presents a difficult problem, especially since these spaces also contain obstructions such as pipes and conduits. Moreover, not all of the compute clusters run at full capacity at any given time, and system loads also change regularly as research projects start up or stop. These constantly changing factors cause the amount of heat dissipated from the supercomputer systems to fluctuate from minute to minute. The data center cooling system has to quickly adjust to accommodate these fluctuations in temperature.

“We learned a lot during the prototype and research phase of the cooling system design,” said Milkov. “We started by collecting a lot of data on how air flowed through the data center. We found that three minutes between temperature readings was too long an interval to keep the data center within the desired temperature ranges. Because of the longer interval, we used more electricity bringing the data center back to its temperature set points than we needed if we took temperature readings over shorter intervals and could make changes to the cooling system sooner.”
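The effect Milkov describes can be illustrated with a toy simulation. The sketch below is not SDSC's or Opto 22's control logic. It models a room as a single thermal mass, applies a sudden increase in heat load, and lets a simple proportional controller adjust cooling only when a new temperature reading arrives. Every number in it (thermal mass, controller gain, load sizes, setpoint) is a hypothetical value picked to make the behavior visible.

```python
# Toy model: how the interval between temperature readings affects how far
# the room drifts from its setpoint after a load change.
# All parameters below are hypothetical and chosen only for illustration.

def peak_deviation(sample_interval_s: int, duration_s: int = 3600) -> float:
    """Return the largest temperature deviation (K) from the setpoint."""
    setpoint_c = 24.0
    heat_capacity_j_per_k = 6.0e7   # lumped thermal mass of the room
    gain_w_per_k = 5.0e5            # extra cooling per kelvin of error
    base_load_w = 6.0e5             # load the cooling already balances
    load_step_w = 3.0e5             # extra heat from jobs starting at t = 0

    temp_c = setpoint_c
    cooling_w = base_load_w
    peak = 0.0
    for t in range(duration_s):
        if t % sample_interval_s == 0:
            # The controller only sees the temperature at sample times and
            # holds its output constant until the next reading.
            cooling_w = base_load_w + gain_w_per_k * (temp_c - setpoint_c)
        load_w = base_load_w + load_step_w
        # One-second Euler step of the heat balance.
        temp_c += (load_w - cooling_w) / heat_capacity_j_per_k
        peak = max(peak, abs(temp_c - setpoint_c))
    return peak


if __name__ == "__main__":
    for interval in (10, 60, 180):
        print(f"{interval:>3} s between readings: "
              f"peak deviation {peak_deviation(interval):.2f} K")
```

In this simplified model the room drifts noticeably further from its setpoint with three-minute readings than with ten-second readings, because the controller spends the whole interval reacting to stale data. The model says nothing about actual energy use, and a proportional-only controller leaves a steady offset that a production system would correct.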

Realizing that a different approach was needed, Milkov put together a vendor evaluation process for an updated data center management system with the objective of reducing energy use while increasing the level of control capability available to the SDSC operations staff.

After extensive research, Milkov selected three companies for prototype installations. At the conclusion of a detailed evaluation, systems integration company Earth Base One (EBO) Corporation and a SNAP PAC-based control system were chosen for providing extensive control capabilities and energy savings.

Milkov and Michael Hyde, EBO’s president, approached the project with the same vision. “Rather than adapting an off-the-shelf data center management system to SDSC, we designed a tailor-built system for SDSC’s unique challenges,” said Hyde.

Opto 22, which develops and manufactures hardware and software products for applications in industrial automation, remote monitoring, and data acquisition, was chosen as the primary controls manufacturer. “The Opto 22 hardware and software not only won the competition for control and energy savings, but was also the least expensive vendor solution,” said Hyde. “The software’s excellent historical data collection and trending abilities allowed SDSC engineers to continue improving the system based on real data.”

“We appreciated the outstanding technical support SDSC received from Opto 22 during our design and prototype phase,” said Milkov. “When you’re trying to protect millions of dollars’ worth of research, you need a control system you can rely on.”

The full case study is available here.

About SDSC

As an Organized Research Unit of UC San Diego, SDSC is considered a leader in data-intensive computing and cyberinfrastructure, providing resources, services, and expertise to the national research community, including industry and academia. Cyberinfrastructure refers to an accessible, integrated network of computer-based resources and expertise, focused on accelerating scientific inquiry and discovery. SDSC supports hundreds of multidisciplinary programs spanning a wide variety of domains, from earth sciences and biology to astrophysics, bioinformatics, and health IT. SDSC’s Comet joins the Center’s data-intensive Gordon cluster, and both are part of the National Science Foundation’s XSEDE (eXtreme Science and Engineering Discovery Environment) program, the most advanced collection of integrated digital resources and services in the world.

About Opto 22

Opto 22 develops and manufactures hardware and software products for applications in industrial automation, remote monitoring, and data acquisition. Using standard, commercially available Internet, networking, and computer technologies, Opto 22’s input/output and control systems allow customers to monitor, control, and acquire data from all of the mechanical, electrical, and electronic assets that are key to their business operations. More information is at www.opto22.com.

Source: SDSC