May 12, 2023 — The Message Passing Interface (MPI) is recognized as the ubiquitous communications framework for scalable, distributed high-performance computing (HPC). Created in 1994,[1] MPI is arguably the core building block of distributed HPC. According to an Exascale Computing Project (ECP) estimate, more than 90% of the ECP codes use MPI, either directly or indirectly.[2] As in a chicken-and-egg relationship, where MPI goes, so goes HPC, and vice versa.

David Bernholdt, principal investigator of the ECP OMPI-X project and distinguished R&D staff at ORNL, observed, “Programmers who wish to use MPI in a threaded, heterogeneous environment need to understand how the updates to the MPI standard address multithreading performance bottlenecks in MPI libraries prior to the MPI 4.0 standard. Due to the efforts of many, including the OMPI-X team, programmers can now use many of these performance enhancements in the latest release of Open MPI.”

The scientific computing community’s increasing need for HPC has driven continued growth in hardware scale and heterogeneity. These innovations have placed unforeseen demands on the legacy MPI communications standard and library implementations. Many novel approaches have been integrated into the MPI 4.0 specification to adapt the venerable MPI standard and library implementations (e.g., Open MPI v5.0.x) so they can run more efficiently in modern, heavily threaded, and GPU-accelerated computational environments. Eliminating lock inefficiencies in parallel codes, supporting heterogeneity, and reducing resource utilization have been key focal points as modern HPC clusters can now run thousands to millions of concurrent threads of execution—a degree of parallelism that was simply not possible in 1994.

The rise of these architectures has in turn increased the importance of hybrid programming models in which node-level programming models such as OpenMP are coupled with MPI. This is commonly referred to as MPI+X. These changes are not just theoretical, and this is why the OMPI-X team has been advocating, driving, and developing updates to the open-source Open MPI library. This has been a community effort that encompasses significant work by many individuals, discussions in the MPI Forum, and feedback from the various organizations that comprise the MPI standards committee.

In leading the ECP OMPI-X project, Bernholdt has been both participant and advocate in correcting many of the issues that limit MPI performance in heavily threaded, heterogeneous computing environments and in incorporating these updates into the Open MPI library.

Technical Introduction

Bernholdt explained the driving focus behind the OMPI-X efforts: “We recognize that MPI serves a very broad community. The current leadership-class systems are only a portion of that community, but these systems are important because they act as a proving ground. For this reason, we pay attention to ensure that the forthcoming MPI specification serves the needs of the high-end systems as well as the needs of the entire community.”

He continued by noting that when the OMPI-X project started, the HPC community had only an approximate vision of what an exascale system would look like. For this reason, the team picked concepts that were important and useful. They then advocated for these concepts and eventually facilitated their adoption by the standards committee, a time-consuming and laborious process that incorporated feedback from many projects and the MPI Forum. As part of the OMPI-X effort, the team also worked to implement desirable contributions in the new standard.

These innovations appear in the MPI 4.0 standard and the Open MPI 5.0.x library software releases. The updates are extensive and are the subject of numerous publications in the literature:

- Partitioned communication increases flexibility and supports the overlap of communication and computation. It is applicable to highly threaded CPU-side MPI codes and also has significant utility for GPU-side MPI kernel calls with low expected overheads. This work includes the addition of performance-oriented partitioned point-to-point communication primitives and autotuning collective operations.

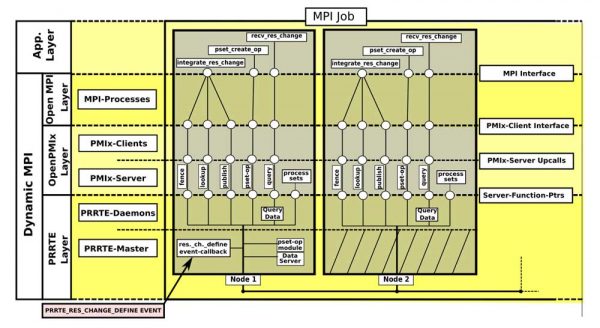

- Sessions (and PMIx) introduce a concept of isolation into MPI by relaxing the requirements for global initialization, which currently produces a global communicator. Each MPI Session creates its own isolated MPI environment, potentially with different settings, optimization opportunities, and communication data structures.[3] Sessions enable dynamic resource allocation that leverages the Process Management Interface for Exascale (PMIx). PMIx communicates with other layers of the software stack and can be used by job schedulers such as Slurm. It also permits better interactions with file systems and dynamic process groups for managing asynchronous group construction and destruction. The “PMIx: A Tutorial” slide deck in the PMIx GitHub repository provides a quick overview of the features and benefits of PMIx.

- Standardization of error management within MPI. The MPI 4.0 standard allows for asynchronous operations, which required updating the MPI error notification mechanism.

- Resilience-related research considers fault-tolerance constructs as possible additions to MPI, including User Level Failure Mitigation (ULFM) and Reinit. The ULFM proposal, as developed by the MPI Forum’s Fault Tolerance Working Group, supports the continued operation of MPI programs after node failures have impacted application execution. See the paper and research hub for more information. Reinit++ is a redesign of the Reinit[4],[5] approach and variants[6],[7] that leverages checkpoint-restart.

- The usual performance and scalability improvements, as reflected in benchmarks using the Open MPI library.

Multithreaded Implications for MPI: Performance, Portability, Scalability, and Robustness

User-level threading to exploit the performance capabilities of modern hardware has motivated the extensive analysis performed by the OMPI-X team and other investigators. Topics include the best threading models (e.g., Pthreads or Qthreads) as well as autotuning. The OMPI-X team has also dedicated efforts to improving testing and the all-important continuous integration (CI) to ensure correct operation on many HPC platforms.

To continue reading ECP’s news on MPI, click here.

Source: Rob Farber, ECP
