Dec. 12, 2022 — This month marks a decade since the Neuroscience Gateway (NSG) started providing high performance computing (HPC) resources, storage and neuroscience software to about 1,500 neuroscience researchers and educators from around the world. The NSG team, based at the San Diego Supercomputer Center (SDSC) at UC San Diego, has collaborated with the university’s EEG researchers on a new project called NEMAR, as described in a paper published in Database: The Journal of Biological Databases and Curation.

Entitled “NEMAR: An Open Access Data, Tools, and Compute Resource Operating on NeuroElectroMagnetic Data,” the paper describes how neuroscientists worked with cyberinfrastructure (CI) developers to create a forum for sharing, mining, analyzing, visualizing and archiving NeuroElectroMagnetic (NEM) imaging data from several sources.

“NEMAR provides various capabilities for users such as searching NEM data and viewing various data characteristics,” said NEMAR Principal Investigator (PI) Scott Makeig, director of the Swartz Center for Computational Neuroscience (SCCN) at the Institute for Neural Computation at UC San Diego. “Very importantly, NEMAR allows users to analyze data via NSG using SDSC’s supercomputers.”

According to NEMAR Co-PI and NSG PI Amit Majumdar, who also serves as director of SDSC’s Data Enabled Scientific Computing (DESC) Division, NEMAR was built at SDSC so that its storage and supercomputing resources could be closely coupled at the back end.

“NEMAR storage is mounted on SDSC’s Expanse supercomputer and users can directly process NEMAR data via NSG without having to deal with downloading and uploading data for processing,” said Majumdar. “This is a great collaborative effort with Choonhan Youn, a senior CI developer and other experts from the Hubzero team, Dung Truong from SCCN and Kenneth Yoshimoto from the NSG team that jointly created the web interface tied to backend CI for NEMAR and demonstrated the important role of CI developers when building such a portal.”

Subhashini Sivagnanam, a co-PI of NSG who was involved in architecting the gateway, said, “It’s great to see how new data focused projects, such as NEMAR, can take advantage of NSG for the processing of data.”

Another important aspect of NEMAR data is that it follows the Brain Imaging Data Structure (BIDS), a widely used standard for describing, organizing and annotating neuroimaging data. Where available, NEMAR datasets also include detailed descriptions of experimental events, stored using the Hierarchical Event Descriptor (HED) system.

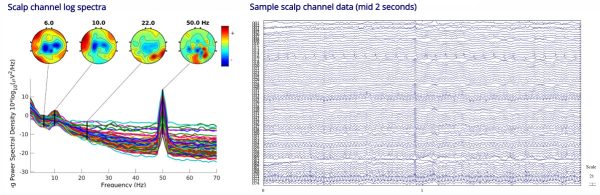

NEMAR connects brain electromagnetic data from OpenNeuro.org, a human brain imaging data archive with more than 29,000 users, to computational resources including the EEGLAB software environment for processing continuous and event-related EEG, magnetoencephalography (MEG) and other electrophysiological data. Additionally, the close integration of NEMAR with NSG enables users to process data seamlessly on HPC resources using tools including EEGLAB, MATLAB, Python, TensorFlow and PyTorch.

“Our NEMAR project is a great example of DATCOR – data, tools and compute resource – and we are on the cutting edge of providing this publicly shared data portal for the neuroscience community and beyond,” said Arnaud Delorme, NEMAR co-PI, research scientist at SCCN, professor at Paul Sabatier University in Toulouse, France, and first author of the paper. “Resources, such as NEMAR, have great potential for enabling research in EEG, iEEG, MEG and open up more opportunities for applications of AI/ML.”

The NEMAR project is supported by the National Institutes of Health (award no. R24MH120037). The OpenNeuro project is supported by NIH (award no. R24MH117179). The NSG project is supported by NIH (award nos. U24EB029005 and R01EB023297) and the National Science Foundation (award nos. 1935749 and 1935771).

Source: Kimberly Mann Bruch, SDSC