Jan. 17, 2023 — UNSW Sydney engineers have discovered a new way of precisely controlling single electrons nestled in quantum dots that run logic gates. The new mechanism is also less bulky and requires fewer parts, which could prove essential to making large-scale silicon quantum computers a reality.

The serendipitous discovery, made by engineers at the quantum computing start-up Diraq and UNSW, is detailed in the journal Nature Nanotechnology.

“This was a completely new effect we’d never seen before, which we didn’t quite understand at first,” said lead author Dr. Will Gilbert, a quantum processor engineer at Diraq, a UNSW spin-off company based at its Kensington campus. “But it quickly became clear that this was a powerful new way of controlling spins in a quantum dot. And that was super exciting.”

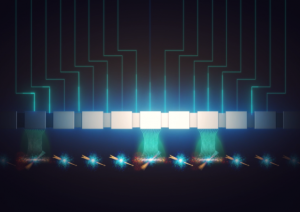

Logic gates are the basic building blocks of all computation. They allow ‘bits’ – binary digits (0s and 1s) – to work together to process information. A quantum bit (or qubit), however, can exist in a combination of both of these states at once, a condition known as a ‘superposition’. This enables computational strategies, some of them exponentially faster for certain problems, that are beyond the reach of classical computers. In silicon devices like these, qubits are built from ‘quantum dots’: tiny nanodevices that can trap one or a few electrons, whose spin stores the quantum information. Precise control of these electrons is necessary for computation to occur.
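For readers who want a more concrete picture of ‘superposition’, here is a minimal Python sketch of the standard textbook description. It is an illustration only, not anything taken from the Diraq work. A qubit is modelled as two complex amplitudes, and their squared magnitudes give the probabilities of reading 0 or 1 when the qubit is measured. The function name `measurement_probabilities` is hypothetical.

```python
import math


def measurement_probabilities(alpha: complex, beta: complex) -> tuple[float, float]:
    """Return the probabilities of reading 0 and 1 for a qubit state.

    The state is described by two complex amplitudes (alpha for |0>, beta for |1>).
    The result is normalised so the two probabilities always sum to 1.
    """
    p0 = abs(alpha) ** 2
    p1 = abs(beta) ** 2
    norm = p0 + p1
    return p0 / norm, p1 / norm


# A classical bit behaves like a qubit that is definitely 0 (or definitely 1).
print(measurement_probabilities(1, 0))  # (1.0, 0.0)

# An equal superposition gives each outcome with probability 1/2.
amp = 1 / math.sqrt(2)
print(measurement_probabilities(amp, amp))

# Describing a general state of n qubits takes 2**n amplitudes,
# which is one way to see why classical simulation becomes hard so quickly.
for n in (1, 10, 50):
    print(n, "qubits ->", 2 ** n, "amplitudes")
```

The exponential growth in the last loop is the reason simulating many qubits on a classical computer quickly becomes impractical.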

Using Electric Rather Than Magnetic Fields

Dr. Tuomo Tanttu from UNSW Engineering stumbled across a strange effect while experimenting with different geometries of the devices that control quantum dots, which are just billionths of a meter in size, along with various types of minuscule magnets and antennas that drive their operation.

“I was trying to really accurately operate a two-qubit gate, iterating through a lot of different devices, slightly different geometries, different materials stacks and different control techniques,” said Dr. Tanttu, who is also a measurement engineer at Diraq. “Then this strange peak popped up. It looked like the rate of rotation for one of the qubits was speeding up, which I’d never seen in four years of running these experiments.”

What he had discovered, the engineers later realized, was a new way of manipulating the quantum state of a single qubit by using electric fields, rather than the magnetic fields they had been using previously. Since the discovery was made in 2020, the engineers have been perfecting the technique – which has become another tool in their arsenal to fulfill Diraq’s ambition of building billions of qubits on a single chip.

“This is a new way to manipulate qubits, and it’s less bulky to build – you don’t need to fabricate cobalt micro-magnets or an antenna right next to the qubits to generate the control effect,” said Dr. Gilbert. “It removes the requirement of placing extra structures around each gate. So, there’s less clutter.”

Controlling single electrons without disturbing others nearby is essential for quantum information processing in silicon. There are two established methods: electron spin resonance (ESR), which uses an on-chip microwave antenna to deliver an oscillating magnetic field, and electric dipole spin resonance (EDSR), which relies on a magnetic field gradient produced by a micro-magnet. The newly discovered technique, known as ‘intrinsic spin-orbit EDSR’, instead draws on the spin-orbit interaction already present in the device, so no micro-magnet is needed.
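All of these methods are forms of spin resonance: an electron spin in a static magnetic field has a characteristic resonance (Larmor) frequency, and a drive at that frequency rotates the spin. The ‘rate of rotation’ Dr. Tanttu describes roughly corresponds to what physicists call the Rabi frequency. The Python sketch below uses the textbook Rabi formula, not the model from the paper. It assumes an approximate electron g-factor of 2, and the function names are hypothetical. It also illustrates one common way nearby qubits are left undisturbed: a drive tuned away from a qubit’s resonance barely flips it.

```python
import math

# Physical constants (CODATA 2018 values).
MU_B = 9.2740100783e-24  # Bohr magneton, J/T
H = 6.62607015e-34       # Planck constant, J*s


def larmor_frequency_hz(b_tesla: float, g: float = 2.0) -> float:
    """Resonance frequency of an electron spin in a static field b_tesla.

    g = 2.0 is an assumed, approximate electron g-factor.
    """
    return g * MU_B * b_tesla / H


def spin_flip_probability(rabi_hz: float, detuning_hz: float, t_s: float) -> float:
    """Textbook Rabi formula: chance the drive has flipped the spin after t_s seconds.

    rabi_hz: how fast the drive rotates the spin when exactly on resonance.
    detuning_hz: drive frequency minus the spin's resonance frequency.
    """
    omega = 2 * math.pi * rabi_hz
    delta = 2 * math.pi * detuning_hz
    omega_eff = math.hypot(omega, delta)
    if omega_eff == 0:
        return 0.0  # no drive and no detuning: the spin never flips
    return (omega / omega_eff) ** 2 * math.sin(omega_eff * t_s / 2) ** 2


f0 = larmor_frequency_hz(1.0)
print(f"Resonance at 1 T: {f0 / 1e9:.2f} GHz")  # Resonance at 1 T: 27.99 GHz

# A 1 MHz Rabi drive applied for 0.5 microseconds fully flips a resonant spin...
print(spin_flip_probability(1e6, 0.0, 0.5e-6))
# ...but a neighbouring spin whose resonance is 5 MHz away is barely affected.
print(spin_flip_probability(1e6, 5e6, 0.5e-6))
```

A faster Rabi frequency means shorter pulses for the same rotation, which is why an unexpected speed-up in the rotation rate stood out in the experiments.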

“Normally, we design our microwave antennas to deliver purely magnetic fields,” said Dr. Tanttu. “But this particular antenna design generated more of an electric field than we wanted – but that turned out to be lucky, because we discovered a new effect we can use to manipulate qubits. That’s serendipity for you.”

Making Quantum Computing in Silicon a Reality

“This is a gem of a new mechanism, which just adds to the trove of proprietary technology we’ve developed over the past 20 years of research,” said Professor Andrew Dzurak, Scientia Professor in Quantum Engineering at UNSW and CEO and founder of Diraq. Professor Dzurak led the team that built the first quantum logic gate in silicon in 2015.

“It builds on our work to make quantum computing in silicon a reality, based on essentially the same semiconductor component technology as existing computer chips, rather than relying on exotic materials.

“Since it’s based on the same CMOS technology as today’s computer industry, our approach will make it easier and faster to scale up for commercial production and achieve our goal of fabricating billions of qubits on a single chip.”

CMOS (or complementary metal-oxide-semiconductor, pronounced “see-moss”) is the fabrication process at the heart of modern computers. It’s used for making all sorts of integrated circuit components – including microprocessors, microcontrollers, memory chips and other digital logic circuits, as well as analogue circuits such as image sensors and data converters.

Building a quantum computer has been called the ‘space race of the 21st century’ – a difficult and ambitious challenge with the potential to deliver revolutionary tools for tackling otherwise impossible calculations, such as the design of complex drugs and advanced materials, or the rapid search of massive, unsorted databases.

“We often think of landing on the Moon as humanity’s greatest technological marvel,” said Professor Dzurak. “But the truth is, today’s CMOS chips – with billions of operating devices integrated together to work like a symphony, and that you carry in your pocket – that’s an astounding technical achievement and one that’s revolutionized modern life. Quantum computing will be equally astonishing.”

Source: UNSW Sydney