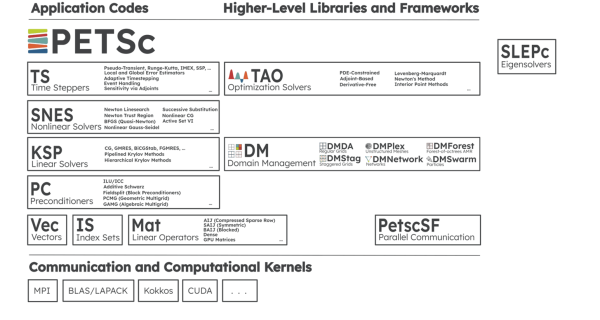

Nov. 23, 2022 — The Portable, Extensible Toolkit for Scientific Computation (PETSc) reflects a long-term investment in software infrastructure for the scientific community. As Mark Adams et al. observe in their paper, “The PETSc Community is the Infrastructure,” “The human infrastructure—people and their interactions as a community, within the broader DOE, HPC [high-performance computing], and computational science communities—is foundational and enables the creation of sustainable software infrastructure.” PETSc provides scalable solvers for nonlinear time-dependent differential and algebraic equations and for numerical optimization. PETSc is also referred to as PETSc/TAO (Figure 1) because it contains the Toolkit for Advanced Optimization (TAO) software library.

Lessons from a Successful, Decades-Long Community Effort

The PETSc/TAO project reflects a decades-long success story in how to create, maintain, and modernize an essential capability of scientific software.

The PETSc project began in the 1990s as an early software project of Argonne National Laboratory (Argonne) for parallel numerical algorithms. The creation of academic-grade software through an exploratory project reflects a common beginning for many HPC projects. Unfortunately, success frequently locks many HPC projects into code and design decisions that become increasingly unsustainable over time.

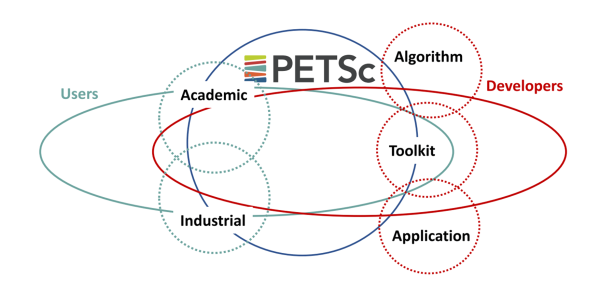

The current production-quality version of PETSc/TAO illustrates a time-proven use case in connecting users and developers (Figure 2), who together have added capabilities and adapted the software to the unforeseen and radical system architectures installed in data centers during the ensuing decades. The advent of GPU accelerators is the most recent example. When interviewed for this article, Richard Mills (Figure 4), a computational scientist at Argonne, noted that he found the usefulness of the toolkit so compelling that he joined the PETSc team.

The PETSc/TAO success story of long-term sustainability through community fits perfectly into the Exascale Computing Project (ECP) mission to transform the current HPC ecosystem into “a software stack that is both exascale-capable and usable on US industrial- and academic-scale systems.” With ECP funding and through collaborations within the ECP, the PETSc/TAO team has been preparing the toolkit to run on the forthcoming GPU-accelerated exascale systems. Both the ECP and PETSc/TAO efforts recognize that “to ensure the full potential of these new and diverse architectures, as well as the longevity and sustainability of science applications, we need to embrace software ecosystems as first-class citizens.”

The benefit of first-class citizenship includes funding, but that tells only part of the story because community support for these ecosystems is also essential to their survival. Otherwise, “those without a community die out, have only a fringe usership, or are maintained (as orphan software) by other communities.” Such sustainable, long-term, community-based thinking permeates the ECP’s many software technology and application development efforts and strategies. These ECP efforts are tackling challenges in how research software is developed, sustained, and stewarded. The impacts are far-reaching, as exemplified by the National Nuclear Security Administration–funded Advanced Technology Development and Mitigation programs within the ECP. These programs focus on the long-term viability of essential, community-based, open-source software infrastructure that can support the latest generation of HPC architectures. The ability to rely on such up-to-date, high-performance software ecosystems contributes to the success of national security programs and of projects within the global scientific and HPC communities.

The PETSc/TAO effort has successfully navigated the HPC landscape to earn both funding and extensive community support. This success makes it a useful example of how to incorporate good software practices and worthy of study by any software effort.

Good software practices result in robust, portable, and high-performance code. They are not a burden but rather a benefit. Incorporating good software practices does not distract from the science but rather enables it. Meanwhile, even the smallest community open-source projects cannot exist as entities unto themselves. Mark Adams et al. highlight this principle: “Members of the PETSc community are actively engaged with program development, including communicating with program managers at the [DOE], the National Science Foundation, and with institutional management to ensure that support is provided and maintained. This form of interaction is crucial to the long-term viability of all open-source software communities; PETSc users and community members have played important roles in various local, national, and international conversations, including recent DOE activities related to software sustainability.”

Successful Applications Tell the PETSc/TAO Success Story

An essential numerical toolkit for many applications, PETSc/TAO provides the workhorse numerical methods required to find solutions to the complex systems of equations that scientists use to model a system of interest.

These applications tell the success story of the toolkit by demonstrating the widespread adoption, usability, accuracy, portability, and performance of the PETSc/TAO solvers in a supercomputer environment. At the Oak Ridge Leadership Computing Facility (OLCF) at Oak Ridge National Laboratory (ORNL), performance and portability are, of course, also reflected in microbenchmarks and reports such as the “.” The 40-petaflops Crusher supercomputer has identical hardware and similar software to the OLCF’s Frontier exascale system. For this reason, preliminary results on Crusher are valid indicators of performance on Frontier. Part I does not reference Crusher, so only Part II of the report is referenced here.

Within the ECP, the PETSc/TAO team has been working with applications such as Chombo-Crunch, which addresses carbon sequestration, and with the Whole Device Model Application (WDMApp) for fusion reactors. WDMApp is developing a high-fidelity planning and optimization tool to help understand and control plasmas in tokamak fusion devices.

The societal and scientific importance of these projects is very real.

The DOE, for example, identified whole-device modeling (WDM) as a priority for “assessments of reactor performance in order to minimize risk and qualify operating scenarios for next-step burning plasma experiments.” The fusion energy project of the International Thermonuclear Experimental Reactor (ITER) is one high-profile example that is pressing the limits of human knowledge because it will utilize plasmas well beyond the physics regimes accessible in any previous fusion experiments. Understanding how plasmas behave in these new physics regimes requires both adapting existing computer models and using exascale supercomputers. PETSc/TAO meets both requirements.

In a win-win for the ECP, PETSc, and WDMApp projects, WDMApp became the first simulation software in fusion history to couple tokamak core to edge physics.


Source: Rob Farber, ECP