OAK RIDGE, Tenn., Oct. 23, 2019—A joint research team from Google Inc., NASA Ames Research Center, and the Department of Energy’s Oak Ridge National Laboratory has demonstrated that a quantum computer can outperform a classical computer at certain tasks, a feat known as quantum supremacy.

Quantum computers use the laws of quantum mechanics and units known as qubits to greatly increase how much information can be transmitted and processed. Whereas traditional “bits” have a value of either 0 or 1, qubits can exist in a superposition, that is, a weighted combination of 0 and 1, allowing for a vast number of possibilities for storing data.
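To make the scale concrete, the following minimal Python sketch counts how many complex numbers (amplitudes) a classical machine would need to describe every combination of 0 and 1 across a register of qubits. The function names are hypothetical, and the 8 bytes per amplitude is an assumption for illustration, not a figure from the team.

```python
import math

def amplitudes_needed(num_qubits: int) -> int:
    """Number of complex amplitudes in a full description of num_qubits qubits."""
    return 2 ** num_qubits

def equal_superposition(num_qubits: int) -> list[complex]:
    """State in which every bit pattern is equally likely (illustrative only)."""
    n = amplitudes_needed(num_qubits)
    amp = 1 / math.sqrt(n)
    return [complex(amp, 0.0)] * n

# A 3-qubit register already needs 8 amplitudes; probabilities must sum to 1.
state = equal_superposition(3)
total_probability = sum(abs(a) ** 2 for a in state)

# Assumption: 8 bytes per amplitude (single-precision complex).
BYTES_PER_AMPLITUDE = 8
bytes_for_53_qubits = amplitudes_needed(53) * BYTES_PER_AMPLITUDE
print(f"53 qubits: {amplitudes_needed(53):,} amplitudes, "
      f"{bytes_for_53_qubits / 2**50:.0f} PiB under the stated assumption")
```

Each added qubit doubles the count, which is why a classical description grows so quickly with register size.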

While still in their early stages, quantum systems have the potential to be exponentially more powerful than today’s leading classical computing systems and promise to revolutionize research in materials, chemistry, high-energy physics, and across the scientific spectrum. The team’s results, published today in Nature, provide a proof of concept for quantum supremacy and establish a baseline comparison of time-to-solution and energy consumption.

“This achievement of quantum supremacy is a testament to the strength of American innovation, and DOE’s Labs are helping lead the way in this groundbreaking area of research,” DOE Under Secretary for Science Paul Dabbar said. “The mastery of quantum technology is creating a new Information Age that offers new ways to process information to benefit science and society.”

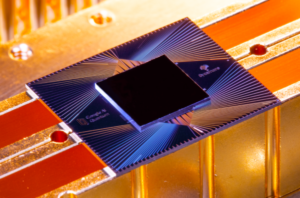

In this case the quantum computer, built by Google and dubbed Sycamore, consisted of 53 qubits. The classical computer was ORNL’s Summit, housed at the Oak Ridge Leadership Computing Facility (OLCF) and ranked as the world’s most powerful thanks to its more than 4,600 compute nodes.

Both systems performed a task known as random circuit sampling (RCS), designed specifically to measure the performance of quantum devices such as Sycamore. The simulations took 200 seconds on the quantum computer. After running the same simulations on Summit, the team extrapolated that the calculations would have taken the world’s most powerful system more than 10,000 years to complete with current state-of-the-art algorithms. The result provides experimental evidence of quantum supremacy and critical information for the design of future quantum computers. Not only was Sycamore faster than its classical counterpart, but it was also approximately 10 million times more energy efficient.
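As a rough illustration of the gap between those two figures, the short Python sketch below converts the quoted 10,000 years into seconds and divides by the 200-second quantum run. The year length is an assumption, and because the classical estimate is stated as "more than" 10,000 years, the ratio should be read as a lower bound rather than a measured speedup.

```python
# Assumption: a year of 365.25 days; the release does not specify one.
SECONDS_PER_YEAR = 365.25 * 24 * 3600

quantum_seconds = 200      # time reported for Sycamore
classical_years = 10_000   # extrapolated lower bound for Summit

classical_seconds = classical_years * SECONDS_PER_YEAR
time_ratio = classical_seconds / quantum_seconds

print(f"Classical estimate: {classical_seconds:.3e} seconds")
print(f"Time ratio (lower bound): {time_ratio:.2e}")
```

Under these assumptions the ratio comes out on the order of a billion.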

The researchers also estimated the performance of individual components to accurately predict the performance of the entire Sycamore device, demonstrating that quantum information behaves consistently as it is scaled up—a necessary property for the design of large-scale quantum computers.

“This experiment establishes that today’s quantum computers can outperform the best conventional computing for a synthetic benchmark,” said ORNL researcher and Director of the laboratory’s Quantum Computing Institute Travis Humble. “There have been other efforts to try this, but our team is the first to demonstrate this result on a real system.”

That real system was critical. Researchers at Google and NASA’s Ames Research Center in Silicon Valley attempting to tackle the problem using NASA resources quickly realized they needed a more powerful computer. And none are more powerful than ORNL’s Summit.

Porting a quantum calculation to the classical Summit was no easy task. The RCS simulator, known as qFlex, initially included a CPU-only implementation. Summit, however, derives much of its world-class speed from NVIDIA graphics processing units (GPUs), which tackle computationally intensive math problems while the CPUs efficiently direct tasks.

A library developed by the OLCF’s Dmitry Liakh for performing tensor algebra operations on multicore CPUs and GPUs allowed the team to take advantage of all of Summit’s hardware. Together with the IBM system’s 512 gigabytes of memory per node, the library increased the speed of the simulation 46-fold per node (using 4,550 of Summit’s nodes) compared to the previous implementation, which ran solely on CPUs.
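For readers unfamiliar with tensor algebra, a basic operation of this kind is contraction: summing over an index shared by two tensors. The pure-Python sketch below shows the idea on tiny inputs. It is not the API of the OLCF library or of qFlex; the function name `contract_pair` and the sample values are hypothetical, and a production code would run such operations on GPUs over far larger, complex-valued tensors.

```python
def contract_pair(a, b):
    """Contract a[i][k] with b[k][j][l] over the shared index k.

    Returns c with c[i][j][l] = sum over k of a[i][k] * b[k][j][l].
    """
    dim_i, dim_k = len(a), len(b)
    dim_j, dim_l = len(b[0]), len(b[0][0])
    return [
        [
            [sum(a[i][k] * b[k][j][l] for k in range(dim_k))
             for l in range(dim_l)]
            for j in range(dim_j)
        ]
        for i in range(dim_i)
    ]

# Hypothetical sample data: a 2x2 matrix and a 2x2x2 tensor.
a = [[1, 2], [3, 4]]
b = [[[1, 0], [0, 1]], [[2, 0], [0, 2]]]
c = contract_pair(a, b)
print(c)
```

The nested loops make the cost visible: work grows with the product of all index sizes, which is the kind of dense arithmetic GPUs handle well.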

“That the simulation proving the validity of a next-generation architecture such as quantum was run on ORNL’s Summit is indicative of the lab’s long history of accelerated computing innovation and the necessity of classical supercomputers in realizing the potential of quantum computing,” ORNL Associate Laboratory Director for Computing and Computational Sciences Jeff Nichols said.

ORNL’s Titan was the first leadership computing system to harness the power of GPUs, allowing it to debut at number one in 2012 and remain among the world’s 10 most powerful systems until 2018. Titan’s success enabled the development of Summit and, by extension, is driving the design of Frontier, slated to be among the nation’s first exascale computers when it is delivered in 2021.

The laboratory has been planning for post-exascale platforms for more than a decade via dedicated research programs in quantum computing, networking, sensing, and quantum materials. These efforts aim to accelerate the understanding of how near-term quantum computing resources can help tackle today’s most daunting scientific challenges and support the recently announced National Quantum Initiative, a federal effort to ensure American leadership in quantum sciences, particularly computing.

Such leadership will require systems like Summit to ensure the steady march from devices such as Sycamore to larger-scale quantum systems exponentially more powerful than anything in operation today.

“Realizing the potential of quantum computing requires partnerships that leverage the strengths of innovators like Google and ORNL,” ORNL Director Thomas Zacharia said. “This milestone is an inspiration to the next generation of researchers who will help push the frontiers of what’s possible in computing and scientific discovery.”

The research was supported by DOE’s Office of Science. The OLCF is a DOE Office of Science User Facility.

UT-Battelle LLC manages Oak Ridge National Laboratory for DOE’s Office of Science, the single largest supporter of basic research in the physical sciences in the United States. DOE’s Office of Science is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science.

Source: Oak Ridge National Laboratory