HOUSTON, Feb. 4, 2020 — Rice University engineers have created a deep learning computer system that taught itself to accurately predict extreme weather events, like heat waves, up to five days in advance using minimal information about current weather conditions.

Ironically, Rice’s self-learning “capsule neural network” uses an analog method of weather forecasting that computers made obsolete in the 1950s. During training, it examines hundreds of pairs of maps. Each map shows surface temperatures and air pressures at a height of five kilometers, and each pair shows those conditions several days apart. The training includes scenarios that produced extreme weather: extended hot and cold spells that can lead to deadly heat waves and winter storms. Once trained, the system was able to examine maps it had not previously seen and make five-day forecasts of extreme weather with 85% accuracy.

With further development, the system could serve as an early warning system for weather forecasters, and as a tool for learning more about the atmospheric conditions that lead to extreme weather, said Rice’s Pedram Hassanzadeh, co-author of a study about the system published online this week in the American Geophysical Union’s Journal of Advances in Modeling Earth Systems.

The accuracy of day-to-day weather forecasts has improved steadily since the advent of computer-based numerical weather prediction (NWP) in the 1950s. But even with improved numerical models of the atmosphere and more powerful computers, NWP cannot reliably predict extreme events like the deadly heat waves in France in 2003 and in Russia in 2010.

“It may be that we need faster supercomputers to solve the governing equations of the numerical weather prediction models at higher resolutions,” said Hassanzadeh, an assistant professor of mechanical engineering and of Earth, environmental and planetary sciences at Rice. “But because we don’t fully understand the physics and precursor conditions of extreme-causing weather patterns, it’s also possible that the equations aren’t fully accurate, and they won’t produce better forecasts, no matter how much computing power we put in.”

In late 2017, Hassanzadeh and two study co-authors, graduate students Ashesh Chattopadhyay and Ebrahim Nabizadeh, decided to take a different approach.

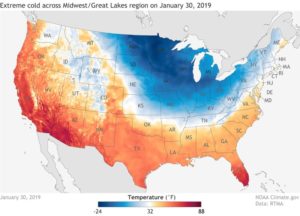

“When you get these heat waves or cold spells, if you look at the weather map, you are often going to see some weird behavior in the jet stream, abnormal things like large waves or a big high-pressure system that is not moving at all,” Hassanzadeh said. “It seemed like this was a pattern recognition problem. So we decided to try to reformulate extreme weather forecasting as a pattern-recognition problem rather than a numerical problem.”

Deep learning is a form of artificial intelligence in which computers are “trained” to make humanlike decisions without being explicitly programmed for them. The mainstay of deep learning, the convolutional neural network, excels at pattern recognition and is the key technology for self-driving cars, facial recognition, speech transcription and dozens of other advances.

“We decided to train our model by showing it a lot of pressure patterns in the five kilometers above the Earth, and telling it, for each one, ‘This one didn’t cause extreme weather. This one caused a heat wave in California. This one didn’t cause anything. This one caused a cold spell in the Northeast,’” Hassanzadeh said. “Not anything specific like Houston versus Dallas, but more of a sense of the regional area.”
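
The labeling Hassanzadeh describes can be pictured as pairing each day's pressure map with the regional outcome seen several days later. The Python sketch below is only an illustration under stated assumptions: the function name `make_training_pairs`, the label codes and the toy data are hypothetical and are not taken from the study.

```python
# Hypothetical label codes for illustration only (not from the study).
NO_EXTREME = 0
HEAT_WAVE_WEST = 1
COLD_SPELL_NORTHEAST = 2


def make_training_pairs(daily_maps, daily_labels, lead_days=5):
    """Pair each day's map with the label observed `lead_days` later.

    `daily_maps` and `daily_labels` are equal-length lists ordered by day.
    The last `lead_days` maps are dropped because their outcomes are unknown.
    """
    pairs = []
    for day in range(len(daily_maps) - lead_days):
        pairs.append((daily_maps[day], daily_labels[day + lead_days]))
    return pairs


# Toy stand-ins: each "map" is a one-value list instead of a real pressure grid.
daily_maps = [[float(day)] for day in range(8)]
daily_labels = [0, 0, 1, 0, 0, 2, 0, 1]

pairs = make_training_pairs(daily_maps, daily_labels, lead_days=5)
for pressure_map, label in pairs:
    print(pressure_map, "->", label)
```

A classifier trained on pairs like these only sees the earlier map as input, so whatever it predicts is a forecast for `lead_days` ahead rather than a description of current conditions.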

At the time, Hassanzadeh, Chattopadhyay and Nabizadeh were barely aware that analog forecasting had once been a mainstay of weather prediction and even had a storied role in the D-Day landings in World War II.

“One way prediction was done before computers is they would look at the pressure system pattern today, and then go to a catalog of previous patterns and compare and try to find an analog, a closely similar pattern,” Hassanzadeh said. “If that one led to rain over France after three days, the forecast would be for rain in France.”
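
The catalog lookup described above is, in essence, a nearest-neighbor search. The Python sketch below illustrates that idea only; it is not the Rice team's method. The names `distance` and `analog_forecast`, the Euclidean distance measure and the four-value "maps" are assumptions made for the example.

```python
import math


def distance(a, b):
    """Euclidean distance between two flattened pressure maps of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def analog_forecast(today, catalog):
    """Return the outcome recorded for the catalog pattern closest to `today`.

    `catalog` is a list of (pattern, outcome) pairs, where `outcome` is the
    weather that followed that pattern.
    """
    _, outcome = min(catalog, key=lambda entry: distance(today, entry[0]))
    return outcome


# Made-up catalog: each pattern is four pressure readings in hPa (illustrative).
catalog = [
    ([1012.0, 1008.0, 1003.0, 999.0], "rain over France in three days"),
    ([1025.0, 1027.0, 1026.0, 1024.0], "no notable weather"),
    ([1030.0, 1034.0, 1036.0, 1033.0], "heat wave"),
]

today = [1011.0, 1009.0, 1002.0, 1000.0]
forecast = analog_forecast(today, catalog)
print(forecast)
```

In this hand-built version, the notion of "closely similar" is fixed in advance by the distance function, which treats every point on the map as equally important.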

He said one of the advantages of using deep learning is that the neural network didn’t need to be told what to look for.

“It didn’t matter that we don’t fully understand the precursors because the neural network learned to find those connections itself,” Hassanzadeh said. “It learned which patterns were critical for extreme weather, and it used those to find the best analog.”

To demonstrate a proof of concept, the team used model data taken from realistic computer simulations. The team had already reported early results with a convolutional neural network when Chattopadhyay, the lead author of the new study, heard about capsule neural networks. This new form of deep learning debuted with fanfare in late 2017, in part because it was the brainchild of Geoffrey Hinton, the founding father of convolutional neural network-based deep learning.

Unlike convolutional neural networks, capsule neural networks can recognize relative spatial relationships, which are important in the evolution of weather patterns.

“The relative positions of pressure patterns, the highs and lows you see on weather maps, are the key factor in determining how weather evolves,” Hassanzadeh said.

Another significant advantage of capsule neural networks is that they don’t require as much training data as convolutional neural networks. There are only about 40 years of high-quality weather data from the satellite era, and Hassanzadeh’s team is working to train its capsule neural network on observational data and compare its forecasts with those of state-of-the-art NWP models.

“Our immediate goal is to extend our forecast lead time to beyond 10 days, where NWP models have weaknesses,” he said.

Though much more work is needed before Rice’s system can be incorporated into operational forecasting, Hassanzadeh hopes it might eventually improve forecasts for heat waves and other extreme weather.

“We are not suggesting that at the end of the day this is going to replace NWP,” he said. “But this might be a useful guide for NWP. Computationally, this could be a super cheap way to provide some guidance, an early warning, that allows you to focus NWP resources specifically where extreme weather is likely.”

Hassanzadeh said his team is also interested in finding out what patterns the capsule neural network uses to make its predictions.

“We want to leverage ideas from explainable AI (artificial intelligence) to interpret what the neural network is doing,” he said. “This might help us identify the precursors to extreme-causing weather patterns and improve our understanding of their physics.”

The research was supported by NASA (80NSSC17K0266), the National Academies’ Gulf Research Program and a BP High-Performance Computing Graduate Fellowship from Rice’s Ken Kennedy Institute. Computing resources were provided by the Texas Advanced Computing Center and Pittsburgh Supercomputing Center under the National Science Foundation-supported XSEDE project (ATM170020) and Rice’s Center for Research Computing in partnership with the Ken Kennedy Institute.

Links and resources:

The DOI of the JAMES paper is: 10.1029/2019MS001958

A copy of the paper is available at: https://doi.org/10.1029/2019MS001958

About Rice University

Located on a 300-acre forested campus in Houston, Rice University is consistently ranked among the nation’s top 20 universities by U.S. News & World Report. Rice has highly respected schools of Architecture, Business, Continuing Studies, Engineering, Humanities, Music, Natural Sciences and Social Sciences and is home to the Baker Institute for Public Policy. With 3,962 undergraduates and 3,027 graduate students, Rice’s undergraduate student-to-faculty ratio is just under 6-to-1. Its residential college system builds close-knit communities and lifelong friendships, just one reason why Rice is ranked No. 1 for lots of race/class interaction and No. 4 for quality of life by the Princeton Review. Rice is also rated as a best value among private universities by Kiplinger’s Personal Finance.

Source: Jade Boyd, Rice University