July 2, 2020 — The National Science Foundation (NSF) has awarded the San Diego Supercomputer Center (SDSC) at UC San Diego a $5 million grant to develop a high-performance resource for conducting artificial intelligence (AI) research across a wide swath of science and engineering domains. Called Voyager, the system will be the first of its kind available in the NSF resource portfolio. In addition to the $5 million acquisition award, an equivalent amount of funding is expected to support community engagement and operation of the resource.

The award is part of a $40 million NSF funding initiative aimed at expanding the agency’s portfolio of innovative computational resources that take advantage of rapidly changing technologies.

With an innovative system architecture uniquely optimized for deep learning (DL) operations and AI workloads, Voyager will provide an opportunity for researchers to explore and implement new deep learning techniques using well-established deep learning frameworks such as PyTorch, MXNet, and TensorFlow. These techniques include convolutional neural networks, used for image classification tasks such as recognizing traffic signals, and generative adversarial networks, a machine learning (ML) model in which two neural networks compete with each other to generate synthetic data that is statistically indistinguishable from real data. Researchers will also be able to develop their own AI techniques using software tools and libraries built specifically for Voyager.

In advance of the Voyager award, SDSC established the AI Technology Lab (AITL) in October 2019, which provides a framework for leveraging Voyager to foster new industry collaborations aimed at exploring emerging AI and ML technology for scientific and industrial uses, while helping to prepare the next-generation workforce.

“These awards represent a suite of complementary advanced computational capabilities and services aimed to empower new fundamental research in many fields,” said Amy Friedlander, acting director of the NSF’s Office of Advanced Cyberinfrastructure. “NSF’s long-standing investments in advanced and innovative computing respond to the rapid evolution and expansion of computational- and data-intensive research being conducted across all of science and engineering.”

“A rigorous evaluation of AI-optimized hardware is of keen interest across the entire AI research community,” said Amitava Majumdar, head of SDSC’s Data Enabled Scientific Computing division and principal investigator for the Voyager project. “SDSC has always been at the forefront of deploying innovative advanced computing and data systems. SDSC researchers work closely with researchers across computational science to implement and optimize applications on these emerging architectures.”

“Machine learning techniques are rapidly becoming more prevalent across numerous science domains, from astrophysics to drug discovery and even the social sciences,” noted SDSC Director Michael Norman, who also directs UC San Diego’s Laboratory for Computational Astrophysics. “Voyager will significantly contribute to the high-performance computing community’s understanding of how such a system, built specifically for artificial intelligence, can be used to advance computational science and engineering research.”

Co-principal investigators include Rommie Amaro, a professor of chemistry and biochemistry and director of the National Biomedical Computation Resource at UC San Diego; and UC San Diego Physics Professor Javier Duarte. SDSC co-PIs are Robert Sinkovits, lead for scientific applications; and Mai Nguyen, lead for data analytics.

Data center preparation at SDSC and build-out of Voyager are expected to begin in the first half of 2021. The NSF award is structured as a three-year ‘test bed’ phase with a select set of research teams, followed by a two-year phase in which the system will be more widely available through an NSF-approved allocation process. Key design specifications of Voyager will be announced at a later date.

Voyager’s technology partner and system integrator is Supermicro. “We are excited to support SDSC, a pioneer in the HPC community conducting ground-breaking research and looking for innovative ways to improve science and engineering leveraging new AI solutions,” said Charles Liang, president and CEO of Supermicro. “The Voyager AI experiment could yield sophisticated and optimized design tools for the scientific and research community to create the next generation of AI solutions. Supermicro looks forward to supporting SDSC’s multi-year scientific exploration and further collaborations with academia, where many important computing advancements are underway.”

SDSC researchers and collaborators will work closely with a small number of research teams to explore and evaluate the performance of Voyager’s hardware, specialized compilers, and system libraries. Education, outreach, and training activities include semi-annual workshops that will bring together teams to share lessons learned, and develop the knowledge and best practices that inform future researchers who will be given access during the Allocations Phase. The Voyager External Advisory Board will assist in recruiting early users and providing guidance to the project.

Voyager Use Case: Particle Collisions

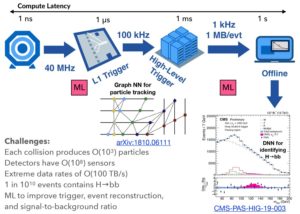

Data processing pipeline for Higgs boson to bottom quark event processing, which can benefit from Voyager’s inference processors to filter data coming out of the detector and from Voyager’s training processors to process data that passes the high-level trigger. Credit: Javier Duarte, UC San Diego Division of Physical Sciences

Particle accelerators, such as the CERN Large Hadron Collider (LHC), generate massive amounts of data. More than 99 percent of the events detected by the LHC’s ATLAS and CMS detectors, which were responsible for the discovery of the Higgs boson, are discarded immediately; the remainder nonetheless yields many petabytes of data for further analysis.

Javier Duarte, a professor with UC San Diego’s Division of Physical Sciences and co-PI for the Voyager project, uses artificial intelligence (AI) techniques for triggering, event reconstruction, and data analysis of LHC experiments, including identifying Higgs boson decay candidates. Duarte will train AI algorithms on Voyager to improve particle identification and event reconstruction, and will study Voyager’s accelerated inference for the software-based triggering step of the data processing pipeline.

For triggering, machine learning (ML) improves signal selection efficiency while reducing the false positive rate for accepting background events. For data analysis, various ML algorithms (including dense, convolutional, recurrent, and graph neural networks) are used to classify each event as signal or background and to identify particle signatures such as Higgs boson decay candidates. Duarte has a special interest in fast implementations on specialized hardware that will be part of Voyager.
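
To make the two triggering metrics mentioned above concrete, the following is a minimal Python sketch, not code from the Voyager project or the LHC experiments. It assumes each event already has a classifier score between 0 and 1 and a known signal/background label. The function name `trigger_rates`, the threshold values, and the toy scores are all hypothetical and chosen purely for illustration.

```python
from typing import Sequence, Tuple


def trigger_rates(
    scores: Sequence[float],
    is_signal: Sequence[bool],
    threshold: float,
) -> Tuple[float, float]:
    """Return (signal efficiency, false positive rate) for a score cut.

    An event is accepted by this toy trigger when its score is >= threshold.
    Signal efficiency is the fraction of true signal events accepted;
    false positive rate is the fraction of background events accepted.
    """
    if len(scores) != len(is_signal):
        raise ValueError("scores and is_signal must have the same length")

    n_signal = sum(1 for label in is_signal if label)
    n_background = len(is_signal) - n_signal
    if n_signal == 0 or n_background == 0:
        raise ValueError("need at least one signal and one background event")

    accepted_signal = sum(
        1 for score, label in zip(scores, is_signal) if label and score >= threshold
    )
    accepted_background = sum(
        1 for score, label in zip(scores, is_signal) if not label and score >= threshold
    )
    return accepted_signal / n_signal, accepted_background / n_background


# Toy data (made up): four signal events followed by four background events.
toy_scores = [0.95, 0.80, 0.60, 0.30, 0.70, 0.40, 0.20, 0.10]
toy_labels = [True, True, True, True, False, False, False, False]

for cut in (0.5, 0.75):
    efficiency, false_positive_rate = trigger_rates(toy_scores, toy_labels, cut)
    print(f"threshold={cut}: efficiency={efficiency}, false positive rate={false_positive_rate}")
```

Raising the threshold in this toy example rejects more background but also loses signal. A better-trained model eases that trade-off by separating the two score distributions more cleanly.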

About SDSC

The San Diego Supercomputer Center (SDSC) is a leader and pioneer in high-performance and data-intensive computing, providing cyberinfrastructure resources, services, and expertise to the national research community, academia, and industry. Located on the UC San Diego campus, SDSC supports hundreds of multidisciplinary programs spanning a wide variety of domains, from astrophysics and earth sciences to disease research and drug discovery. In late 2020, SDSC will launch its newest National Science Foundation-funded supercomputer, Expanse. At over twice the performance of Comet, Expanse supports SDSC’s theme of ‘Computing without Boundaries’ with a data-centric architecture, public cloud integration, and state-of-the-art GPUs for incorporating experimental facilities and edge computing.

Source: SDSC