July 1, 2021 — Additive manufacturing has the potential to allow one to create parts or products on demand in manufacturing, automotive engineering, and even in outer space. However, it’s a challenge to know in advance how a 3D printed object will perform, now and in the future.

Physical experiments — especially for metal additive manufacturing (AM) — are slow and costly. Even modeling these systems computationally is expensive and time-consuming.

“The problem is multi-phase and involves gas, liquids, solids, and phase transitions between them,” said University of Illinois Ph.D. student Qiming Zhu. “Additive manufacturing also has a wide range of spatial and temporal scales. This has led to large gaps between the physics that happens on the small scale and the real product.”

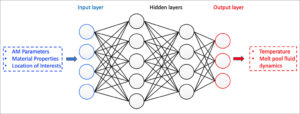

Zhu, Zeliang Liu (a software engineer at Apple), and Jinhui Yan (professor of Civil and Environmental Engineering at the University of Illinois) are trying to address these challenges using machine learning. They are using deep learning and neural networks to predict the outcomes of the complex processes involved in additive manufacturing.

“We want to establish the relationship between processing, structure, properties, and performance,” Zhu said.

Current neural network models need large amounts of data for training. But in the additive manufacturing field, obtaining high-fidelity data is difficult, according to Zhu. To reduce the need for data, Zhu and Yan are pursuing ‘physics-informed neural networks,’ or PINNs.

“By incorporating conservation laws, expressed as partial differential equations, we can reduce the amount of data we need for training and advance the capability of our current models,” Zhu said.
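The idea can be illustrated with a toy example. The sketch below is not the team's model: it assumes a 1D heat equation, an illustrative diffusivity `ALPHA`, and closed-form candidate functions in place of a neural network, and it uses finite differences where a real PINN would use automatic differentiation. All names are hypothetical. It shows how a physics term in the loss can separate two candidates that fit the same sparse data equally well.

```python
import math

ALPHA = 0.1  # assumed thermal diffusivity (illustrative value only)


def exact_temperature(x, t):
    """A known solution of u_t = ALPHA * u_xx on 0 <= x <= 1."""
    return math.exp(-ALPHA * math.pi ** 2 * t) * math.sin(math.pi * x)


def wrong_temperature(x, t):
    """A candidate that matches the data at t = 0 but never cools down."""
    return math.sin(math.pi * x)


def pde_residual(u, x, t, h=1e-3):
    """Residual u_t - ALPHA * u_xx, using central differences as a
    stand-in for the automatic differentiation a real PINN would use."""
    u_t = (u(x, t + h) - u(x, t - h)) / (2 * h)
    u_xx = (u(x + h, t) - 2 * u(x, t) + u(x - h, t)) / h ** 2
    return u_t - ALPHA * u_xx


def pinn_loss(u, data, collocation, weight=1.0):
    """Mean squared data misfit plus weighted mean squared PDE residual."""
    data_term = sum((u(x, t) - obs) ** 2 for x, t, obs in data) / len(data)
    physics_term = sum(pde_residual(u, x, t) ** 2 for x, t in collocation)
    physics_term /= len(collocation)
    return data_term + weight * physics_term


# Sparse "measurements": three points, all at the initial time.
data = [(x, 0.0, exact_temperature(x, 0.0)) for x in (0.25, 0.5, 0.75)]
# Collocation points where only the physics is checked, no data needed.
collocation = [(0.1 * i, 0.1 * j) for i in range(1, 10) for j in range(1, 10)]

loss_exact = pinn_loss(exact_temperature, data, collocation)
loss_wrong = pinn_loss(wrong_temperature, data, collocation)
print(f"physically consistent candidate: {loss_exact:.2e}")
print(f"data-only candidate:             {loss_wrong:.2e}")
```

Both candidates reproduce the three data points exactly, so a purely data-driven loss could not tell them apart. Only the PDE residual penalizes the candidate that violates the heat equation.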

For the 1D solidification case, they fed experimental data into their neural network. For the laser beam melting tests, they used experimental data as well as results from computer simulations. They also developed a ‘hard’ enforcement method for boundary conditions, which, they say, is equally important to solving the problem.
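One common way to enforce boundary conditions "hard" is to build them into the form of the solution, so they hold exactly no matter what the network outputs. The sketch below shows that generic construction for a 1D domain with fixed end temperatures. It is an assumption for illustration, not necessarily the formulation used in the paper, and `raw_network`, `T_LEFT`, and `T_RIGHT` are hypothetical names and values.

```python
import math

T_LEFT, T_RIGHT = 1.0, 0.0  # assumed fixed temperatures at x = 0 and x = 1


def raw_network(x):
    """Stand-in for an unconstrained network output; any function works."""
    return math.tanh(3.0 * x - 1.0) + 0.5


def hard_constrained(x, net=raw_network):
    """Prediction that satisfies both boundary values by construction."""
    # Linear profile that already matches the two boundary temperatures.
    boundary_lift = T_LEFT + (T_RIGHT - T_LEFT) * x
    # Factor that vanishes on the boundary, switching the network off there.
    distance = x * (1.0 - x)
    return boundary_lift + distance * net(x)


left = hard_constrained(0.0)
right = hard_constrained(1.0)
interior = hard_constrained(0.5)
print(left, right, interior)
```

By contrast, a "soft" approach would add a boundary penalty to the loss and only satisfy the conditions approximately after training.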

The team’s neural network model was able to recreate the dynamics of the two experiments. In the case of the NIST Challenge, it predicted the temperature and melt pool length of the experiment within 10% of the actual results. They trained the model on data from 1.2 to 1.5 microseconds and made predictions at further time steps up to 2.0 microseconds.

The team published their results in Computational Mechanics in January 2021.

“This is the first time that neural networks have been applied to metal additive manufacturing process modeling,” Zhu said. “We showed that physics-informed machine learning, as a perfect platform to seamlessly incorporate data and physics, has big potential in the additive manufacturing field.”

Zhu sees engineers in the future using neural networks as fast prediction tools to provide guidance on the parameter selection for the additive manufacturing process — for instance, the speed of the laser or the temperature distribution — and to map the relationships between additive manufacturing process parameters and the properties of the final product, such as its surface roughness.

“If your client requires a specific property, then you’ll know what you should use for your manufacturing process parameters,” Zhu said.

In a separate paper published online in Computer Methods in Applied Mechanics and Engineering in May 2021, Zhu and Yan proposed a modification of the existing finite element method framework used in additive manufacturing to see whether their technique could improve predictions over existing benchmarks.

Mirroring a recent additive manufacturing experiment from Argonne National Lab involving a moving laser, the researchers showed that their simulations, performed on the Frontera supercomputer at the Texas Advanced Computing Center (TACC), differed in depth from the experiment by less than 10.3% and captured the chevron-type shape commonly observed in experiments on the metal top surface.

Zhu and Yan’s research benefits from the continued growth of computing technologies and federal investment in high performance computing.

Frontera not only speeds up studies such as theirs; it also opens the door to machine and deep learning studies in fields where training data is not widely available, broadening the potential of AI research.

“The most exciting point is when you see that your model can predict the future using only a small amount of existing data,” Zhu said. “It’s somehow learning about the evolution of the process.

“Previously, I was not very confident on whether we’d be able to predict with good accuracy over temperature, velocity, and geometry of the gas-metal interface. We showed that we’re able to make nice data inferences.”

Source: TACC