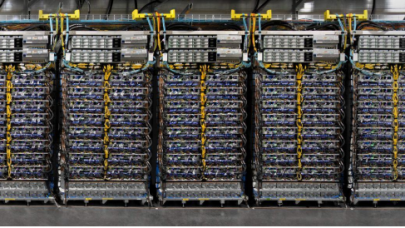

Google AI Supercomputer Shows the Potential of Optical Interconnects

April 10, 2023

There are limits on how fast copper wires can move data between computers, and a transition to light speed will ultimately drive AI and high-performance computing forward. Every major chipmaker agrees that optical interconnects will be needed to reach zettascale computing in an energy-efficient way. That opinion was... Read more…

Rockport Networks Names New Co-CEO as It Closes on $48M Funding Round

December 14, 2021

Rockport Networks, a new entrant in the HPC networking space with its switchless fabric offering, today announced the appointment of Marc Sultzbaugh as co-CEO. An active Rockport board member since December 2020, Sultzbaugh will lead the company alongside Rockport Networks Co-Founder and Co-CEO Doug Carwardine. Sultzbaugh previously spent 20 years at HPC networking company Mellanox... Read more…

With New Owner and New Roadmap, an Independent Omni-Path Is Staging a Comeback

July 23, 2021

Put on a shelf by Intel in 2019, Omni-Path faced an uncertain future, but under new custodian Cornelis Networks, Omni-Path is looking to make a comeback as an independent high-performance interconnect solution. A "significant refresh" – called Omni-Path Express – is coming later this year, according to the company. Cornelis Networks formed last September as a spinout of Intel's Omni-Path division. Read more…

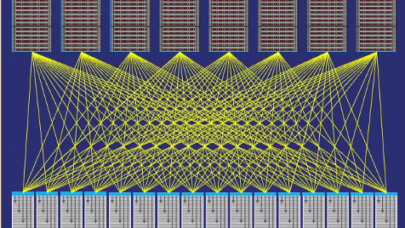

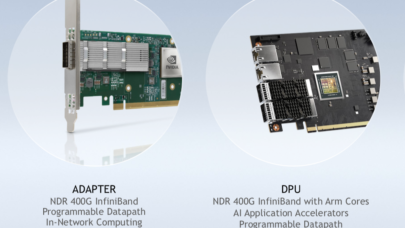

Nvidia (Mellanox) Debuts NDR 400 Gigabit InfiniBand at SC20

November 16, 2020

Nvidia today introduced its Mellanox NDR 400 gigabit-per-second InfiniBand family of interconnect products, which are expected to be available in Q2 of 2021. Th Read more…

AmLight ExP Activates New, High-Speed Research Links Spanning Three Continents

March 5, 2020

Research institutions are constantly announcing new, more powerful systems and data sources – but for researchers who aren’t located near those tools, stron Read more…

CXL Consortium Launches CPU-to-Anything High Speed Interconnect Protocol

March 14, 2019

Another front has been opened in the long campaign to enable any-to-any connectivity in high performance datacenter computing. The Compute Express Link (CXL) consortium – with tech heavies Intel, Google, HPE, Dell EMC, Microsoft, Facebook, Cisco, Huawei and Alibaba – has ratified version 1.0 of the CXL specification... Read more…

Data Vortex Users Contemplate the Future of Supercomputing

October 19, 2017

Last month (Sept. 11-12), HPC networking company Data Vortex held its inaugural users group at Pacific Northwest National Laboratory (PNNL) bringing together ab Read more…

Intersect360 Survey Shows Continued InfiniBand Dominance

July 4, 2017

There were few surprises in Intersect360 Research’s just released report on interconnect use in HPC. InfiniBand and Ethernet remain the dominant protocols acr Read more…


Whitepaper

Transforming Industrial and Automotive Manufacturing

Expansion in digital infrastructure capacity is inevitable. At the same time, awareness of climate change is rising, making sustainability a mandatory part of how organizations operate. As computing workloads such as AI and HPC continue to surge, so does energy consumption, with environmental consequences. IT departments have a crucial role in meeting this challenge. They can significantly advance sustainable practices by influencing the adoption of newer technologies and processes that help mitigate the effects of climate change.

While buying more sustainable IT solutions is an option, partnering with IT solutions providers, such as Lenovo and Intel, who are committed to sustainability and to helping customers execute sustainability strategies is likely to be more impactful.

Learn how Lenovo and Intel, through their partnership, are strongly positioned to address this need with their innovations driving energy efficiency and environmental stewardship.


Sponsored by Lenovo

Whitepaper

How Direct Liquid Cooling Improves Data Center Energy Efficiency

Data centers are experiencing increasing power consumption, space constraints and cooling demands due to the unprecedented computing power required by today’s chips and servers. HVAC cooling systems consume approximately 40% of a data center’s electricity. These systems traditionally use air conditioning, air handling and fans to cool the data center facility and IT equipment, resulting in high energy consumption and high carbon emissions. Data centers are moving to direct liquid cooled (DLC) systems to improve cooling efficiency, thus lowering their PUE, operating expenses (OPEX) and carbon footprint.

This paper describes how CoolIT Systems (CoolIT) meets the need for improved energy efficiency in data centers and includes case studies that show how CoolIT’s DLC solutions improve energy efficiency, increase rack density, lower OPEX, and enable sustainability programs. CoolIT is the global market and innovation leader in scalable DLC solutions for the world’s most demanding computing environments. CoolIT’s end-to-end solutions meet the rising demand in cooling and the rising demand for energy efficiency.


Sponsored by CoolIT


© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication
