ESnet6 Launches, Heralding a New Era in Scientific Networking

October 11, 2022

The launch of ESnet6 was announced at an event at Berkeley Lab this morning. ESnet – short for “energy sciences network” – is managed by Berkeley Lab, f Read more…

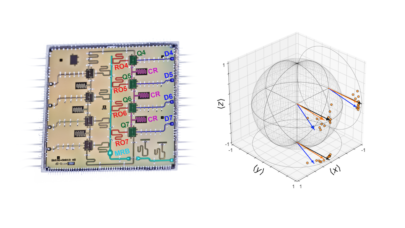

LBNL Reports Crucial Leap in Error Mitigation for Quantum Computers

December 9, 2021

Researchers at Lawrence Berkeley National Laboratory’s Advanced Quantum Testbed (AQT) demonstrated that an experimental method known as randomized compiling (RC) can dramatically reduce error rates in quantum algorithms and lead to more accurate and... Read more…

Fireside Chat with LBNL’s Advanced Quantum Testbed Director

October 26, 2021

Last week, Irfan Siddiqi led a “fireside chat” with a few media and analysts to introduce the Department of Energy’s relatively new Advanced Quantum Testb Read more…

Supercomputers Illuminate Supernova Formation

March 6, 2021

Supernovae are perhaps the galaxy’s best fireworks shows, with dying stars’ death rattles coming in the form of unimaginably large explosions. Astrophysicis Read more…

What’s New in HPC Research: Astra, Cabernet, the Cloud Performance Gap & More

December 8, 2020

In this regular feature, HPCwire highlights newly published research in the high-performance computing community and related domains. From parallel programming Read more…

Lawrence Livermore Announces Mammoth Cluster to Fight COVID-19

November 4, 2020

Over the last nine months, innumerable HPC systems around the world have turned their computing power toward the coronavirus – however, relatively few have be Read more…

NERSC-9 Clues Found in NERSC 2017 Annual Report

October 8, 2018

If you’re eager to find out who’ll supply NERSC’s next-gen supercomputer, codenamed NERSC-9, here’s a project update to tide you over until the winning bid and system details are revealed. The upcoming system is referenced several times in the recently published 2017 NERSC annual report. Read more…

LBNL-led Effort Receives $3M to Advance Quantum Computing

September 27, 2017

Two teams led by Lawrence Berkeley National Laboratory researchers have received $3 million from the Department of Energy to advance quantum computing software Read more…

Whitepaper

Transforming Industrial and Automotive Manufacturing

Expansion in digital infrastructure capacity is inevitable. At the same time, awareness of climate change is rising, making sustainability a mandatory part of how organizations operate. As computing workloads such as AI and HPC continue to surge, so does energy consumption, with environmental consequences. IT departments have a crucial role in meeting this challenge: they can drive sustainable practices by influencing the adoption of newer technologies and processes that help mitigate the effects of climate change.

While buying more sustainable IT solutions is an option, partnering with IT solutions providers such as Lenovo and Intel, who are committed to sustainability and to helping customers execute sustainability strategies, is likely to be more impactful.

Learn how Lenovo and Intel, through their partnership, are strongly positioned to address this need with their innovations driving energy efficiency and environmental stewardship.

Sponsored by Lenovo

Whitepaper

How Direct Liquid Cooling Improves Data Center Energy Efficiency

Data centers are experiencing increasing power consumption, space constraints and cooling demands due to the unprecedented computing power required by today’s chips and servers. HVAC cooling systems consume approximately 40% of a data center’s electricity. These systems traditionally use air conditioning, air handling and fans to cool the data center facility and IT equipment, resulting in high energy consumption and high carbon emissions. Data centers are moving to direct liquid cooled (DLC) systems to improve cooling efficiency, thus lowering their power usage effectiveness (PUE), operating expenses (OPEX) and carbon footprint.

This paper describes how CoolIT Systems (CoolIT) meets the need for improved energy efficiency in data centers and includes case studies that show how CoolIT’s DLC solutions improve energy efficiency, increase rack density, lower OPEX, and enable sustainability programs. CoolIT is the global market and innovation leader in scalable DLC solutions for the world’s most demanding computing environments. CoolIT’s end-to-end solutions meet the rising demand in cooling and the rising demand for energy efficiency.

Sponsored by CoolIT

© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication

HPCwire is a registered trademark of Tabor Communications, Inc. Use of this site is governed by our Terms of Use and Privacy Policy.

Reproduction in whole or in part in any form or medium without express written permission of Tabor Communications, Inc. is prohibited.