ARM Opens Door to Make Custom Chips for HPC, AI

October 19, 2023

It is safe to say that ARM isn't a scrappy startup that was once the pride of the UK. The US-based IPO made the chip designer a big-game chip player, and the Read more…

EU Grabs Arm for First Exaflops Supercomputer, x86 Misses Out

October 4, 2023

The configuration of Europe's first exascale supercomputer, Jupiter, has been finalized, and it is a win for Nvidia and a disappointment for x86 chip vendors In Read more…

Nvidia Delivering New Options for MLPerf and HPC Performance

September 28, 2023

As HPCwire reported recently, the latest MLPerf benchmarks are out. Not surprisingly, Nvidia was the leader across many categories. The HGX H100 GPU systems, which contain eight H100 GPUs, delivered the highest throughput on every MLPerf inference test in this round. Read more…

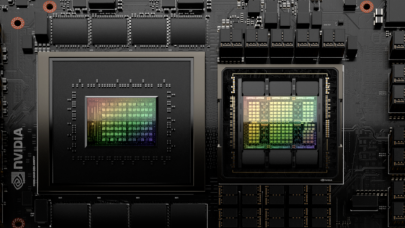

Nvidia Adds Faster HBM3e Memory to the GH200 Grace Hopper Platform

August 9, 2023

Nvidia Grace-Hopper offers a tightly integrated CPU + GPU solution for what is becoming a generative AI dominated market. To increase performance, the graphics Read more…

Arm Aims to Be at the Center of Increasingly Diverse Datacenter

November 5, 2022

Arm has been riding high on the mobile market for decades, but it has struggled to make its mark on servers. The company hopes to reverse that with new initiatives that Arm executives addressed at last week's Arm DevSummit. The top initiative revolves around providing programming and design tools so its chip... Read more…

AWS Arm-based Graviton3 Instances Now in Preview

December 1, 2021

Three years after unveiling the first generation of its AWS Graviton chip-powered instances in 2018, Amazon Web Services announced that the third generation of the processors – the AWS Graviton3 – will power all-new Amazon Elastic Compute Cloud (EC2) C7g instances that are now available in preview. Debuting at the AWS re:Invent 2021... Read more…

Hot Chips: Here Come the DPUs and IPUs from Arm, Nvidia and Intel

August 25, 2021

The emergence of data processing units (DPUs) and infrastructure processing units (IPUs) as potentially important pieces in cloud and datacenter architectures was Read more…

Arm Details Neoverse V1, N2 Platforms with New Mesh Interconnect, Advances Partner Ecosystem

April 27, 2021

Chip designer Arm Holdings is sharing details about its Neoverse V1 and N2 cores, introducing its new CMN-700 interconnect, and showcasing its partners' plans t Read more…

Whitepaper

Transforming Industrial and Automotive Manufacturing

Expansion of digital infrastructure capacity is inevitable. At the same time, awareness of climate change is rising, making sustainability a mandatory part of how organizations operate. As computing workloads such as AI and HPC continue to surge, so does energy consumption, adding to the environmental burden. IT departments have a crucial role in meeting this challenge: they can drive sustainable practices by influencing the adoption of newer technologies and processes that help mitigate the effects of climate change.

While buying more sustainable IT solutions is an option, partnering with IT solutions providers, such as Lenovo and Intel, that are committed to sustainability and to helping customers execute sustainability strategies is likely to be more impactful.

Learn how Lenovo and Intel, through their partnership, are strongly positioned to address this need with their innovations driving energy efficiency and environmental stewardship.

Sponsored by Lenovo

Whitepaper

How Direct Liquid Cooling Improves Data Center Energy Efficiency

Data centers are experiencing increasing power consumption, space constraints, and cooling demands due to the unprecedented computing power required by today’s chips and servers. HVAC cooling systems consume approximately 40% of a data center’s electricity. These systems traditionally use air conditioning, air handling, and fans to cool the data center facility and IT equipment, resulting in high energy consumption and high carbon emissions. Data centers are moving to direct liquid cooled (DLC) systems to improve cooling efficiency, thus lowering their PUE, operating expenses (OPEX), and carbon footprint.

This paper describes how CoolIT Systems (CoolIT) meets the need for improved energy efficiency in data centers and includes case studies that show how CoolIT’s DLC solutions improve energy efficiency, increase rack density, lower OPEX, and enable sustainability programs. CoolIT is the global market and innovation leader in scalable DLC solutions for the world’s most demanding computing environments. CoolIT’s end-to-end solutions meet the rising demand in cooling and the rising demand for energy efficiency.

Sponsored by CoolIT

© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication

HPCwire is a registered trademark of Tabor Communications, Inc. Use of this site is governed by our Terms of Use and Privacy Policy.

Reproduction in whole or in part in any form or medium without express written permission of Tabor Communications, Inc. is prohibited.