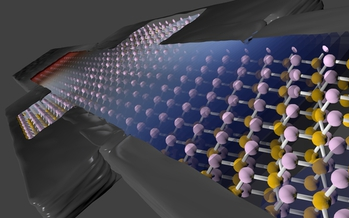

MIT Makes a Big Breakthrough in Nonsilicon Transistors

December 10, 2020

What if Silicon Valley moved beyond silicon? In the 1980s, Seymour Cray was asking the same question, delivering at Supercomputing 1988 a talk titled “What’s All This About Gallium Arsenide?” The supercomputing legend intended to make gallium arsenide (GaAs) the material of the future... Read more…

How the Gordon Bell Prize Winners Used Summit to Illuminate Transistors

November 22, 2019

At SC19, the Association for Computing Machinery (ACM) awarded the prestigious Gordon Bell Prize to the Swiss Federal Institute of Technology (ETH) Zurich. The Read more…

Researchers Shrink Transistor Gate to One Nanometer

October 13, 2016

A team of US scientists may have just breathed new life into a faltering Moore’s law and advanced the limits of microelectronic miniaturization with the fabrication of a transistor with a 1nm gate. The breakthrough portends a path beyond silicon-based transistors, which have been widely predicted to hit a wall at 5 nanometers. Read more…

Intel’s Fryman: “It’s not that we love CMOS; it’s the only real choice.”

September 1, 2016

Forget for a moment the prevailing high anxiety over the fate of Moore’s Law. In the near term – which could easily mean a decade – CMOS will remain the only viable, volume technology driving computing. Pursue alternatives? Of course, urged Josh Fryman, principal engineer and engineering manager, Intel. Read more…

Transistors Won’t Shrink Beyond 2021, Says Final ITRS Report

July 28, 2016

The final International Technology Roadmap for Semiconductors (ITRS) is now out. The highly detailed multi-part report, collaboratively published by a group of international semiconductor experts, offers guidance on the technological challenges and opportunities for the semiconductor industry through 2030. One of the major takeaways is the insistence that Moore’s law will continue for some time even though traditional transistor scaling (through smaller feature sizes) is expected to hit an economic wall in 2021. Read more…

Pushing Back the Limits of Microminiaturization

March 4, 2015

Over the last half a century, computers have transformed nearly every facet of society. The information age and its continuing evolution can be traced to the i Read more…

Stanford Group Creates Four-Layer Stacked Chip

December 18, 2014

With transistor scaling slated to come up against some fundamental limits over the next five to seven years, chip designers are hot on the trail of technologies Read more…

Will Magnets Be the Cure for What Ails Moore’s Law?

October 1, 2014

With silicon-based processors facing some inexorable limits, scientists are looking elsewhere to keep computing on its exponential growth track. One potential a Read more…




© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication

HPCwire is a registered trademark of Tabor Communications, Inc. Use of this site is governed by our Terms of Use and Privacy Policy.

Reproduction in whole or in part in any form or medium without express written permission of Tabor Communications, Inc. is prohibited.