According to the Bible, the universe was created in about a week. Astrophysicists are currently building a virtual universe that will be completed in about four months, using 2048 processors of the MareNostrum supercomputer. Hosted by the Barcelona Supercomputing Center, this 10,240-processor IBM machine is able to perform more than 94 trillion operations per second. This unique facility, the largest in Europe and ironically located inside an old chapel, is the perfect place to compute the formation and evolution of a virtual replica of our own universe.

The latest generation of astronomical instruments has given astronomers a clear view of the universe in its infancy, based on the so-called “cosmic microwave background,” as well as very detailed knowledge of the universe as it is today, in its mature state. To fill the gap in between, and to prepare for the next generation of astronomical instruments, astrophysicists from all over Europe have gathered in Barcelona to run a single application that can compute the evolution of large-scale structures in the universe.

The MareNostrum galaxy formation project is a multidisciplinary collaboration between astrophysicists from France, Germany, Spain, Israel and the USA, together with computer experts from IDRIS (Institut du Développement et des Ressources en Informatique Scientifique) and BSC (Barcelona Supercomputing Center). The application solves a very complex set of mathematical equations by translating them into sophisticated computational algorithms. These algorithms are based on state-of-the-art adaptive mesh refinement techniques and advanced programming technologies, so that the same application can run efficiently on several thousand processors in parallel.

The simulation is now computing the evolution of a patch of our universe (a cubic box 150 million light years on a side) with unprecedented accuracy. It requires roughly 10 billion computational elements to describe the different kinds of matter that are believed to compose each individual galaxy of our universe: dark matter, gas and stars. Handling them takes the combined power of 2048 PowerPC 970 MP processors and up to 3.2 TB of RAM. Unlike other large computational problems, in which the work can be split into independent tasks, the physical processes involved here are non-local, so all 2048 processors have to exchange large amounts of data very frequently. To support this type of processing, the application takes advantage of the high-bandwidth, low-latency Myrinet interconnect installed on the MareNostrum computer. A personal computer, provided it had enough memory to store all the data, would need around 114 years to do the same task.
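The figures quoted above can be put in per-processor terms with some simple arithmetic. The short Python sketch below is only a back-of-the-envelope illustration: it assumes decimal terabytes, an even split of elements and memory across the processors, and a 365.25-day year, none of which the project states explicitly.

```python
# Back-of-the-envelope figures derived from the numbers quoted in the article.
PROCESSORS = 2048
ELEMENTS = 10e9                 # "roughly 10 billion computational elements"
RAM_BYTES = 3.2e12              # "up to 3.2 TB", assuming decimal terabytes
PC_YEARS = 114                  # quoted run time on a single personal computer
HOURS_PER_YEAR = 365.25 * 24    # assumption: average year length


def per_processor(total, processors=PROCESSORS):
    """Share of a total quantity if it were split evenly (an assumption)."""
    return total / processors


elements_each = per_processor(ELEMENTS)
ram_each_gb = per_processor(RAM_BYTES) / 1e9
pc_hours = PC_YEARS * HOURS_PER_YEAR

print(f"{elements_each / 1e6:.1f} million elements per processor")
print(f"{ram_each_gb:.2f} GB of RAM per processor")
print(f"{pc_hours:,.0f} single-processor hours in {PC_YEARS} years")
```

Under these assumptions each processor handles close to five million elements with about 1.5 GB of memory, and 114 years of single-machine time corresponds to roughly one million processor-hours.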

In about four months, using almost one million CPU hours, several billion years of the history of the universe will be simulated. The simulation makes intensive use of the I/O sub-system. To allow such a huge simulation to run smoothly during weeks of computation and to get optimal performance from the system, application tuning was required. The simulation package provides a restart mechanism that allows the computation to be recovered and resumed, so the application can deal with hardware failures without having to restart from the beginning. This mechanism requires around 30 TB of data to be written to save the application state. To minimize contention on the Global File System, an optimized directory structure was proposed, supporting a sustained “parallel write” performance of 1.6 Gbps. Other design aspects were taken into account to improve the massive “parallel read and broadcast” over the Myrinet network that reads the initial condition data and dispatches it to all the processors.
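The general idea behind a restart mechanism of this kind can be sketched in a few lines of Python. This is a toy illustration, not the project's code: the function names, the pickle file format, and the `fail_at` parameter used to mimic a node failure are all hypothetical, and a real run would write its state in parallel across many files.

```python
import os
import pickle
import tempfile


def save_checkpoint(state, path):
    """Write the state to a temporary file, then rename it into place."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "wb") as handle:
        pickle.dump(state, handle)
    os.replace(tmp_path, path)  # a crash mid-write cannot corrupt the old file


def load_checkpoint(path):
    """Return the saved state, or None if no checkpoint exists yet."""
    if not os.path.exists(path):
        return None
    with open(path, "rb") as handle:
        return pickle.load(handle)


def run(total_steps, checkpoint_every, path, fail_at=None):
    """Advance a toy state; optionally raise at one step to mimic a failure."""
    state = load_checkpoint(path) or {"step": 0, "value": 0.0}
    while state["step"] < total_steps:
        if fail_at is not None and state["step"] == fail_at:
            raise RuntimeError("simulated hardware failure")
        state["value"] += 1.0  # stand-in for one simulation time step
        state["step"] += 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(state, path)
    return state


def demo():
    """Fail partway through, then resume from the last checkpoint."""
    with tempfile.TemporaryDirectory() as workdir:
        path = os.path.join(workdir, "state.pkl")
        try:
            run(10, 5, path, fail_at=7)
        except RuntimeError:
            pass  # the job dies; the checkpoint written at step 5 survives
        return run(10, 5, path)
```

In `demo()`, the first run fails at step 7 and the second run picks up from the checkpoint written at step 5, so only two steps are recomputed rather than all seven.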

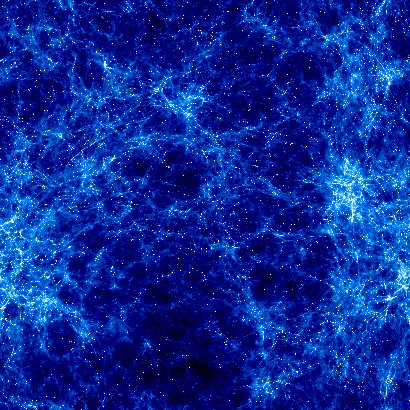

As in computer-simulated movies, a large number of snapshots are stored in sequence in order to provide realistic animation. The total amount of scientific data generated will exceed 40 TB. This unique database will constitute a virtual universe that astrophysicists will explore in order to create mock observations and to shed light on the many different processes that gave birth to the galaxies, in particular our own Milky Way.

The first week of computation was performed last September, during which 34 snapshots were generated, producing more than 3 TB of data. During the entire week only two hours were lost because of a hardware failure. It was caused by the failure of a single compute node among the 2100 processors reserved for the run.
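These first-week numbers translate into an average snapshot size and an availability figure. The Python sketch below is a rough estimate only: it treats "more than 3 TB" as exactly 3 decimal terabytes, so the snapshot size is a lower bound, and it assumes the week means a full 168 hours of scheduled run time.

```python
# Rough estimates from the first-week figures quoted in the article.
SNAPSHOTS = 34
DATA_TB = 3.0            # "more than 3 TB", so this is a lower bound
HOURS_LOST = 2
HOURS_IN_WEEK = 7 * 24   # assumption: the run was scheduled around the clock

gb_per_snapshot = DATA_TB * 1000 / SNAPSHOTS
uptime_fraction = 1 - HOURS_LOST / HOURS_IN_WEEK

print(f"at least {gb_per_snapshot:.0f} GB per snapshot on average")
print(f"{uptime_fraction:.1%} of the week spent computing")
```

On those assumptions, each snapshot averages at least 88 GB and the run was productive for nearly 99 percent of the week.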

The MareNostrum virtual universe was evolved up to the age of 1.5 billion years. Astrophysicists believe that this is precisely the era in which the first Milky Way-like galaxies formed. Researchers have detected roughly 50 such large objects in the simulated universe, along with more than 100,000 smaller galaxies. They are currently analyzing their physical properties in the virtual catalogue, as well as preparing for the next rounds of computations that will be needed to complete the history of the virtual universe.

One of the most important issues in the numerical modelling of complex physical phenomena is the accuracy of the results. Unfortunately, the researchers cannot compare the results of the numerical simulations with laboratory experiments, as is possible in other areas of computational fluid dynamics. Instead, the reliability of the simulations can be assessed by comparing results from different numerical codes starting from the same initial conditions. To this end, the researchers are also simulating the MareNostrum Universe with a totally different numerical approach, using more than two billion particles to represent the different fluid components. This simulation is part of a long-term project named The MareNostrum Numerical Cosmology Project (MNCP). Its aim is to use the capabilities of the MareNostrum supercomputer to perform simulations of the universe with unprecedented resolution.
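The principle of cross-checking two independent methods can be illustrated on a much smaller problem. The Python sketch below is a toy example and has nothing to do with the actual cosmology codes: it integrates a simple oscillator with two different schemes (explicit Euler and leapfrog, both chosen here purely for illustration) from the same initial conditions and measures how closely they agree as the resolution increases.

```python
def euler(x, v, dt, steps):
    """Explicit Euler integration of x'' = -x."""
    for _ in range(steps):
        x, v = x + dt * v, v - dt * x
    return x, v


def leapfrog(x, v, dt, steps):
    """Kick-drift-kick leapfrog integration of x'' = -x."""
    for _ in range(steps):
        v_half = v - 0.5 * dt * x
        x = x + dt * v_half
        v = v_half - 0.5 * dt * x
    return x, v


def relative_difference(a, b):
    """Symmetric relative difference between two results."""
    return abs(a - b) / max(abs(a), abs(b))


# Same initial conditions for both methods, integrated to time t = 1.
x0, v0 = 1.0, 0.0
differences = []
for steps in (100, 1000, 10000):
    dt = 1.0 / steps
    x_euler, _ = euler(x0, v0, dt, steps)
    x_leapfrog, _ = leapfrog(x0, v0, dt, steps)
    differences.append(relative_difference(x_euler, x_leapfrog))
    print(steps, differences[-1])
```

When two unrelated schemes converge toward the same answer as the step size shrinks, confidence in both grows even without an external reference, which is the reasoning behind running the same universe with two different codes.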

-----

Source: Barcelona Supercomputing Center, http://www.bsc.es/