Nvidia Aims Clara Healthcare at Drug Discovery, Imaging via DGX

April 12, 2021

Nvidia Corp. continues to expand its Clara healthcare platform with the addition of computational drug discovery and medical imaging tools based on its DGX A100 platform, related InfiniBand networking and its AGX developer kit. The Clara partnerships announced during...

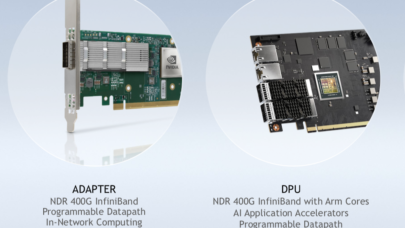

Nvidia (Mellanox) Debuts NDR 400 Gigabit InfiniBand at SC20

November 16, 2020

Nvidia today introduced its Mellanox NDR 400 gigabit-per-second InfiniBand family of interconnect products, which are expected to be available in Q2 of 2021. Th…

Nvidia Goes Deep with Mellanox Datacenter Net Security

June 22, 2020

When Nvidia announced its acquisition of Mellanox, the GPU leader noted that datacenters would eventually be built like high performance computers. Hence, it’…

China Green-lights Nvidia Acquisition of Mellanox

April 17, 2020

With Chinese regulators issuing their approval, the final piece has fallen into place for Nvidia’s $6.9 billion acquisition of high performance interconnect provider Mellanox, announced in March 2019. The deal has already gone through the regulatory process in the U.S. and the European Union, with unconditional approvals...

2020 Stanford Conference – virtual experience

April 10, 2020

Gather remotely with fellow experts for two days of insightful talks on the cutting-edge domains and disciplines propelling the innovative research, tools and…

Nvidia-Mellanox $6.8B Deal Wins EU Clearance

December 20, 2019

U.S. chipmaker Nvidia has been granted unconditional approval by the European Union to acquire Israeli networking company Mellanox Technologies, clearing a hurdl…

San Diego Supercomputer Center to Welcome ‘Expanse’ Supercomputer in 2020

July 18, 2019

With a $10 million award from the National Science Foundation, San Diego Supercomputer Center (SDSC) at the University of California San Diego is procuri…

Mellanox Partners with Storage Startups

July 10, 2019

As GPU leader Nvidia closes its $6.9 billion acquisition of Mellanox Technologies announced earlier this year, the HPC network interconnect specialist’s venture arm remains active, investing in two storage startups.

Whitepaper

Why IT Must Have an Influential Role in Strategic Decisions About Sustainability

Expansion of digital infrastructure capacity is inevitable. At the same time, awareness of climate change is rising, making sustainability a mandatory part of how organizations operate. As computing workloads such as AI and HPC continue to surge, so does energy consumption, with environmental consequences. IT departments have a crucial role in meeting this challenge: they can drive sustainable practices by influencing the adoption of newer technologies and processes that help mitigate the effects of climate change.

While buying more sustainable IT solutions is an option, partnering with IT solutions providers, such as Lenovo and Intel, who are committed to sustainability and to helping customers execute sustainability strategies is likely to be more impactful.

Learn how Lenovo and Intel, through their partnership, are strongly positioned to address this need with their innovations driving energy efficiency and environmental stewardship.

Sponsored by Lenovo

Whitepaper

How Direct Liquid Cooling Improves Data Center Energy Efficiency

Data centers are experiencing increasing power consumption, space constraints and cooling demands due to the unprecedented computing power required by today’s chips and servers. HVAC cooling systems consume approximately 40% of a data center’s electricity. These systems traditionally use air conditioning, air handling and fans to cool the data center facility and IT equipment, resulting in high energy consumption and high carbon emissions. Data centers are moving to direct liquid cooled (DLC) systems to improve cooling efficiency, thus lowering their power usage effectiveness (PUE), operating expenses (OPEX) and carbon footprint.

This paper describes how CoolIT Systems (CoolIT) meets the need for improved energy efficiency in data centers and includes case studies that show how CoolIT’s DLC solutions improve energy efficiency, increase rack density, lower OPEX, and enable sustainability programs. CoolIT is the global market and innovation leader in scalable DLC solutions for the world’s most demanding computing environments. CoolIT’s end-to-end solutions meet the rising demand in cooling and the rising demand for energy efficiency.

Sponsored by CoolIT

© 2024 HPCwire. All Rights Reserved. A Tabor Communications Publication

HPCwire is a registered trademark of Tabor Communications, Inc. Use of this site is governed by our Terms of Use and Privacy Policy.

Reproduction in whole or in part in any form or medium without express written permission of Tabor Communications, Inc. is prohibited.