For the first time in 62 years, the US four-man Olympic bobsled team captured the gold medal, setting a track record in the process. The winning bobsled, piloted by Steve Holcomb and his Night Train crew, had some state-of-the-art engineering behind it, including CFD software from Exa Corporation. As it turned out, that software may have provided the winning margin in the race.

While bobsledding doesn’t enjoy the same level of popularity (or the money that comes with it) as NASCAR and Formula 1 racing, the engineers who design the sleds need to apply the same aerodynamic principles to their designs. Prior to this season, US bobsled teams relied almost exclusively on European-built hardware, all of which was designed using traditional wind tunnel testing. Exa thought its CFD software could give the US teams a critical edge by offering the advantages of HPC simulations. Using the computational horsepower of a mid-sized cluster, Exa's software would be able to simulate the airflow around the sled and its crew and the drag that results.

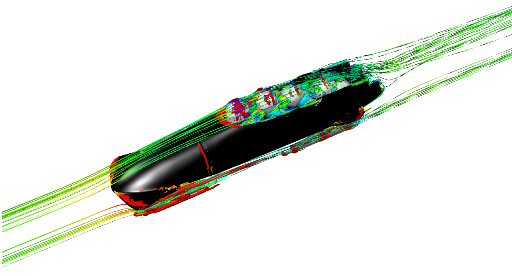

According to Brad Duncan, Exa’s director of aerodynamics applications, it’s much easier to optimize the sled in the computer because, unlike in the wind tunnel, you can actually see and quantify the airflow. No matter how skilled the European engineers are, they can’t access that level of detail in the tunnel. “It’s not that we’re smarter than they are,” says Duncan. “We just have more data when it comes to interpreting the results and designing the sled.”

In 2008, the non-profit Bo-Dyn Bobsled Project, founded by former NASCAR driver Geoff Bodine, tapped Exa to help it design and build home-grown bobsleds for the US team. Exa contributed both software and engineering services to the effort, becoming an unofficial sponsor. Using a 19-teraflop IBM cluster (x3550 and x3450 blades) loaded with Exa’s CFD software, Bo-Dyn was able to optimize the aerodynamics of the sled and minimize the drag. Around 30 simulations were performed over a couple of months, iteratively refining the design.

Through the CFD simulations, they were able to improve sled aerodynamics by around 2 percent. According to Duncan, every 1 percent reduction in drag translates into roughly 0.1 seconds shaved off a typical run. So the Bo-Dyn engineers were ecstatic to get 2 percent, because that could lead to a time 0.2 seconds faster per run, and potentially 0.8 seconds faster over the four runs that make up the Olympic event.
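The arithmetic behind that estimate is simple enough to write down. The short Python sketch below just restates Duncan's rule of thumb; it assumes the relationship is linear and that the same saving applies to every run. The function and constant names are made up for illustration.

```python
# Rule of thumb quoted by Duncan: each 1 percent of drag reduction is worth
# roughly 0.1 seconds on a typical run. Treating it as linear and identical
# on every run is an assumption made here for illustration.
SECONDS_PER_PERCENT_DRAG = 0.1
RUNS_PER_EVENT = 4  # the Olympic four-man event is decided over four runs


def time_saved(drag_reduction_percent, runs=RUNS_PER_EVENT):
    """Return (seconds saved per run, seconds saved over all runs)."""
    per_run = drag_reduction_percent * SECONDS_PER_PERCENT_DRAG
    return per_run, per_run * runs


per_run, total = time_saved(2.0)
print(f"{per_run:.1f} s per run, {total:.1f} s over {RUNS_PER_EVENT} runs")
```

With the 2 percent improvement reported for the Bo-Dyn sled, this gives the 0.2 seconds per run and 0.8 seconds per event cited above.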

Eight-tenths of a second might not seem like much of an edge in a competition that runs for nearly three and a half minutes of track time. But in Vancouver, Night Train’s cumulative margin over the second-place finisher (Germany) was a mere 0.38 seconds, and just 0.39 seconds over the third-place finisher (Canada). The US team set a track record during its second run. Night Train’s Olympic success follows a first-place finish at the 2009 World Championships in Lake Placid, New York, which was the first time the US had captured that title in 50 years.

Bringing HPC-style NASCAR engineering to bobsledding is a natural extension of Exa’s mainstream CFD/CAE business. The software maker’s principal customers are ground transport companies: manufacturers of automobiles, race cars, tractors, trains and the like. It has accounts with most major automakers, including BMW, Ford, Renault and Chrysler, which use Exa’s flagship PowerFLOW fluid dynamics software to optimize the aerodynamic, aeroacoustic and thermal properties of vehicles as well as individual components.

The strength of the PowerFLOW software, according to Duncan, is that it supports fully transient flow rather than just a steady-state solution. So instead of an approximation, PowerFLOW delivers a high-fidelity, time-accurate simulation. The better accuracy is the result of employing Lattice Boltzmann methods (LBM) rather than solving the more traditional Navier-Stokes equations. At this point, PowerFLOW is the only commercially available CFD solver based on LBM technology. In line with that high-end approach, Exa also provides application-specific engineering support to help customers solve particular design problems, everything from brake cooling to incorporating aerodynamics into styling.
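For readers unfamiliar with the approach, the sketch below shows the basic collide-and-stream loop of a textbook lattice Boltzmann scheme (the D2Q9 lattice with a single-relaxation-time BGK collision) in plain Python. It is not Exa's implementation and says nothing about how PowerFLOW works internally. The grid size, relaxation time `tau`, periodic boundaries and initial density bump are all arbitrary choices for illustration. The fluid is represented by particle populations on a grid, and each pass through `step` advances the whole flow field by one time step, which is why the method is inherently transient.

```python
# Textbook D2Q9 lattice Boltzmann sketch (BGK collision, periodic boundaries).
# Illustrative only: NOT PowerFLOW, and all parameters below are assumptions.

# Nine discrete velocities per cell and their standard D2Q9 weights.
VELOCITIES = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1),
              (1, 1), (-1, 1), (-1, -1), (1, -1)]
WEIGHTS = [4 / 9] + [1 / 9] * 4 + [1 / 36] * 4


def equilibrium(rho, ux, uy):
    """Equilibrium populations for density rho and velocity (ux, uy)."""
    usq = ux * ux + uy * uy
    feq = []
    for (cx, cy), w in zip(VELOCITIES, WEIGHTS):
        cu = cx * ux + cy * uy
        feq.append(w * rho * (1 + 3 * cu + 4.5 * cu * cu - 1.5 * usq))
    return feq


def step(f, nx, ny, tau):
    """Advance one time step: relax toward equilibrium, then stream."""
    new = [[[0.0] * 9 for _ in range(ny)] for _ in range(nx)]
    for x in range(nx):
        for y in range(ny):
            cell = f[x][y]
            rho = sum(cell)
            ux = sum(fi * c[0] for fi, c in zip(cell, VELOCITIES)) / rho
            uy = sum(fi * c[1] for fi, c in zip(cell, VELOCITIES)) / rho
            feq = equilibrium(rho, ux, uy)
            for i, (cx, cy) in enumerate(VELOCITIES):
                # BGK collision: relax population i toward its equilibrium.
                post = cell[i] - (cell[i] - feq[i]) / tau
                # Streaming: move it to the neighbouring cell (wraps around).
                new[(x + cx) % nx][(y + cy) % ny][i] = post
    return new


def total_mass(f):
    """Sum of all populations over the whole grid."""
    return sum(sum(cell) for column in f for cell in column)


nx, ny, tau = 16, 16, 0.8
# Fluid at rest with a small density bump in the middle of the domain.
f = [[equilibrium(1.0, 0.0, 0.0) for _ in range(ny)] for _ in range(nx)]
f[nx // 2][ny // 2] = equilibrium(1.1, 0.0, 0.0)

mass_before = total_mass(f)
for _ in range(20):  # 20 time steps of the transient simulation
    f = step(f, nx, ny, tau)
mass_after = total_mass(f)
print("mass conserved:", abs(mass_after - mass_before) < 1e-9)
```

A production solver adds solid boundaries, turbulence modeling and parallel execution on a cluster, none of which is attempted here. The closing check only confirms that the toy scheme conserves mass as the density bump spreads through the domain.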

The overall strategy has served the company well, especially with its auto manufacturing customer base. In good times, Exa claimed 40 percent year-over-year growth. But the company’s heavy reliance on revenues from automobile manufacturers put a hold on double-digit expansion during the 2009 downturn. As the auto industry and other manufacturers dig out of the recession, Exa is looking to get back on track in 2010.