Since its creation by Facebook in April 2011, the Open Compute Project (OCP) has been rapidly evolving – and that’s good news for the HPC community.

As originally conceived by Facebook, OCP’s charter was to develop open standards for designing and delivering the most efficient server, storage and data center hardware for scalable computing. It’s no surprise that particular emphasis was placed on large data centers focused on huge web workloads. The computational and energy requirements of Facebook’s 334,000 square foot Prineville, Oregon data center were a major motivator for the OCP initiative.

And it’s paid off. At last January’s Open Compute Summit, both Facebook CEO Mark Zuckerberg and Jay Parikh, VP of Engineering, reported that implementing an OCP-compliant infrastructure has helped the company save $1.2 billion over the past three years. Obviously, members of the HPC community can benefit from the savings inherent in this approach.

Hyperscale computing environments were the focus of OCP’s initial efforts, but no one anticipated the initiative’s rapid growth or the remarkable changes its mission has undergone. As of January 2014, the Project had 150 official members.

Along with this growth, the feedback from these new and enthusiastic members has substantially reshaped OCP’s charter.

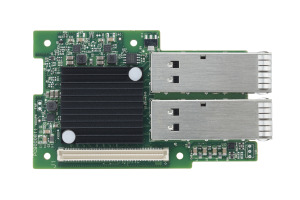

In addition to addressing the needs of hyperscale data centers, the initiative has evolved to include benefits such as energy efficiency, power and cooling breakthroughs, and densities that can be leveraged by the HPC community, especially the research institutions and national labs. Of particular importance is the incorporation of faster interconnects and lowered latency – capabilities much in demand in the HPC world. For example, Mellanox has announced support for 40GbE NICs with RDMA support that meet the OCP specifications.

So, the latest version of the initiative, what insiders call OCP 3.0, is particularly attractive to smaller organizations – whether they are OEMs or end users – that want to embrace HPC without purchasing high-end supercomputers or building elaborate data centers.

By leveraging servers, storage, and HPC infrastructure that comply with OCP specifications, these non-hyperscale customers can save on energy, cooling and total cost of ownership. They can economically add to their HPC mix superfast interconnects like 40GbE and powerful processors like the 22 nm Ivy Bridge and future generations of Intel products. Implementations can range from a single rack to large clusters – in either case, considerable savings can be realized by using hardware and infrastructure built to OCP specifications.

There are now seven official OCP Solution Providers supporting the OCP ecosystem. These include AMAX, Avnet, CTC, Hyve, Penguin Computing, Quanta, and Racklive.

Quanta is a good example of a successful company that has embraced the OCP movement and is adding impetus to the initiative’s evolution. Quanta has contributed its entire line of Open Rack compatible products, which it co-developed with Rackspace. In the process, it is changing the nature of the marketplace. Wikibon senior analyst Stu Miniman, quoted by siliconANGLE, notes that “The technology is already starting to change the balance of power in the server market, where original design manufacturers (ODMs) like Quanta are quickly gaining ground against established suppliers such as Dell and IBM.”

For example, Quanta is offering Rackgo X, a rack solution inspired by the OCP standard that integrates Quanta’s server, storage and top-of-rack switch. The solution is designed for low CAPEX and OPEX, with an emphasis on simple and efficient hardware design and the utmost in high density, serviceability and manageability.

The solution meets the needs of cloud service providers and large enterprise datacenters. But because of its adherence to OCP standards, it is also a welcome addition to the HPC community. The Rackgo X300, for example, provides up to 64 compute nodes with 128 Intel Xeon E5-2600 V2 CPUs, or 1536 cores. HPC users will appreciate that the Rackgo X300 is capable of supporting up to 32TB of DDR3 1600MHz memory and 30 teraflops of processing power per rack. For those seeking even higher server transactional processing performance, Fusion-io recently announced that its Fusion ioScale flash memory products are integrated into Quanta Rackgo X products.
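To put those per-rack figures in per-node terms, here is a rough back-of-the-envelope sketch. It uses only the "up to" numbers quoted above; the variable names are illustrative, and the unit conversions (1 TB = 1024 GB, 1 teraflop = 1000 gigaflops) are assumptions rather than anything Quanta has published.

```python
# Per-rack "up to" figures quoted in the article for the Rackgo X300.
NODES = 64          # compute nodes per rack
CPUS = 128          # Intel Xeon E5-2600 V2 CPUs per rack
CORES = 1536        # total cores per rack
MEMORY_TB = 32      # DDR3 memory per rack, in TB
PEAK_TFLOPS = 30    # quoted processing power per rack

# Derived per-node and per-CPU values (simple division, nothing more).
cpus_per_node = CPUS // NODES
cores_per_cpu = CORES // CPUS
cores_per_node = CORES // NODES

# Assumption: 1 TB = 1024 GB.
memory_gb_per_node = MEMORY_TB * 1024 // NODES

# Assumption: 1 teraflop = 1000 gigaflops.
gflops_per_core = PEAK_TFLOPS * 1000 / CORES

print(f"CPUs per node:       {cpus_per_node}")
print(f"Cores per CPU:       {cores_per_cpu}")
print(f"Cores per node:      {cores_per_node}")
print(f"Memory per node:     {memory_gb_per_node} GB")
print(f"GFLOPS per core:     {gflops_per_core:.2f}")
```

Under these assumptions, the quoted figures work out to dual-socket nodes with 12 cores per CPU and up to 512 GB of memory per node. Actual configurations will vary with the CPU model and memory chosen.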

Quanta is typical of the forward-looking companies supporting the OCP initiative. Their commitment and participation have prompted Frank Frankovsky, chairman and president of the OCP Foundation, to blog, “Looking at all this progress and forward momentum, I can’t help but think that 2014 is our year. New technologies are being developed and contributed; new products and new businesses are being launched; and new OCP technologies are being adopted. We are reinventing the industry together, in the open…”

The OCP initiative is playing a major role in bringing highly scalable and power-efficient hardware to hyperscale computing users such as the big cloud providers and large enterprises. But it’s not just the hyperscale crowd that’s benefitting – the rapidly growing and evolving OCP, which is bringing new capabilities to the HPC community in the form of innovative, affordable solutions, is changing the face of high-end computing.